Earlier today as you are reading this (assuming you are reading it on Tuesday 6th September, which is when it’s scheduled to post on the EE Journal website), I gave my presentation to the MSc students studying Embedded Computing at the Norwegian University of Science and Technology (NTNU) in Trondheim, Norway.

I’m actually penning these words a week in the past from your perspective as a punter because I thought you might be interested to hear what I’m planning on talking about, but I won’t have any free time to do anything apart from work my little cotton socks off while I’m there.

As you may imagine (well, as you might hope), I’ve spent a lot of time pondering this presentation. When I first began my cogitations and ruminations, I commenced with the fact that my talk required an underlying theme to tie everything together, otherwise the audience might think I was just throwing random thoughts around (as if). So, what was to be the topic of this talk?

As a starting point I decided to use the ancient Greek philosopher Heraclitus of Ephesus (535-475 BC) as my muse because, as he so pithily said, “The only constant in life is change,” and I’m planning on discussing how fast things have changed on the technology front in my own life and how much faster they appear set to change in the future.

On the other hand, I certainly don’t want my audience to think that I’m being overly dogmatic, so I also turned to a philosopher more attuned to our own time, Bon Jovi, who sang how, “The more things change the more they stay the same.” If the truth be told, this sentiment was first coined in 1849 by French writer Jean-Baptiste Alphonse Karr, but I thought Bon Jovi might be more relatable to my audience.

By this point, I had the theme for my talk: “The only constant in life is change, but the more things change the more they stay the same.” Although this may not roll off the tongue, I flatter myself that I’ve covered all of the bases.

To kick off my presentation, I thought I’d mention a few of the things that might have changed things for us but didn’t. For example, in addition to coming up with the first known heliocentric model that placed the Sun at the center of the known universe with the Earth revolving around it once a year and rotating around itself once a day, the ancient Greek astronomer and mathematician Aristarchus of Samos (310-230 BC) told everyone who would listen that he suspected the stars were other suns that are very far away. Had anyone paid any attention to him, we might have ended up with a very different perspective on our place and significance in the universe.

Similarly, the ancient Greek philosopher Democritus (460-370 BC) formulated an atomic theory of the universe. To be honest, when I first heard this, my knee-jerk reaction was that it had to be a lucky guess. However, I recently read Infinite Powers by Steven Strogatz. In addition to providing a framework for thinking about calculus, Steven also explains how Democritus used mathematical and philosophical reasoning to deduce that everything was made up from small, invisible particles he called atoms that could not themselves be further divided (I don’t think we should hold the existence of quarks—which have been aptly described as “The dreams that stuff is made of”—against him).

Sad to relate, as I previously discussed in my Dropping the Ball and Casting Aspersions columns, Part 1 and Part Deux, we’ve dropped the technological ball on numerous occasions. Consider the Antikythera Mechanism, for example. Estimated to have been built in the late second century BC, this is now considered to be the first known analog computer. It has around 40 gears, and its largest gear, which is 13 centimeters in diameter, has 223 teeth. Employing a 12-month solar year and a 29.5-day lunar month, this bodacious beauty could be used to predict the motions of all five of the planets known to the ancient Greeks. This technology was subsequently lost for close to 2,000 years.

And what about Hero’s Engine, which was a very simple steam engine? The Greek-Egyptian mathematician and engineer Hero of Alexandria described the device in the 1st century AD. What if he’d gone on to make a full-fledged steam engine? Is this farfetched? Well, Hero also invented the first coin-operated vending machine, a wind-wheel, and a wind-wheel-operated organ. “Ah,” you may say, “but he couldn’t have achieved the necessary precision,” to which I would retort, “What about the Antikythera Mechanism?” Furthermore, in his book The Perfectionists, Simon Winchester notes that the gap between the piston and the cylinder in the first UK steam engines was the width of a coin, and I think the Romans could have matched this. What would the Romans have done if they had steam engines? Pump water? Power galleys? Build steam trains? As you may imagine, I can waffle on about this sort of stuff for ages, and doubtless I will on a future occasion, but for now we have other fish to fry.

To bring things a little closer to home, since these students are studying embedded computing and embedded systems, I’m going to ask them to define what an embedded system actually is. Of course, since I am a tricky little scamp—a “wascally wabbit,” if you will—I’m going to ask them some leading questions in the hope of guiding them to the wrong conclusions. For example, does an embedded system have to be electronic? Does it have to be digital? Does it have to include a computing element like a microcontroller?

At this point, I’m going to ask them to consider a washing machine. Is a washing machine an embedded system? You may call me “old fashioned,” but I don’t think so. I think it’s a washing machine.

Consider a washing machine (Image source: Pixabay.com)

Now consider the electronic control module that you can use to program the temperature of the water, the length of the washing cycle, the ferociousness of the spinning cycle, and so forth. Is this control module an embedded system? I would say so, and I hope the students will agree with me (they will if they know what’s good for them).

“Ah Ha!” I will say, “but now suppose I remove the electronic control module and replace it with a clockwork mechanism that also determines the temperature of the water, the length of the washing cycle, and so forth. Since it is performing much the same tasks as its electronic cousin, does the fact that it’s mechanical make it any less of an embedded system?”

Eventually I will lead them to the concept of centrifugal governors and show them a picture of an awesome example in the form of a centrifugal ball governor on a Boulton and Watt steam engine from 1788. This little beauty performs a closed-loop feedback control function (in modern terms, it’s essentially the proportional part of a proportional-integral-derivative (PID) controller). It’s a dedicated unit that’s embedded in a larger machine. As far as I’m concerned, this is an embedded system, irrespective of the fact that it’s analog and mechanical and hundreds of years old. The main point here is that what we consider to be an embedded system has changed over the years gone by, and it will change again in the years to come (which ties in nicely with “change” being the topic of the talk).
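
For anyone who likes to see this sort of thing in code, here’s a minimal sketch of the proportional part of a feedback loop like the one a ball governor implements mechanically. Everything in it is an assumption made purely for illustration (the function name `simulate_governor`, the gain, and the toy engine model with its made-up constants); none of it is taken from the Boulton and Watt engine.

```python
def simulate_governor(target_rpm=60.0, load=0.3, gain=0.05, steps=200, dt=0.1):
    """Toy proportional governor: the throttle opening tracks the speed error."""
    rpm = 0.0
    history = []
    for _ in range(steps):
        error = target_rpm - rpm
        # Proportional action only; the valve opening is clamped to [0, 1].
        throttle = min(1.0, max(0.0, gain * error))
        # Hypothetical engine model: steam speeds the engine up,
        # while the load and friction slow it down.
        accel = 100.0 * throttle - 50.0 * load - 0.5 * rpm
        rpm = max(0.0, rpm + accel * dt)
        history.append(rpm)
    return history


if __name__ == "__main__":
    light = simulate_governor(load=0.3)
    heavy = simulate_governor(load=0.6)
    print(f"light load settles near {light[-1]:.1f} rpm")
    print(f"heavy load settles near {heavy[-1]:.1f} rpm")
```

In this toy model the engine settles a little below the target speed, and a heavier load makes it settle lower still. That leftover error is characteristic of proportional-only control.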

After waffling on about some of the things that have changed since I first decided to grace this planet with my presence in 1957, we’re going to take a slight detour into the wonderful world of urbex, which is short for “urban exploration.” This involves the investigation of abandoned structures like amusement parks, factories, asylums, sanatoriums, and power plants. One of my favorite examples is an abandoned power plant in Hungary (thanks to André Joosse for allowing me to share this photo; he has many more on his website at www.urbex.nl).

An abandoned power plant in Hungary (Image source: André Joosse)

Why don’t we build things with this sort of style today? Ah, well… this will lead us to talk about the sorts of display technologies that predominated in the 1950s and 1960s. Some of my favorites are Nixie tubes and analog meters (see also Retro-Futuristic-Steampunk Technologies Part 1 and Part 2, respectively). I have a couple of other technologies that are close to my heart and that I’ll be introducing in my talk, but I’ll save those for future columns.

We will, of course, be talking about light emitting diodes (LEDs). As I always say, “Show me a flashing LED, and I’ll show you a man drooling.” In particular, we’ll be talking about LED-based 7-segment displays, the fact that the first patent for a 7-segment display (using small incandescent light bulbs) was applied for in 1903, and also the fact that George Lafayette Mason filed a patent for a 21-segment display in 1898 (see Recreating Retro-Futuristic 21-Segment Victorian Displays).
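
As a quick illustration of how little it takes to drive a 7-segment display, here’s a small sketch that maps each decimal digit to the segments it lights. It assumes the conventional a-to-g segment labeling; the names `SEGMENTS` and `render` are my own inventions for this example.

```python
# Conventional segment labels: a (top), b (upper right), c (lower right),
# d (bottom), e (lower left), f (upper left), g (middle).
SEGMENTS = {
    "0": "abcdef",
    "1": "bc",
    "2": "abdeg",
    "3": "abcdg",
    "4": "bcfg",
    "5": "acdfg",
    "6": "acdefg",
    "7": "abc",
    "8": "abcdefg",
    "9": "abcdfg",
}


def render(digit):
    """Return a three-line ASCII picture of one digit."""
    lit = SEGMENTS[digit]

    def on(segment, char):
        # Draw the character only if this segment is lit.
        return char if segment in lit else " "

    return "\n".join([
        " " + on("a", "_") + " ",
        on("f", "|") + on("g", "_") + on("b", "|"),
        on("e", "|") + on("d", "_") + on("c", "|"),
    ])


if __name__ == "__main__":
    for d in "0123456789":
        print(render(d))
        print()
```

In real hardware, each letter would correspond to one LED (or, back in 1903, one small incandescent bulb), so the table above is essentially the whole “font.”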

Just to keep everyone on their toes, I’m also going to digress into the topic of synesthesia, which is a perceptual phenomenon in which stimulation of one sensory or cognitive pathway leads to involuntary experiences in a second sensory or cognitive pathway. In one common form of synesthesia, known as grapheme-color synesthesia or color-graphemic synesthesia, letters, words, or numbers are perceived as being inherently colored. In the following image, for example, what most of us would see as black text could be perceived in color by a synesthete.

Examples of grapheme-color synesthesia (Image source: Max Maxfield)

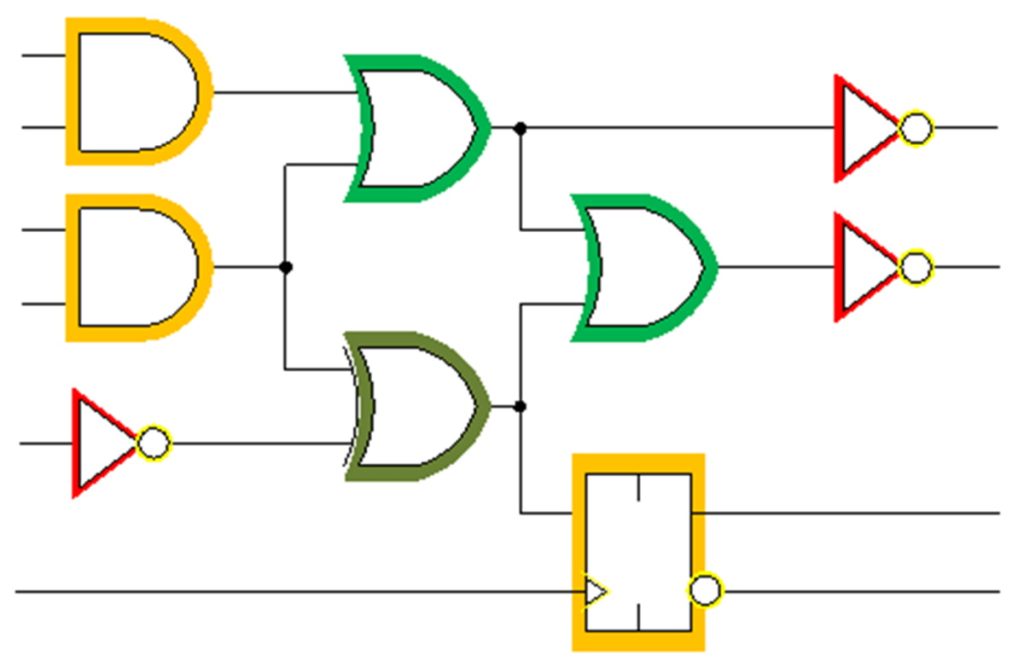

As I wrote in the Seeing Sounds and Tasting Colors topic in my paper on The Evolution of Color Vision, when I first ran across the concept of synaesthesia, I wondered (since I’m a digital logic designer by trade) if there were any synaesthetic digital logic designers who would associate different colors with schematic symbols. After posing this question in some blogs, I eventually heard back from an engineer called Jordan A. Mills, who says that he does indeed perceive different colors when looking at gate-level schematic diagrams. Jordan was kind enough to take a black-and-white schematic I created and to modify it to reflect the way in which he perceives it, as shown below.

The way one synaesthetic engineer sees black-and-white digital logic schematics (Image source: Max Maxfield and Jordan A. Mills)

Now, you may think I’ve wandered off into the weeds. From my point of view, however, I remember being a student and sitting through some gruesomely boring lectures. What I want to do is leave my audience thinking “Wow, that was interesting!” Dare I say that I also want to change the way they think about some of the things we talk about. (Oooh, did you see how I just worked “change” into the conversation?)

This is the point in my presentation when I’ll start to talk about how things were when I went to university (working with analog computers) and when I started my first job (designing mainframe computers). As part of this, we’ll discuss the design tools that were available to us at that time (pencil and paper), how things have evolved (another word for “changed”) since then, and how they are continuing to evolve to this day (as part of which I’ll give examples of EDA vendors who are starting to add artificial intelligence (AI) into their tools).

Oh yes, we’ll also be talking about AI and different flavors of reality (I prefer strawberry), including augmented reality (AR), diminished reality (DR), virtual reality (VR), augmented virtuality (AV) and hyper reality (HR).

Did I mention robots? No? Well, we’ll also be talking about robots and… but I think you get the gist. I would say, “Wish me luck,” but by the time you read this I will be either basking in acclaim with applause ringing in my ears or cowering in the corner with a little tear rolling down my cheek. Oh, what the heck? You can wish me luck anyway!

Darn, Max. From the article’s title, I thought I was going to log onto a rendition of Supper’s Ready by Genesis. Bon Jovi? Harrumph and bother.

I love the early Genesis — I never got to see them with Peter Gabriel, but I saw them twice in the late 1970s and early 1980s with Phil Collins — and they were AWESOME!!!