I tell you, I’m starting to feel like I’m riding the crest of the wave when it comes to AI in all its multifaceted glory. Back in the 2010s, I thought it was astonishing that you could show an AI images of cats, dogs, chickens, and penguins, and it could tell these little rascals apart. I know that may seem “old hat” now, but as recently as … Read More → "EnCharge Me Up! 200 AI TOPS at Only ~8 Watts"

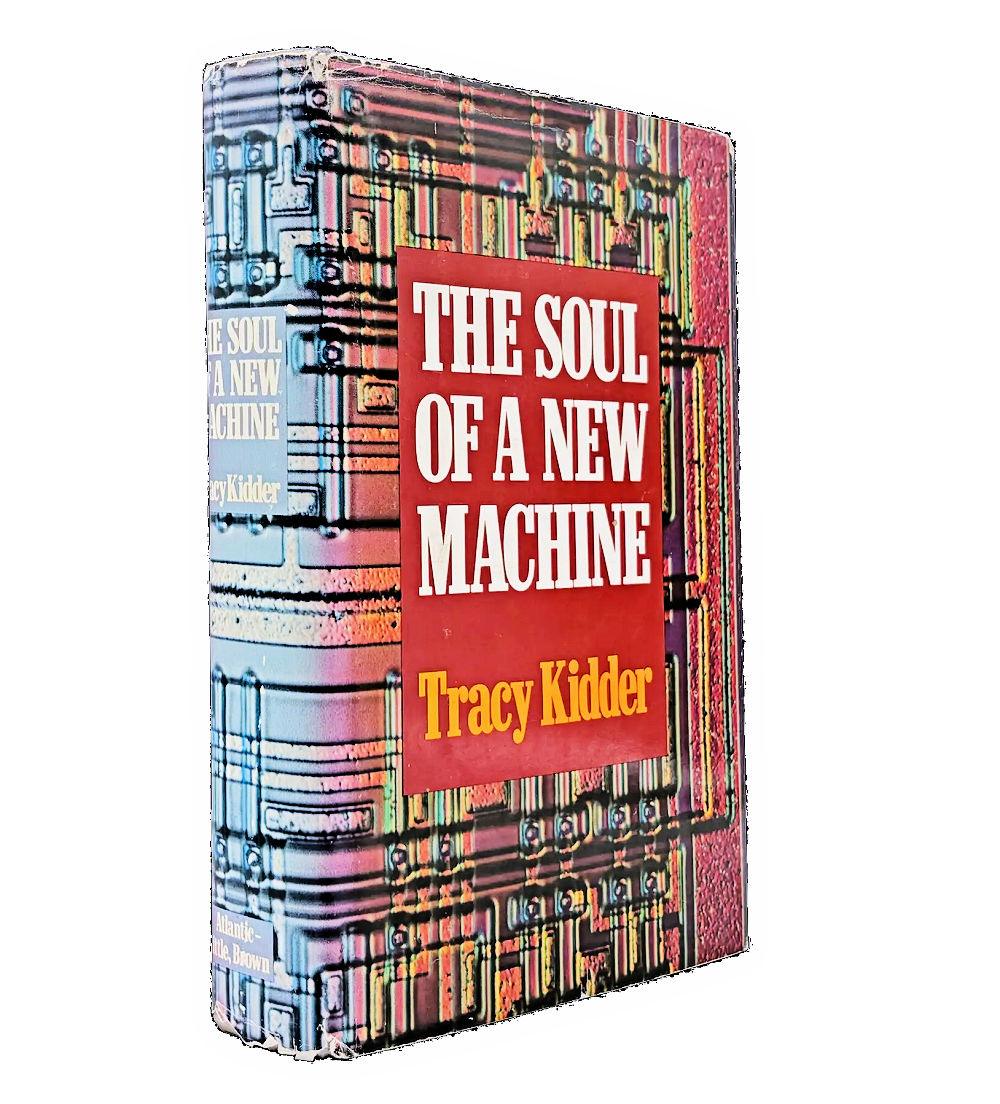

Tracy Kidder, the author of the 1981 best-selling book “The Soul of a New Machine,” has passed away. Kidder wrote many non-fiction books, including his first book, “The Road to Yuba City,” as well as “House,” “Strength in What Remains,” “Mountains Beyond Mountains,” “Old Friends,” “Among Schoolchildren,” “Home Town,” and “Good Prose.” “The Soul of a New Machine” was only his second published book and his only book to win a Pulitzer … Read More → "In Memoriam: Tracy Kidder, author of Pulitzer Prize-Winning “The Soul of a New Machine”"

It’s funny how casually we use the word “connectivity” these days, as though it’s always been part of the conversation. However, speaking as someone who was a small (but perfectly formed) lad in the 1960s, I can assure you that it most certainly wasn’t.

Back then, things didn’t “connect” in the way we think of today—they were simply connected. Our … Read More → "The Day “No Signal” Died: 5G Meets Satellites"

When I was 10 years old, my parents decided I was old enough and responsible enough to catch the bus to school (silly parents). This was in England in the 1960s. We didn’t have dedicated school buses (unlike the bodacious yellow beauties in America); instead, we used standard buses with regular passengers and school kids all jumbled together.

I rode with my friend Jeremy Douglas, who lived … Read More → "High-Performance Ultra-Low-Power AI MCUs Running at Only 0.3V (Eeek!)"

I really wish I could have attended this year’s Chiplet Summit, which took place from February 17 to February 19, 2026. This auspicious event was held at the Santa Clara Convention Center in California. I know this facility well—it’s where I met my first telepresence robot—but that’s a story for another day.

As you can see from the … Read More → "When AI Meets Multi-Die (and Neither Lets Go)"