Once again, my world has been turned upside down. Until recently, I’d been gasping in awe at the myriad “you won’t believe your eyes” numbers flaunted by proponents of the latest and greatest GPUs, like Nvidia’s H200, which boasts nearly four petaflops of AI compute… at least, on paper. So, you can only imagine my surprise to discover that these high-end GPUs can be outperformed by … Read More → "FPGAs Beating GPUs at LLM Inference: Say What?!?"

I’m feeling more than a little existential at the moment. Unfortunately, I don’t think there’s a cream for that. I was going to say, “Don’t worry, it’s not catching.” However, the more I think about what I’m about to tell you, the more I fear we might discover that it is.

The idea that bumblebees shouldn’t be able to fly … Read More → "Bumblebees Can’t Fly, and Humans Can’t Exist (I Have Proof)"

A futurist is a person who studies, analyzes, and makes informed predictions about the future, especially regarding trends in technology, society, business, culture, economics, or science. For example, a technology futurist bouncing around today might examine developments in artificial intelligence (AI), augmented reality (AR), robotics, silicon chip process technologies, and semiconductor packaging, and then discuss how these could reshape edge computing over the next 10 to 20 years.

Sad … Read More → "Powering Kilowatt-Plus Processors"

Hello there. Welcome to 2Q 21C. We hope you’ll enjoy your stay. (2Q 21C is the notation I’ve invented to indicate the second quarter of the 21st century; you’re welcome.)

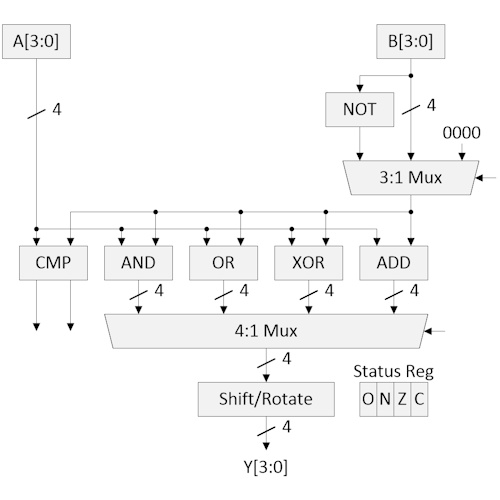

Over the past few years, we’ve been introduced to a cornucopia of new processor designs, many of which target artificial intelligence (AI) and machine learning (ML) applications.

Most of these … Read More → "A 4-Bit CPU for the 21st Century"