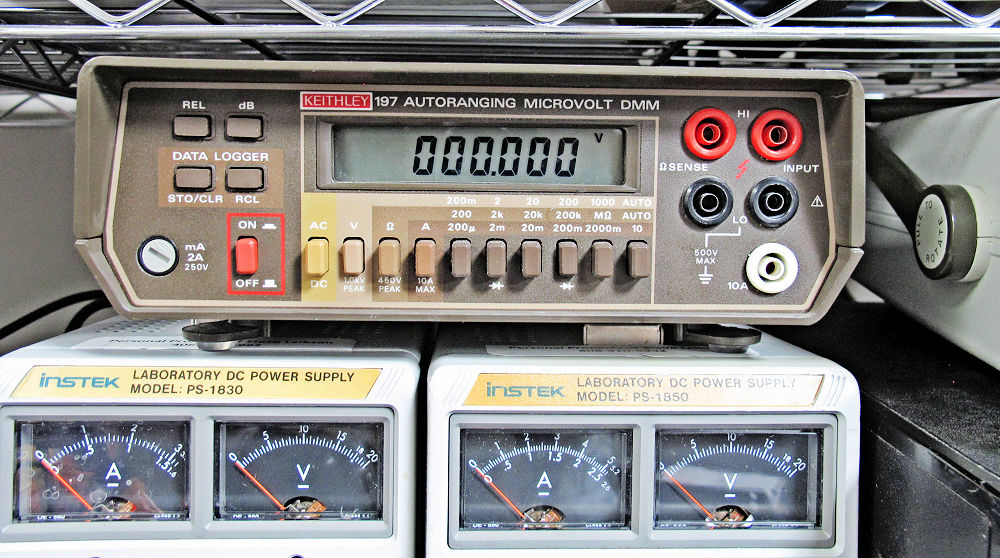

A Tale of Two Multimeters – Part 1: Fixing rubber buttons on the Keithley 197 DMM

I have been building up my home lab these past few years, and I decided that I needed a better multimeter. My original Fluke 77 handheld digital multimeter (DMM) has served me well for more than 40 years, but I wanted more resolution than its 3½ digits. A few years back, in a fit of GAS (gear acquisition syndrome), I purchased a 20,000-count Surpeer AV4 multimeter from Amazon for $40. Alas, the Surpeer is no longer available. Eventually, I wanted a faster meter, a bench meter, so I purchased a dead Keithley 197 for $20 from eBay (plus $20.30 for shipping and tax) and described its …