Today we’re going to talk sound. Two separate stories – one where we can enjoy sound, the other where sound can work for us.

You Sound Well!

Our first story comes from USound, originating in a discussion we had at last fall’s MEMS and Sensors Executive Congress. They’ve developed a small MEMS speaker that can give flat sound-pressure levels (SPLs) from 10 Hz to 16 kHz. That’s below the nominal 20-Hz bottom of the hearing range for humans, and just under the 20-kHz top of the range.

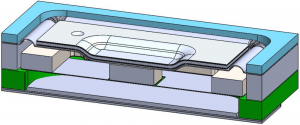

They say that these are the first MEMS earphones; they leverage a piezoelectric membrane that creates the sound. The membrane operates solely in the elastic regime, so there should be no risk of wear-out after long use. It generates low heat and little vibration, and it’s shock resistant. At present they use PZT (lead zirconate titanate), but they’re also working with aluminum nitride; those samples are likely to emerge in mid-2018.

(Image courtesy USound)

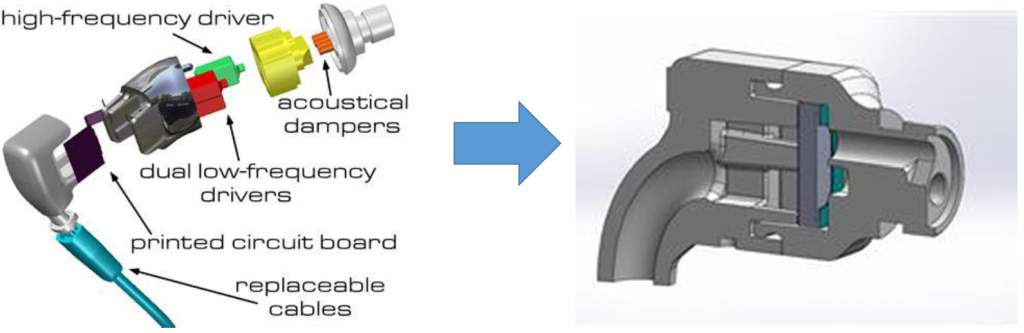

What’s surprising is that they do this with only a single driver (the “driver” in this case being the thing that pushes air around, not low-level software). Incumbent solutions have multiple drivers – even multiple woofers. You have to manage the crossover frequencies, and, overall, it’s more complicated, with more moving parts (literally and figuratively).

(Image courtesy USound)

The resulting headphones are also different from your classic headphones, where every attempt is made to shut out extraneous sounds. Isolation is good from a sound fidelity standpoint, but it also means that you can’t wear them while driving. Or, at least, you can’t wear them in both ears while driving. Might as well have DJ headphones, with one side cocked up.

But the USound headphones are considered “free-field”: the sound that emanates from them mixes with the ambient sound. So you can hear both your music and the siren from that ambulance that’s coming up behind you. That makes it legal to wear them in both ears when driving. There is a sacrifice here: the fidelity is intentionally lower because the ambient sound mixes in, but that’s done in exchange for being able to drive with both sides in.

There are some challenges in putting this solution together. First, our ears are pretty good at noticing when the sound in both ears doesn’t match. So the pieces in each ear have to be carefully coordinated. Obviously, with stereo speakers, the sounds won’t be completely the same on both sides, but it’s important that they be at the same volume and that they be phase-aligned.

The headphones can also be used for noise cancellation. Now… there’s something of a gotcha in this application. For years, noise-cancelling headphones would effectively cancel out only steady, repetitive sounds, like the constant drone of an airplane cabin (a common place where such headphones were used). The cancellation process was slow enough that it needed to sample the sound for some time to get the gist of the periodic wave; by inverting that wave, that sound could be cancelled.

You could experience this happening in real time when you put on the headphones: at first the background noise would still be audible, and then it would gradually fade away. But it literally took seconds.

By contrast, USound say that their speaker has very fast response – fast enough to cancel non-periodic sounds. That means that, as soon as a sound starts, the response has to be ready. A little of the ambient sound might still get heard due to the slight delay, but not much. Ideally, the response is as close to immediate as possible.
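To get a feel for why response speed matters so much, here’s a minimal sketch of what happens when the inverted wave arrives late. It isn’t USound’s algorithm; it simply assumes a pure tone and a perfectly inverted copy delayed by some latency, and the `residual_ratio` helper and the latency values are made up for illustration.

```python
import math

def residual_ratio(freq_hz: float, latency_s: float) -> float:
    """Amplitude left over when a tone is summed with an inverted copy
    of itself that arrives latency_s late, relative to the original tone.

    sin(wt) - sin(w(t - tau)) has amplitude 2*|sin(pi * f * tau)|.
    """
    return 2.0 * abs(math.sin(math.pi * freq_hz * latency_s))

# Hypothetical latencies, chosen only to show the trend at 1 kHz.
for latency_us in (10, 100, 500):
    ratio = residual_ratio(1000.0, latency_us * 1e-6)
    print(f"{latency_us:>4} us late at 1 kHz -> residual {ratio:.2f}x")
```

Under these assumptions, a few microseconds of delay leaves only a few percent of the tone, while a delay of half a period (500 µs at 1 kHz) doubles the tone instead of cancelling it.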

You can also add equalization to boost one frequency range without compromising the others.

These phones use USB-C. They can’t run on Bluetooth or from the audio jack, because neither of those can handle digital audio – and this is a digital solution. It requires USB-C (instead of earlier versions of USB) because of the high voltage that the speakers need.

And as a last note, it’s a small bugger: 4 x 5 x 1.5 mm.

Hollah Back!

Our second story comes from Chirp. They’re using ultrasound for ranging in a product they’ve just announced.

It’s not new to use ultrasound for something like this; we looked at ultrasonic gesture recognition several years ago. And Chirp does gesture recognition too. But that’s a different application, even if it does share some considerations. In this case, ultrasound used for ranging is clearly competing against optical solutions like cameras and lidar. So what makes ultrasound attractive?

The first and most significant reason is completely non-intuitive. We’re used to higher frequencies somehow yielding better precision. But, in fact, it’s exactly the reverse in this situation. And that’s because sound travels more slowly – meaning that the circuits have more time to process. Infrared systems can provide about 1-cm resolution, and that’s with super-fast electronics. Even with slower circuits, ultrasound can give mm-level resolution out to about 5 feet.
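The timing argument can be made concrete with a little time-of-flight arithmetic. This is a back-of-the-envelope sketch, not Chirp’s method: it assumes a simple round-trip echo (distance = speed × time / 2) and sound travelling at roughly 343 m/s in room-temperature air, and the function name is purely illustrative.

```python
# Round-trip time of flight: distance = speed * time / 2.
SPEED_OF_SOUND_M_S = 343.0          # assumed: air at about 20 degrees C
SPEED_OF_LIGHT_M_S = 299_792_458.0

def timing_for_resolution(resolution_m: float, speed_m_s: float) -> float:
    """Round-trip time difference (seconds) that separates two targets
    whose ranges differ by resolution_m."""
    return 2.0 * resolution_m / speed_m_s

ultrasound_dt = timing_for_resolution(0.001, SPEED_OF_SOUND_M_S)  # 1 mm
optical_dt = timing_for_resolution(0.01, SPEED_OF_LIGHT_M_S)      # 1 cm

print(f"ultrasound, 1 mm: {ultrasound_dt * 1e6:.2f} microseconds")
print(f"optical, 1 cm:    {optical_dt * 1e12:.1f} picoseconds")
```

With these assumptions, resolving 1 mm with sound means telling apart echoes about 5.8 microseconds apart, while resolving 1 cm with light means distinguishing returns only about 67 picoseconds apart. That gap is why the slower electronics can still come out ahead.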

It also outperforms optical in challenging light situations like full sun (which tends to blind optical lenses).

The way the field of view is handled is also different from, say, lidar. With lidar, you get not only a sense that something’s out there, but you also get a mapping of where the things are within the field of view. Ultrasound doesn’t work that way; you merely get a return that says something is there and how far away it is, but without any indication of where within the field of view it is. In other words, you get only one out of three dimensions. That said, it can detect and report the presence of up to 5 separate objects.

You can also trade the width of the field of view against the range. For the longest range, you use a 45° field of view. But it’s capable of a full 180° field of view – which compares to what they said is normally a 25° field of view for optical solutions.

The device consists of a transducer co-packaged with a signal-processing ASIC for a small, low-power solution.

We’ve seen before that audio is cool, and, clearly, work continues in that space. Whether leveraged for our enjoyment or for some other work, expect to see more MEMS solutions here in the future.

What do you think of USound’s headphones and Chirp’s ultrasonic ranging?