Security cameras and image sensors are pretty stupid, as we’ve explained before. Yet they’re important for a range of applications that have nothing to do with taking selfies at the beach. We use cameras for inspection, for security, for quality control, for medical diagnosis, and for about a hundred other things.

If you’ve ever dealt with image sensors, you know they’re simple in concept but tricky in practice. You generally get a full image every so often, and maybe a series of partial, interpolated images in between. Your job is to make sense of the images. What changed? What am I looking for? Does the image meet my criteria? How much do I need to filter it to get at the information I really want?

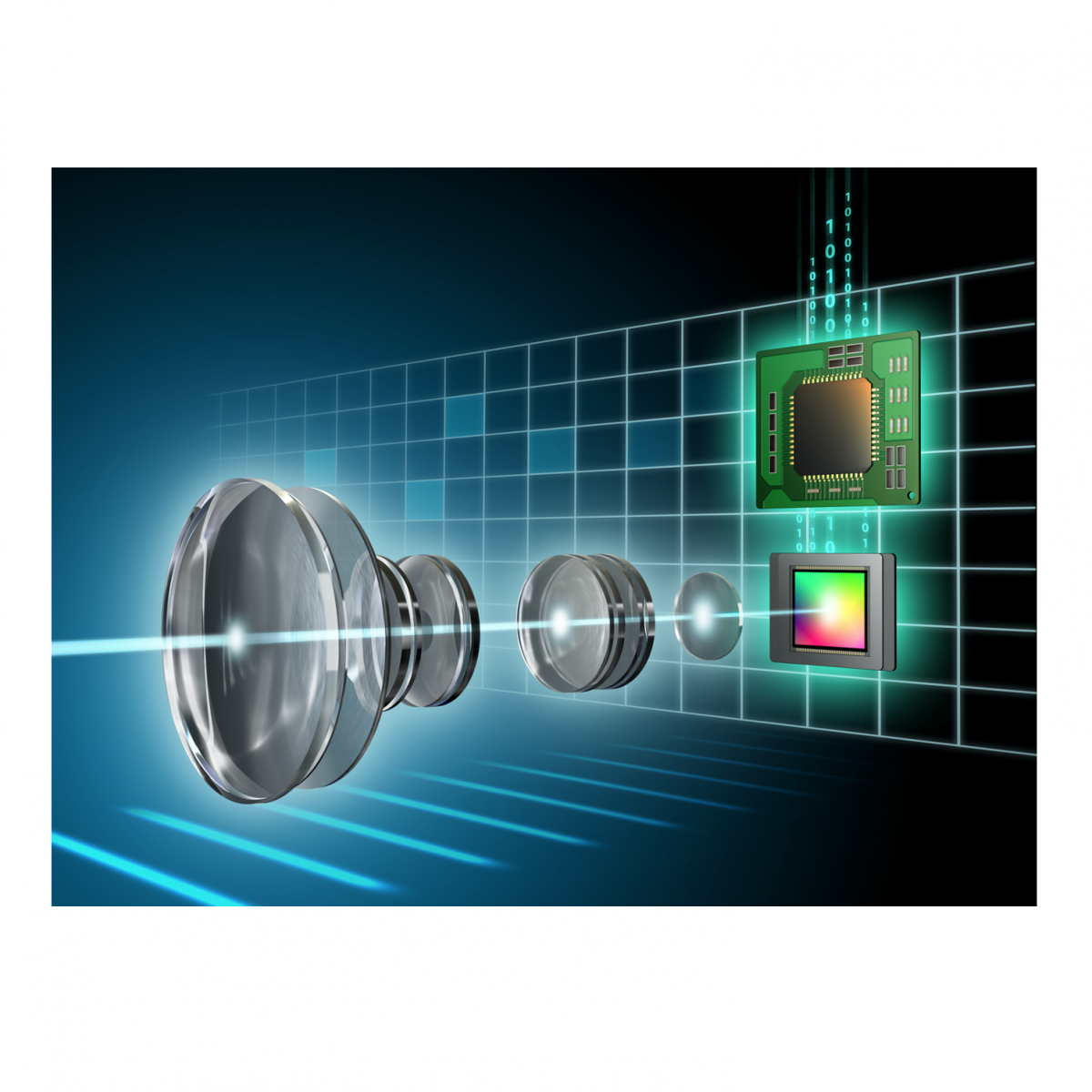

Then there are all the traditional photographer’s problems, like lenses, lighting, exposure, focal length, chromatic aberration, and optical effects that most of us know little about. But hey, once you’ve dialed all of that in, you’re ready to start processing images. Lots and lots of images.

With this as background, a French startup has decided to overhaul the whole idea of the embedded camera and create a new type of image sensor — one that doesn’t need so much babysitting because it does much of the work for you. It’s not just a conventional CMOS image sensor with an MCU or an AI accelerator attached. It’s a different type of device that works in a unique way.

Paris-based Prophesee says its image sensors use “neuromorphic vision that works like the human eye.” That’s a bit confusing and hyperbolic, but basically correct. The key difference is that Prophesee’s image sensors don’t capture images. They look for changes in images and report only that.

That sounds like a trivial difference, but it changes the whole equation when you’re designing a security camera, inspection system, or industrial monitor. The company believes its Metavision image sensors will make less work for programmers, save energy compared to conventional image sensors, and produce better and more accurate results. A win all the way around. But there are some drawbacks.

Normal cameras take pictures at a given frame rate. They’re a series of snapshots, really, and finding the differences between one frame and the next is often the first step to analyzing the images. What changed from the previous frame? Do I care? Is it important?

Frame-by-frame image capture uses either a lot of bandwidth or a lot of processing power to compress the images and save bandwidth. Either way, you’re analyzing frames or comparing frames to each other, looking for significant differences. Higher frame rates produce smoother motion and a more accurate representation of reality, but at the cost of more data, more bandwidth, or more aggressive compression.
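To make that frame-comparison step concrete, here is a minimal sketch of naive frame differencing in Python. The function name, the threshold value, and the tiny 3×4 "frames" are all hypothetical, chosen only for illustration; real pipelines typically add filtering and run on much larger arrays.

```python
def changed_pixels(prev_frame, curr_frame, threshold=10):
    """Return (row, col) coordinates whose brightness changed by more than threshold.

    Frames are lists of rows of integer brightness values (hypothetical format).
    """
    changed = []
    for r, (prev_row, curr_row) in enumerate(zip(prev_frame, curr_frame)):
        for c, (before, after) in enumerate(zip(prev_row, curr_row)):
            if abs(after - before) > threshold:
                changed.append((r, c))
    return changed


# A bright spot moves one pixel to the right between two frames.
prev_frame = [
    [50, 50, 50, 50],
    [50, 200, 50, 50],
    [50, 50, 50, 50],
]
curr_frame = [
    [50, 50, 50, 50],
    [50, 50, 200, 50],
    [50, 50, 50, 50],
]

moved = changed_pixels(prev_frame, curr_frame)
print(moved)  # [(1, 1), (1, 2)]
```

Note that every pixel in both frames gets read and compared, even though only two of them changed. That per-frame cost is what the rest of this article is about avoiding.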

In contrast, each individual pixel in Prophesee’s device is sensitive to motion. A pixel never captures an image; instead, it continuously monitors the light level at its photodiode and reports when that level changes. If the scene in front of the image sensor is perfectly static, the device produces no output. But if something moves, the affected pixels, perhaps several hundred of them, fire off signals to an external host processor. The result effectively outlines the motion edges.
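A rough software model of one such pixel might look like the sketch below. It assumes the pixel fires when the logarithm of its light level drifts from the last reported level by more than a fixed contrast threshold, and that each event carries a polarity (brighter or darker). That is a common textbook model of event-based pixels, not a description of Prophesee's actual circuit; the function name, threshold, and sample format are hypothetical.

```python
import math


def pixel_events(samples, contrast_threshold=0.2):
    """Simulate one change-detecting pixel.

    samples: list of (timestamp_us, light_level) pairs with light_level > 0.
    Returns a list of (timestamp_us, polarity) events, where polarity is
    +1 for "got brighter" and -1 for "got darker".
    """
    events = []
    reference = math.log(samples[0][1])  # level at the last reported event
    for timestamp_us, level in samples[1:]:
        delta = math.log(level) - reference
        if abs(delta) >= contrast_threshold:
            events.append((timestamp_us, 1 if delta > 0 else -1))
            reference = math.log(level)  # re-arm around the new level
    return events


# A pixel staring at a static wall: constant light, so no output at all.
static_pixel = [(t, 100.0) for t in range(0, 1000, 100)]

# A pixel that a bright edge passes over and then leaves.
edge_pixel = [(0, 100.0), (100, 100.0), (200, 150.0), (300, 150.0), (400, 100.0)]

print(pixel_events(static_pixel))  # []
print(pixel_events(edge_pixel))    # [(200, 1), (400, -1)]
```

The static pixel produces nothing, and the edge pixel produces exactly two events, one when the edge arrives and one when it leaves.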

Each pixel in the sensor operates asynchronously from all the others; there’s no clock, and there’s no concept of frame rate. Pixels simply report change when they see it, usually within microseconds or milliseconds, which would equate to a frame rate of many thousands of frames/sec. This high equivalent frame rate allows the chip to track very fine motion with high accuracy. By comparison, a conventional camera at 60 fps would miss 99.99% of the motion data. That’s fine if you’re tracking bowling balls, which aren’t very sprightly. But Prophesee expects its devices to be used for medical inspection, vibration monitoring, and small-particle tracking, among other uses.
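The arithmetic behind an "equivalent frame rate" is easy to sketch. The model below is a deliberate simplification and an assumption of ours, not the company's: it treats each pixel's response time as if it were a frame period, and counts a 60 fps camera as "seeing" only the fraction of those instants that line up with its own frames. The function names are hypothetical.

```python
def equivalent_fps(response_time_s):
    """Frame rate whose frame period equals the pixel's response time."""
    return 1.0 / response_time_s


def fraction_missed(camera_fps, response_time_s):
    """Share of event-sensor time samples a frame-based camera never sees."""
    return 1.0 - camera_fps / equivalent_fps(response_time_s)


def response_time_for(camera_fps, missed):
    """Response time (seconds) at which the camera misses the given fraction."""
    return (1.0 - missed) / camera_fps


for response_time_s in (1e-3, 100e-6, 10e-6):
    fps = equivalent_fps(response_time_s)
    missed = fraction_missed(60, response_time_s)
    print(f"{response_time_s * 1e6:7.0f} us -> {fps:9.0f} fps equivalent, "
          f"60 fps camera misses {missed:.2%}")

# Response time implied by a 99.99% miss rate under this model.
implied_s = response_time_for(60, 0.9999)
print(f"99.99% missed implies a response time of about {implied_s * 1e6:.2f} us")
```

Under these assumptions, a 100-microsecond response time works out to 10,000 fps equivalent, and the 99.99% figure corresponds to a response time of a microsecond or two, at the fast end of the range quoted above.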

The company points to mechanical vibration monitoring and predictive maintenance as ideal use cases. Every pixel operates autonomously, so each can adjust its own exposure rather than sharing a single camera-wide setting, which simplifies the task of lighting a greasy old motor. High-frequency vibration would normally require a camera with a high frame rate and lots of buffering, but that’s not an issue here. Each pixel can monitor almost any arbitrary frequency independently of the others, on any visible part of the machinery.
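As a sketch of how per-pixel frequency monitoring could work on the host side, the Python below estimates a vibration frequency from one pixel's event timestamps. It assumes events arrive as (timestamp in microseconds, polarity) pairs and that a vibrating edge triggers one "brighter" event per cycle. Both are assumptions made for illustration, as is the function name; none of this is taken from Prophesee's SDK.

```python
from statistics import median


def estimate_frequency_hz(events):
    """Estimate vibration frequency from one pixel's events.

    events: list of (timestamp_us, polarity) pairs, sorted by time.
    Uses the median gap between consecutive positive-polarity events,
    which tolerates an occasional missed or spurious event.
    Returns None if there are fewer than two positive events.
    """
    on_times = [t for t, polarity in events if polarity > 0]
    if len(on_times) < 2:
        return None
    intervals = [later - earlier for earlier, later in zip(on_times, on_times[1:])]
    return 1e6 / median(intervals)


# Synthetic 2 kHz vibration: a "brighter" event every 500 us,
# with a "darker" event halfway through each cycle.
events = []
for cycle in range(10):
    start_us = cycle * 500
    events.append((start_us, 1))
    events.append((start_us + 250, -1))

print(estimate_frequency_hz(events))  # 2000.0
```

Because each pixel has its own event stream, the same function could be run separately for different spots on the machine, with no frame buffer in sight.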

Prophesee’s event-driven design philosophy seems like a recipe for spiky bursts of data that might be hard to buffer. Luca Verre, company cofounder and CEO, says that’s not the case. “It’s less data, more information. Even the worst-case scenario of shaking the camera up and down” doesn’t produce a ton of information, he says. Walls, floors, backgrounds, and other components of a natural scene are redundant and get ignored. It’s only the edges of motion that get reported, not every infill pixel. That “sparseness,” he says, provides the data you want without the clutter you don’t want.

The challenge is rewriting existing code, which was designed for frame-by-frame analysis. To that end, Prophesee is providing an event-based SDK and an ongoing investment in software development.

For those times when you really do want the full image, Prophesee’s sensor won’t help you. It never captures a photo; it reports only on what’s moved. It also doesn’t detect color, for the same reason. In some ways, Prophesee’s image sensor is closer to a lidar or radar sensor than a conventional CMOS image sensor.

Verre points out that radar and lidar are active arrays, whereas his company’s sensor is passive (assuming there’s an ambient light source). Lidar sensors need to shoot out frickin’ laser beams (and radar sends radio pulses), which then reflect back and are processed by the array. Both produce a point cloud with distance information, whereas Prophesee’s sensors are “flat,” in the sense that, like cameras, they don’t inherently perceive distance. Radar and lidar are also synchronous and frame based, so detecting motion means analyzing consecutive frames. In the case of an automotive ADAS application, that might take a significant fraction of a second before you can tell if the car ahead is moving, whereas Prophesee’s chip might be 10× quicker.

As exotic as all of this sounds, Prophesee is already on its third generation of sensor chips, with Gen4 due “soon.” Sounds like there’s a lot of movement inside the development lab.