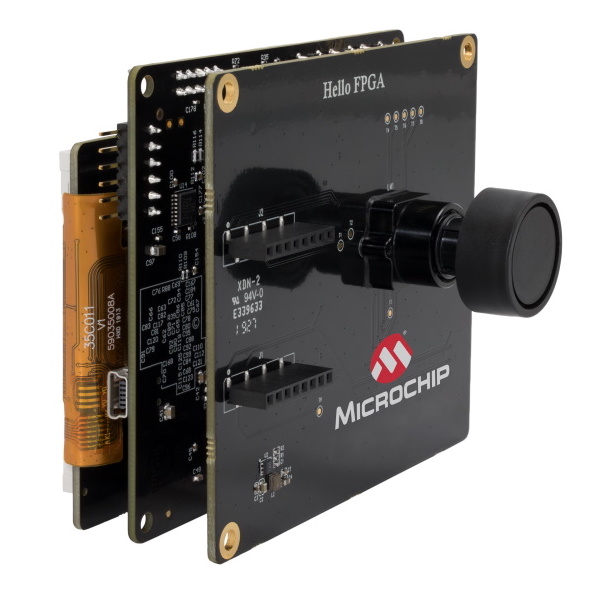

Endpoint AI FPGA Acceleration for the Masses

Let’s face it. Just about anybody can throw a few billion transistors onto a custom 7nm chip, crank up the coulombs, apply data center cooling, put together an engineering team of a few hundred folks, including world-expert data scientists, digital architecture experts, software savants, and networking gurus, and come up with a pretty decent AI inference engine. There’s nothing to it, really.

But there are a lot of applications that really do need AI inference at the edge – when there just isn’t time to ship the …