I’ve been thinking a lot about the impermanence of data recently. A lot of my own documents and images that used to travel through time with me in the physical realm* – photographs, letters, journals – seem to have become lost in the mists of the past (*as opposed to using my time machine, which is currently hors de combat — you simply cannot lay your hands on spare parts in the time and place I currently hang my hat).

In some respects, the situation is worse in the case of computers, where things can be lurking right under your nose – you know they are there, but you simply cannot track the little rascals to their lairs. I just checked the Dropbox folder in which I keep all of my data. I’m up to 49,893 files stored in 8,321 folders consuming 66.5 gigabytes. I tend to follow a fairly rigorous file and folder naming and organizational strategy, so most of the time there’s no problem, but every now and then I run into an MFS (missing file situation), which occasionally results in much gnashing of teeth and rending of garb, let me tell you.

I seem to recall once reading that, when Apollo 11 set off on its mission to the moon, in addition to all of the photographs and videos and suchlike, NASA also commissioned one or more artists to capture the scene in oil paintings using the same canvas materials, pigments, and techniques as the great masters, such as Leonardo da Vinci, on the basis that we can be confident these last for at least 600 years.

I know what you are going to ask. Some cave paintings are 40,000 to 50,000 years old (one in the Maltravieso cave in Cáceres, Spain, has been dated to more than 64,000 years old), so why didn’t NASA opt for cave paintings? To be honest, I would have thought the answer was obvious: there weren’t any suitable caves near Apollo’s launch site at the Kennedy Space Center on Merritt Island, Florida.

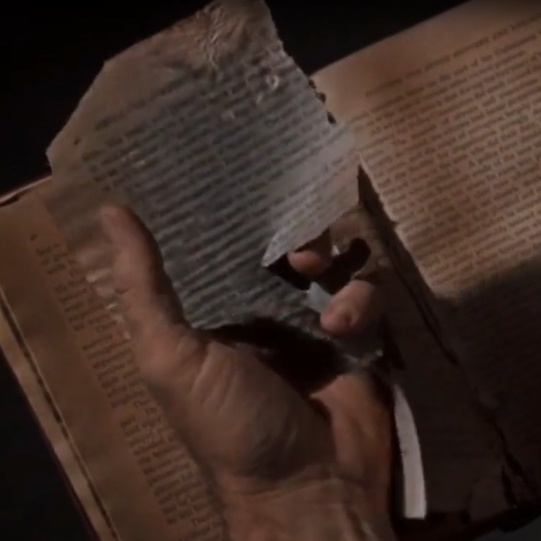

One movie that has really stood the test of time is the 1960 version of The Time Machine by H.G. Wells. And one of the most haunting scenes is where the inventor (played by Rod Taylor) is trying to discover how things came to be as they are in the future, so he asks some of the Eloi, “Do you have books?” One replies, “Yes, we have books,” and leads the inventor to them. As we see in this video snippet, however, the uncared-for tomes crumble to bits in his hands.

As one of the commenters to this snippet so eloquently said: “The logic of this scene applies so tragically well to media preservation today, and as we spiral down into an all-digital age we draw closer and closer to watching the things we love crumble to dust in our hands as well.”

I’m sorry, I can’t help myself. This just reminded me of the Medieval helpdesk sketch, which features a hapless monk who is trying to make the transition from tried-and-trusted scrolls to newfangled book-based technology.

While we are in a video mood, there’s another video snippet that’s relevant to this column. We return to The Time Machine. This is the part where Weena (played by Yvette Mimieux) takes the inventor to see the “talking rings.”

These “talking rings” represent a fictional audio recording and archival technology; when spun, they describe events from long ago. When I first saw this film as a bright-eyed, bushy-tailed young lad, I remember experiencing a feeling of hope that we would one day have a technology like this that would preserve our data through the ages (I’m a much sadder and wiser man now).

On the other hand, it may not be too long before we do have a truly long-term data archival system. As we see in this video, Microsoft’s Project Silica is intended to use femtosecond lasers to store vast quantities of information inside hard silica glass sheets that can withstand being boiled in water, baked in a standard oven, zapped in a microwave oven, exposed to strong magnetic fields, and even scoured with a scratchy cloth (“How do ya’ like them apples, DVDs?”).

The two main issues with Project Silica are (a) it’s not available yet and (b) it’s a write-once technology, which means that data cannot be changed after it has been stored in the glass. As it says in the Rubáiyát of Omar Khayyám, “The Moving Finger writes; and, having writ, Moves on: nor all thy Piety nor Wit Shall lure it back to cancel half a Line, Nor all thy Tears wash out a Word of it.” I don’t know about you, but it sounds to me that the author well knew the problems associated with WORM (write-once, read-many) technology.

When it comes to today’s computers, a huge player in the non-volatile memory space (“where no one can hear you scream”) is the solid-state drive (SSD), which is based on Flash memory. To be honest, I really don’t know as much about these little rascals as I should.

Some of the things I do know are that there are different types of underlying Flash memory technologies, such as single-level cell (SLC), multi-level cell (MLC), and triple-level cell (TLC), with even higher-density technologies on the horizon, and that migrating from one technology to another is not as easy as you might think. I also know that SSDs degrade over time – each Flash cell can endure only some maximum number of program/erase (write) cycles – but that the resulting bit errors can be mitigated using ECC (error-correcting code) techniques.

One last thing I know is that the longevity of an SSD is strongly dependent on the profile of the data that’s being run through it. As an over-the-top example, suppose we had an industrial system intended to log data. Let’s further suppose that this system wishes to store a single byte once each second. The problem is that, as far as the drive is concerned, an entire page (say 4 KB) has to be written at once, so attempting to write bytes on an individual basis means the Flash absorbs thousands of times more writes than the data warrants, which will quickly degrade the drive beyond any usefulness.
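To put some rough numbers on this, here is a back-of-the-envelope sketch in Python. It assumes the worst case described above, in which every one-byte write costs a full 4 KB page; real drives and file systems may cache or coalesce writes, so treat the function names and figures as illustrative assumptions rather than measurements.

```python
PAGE_SIZE_BYTES = 4 * 1024     # page size from the example (assumed, not a spec)
WRITES_PER_DAY = 24 * 60 * 60  # one write per second


def pages_needed(payload_bytes: int, page_size_bytes: int) -> int:
    """Number of whole pages a single write of payload_bytes occupies."""
    return -(-payload_bytes // page_size_bytes)  # ceiling division


def write_amplification(payload_bytes: int, page_size_bytes: int) -> float:
    """Bytes written to Flash per byte of payload, if every write
    programs whole pages (worst-case assumption)."""
    flash_bytes = pages_needed(payload_bytes, page_size_bytes) * page_size_bytes
    return flash_bytes / payload_bytes


def flash_bytes_per_day(payload_bytes: int, page_size_bytes: int,
                        writes_per_day: int) -> int:
    """Total bytes programmed into Flash per day under the same assumption."""
    return (pages_needed(payload_bytes, page_size_bytes)
            * page_size_bytes * writes_per_day)


amplification = write_amplification(1, PAGE_SIZE_BYTES)
daily_flash = flash_bytes_per_day(1, PAGE_SIZE_BYTES, WRITES_PER_DAY)
print(f"amplification: {amplification:.0f}x, Flash bytes/day: {daily_flash:,}")
```

Under these assumptions, roughly 86 KB of actual log data per day turns into about 354 MB of Flash writes per day.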

As I noted, the previous example was a bit over-the-top, but the fact remains that the data profile can have a big effect on the life of the drive. One solution to our byte-at-a-time problem would be to buffer the data until it was possible to write an entire 4 KB page (with mechanisms in place to ensure an emergency write in the case of an unexpected power-down situation).
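The buffering idea can be sketched in a few lines of Python. This is a minimal illustration, not anyone’s production logger: the `PageBuffer` class, the `write_page` callback, and the zero-padding on an emergency flush are all assumptions made for the example (a real record format would also need to store how many bytes in the final page are valid).

```python
from typing import Callable, List


class PageBuffer:
    """Collects small records in RAM and passes them on one full page at a time."""

    def __init__(self, write_page: Callable[[bytes], None], page_size: int = 4096):
        self._write_page = write_page  # hypothetical "write one page to the drive" hook
        self._page_size = page_size
        self._buf = bytearray()

    def append(self, data: bytes) -> None:
        """Add data; emit a page each time a full page has accumulated."""
        self._buf.extend(data)
        while len(self._buf) >= self._page_size:
            self._write_page(bytes(self._buf[:self._page_size]))
            del self._buf[:self._page_size]

    def flush(self) -> None:
        """Emergency write: pad any partial page with zeros and emit it,
        e.g., when a power-fail warning arrives."""
        if self._buf:
            self._write_page(bytes(self._buf).ljust(self._page_size, b"\x00"))
            self._buf.clear()


# Usage: a list stands in for the drive in this sketch.
pages: List[bytes] = []
logger = PageBuffer(pages.append)
for second in range(5000):          # 5,000 one-byte samples
    logger.append(bytes([second % 256]))
logger.flush()                      # simulate the power-down path
print(f"{len(pages)} page writes instead of 5000")
```

The trade-off is that anything still sitting in the buffer is at risk until it is flushed, which is why the emergency-write mechanism matters.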

The point here is that you typically cannot just go by the SSD specifications. If you have a system that’s supposed to run for 10 years, your SSD spec may look good, but if you don’t manage your data writing habits appropriately, you may render the SSD unworkable long before its time. I’m no expert here, but I’ll take a WAG (wild ass guess) that this wouldn’t be a good thing to have happen.

What’s required is some way to evaluate the SSD in the context of the real-world data workload it’s expected to address and see how long the drive will survive. Based on these evaluations, you might “tweak” the way you handle your data to see how this will affect the longevity of the drive.
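As a rough illustration of this kind of “what if” evaluation, the Python sketch below estimates how long a drive might last for a given workload. Everything in it is an assumption for the sake of the example: the function name, the idea of a single endurance figure expressed as total terabytes of Flash writes, and the sample numbers. A real evaluation would use the vendor’s endurance data and measured workloads.

```python
def estimated_lifetime_years(flash_endurance_tb: float,
                             host_writes_gb_per_day: float,
                             write_amplification: float = 1.0) -> float:
    """Years until the (assumed) Flash write budget is used up.

    flash_endurance_tb: hypothetical total Flash writes the drive can absorb, in TB.
    host_writes_gb_per_day: data the application writes per day, in GB.
    write_amplification: Flash bytes written per host byte for this workload.
    """
    if host_writes_gb_per_day <= 0 or write_amplification <= 0:
        raise ValueError("workload figures must be positive")
    flash_tb_per_day = host_writes_gb_per_day * write_amplification / 1000.0
    return flash_endurance_tb / flash_tb_per_day / 365.0


TARGET_YEARS = 10  # planned product life from the example in the text

# Same drive, same data, two ways of handling the writes (illustrative figures).
for label, waf in (("small unbuffered writes", 50.0), ("page-sized buffered writes", 1.5)):
    years = estimated_lifetime_years(100.0, 1.0, waf)
    verdict = "meets" if years >= TARGET_YEARS else "misses"
    print(f"{label}: about {years:.1f} years, {verdict} the {TARGET_YEARS}-year target")
```

The point of the sketch is simply that the same drive can look fine or hopeless depending on the write amplification the workload induces.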

Another thing that’s required is to monitor the status of the drive remotely when it’s deployed in the field. It may be that changes in environmental conditions (e.g., the drive ended up being mounted in a system that’s being subjected to much harsher conditions than were originally envisaged) or modifications to the data workload negatively impact the drive’s life expectancy.

One analogy I heard with regard to an SSD and its data workload is to compare it to a car. You might get anywhere from 200 to 400 miles out of a tank of gas depending on how fast and hard you drive the car. Similarly, the harder you drive the car, the shorter its ultimate lifespan will be.

The bottom line is that developers and administrators need some way to monitor the drive throughout the lifecycle of the product. Now, as it turns out, way back in the mists of time when SSDs were starting out, they were something of a black box, and it was recognized that developers and administrators required some way to see how well the drives were doing. Thus, the SSDs’ creators provided about 10 attributes that can be monitored. The downside here is that these attributes are somewhat cryptic in nature, and it takes a lot of wrangling to extract any useful and meaningful information from them.

All of which leads us to a company called Virtium (pronounced “vur-tee-um”), where this moniker is derived from the word virtue, from the Latin virtus, meaning “valor, merit, or moral perfection.”

Virtium’s SSDs span a range of form factors, interfaces, and capacities to address virtually any industrial embedded system’s data storage requirements (Image source: Virtium).

Virtium, which has been around for about 25 years, specializes in industrial-grade memory and industrial-grade SSDs. What this means is that, although the folks at Virtium do offer high-speed and high-capacity SSDs, their forte – their raison d’être, as it were – is rugged, high-reliability, low-power, and small form-factor SSDs that can survive the rigors of an industrial environment.

Even better, especially from a security point of view, all of Virtium’s design and manufacturing is performed in the USA, all loading of firmware is undertaken in their own factory, and everything is 100% checked before it goes out of the door. (See also “Holy Security Systems Batman! Our Boards are Bugged!” and “Securing the Silicon Supply Chain.”)

As an aside, I remember the days when having a system with 64 KB of memory and two 1.4 MB floppy disks serving as hard drives was considered to be uber-exciting, so the fact that the guys and gals at Virtium offer a 1 TB SSD that’s narrower than a postage stamp makes me shake my head in disbelief (note that the word “Forever” is crossed out on the stamp in the image below because the USPS doesn’t like people posting pictures of stamps in case some little rascal decides to print them out and use them to send letters).

Virtium’s StorFly M.2 small-form-factor SSDs are narrower than a postage stamp (Image source: Virtium).

There are all sorts of other cool things we could talk about, like on-the-fly encryption of the data as it’s stored on the SSD, which means said data can’t be read by an unauthorized player if the drive is stolen. There’s also a capability for secure erase, which is of interest to classified and military projects. In this case, it’s possible to wipe the drive and delete the data using both hardware and software techniques if someone attempts to use an incorrect password more than a specified number of times or if the system detects any attempts to tamper.

But none of this is what I wanted to tell you about (sorry).

The exciting news here is that the chaps and chapesses at Virtium have just introduced a suite of software modules under the umbrella name of StorKit, which is free of charge to Virtium customers.

StorKit features three main modules: vtView, vtSecure, and vtTools. These modules can be embedded in your own system software, which can make calls to them via APIs. Furthermore, these modules can access and monitor the aforementioned 10 cryptic SSD parameters and present the information in a way that makes sense to human beings.

vtView monitors an SSD’s health and predicts its lifespan. It enables you to conduct weekly analyses and predict future maintenance requirements. vtView uses live workloads and usage to predict if the SSD’s lifespan will be less than the planned life of the product. It also provides actionable data for scheduling predictive maintenance and creating alerts.
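To give a feel for what this sort of prediction involves, here is a deliberately simple Python sketch. To be clear, this is not vtView’s API or algorithm (I haven’t seen either); it just fits a straight line to hypothetical weekly “percent of life used” readings and extrapolates to 100%. The function names and sample values are invented for illustration.

```python
from typing import Optional, Sequence, Tuple


def projected_days_remaining(samples: Sequence[Tuple[float, float]]) -> Optional[float]:
    """Estimate days left after the last sample until wear reaches 100%.

    samples: (day, percent_life_used) pairs in chronological order.
    Returns None if the fitted wear trend is flat or decreasing.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    n = len(samples)
    mean_day = sum(day for day, _ in samples) / n
    mean_wear = sum(wear for _, wear in samples) / n
    sxx = sum((day - mean_day) ** 2 for day, _ in samples)
    if sxx == 0:
        raise ValueError("samples must be taken at different times")
    sxy = sum((day - mean_day) * (wear - mean_wear) for day, wear in samples)
    slope = sxy / sxx  # least-squares wear rate, in percent per day
    if slope <= 0:
        return None
    _, last_wear = samples[-1]
    return (100.0 - last_wear) / slope


def needs_alert(samples: Sequence[Tuple[float, float]],
                planned_days_remaining: float) -> bool:
    """True if the drive is projected to wear out before the product's planned end of life."""
    remaining = projected_days_remaining(samples)
    return remaining is not None and remaining < planned_days_remaining


# Hypothetical weekly readings: (day, percent of life used).
weekly = [(0, 0.0), (7, 0.5), (14, 1.0), (21, 1.5)]
print(projected_days_remaining(weekly), needs_alert(weekly, 10 * 365))
```

A real tool would presumably account for changing workloads and environmental conditions rather than assuming a constant wear rate, but the basic question is the same: will the drive outlast the product?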

vtTools provides a suite of foundational software modules you can build on to implement tests and diagnostics within your embedded systems. vtTools provides access to the storage device without going through the file system, thereby enabling you to develop test applications that would be hard to implement if the file system were in-between.

Last, but certainly not least, vtSecure provides storage security features in your systems without the need for you to understand the intricacies of security protocols and programming sequences in storage specifications such as ATA/SATA. Most of the work required to control storage security is done for you. You specify the operation you want (security setup, secure erase, sanitize, or TCG Opal setup), then you leave vtSecure to handle the vast majority of the nitty-gritty protocol work.

To put all of this in a nutshell, what would have previously required hundreds or thousands of lines of hand-crafted code can now be achieved with a couple of calls to the appropriate StorKit module’s API.

All I can say is that, in these days of data transience, evanescence, ephemerality, and insubstantiality, it gives me a warm glow inside to know that the little scamps at Virtium have us covered with regard to any data stored on their industrial-grade SSDs. (And you thought I couldn’t bring this column home to roost – shame on you!)
