I can’t help myself. I keep thinking about the 1961 musical Stop the World—I Want to Get Off. After opening in Manchester, England, the show transferred to the West End, London, where it ran for 485 performances.

It’s not that the plot of this extravaganza has anything to do with what we are talking about here. It’s just that the sentiment embodied by the show’s title reflects the way I’m currently feeling about artificial intelligence (AI) and machine learning (ML).

On the one hand, the current state of play with AI and ML is tremendously exciting. On the other hand, I’m starting to think that I’ve enjoyed all the excitement I can stand.

Just a couple of weeks ago, for example, my chum Rick Curl emailed me to say: “Hi Max, I was just tinkering with ChatGPT and I tried this chat: ‘Write an article about semiconductor technology in the style of Max Maxfield’. Here’s the response:”

A Thrilling Journey into the World of Microelectronics

Hello, my dear fellow electronics enthusiasts! I’m Max Maxfield, your friendly neighborhood electronics guru, and I’m here today to take you on an exciting journey into the world of semiconductor technology.

As you may know, semiconductors are materials that have electrical conductivity between that of a conductor and an insulator. These materials are essential in the world of microelectronics and have changed the way we live, work, and communicate…

There was a bunch more. It was very well written and reasonably accurate. Rick was kind enough to say, “While the article was interesting, I certainly would not say it was written by you! No need to worry… YET!” It was the “YET” that sent shivers running up and down my spine.

I’m sure you don’t recall my telling you that I recently started to write a book describing the various things I experienced when I was a young lad—something along the lines of the Ramona books by Beverly Cleary. The reason you don’t recall this nugget of knowledge is that I’ve never shared this before.

One of my stories describes a Saturday when my friend Jeremy and I (both around 7 years old) attempted to dig a hole to Australia in my back yard (it was right at the back, out of sight of the house). When it started raining, we camouflaged our hole so no one could see it, after which we stopped work for the day. I can’t remember why we camouflaged the hole. Maybe we were worried that someone would steal it.

Later that afternoon, I was sitting next to my dad on the sofa watching cartoons on TV when my mum entered the room. She was covered from head to foot in mud. “What on earth happened to you?” asked my dad. I’m not sure how mum replied because I suddenly remembered that I had a pressing engagement elsewhere.

The reason I’m waffling on about this here is that, in the fullness of time, I want each of my vignettes to be accompanied by a little pencil sketch—a bit like the ones in the Winnie the Pooh books. I’m looking forward to working with a human artist to generate just what I’m envisaging. Apart from anything else, we’re going to want to have the images of the same two boys as they grow from 1 year to 11 years old.

Having said this, on a whim, I just went to Stable Diffusion Online, which offers a free Stable Diffusion artwork generator with no login required and a license to use the generated images as you see fit (so long as you don’t do so for nefarious purposes or break any laws). In the Prompt field I entered something like “Pencil sketch of two small boys in boots and shorts digging a hole in a garden with shovels.” The following is what it came up with:

AI-generated artwork (Source: Stable Diffusion Online)

This isn’t quite what I’m looking for, but it’s awesome as a placeholder. The scary thing is that generating this image took only about 10 seconds!

But we digress… The trigger for my current cogitations was that I was just chatting with Nandan Nayampally, who is the Chief Marketing Officer at BrainChip. It seems like yesterday—but it was almost a year ago to the day—that I posted a column Brain Chips are Here! This described how the folks at BrainChip were taking orders for Mini PCIe boards flaunting the first generation of their Akida platform in the form of their AKD1000 neuromorphic chips.

Even though it’s been around for only one year, the Akida 1.0 platform has enjoyed tremendous success. It has been used by the chaps and chapesses at a major automobile manufacturer to demonstrate a next-generation human interaction in-cabin experience in one of their concept cars; by the folks at NASA, who are on a mission to incorporate neuromorphic learning into their space programs; and by a major microcontroller manufacturer, which is scheduled to tape out an MCU augmented by Akida neuromorphic technology in the December 2023 timeframe. And this excludes all of the secret squirrel projects that we are not allowed to talk about.

Just to make sure we’re all tap-dancing to the same drum beat, although the folks at BrainChip make their devices like the AKD1000 available for evaluation and prototyping purposes, and while they will be happy to sell these devices to you if you wish, their main business model is to license their neuromorphic platform in the form of IP that you integrate into your own SoC designs.

Now, just 12 months later, the guys and gals at BrainChip are announcing the second generation of their Akida platform, whose mission it is to drive extremely efficient and intelligent edge devices for the artificial intelligence of things (AIoT) solutions and services market that is expected to be $1T+ by 2030.

There’s so much to talk about here that I’m afraid we’re going to have to take a speed run at it, so scrunch down in your chair and hold on tight.

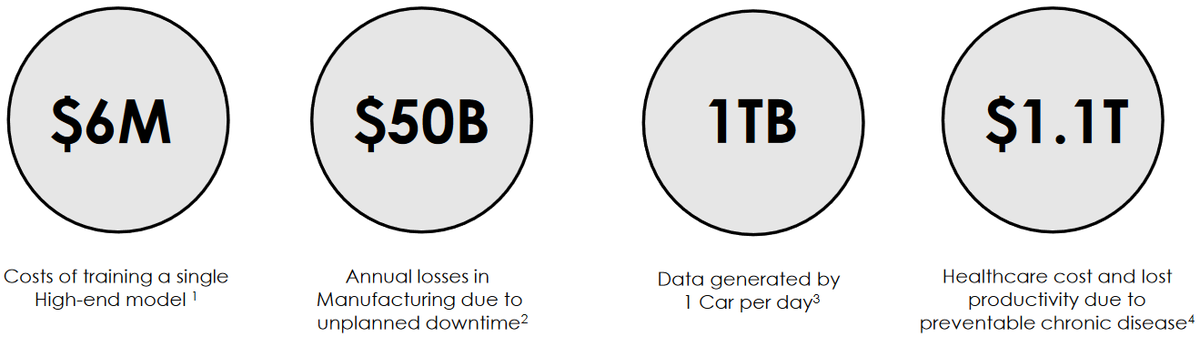

There’s an ever-increasing requirement to push neural processing capability into edge devices. There are also a bunch of considerations to… well… consider. It’s said that big problems lead to big opportunities, and we certainly have some big problems. For example, primary training is performed in the cloud and—according to IEEE Spectrum—it can cost $6M to train a single high-end model, so you don’t want to have to go back to ground zero and retrain your models if you can help it.

Big problems lead to a big opportunity (Source: BrainChip)

Meanwhile, according to Deloitte, the annual losses in manufacturing due to unexpected downtime are $50B in the US alone. This could be dramatically reduced with neural processing on the edge being used in a predictive maintenance role.

The folks at Forbes make the point that each of today’s smart cars can generate 1TB of data per day. Uploading all of this data into the cloud, as opposed to processing a lot of it at the edge, results in a ginormous glut of information on the interwebs.
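To put that 1TB per day into perspective, here’s a quick back-of-the-envelope calculation of the sustained uplink a car would need if it tried to ship everything to the cloud. This is only a sketch: it assumes a decimal terabyte (10^12 bytes) and a perfectly even upload spread over 24 hours, and the fleet size is a number I plucked out of thin air purely for illustration.

```python
# Back-of-the-envelope: average uplink needed to upload 1 TB per day.
BYTES_PER_DAY = 1e12             # assumption: 1 TB = 10^12 bytes
SECONDS_PER_DAY = 24 * 60 * 60   # 86,400 seconds


def sustained_mbps(bytes_per_day: float) -> float:
    """Average megabits per second needed to upload bytes_per_day in 24 hours."""
    return bytes_per_day * 8 / SECONDS_PER_DAY / 1e6


per_car = sustained_mbps(BYTES_PER_DAY)
print(f"One car: {per_car:.1f} Mbit/s, around the clock")

fleet_size = 1_000_000           # hypothetical fleet, for illustration only
fleet_tbps = per_car * fleet_size / 1e6   # 1 Tbit/s = 10^6 Mbit/s
print(f"{fleet_size:,} cars: {fleet_tbps:.1f} Tbit/s")
```

That works out to roughly 93 Mbit/s per car, every second of every day, which goes some way toward explaining why processing a lot of this data at the edge is so appealing.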

One factoid that blew my mind is that the CDC estimates that annual productivity losses in the US from people missing work due to preventable chronic diseases are in the ballpark of $1.1T.

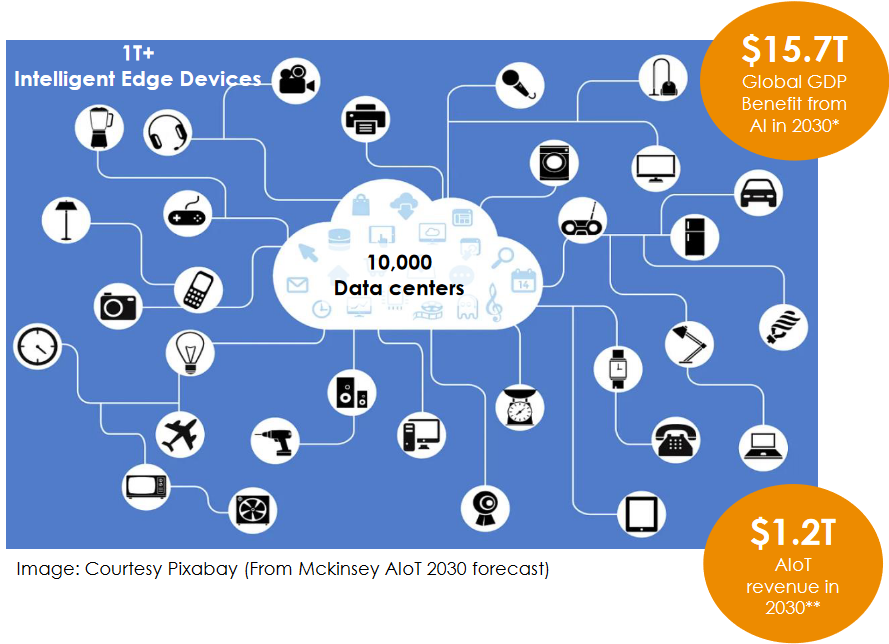

If big problems lead to big opportunities, then big opportunities come with some big challenges. Consider that the folks at PwC project that the impact of AI on global GDP by 2030 will be around $15T. Obviously, that’s not going to all come from the cloud—it’s reasonable to assume that the anticipated 1T+ intelligent edge devices may have something to do about it.

Big opportunities have big challenges (Source: BrainChip)

According to Forbes, AIoT revenue could be $1.2T in 2030. And on top of all this, the 2023 Edge AI Hardware Report from VDC Research estimates that the market for Edge AI hardware processors will be $35B by 2030. I tell you, I thought this year was setting out to be exciting, but now I have my sights firmly set on 2030.

So, a $35B market for edge AI processors. That certainly explains why the folks at BrainChip are bouncing around with excitement regarding their Akida 2.0 platform, which is a fully digital neuromorphic event-based processor. Once you’ve trained your original model in the cloud, this platform supports the unique ability to learn on-device without cloud retraining, thereby allowing developers to extend previously trained models. This new incarnation can also run large models like ResNet-50 completely on the neural processor, thereby freeing up the host processor.
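For anyone wondering what “extending a previously trained model without retraining” can look like in general terms, here’s a tiny sketch. I hasten to add that this is not BrainChip’s API or how Akida does it under the hood; it’s a generic nearest-prototype classifier sitting on top of a frozen feature extractor. The `embed` function, the class labels, and the sample values are all hypothetical stand-ins invented for this example.

```python
import math


def embed(x):
    """Hypothetical stand-in for a frozen, cloud-trained feature extractor."""
    return [x[0] + x[1], x[0] - x[1]]


class PrototypeClassifier:
    """Keeps one running-mean prototype per class; the extractor never changes."""

    def __init__(self):
        self.sums = {}    # label -> running sum of embeddings
        self.counts = {}  # label -> number of examples seen

    def learn(self, x, label):
        """Fold one labeled example into its class prototype (no backprop)."""
        e = embed(x)
        s = self.sums.setdefault(label, [0.0] * len(e))
        for i, v in enumerate(e):
            s[i] += v
        self.counts[label] = self.counts.get(label, 0) + 1

    def predict(self, x):
        """Return the label whose mean embedding is closest to embed(x)."""
        e = embed(x)

        def distance(label):
            n = self.counts[label]
            return math.dist(e, [v / n for v in self.sums[label]])

        return min(self.sums, key=distance)


clf = PrototypeClassifier()

# Classes the model "shipped" with (made-up sensor readings).
for sample in [(0.0, 0.1), (0.1, 0.0)]:
    clf.learn(sample, "idle")
for sample in [(5.0, 5.1), (5.1, 4.9)]:
    clf.learn(sample, "running")

# Later, in the field: add a brand-new class from just two examples.
for sample in [(5.0, -5.0), (4.8, -5.1)]:
    clf.learn(sample, "fault")

print(clf.predict((4.9, -4.9)))
```

The point of the sketch is that adding the new class touches only a couple of small running sums; the expensive, cloud-trained part stays exactly as it was.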

There’s so much more to tell about this tempting technology that I’m going to save the really good bits until my next column. In the meantime, as always, I’d be interested to hear your thoughts on all of this.

Also, Brain-Boggling.

Oooh — now I wish I’d used that as the title 🙂