I remember the heady thrill of the early 1980s when the unwashed masses (in the form of myself and my friends) first started to hear people talking about “expert systems.” These little rascals (the systems, not the people) were an early form of artificial intelligence (AI) that employed a knowledge base of facts and rules and an inference engine that could use the knowledge base to deduce new information.
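For anyone who never met one of these little rascals in person, the knowledge-base-plus-inference-engine idea can be sketched in a few lines of Python. This is only a toy illustration of forward chaining, which is one common inference strategy; the facts, rules, and the `forward_chain` function name are all made up for the example and are not taken from any particular expert system.

```python
def forward_chain(facts, rules):
    """Apply rules repeatedly until no new facts can be deduced.

    facts: iterable of strings already known to be true.
    rules: list of (premises, conclusion) pairs (hypothetical format).
    """
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires when all of its premises are known
            # and its conclusion is not yet in the knowledge base.
            if conclusion not in known and all(p in known for p in premises):
                known.add(conclusion)
                changed = True
    return known


# Toy knowledge base (invented purely for illustration).
facts = {"has_feathers", "lays_eggs"}
rules = [
    (("has_feathers", "lays_eggs"), "is_bird"),
    (("is_bird", "cannot_fly", "swims"), "is_penguin"),
    (("is_bird",), "has_wings"),
]

deduced = forward_chain(facts, rules) - facts
print(sorted(deduced))
```

The engine deduces `is_bird` and then `has_wings`, but not `is_penguin`, because two of that rule's premises are missing from the knowledge base.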

It’s not unfair to say that expert systems were pretty darned clever for their time. Unfortunately, their capabilities were over-hyped, while any problems associated with creating and maintaining their knowledge bases were largely glossed over. It was also unfortunate that scurrilous members of the marketing masses started to attach the label “artificial intelligence” to anything and everything with gusto and abandon, but with little regard for the facts (see also An Expert Systems History).

As a result, by the end of the 1990s, the term “artificial intelligence” left a bad taste in everyone’s mouths. To be honest, I’d largely pushed AI to the back of my mind until it — and its machine learning (ML) and deep learning (DL) cousins — unexpectedly burst out of academia into the real world circa 2015, give or take.

There are, of course, multiple enablers of this technology, including incredible developments in algorithms, frameworks, and processing power. As a result, AI is now all around us, with new applications popping up on a daily basis.

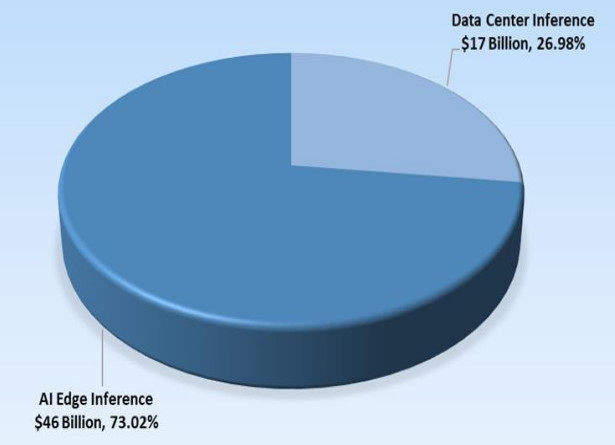

Initially, modern incarnations of AI were predominantly to be found basking in the cloud (i.e., on powerful servers in data centers). The cloud is still where a lot of the training of AI neural networks takes place, but inferencing — using trained neural network models to make predictions — is increasingly working its way to the edge where the data is generated. In fact, the edge is expected to account for almost three quarters of the inference market by 2025.

The predicted inference market in 2025 (Image source: BrainChip)

The thing about working on the edge is that you are almost invariably limited with respect to processing performance and power consumption. This matters because the majority of artificial neural networks (ANNs) in use today are of a type known as a convolutional neural network (CNN), which relies heavily on matrix multiplications and is therefore hungry for both. One way to look at a CNN is that every part of the network is active all of the time. Another type of ANN, known as a spiking neural network (SNN), is based on events. That is, the SNN’s neurons do not transmit information at each propagation cycle (as is the case with multi-layer CNNs). Rather, they transmit information only when incoming signals cross a specific threshold.
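To make the "only when incoming signals cross a specific threshold" idea a little more concrete, here is a minimal sketch of a leaky integrate-and-fire neuron, which is a common textbook model of a spiking neuron. It is an assumption on my part that this model is a fair stand-in; nothing here is claimed to reflect how BrainChip's silicon actually works, and the function name, `threshold`, and `leak` values are purely illustrative.

```python
def lif_spike_times(inputs, threshold=1.0, leak=0.5):
    """Return the time steps at which a leaky integrate-and-fire neuron spikes.

    inputs: sequence of input values, one per time step.
    threshold: membrane potential at which the neuron fires (illustrative).
    leak: fraction of the potential retained from one step to the next.
    """
    potential = 0.0
    spikes = []
    for t, value in enumerate(inputs):
        # Integrate the new input on top of the decayed potential.
        potential = potential * leak + value
        if potential >= threshold:
            spikes.append(t)   # the neuron transmits an event...
            potential = 0.0    # ...and resets
        # Below threshold, nothing is transmitted at this step.
    return spikes


inputs = [0.0, 0.5, 0.75, 0.0, 0.0, 1.5, 0.0, 0.25]
print(lif_spike_times(inputs))  # -> [2, 5]
```

A conventional neuron would pass a value downstream on all eight time steps, whereas this one generates just two events. Downstream neurons have work to do only when a spike arrives, which is the intuition behind the performance and power savings.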

The ins and outs (no pun intended) of all this are too complex to go into here. Suffice it to say that an SNN-based neuromorphic chip can process information in nanoseconds (as opposed to milliseconds in a human brain or a GPU) using only microwatts to milliwatts of power (as opposed to ~20 watts for the human brain and hundreds of watts for a GPU).

Now, if you are feeling unkind, you may be thinking something like, “Pull the other one, it’s got bells on.” Alternatively, if you are feeling a tad more charitable, you might be saying to yourself, “I’ve heard all of this before, and I’ve even seen demos, but I won’t believe anything until I’ve tried it for myself.” Either way, I have some news that will bring a smile to your lips, a song to your heart, and a glow to your day (you’re welcome).

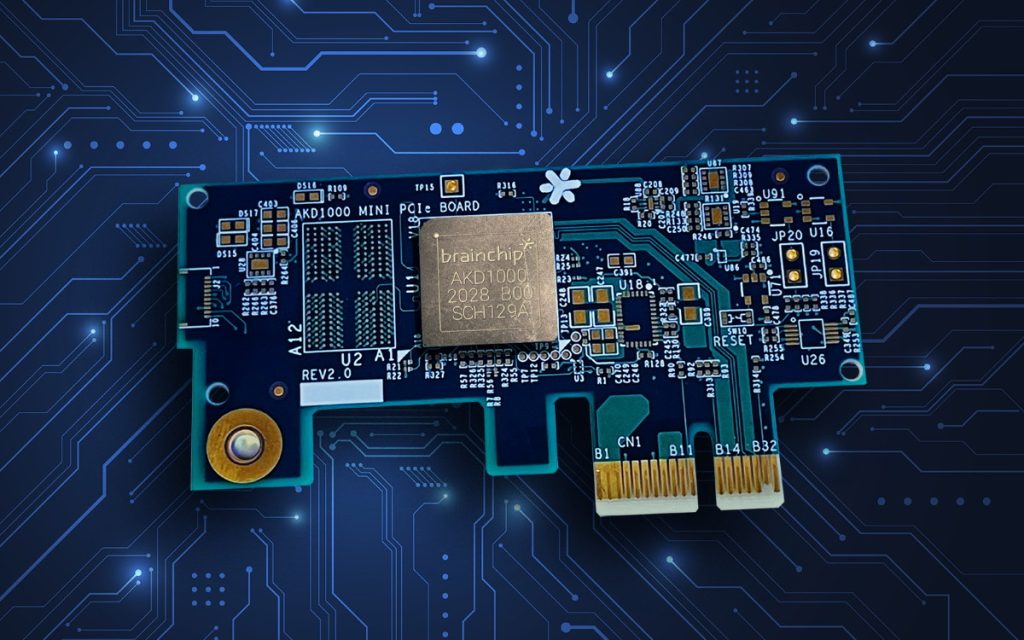

You may recall us talking about a company called BrainChip here on EEJournal over the past couple of years (see BrainChip Debuts Neuromorphic Chip and Neuromorphic Revolution by Kevin Morris, for example). Well, I was just chatting with Anil Mankar, who is the Chief Development Officer at BrainChip. Anil informed me that the folks at BrainChip are now taking orders for Mini PCIe boards flaunting their AKD1000 neuromorphic AIoT chips.

Mini PCIe board boasting an AKD1000 neuromorphic AIoT chip (Image source: BrainChip)

These boards and chips, along with BrainChip’s design environment, support all of the common AI frameworks, such as TensorFlow, and can translate trained CNNs into SNNs.

If you are a developer, you can plug an AKD1000-powered Mini PCIe board into your existing system to unlock capabilities for a wide array of edge AI applications, including Smart City, Smart Health, Smart Home, and Smart Transportation. Even better, BrainChip will also offer the full PCIe design layout files and the bill of materials (BOM) to system integrators and developers to enable them to build their own boards and implement AKD1000 chips in volume as stand-alone embedded accelerators or as co-processors. Just to give you a tempting teaser as to what’s possible, there are a bunch of videos on BrainChip’s YouTube channel, including the following examples:

- Tactile Sensing Demo

- Wine Tasting Demo

- In-Cabin Vehicle Demo

- Key Word Spotting Demo

- Edge-Based Learning Demo

These PCIe boards are immediately available for pre-order on the BrainChip website, with pricing starting at $499. As the guys and gals at BrainChip like to say: “We don’t make the sensors, we make them smart; we don’t add complexity, we eliminate it; we don’t waste time, we save it.” And, just to drive their point home, they close by saying, “We solve the tough Edge AI problems that others do not or cannot solve.” Well, far be it from me to argue with logic like that.

For myself, I’m now pondering how I can use one of these boards to detect and identify stimuli and control my animatronic robot head. Oh, I forgot that I haven’t told you about that yet (my bad). Fear not, I shall rend the veils asunder in a future column. In the meantime, do you have any thoughts you’d care to share of a neuromorphic nature?