“The sad thing about artificial intelligence is that it lacks artifice and therefore intelligence.” – Jean Baudrillard

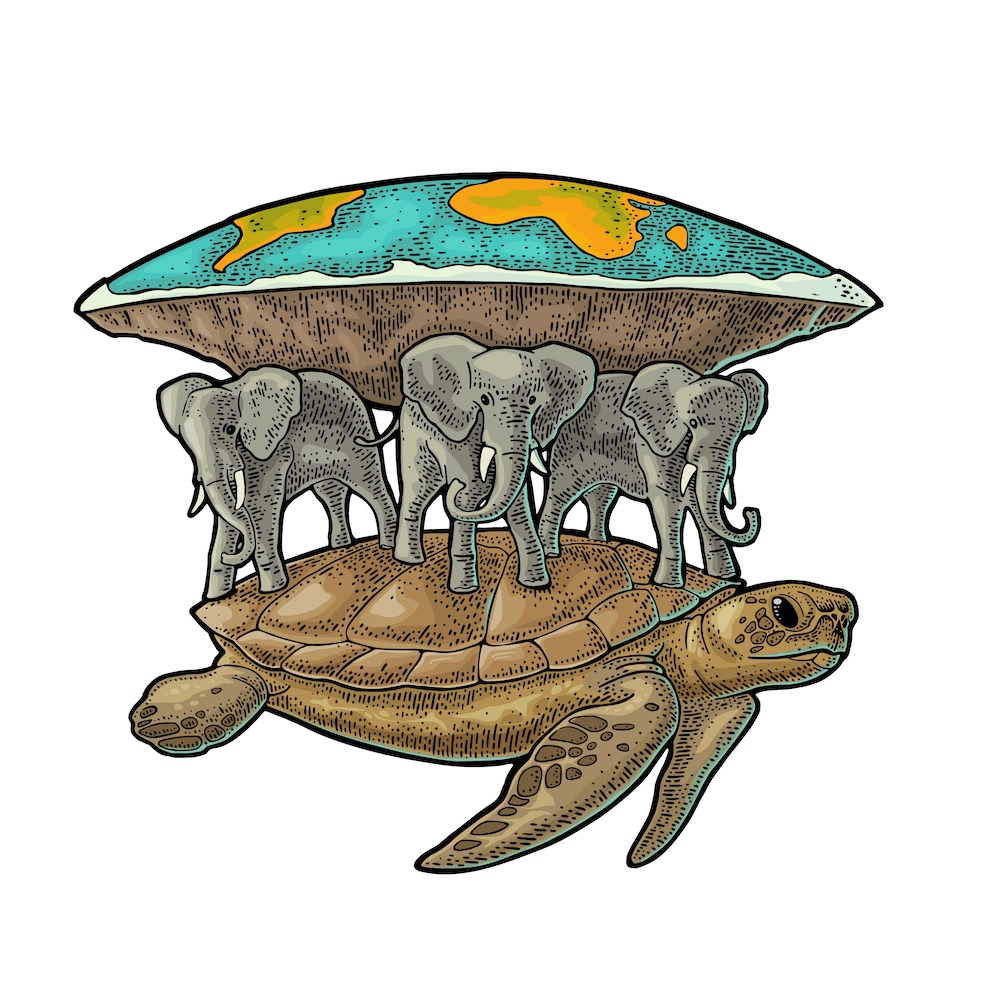

It’s turtles all the way down. That’s the takeaway from a deep dive into Esperanto’s upcoming AI chip, melodically named ET-SoC-1. It’s organized as layer upon layer of processor cores, memory blocks, and mesh networks as far as the eye can see. This thing scales better than a tuna.

Esperanto Technologies has been designing its ET-SoC-1 for a few years now, and the company has yet to receive first silicon, but the project just got its first public reveal. Head honchos Dave Ditzel (founder) and Art Swift (CEO) are like happy parents excited about their new baby. Except this isn’t the first rodeo for either one of them. Ditzel is kind of a big deal in the microprocessor world, having been a Vice President at Intel, the founder of x86 clone maker Transmeta, CTO of Sun’s SPARC business, and a UC Berkeley grad who earned his master’s degree under a certain Dr. David Patterson, Mister RISC-V Himself. Swift wears the necktie in this relationship but got his EE degree from Penn State before heading up the prpl Foundation and the RISC-V Foundation’s marketing department, then becoming CEO of Wave Computing and finally joining Esperanto. Nurturing new processors is what these guys do.

The 100-person company thinks we’re going about AI all wrong. First, comparing one vendor’s AI chip to another’s AI chip is pointless. The right way is to look at AI capabilities per watt, not per chip. Watts matter. Chips are just a packaging option.

Second, programmability is key. “If you give an AI problem to a hardware guy, they’ll want to custom-design something to optimize the inner loop. But that’s going to be hard to program,” says Ditzel. “A general-purpose ISA [instruction-set architecture] is good at outer loops, for very little extra overhead.”

Esperanto has combined the special and the generic, the custom with the open-sourced. Its AI-acceleration hardware is custom, but it’s grafted onto the generic RISC-V architecture. That RISC-V undercarriage makes the ET-SoC-1 chip easy to program, says Ditzel, while the custom accelerators make it worthwhile to do so. The whole chip is designed with low power consumption in mind, so that it delivers 30× to 50× better performance than “incumbent solutions” while also boasting 100× better power efficiency. That’s according to Esperanto’s simulations; real silicon is still a few months away.

In these comparisons, the “incumbent solutions” are x86 chips from Intel and AMD, although Esperanto never explicitly says so. Esperanto offered no comparisons to AI chips from other vendors, such as Groq, Mythic, or Swift’s previous employer, the nearly defunct Wave Computing.

Because so many machine learning tasks are “embarrassingly parallel,” in Ditzel’s words, a massively parallel design for ET-SoC-1 seemed the right way to go. And it certainly is that. The chip has 1093 processors on it, all based on RISC-V. The overwhelming majority (1088) of those are so-called ET-Minion processors, being babysat by four ET-Maxion processors and one service processor (also RISC-V based).

The Minions are clustered into groups of eight, called a “neighborhood.” Four neighborhoods make a “shire,” and a 6×6 array of shires makes one ET-SoC-1 chip. (One shire is populated with the four ET-Maxion cores and one with PCIe logic, which is why the total isn’t 1152.) The whole thing weighs in at 23.8 billion transistors.
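The core count is easy to check by hand, but here is the same arithmetic as a minimal Python sketch. Every number comes from the paragraphs above; the variable names are mine, chosen for illustration.

```python
# Core-count arithmetic for one ET-SoC-1, using the figures quoted in the article.
MINIONS_PER_NEIGHBORHOOD = 8
NEIGHBORHOODS_PER_SHIRE = 4
SHIRE_ROWS, SHIRE_COLS = 6, 6
NON_MINION_SHIRES = 2    # one holds the four ET-Maxions, one holds PCIe logic
MAXION_CORES = 4
SERVICE_PROCESSORS = 1

minions_per_shire = MINIONS_PER_NEIGHBORHOOD * NEIGHBORHOODS_PER_SHIRE
total_shires = SHIRE_ROWS * SHIRE_COLS

# What the count would be if every shire were filled with Minions.
fully_populated = total_shires * minions_per_shire

# What the chip actually carries.
minion_cores = (total_shires - NON_MINION_SHIRES) * minions_per_shire
all_riscv_cores = minion_cores + MAXION_CORES + SERVICE_PROCESSORS

print(minions_per_shire, fully_populated, minion_cores, all_riscv_cores)
# prints: 32 1152 1088 1093
```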

But it keeps going. Each ET-SoC-1 chip is designed to be clustered with similar chips, with up to six chips on a standard plug-in card, along with memory and support logic. Those cards can be combined into “sleds,” the sleds go into “cubbies,” eight cubbies fit into a standard 19-inch rack, and of course, thousands of racks line the halls of your typical datacenter. You almost expect Esperanto to lay out zoning commission plans for scaling out the datacenter buildings.

Scalability is a big deal to these guys.

Zooming back to the beginning, each ET-Minion core starts with a fairly simple implementation of the RISC-V pipeline combined with a massive AI accelerator. It’s designed for moderate clock speeds (in the neighborhood of 1 GHz) at the lowest voltage possible. Esperanto’s initial silicon is being fabricated in TSMC’s 7nm process, and it’s designed to operate at the low end of the voltage range, with nearly everything on the same voltage plane, even the caches. “Transistors are 5×–10× more efficient at low voltage, but not near threshold voltage. As architects, we know how to make up for reduced speed,” says Ditzel, defending his chip’s relatively pedestrian operating frequency. “Seven nanometers is different from other nodes. The wires are resistive, and high-frequency operation needs lots of buffers. At lower speed and voltage, the wires back out of the way. A gigahertz is about right. Some customers want less than a gigahertz. Besides, if [the application] is memory bound, high frequency doesn’t help performance.”

Each ET-Minion’s CPU is a single-issue, dual-threaded, in-order implementation. Combined with that is a custom vector/tensor unit with both a 256-bit floating-point half and a 512-bit integer half. The FP half can perform a single 256-bit operation per cycle or (more likely) 16 single-precision (32-bit) operations or 32 half-precision (16-bit) operations. The integer side can similarly do either one 512-bit operation or 128 byte-wide operations every cycle.
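Those per-cycle figures look a factor of two too large if you just divide unit width by element width (256 bits holds only eight 32-bit values). One reading that reconciles them is that each lane performs a multiply-add counted as two operations. That is my assumption, not something the company stated here. A small sketch under that assumption, with a hypothetical helper name:

```python
# Assumption (not confirmed by the source): each lane does a multiply-add,
# and that multiply-add is counted as two operations.
OPS_PER_MULTIPLY_ADD = 2

def ops_per_cycle(unit_bits: int, element_bits: int) -> int:
    """Operations per cycle for a vector unit of the given width."""
    lanes = unit_bits // element_bits   # how many elements fit side by side
    return lanes * OPS_PER_MULTIPLY_ADD

fp32 = ops_per_cycle(256, 32)   # 8 lanes
fp16 = ops_per_cycle(256, 16)   # 16 lanes
int8 = ops_per_cycle(512, 8)    # 64 lanes

print(fp32, fp16, int8)
# prints: 16 32 128
```

Under that counting rule, all three quoted figures fall out of the unit widths.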

Ditzel and Swift didn’t detail exactly what those operations can be, other than to hint that they can be quite long and complex. “Tensor instructions can run for hundreds of cycles,” and the RISC-V pipeline sleeps until each one completes, saving power. “Programmers think it’s RISC-V, but 99.9% of the time is spent on tensor instructions.”

Theoretically, each ET-Minion can deliver 128 GOPS/GHz, according to the company. Stated another way, that’s 128 operations per cycle. And that’s just one of the ET-Minion cores, of which there are 1088 on each chip.
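Multiplying that out gives a theoretical ceiling for the whole chip. The sketch below assumes every Minion issues a full-width byte operation on every cycle, which no real workload will sustain, and it treats the roughly 1 GHz clock as a parameter rather than a specification. The function name is hypothetical.

```python
# Peak (not sustained) throughput for one chip, from the article's figures.
OPS_PER_CYCLE_PER_MINION = 128   # the quoted 128 GOPS/GHz, restated per cycle
MINION_CORES = 1088

chip_ops_per_cycle = OPS_PER_CYCLE_PER_MINION * MINION_CORES

def peak_tops(clock_ghz: float) -> float:
    """Theoretical peak in tera-operations per second at a given clock."""
    giga_ops = chip_ops_per_cycle * clock_ghz   # ops/cycle * Gcycles/s = GOPS
    return giga_ops / 1000.0                    # GOPS -> TOPS

print(chip_ops_per_cycle)         # prints: 139264
print(round(peak_tops(1.0), 1))   # prints: 139.3
```

Customers who run below a gigahertz scale the number down linearly: `peak_tops(0.5)` is about 69.6.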

Tiling lots of specialty cores is one thing; getting them to communicate in a meaningful way is another. Ditzel agrees. “Most of the work and cleverness here is in the memory system,” he says. “Multiply-add is not the hard part. The chip has a real memory system, with three levels of caches, etc. Software people look at it and say, ‘I know how to program that!’”

Caches appear on each ET-Minion core, in each neighborhood, and in each shire. Each cache can optionally be configured as scratchpad RAM where that suits the workload. The entire thing is bound together by Esperanto’s own mesh network, and the hardware implements several synchronization primitives, including atomics, barriers, and IPI (inter-processor interrupt) support. Interfaces to the outside world are through PCIe Gen 4 and LPDDR4x.

The four ET-Maxion processors, in contrast, are high-performance out-of-order implementations that are meant to act as the “host” processors in a standalone system. Datacenter customers are likely to prefer an x86 processor from Intel or AMD, in which case, the Maxions can step aside (or just be ignored).

Esperanto says the chip’s “typical operating point” is under 20 watts, which seems remarkable for such a massively provisioned device. Either “typical” conditions are atypical or Ditzel’s design team succeeded spectacularly in their goal of delivering the best AI performance per watt. For comparison, a newly minted laptop processor like Intel’s Core i7-1068 (10th generation Sunny Cove/Ice Lake-U microarchitecture) has a TDP of 28W. And that’s for just four x86 cores and a GPU. Some of Intel’s low-power processors dip below 15W or 20W TDP, but the company’s desktop and server processors, with which Esperanto would compete, inhabit the 100–200W realm. That’s an order of magnitude difference in Esperanto’s favor even before accounting for the (presumed) gains in performance.
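To put a number on “order of magnitude,” here is the ratio worked out in Python. Bear in mind that a TDP and a “typical operating point” are not the same measurement, and that 20 W is an upper bound on Esperanto’s claim, so treat this as a rough sketch.

```python
# Power ratio between the quoted server-CPU range and the ET-SoC-1 claim.
ET_SOC_1_TYPICAL_WATTS = 20        # "under 20 watts", so an upper bound
SERVER_CPU_TDP_WATTS = (100, 200)  # the quoted desktop/server range

ratios = [tdp / ET_SOC_1_TYPICAL_WATTS for tdp in SERVER_CPU_TDP_WATTS]

print(ratios)
# prints: [5.0, 10.0]
```

So the gap is somewhere between 5× and 10× on power alone, at or just under a full order of magnitude.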

Benchmarking machine-learning workloads is a whole different ball game compared to benchmarking traditional CPUs (which is hard enough). It’s hard to know how fast and effective any AI processor is, much less how it compares in inferences/watt, GOPS/GHz, or furlongs/fortnight. Based on its all-star cast of seasoned professionals, though, I’d say the oddsmakers are laying bets in Esperanto’s favor. Let’s see what the spring season brings.
