I was just perusing and pondering the official definition of extrasensory perception (ESP). This is also referred to as the “sixth sense” based on the fact that most people think we (humans) are equipped with only five of the little rascals: sight, hearing, touch, taste, and smell.

As an aside, we actually have many more senses at our disposal, including thermoception (the sense by which we perceive temperature*), nociception (the sense that allows us to perceive pain from our skin, joints, and internal organs), proprioception (the sense of the relative position of the parts of one’s body), and equilibrioception (the part of our sense of balance provided by the three tubes in the inner ear). (*Even if you are blindfolded, if you hold your hand close to something hot, you can feel the heat in the form of infrared radiation. Contrariwise, if you hold your hand over something cold, you can detect the lack of heat.) But we digress…

Returning to ESP, the official definition goes something along the lines of, “claimed reception of information not gained through the recognized physical senses, but rather sensed with the mind.” Alternatively, the Britannica website defines ESP as “perception that occurs independently of the known sensory processes.” Typical examples of ESP include clairvoyance (supernormal awareness of objects or events not necessarily known to others) and precognition (knowledge of the future). Actually, I may have a touch of precognition myself, because I can occasionally see a limited way into the future. For example, if my wife (Gina the Gorgeous) ever discerns the true purpose of my prognostication engine, I foresee that I will no longer need it to predict her mood of the moment.

What about senses we do not have, but that occur in other animals, such as the fact that some creatures can detect the Earth’s magnetic field, while others can perceive natural electrical stimuli? Wouldn’t it be appropriate to class these abilities as being extra-sensory perception as compared to our own sensory experience?

In the case of humans, one of our most powerful and pervasive senses is that of sight. As we discussed in an earlier column — Are TOM Displays the Future of Consumer AR? — “Eighty to eighty-five percent of our perception, learning, cognition, and activities are mediated through vision,” and “More than 50 percent of the cortex, the surface of the brain, is devoted to processing visual information.”

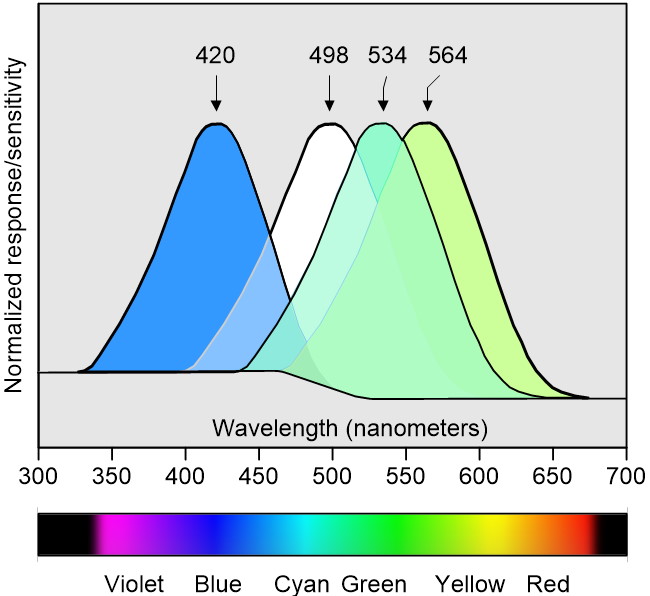

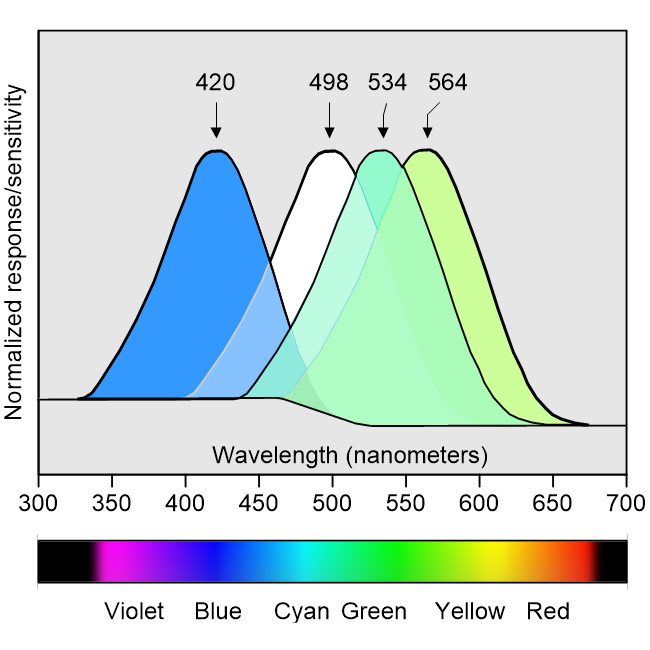

In my Evolution of Color Vision paper, I make mention of the fact that the typical human eye has three different types of cone photoreceptors that require bright light, that let us perceive different colors, and that provide what is known as photopic vision (the sensitivities of our cones peak at wavelengths of 420, 534, and 564 nm). We also have rod photoreceptors that are extremely sensitive in low levels of light, that cannot distinguish different colors, and that provide what is known as scotopic vision (the sensitivity of our rods peaks at 498 nm).

Typical humans have three types of cone photoreceptors (think “color”) along with rod photoreceptors (think “black-and-white”) (Image source: Max Maxfield)

There are several things to note about this illustration, including the fact that I’m rather proud of this little scamp, so please feel free to say nice things about it. Also, I’ve normalized the curves on the vertical axis (i.e., I’ve drawn them such that they all have the same maximum height). In reality, rod cells are much more sensitive than cone cells, to the extent that attempting to plot their sensitivities on the same chart would result in a single sharp peak for the rods alongside three slight bumps for the cones. The bottom line is that rods and cones simply don’t play together in the same lighting conditions. Cones require bright light to function, while rods are saturated in a bright light environment. By comparison, in the dim lighting conditions when rods come into their own, cones shut down and provide little or no useful information.

As another aside, people who don’t believe in evolution often use the human eye as an example of something so sophisticated that it must have been created by an intelligent designer. I think it’s fair to say that I count my eyes amongst my favorite organs, but it also needs to be acknowledged that there are some fundamental design flaws that wouldn’t be there had engineers been in charge of the project.

When it comes to how something as awesome as the eye could have evolved, I heartily recommend two books: Life’s Ratchet: How Molecular Machines Extract Order from Chaos by Peter M. Hoffmann and Wetware: A Computer in Every Living Cell by Dennis Bray. Also, in this video, the British evolutionary biologist Richard Dawkins provides a nice overview of the possible evolutionary development path of eyes in general.

I don’t know about you, but I’m tremendously impressed with the wealth of information provided to me by my eyes. From one point of view (no pun intended), I’m riding the crest of the wave that represents the current peak of human evolution, as are you of course, but this is my column, so let’s stay focused (again, no pun intended) on me. On the other hand, I must admit to having been a tad chagrined when I first discovered that the humble mantis shrimp (which isn’t actually a shrimp or a mantid) has the most complex eye known in the animal kingdom. As I noted in my aforementioned Evolution of Color Vision paper: “The intricate details of their visual systems (three different regions in each eye, independent motion of each eye, trinocular vision, and so forth) are too many and varied to go into here. Suffice it to say that scientists have discovered some species of mantis shrimp with sixteen different types of photoreceptors: eight for light in (what we regard as being) the visible portion of the spectrum, four for ultraviolet light, and four for analyzing polarized light. In fact, it is said that in the ultraviolet alone, these little rapscallions have the same capability that humans have in normal light.”

What? Sixteen different types of photoreceptors? Are you seriously telling me that this doesn’t in any way count as extrasensory perception?

The reason I’m waffling on about all of this here (yes, of course there’s a reason) is that I was just chatting with Ralf Muenster, who is Vice President of Business Development and Marketing at SiLC Technologies. The tagline on SiLC’s website says, “Helping Machines to See Like Humans.” As we’ll see, however, in some ways they are providing sensing capabilities that supersede those offered by traditional vision sensors, thereby providing the machine equivalent of extrasensory perception (Ha! Unlike you, I had every faith that — much like one of my mother’s tortuous tales — I would eventually bring this point home).

Ralf pointed out that today’s AI-based perception systems for machines like robots and automobiles predominantly feature cameras (sometimes single camera modules, sometimes dual module configurations to provide binocular vision). Ralf also noted that — much like the human eye — traditional camera vision techniques look only at the intensity of the photons impinging on their sensors.

These vision systems, which are great for things like detecting and recognizing road markings and signs, are typically augmented by some form of lidar and/or radar capability. The interesting thing to me was when Ralf explained that the lidar systems that are currently used in automotive and robotic applications are predominantly based on a time-of-flight (TOF) approach in which they generate powerful pulses of light and measure the round-trip time of any reflections. These systems use massive amounts of power (say 120 watts), and the only reason they are considered to be “eye-safe” is that the pulses they generate are so short. Also, when they see a pulse of reflected light, they don’t know if it’s their pulse or someone else’s. And, just to increase the fun and frivolity, since light travels approximately 1 foot per nanosecond and the pulse has to make a round trip, every nanosecond you are off may equate to being half a foot closer to a potential problem.
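To put some numbers on the time-of-flight arithmetic, here is a minimal Python sketch. The function names are my own inventions for illustration and don't come from any real lidar product. The one thing to keep in mind is that the pulse travels out and back, so the range is half the round-trip distance, which means a timing error of 1 ns shifts the computed range by roughly 15 cm (about half a foot).

```python
# Illustrative sketch only: function names are hypothetical.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def tof_range_m(round_trip_s: float) -> float:
    """Range to the target: half the distance light covers in the round trip."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def range_error_m(timing_error_s: float) -> float:
    """Range error caused by a given error in the measured round-trip time."""
    return SPEED_OF_LIGHT_M_PER_S * timing_error_s / 2.0


if __name__ == "__main__":
    # A reflection that returns after 667 ns came from roughly 100 m away.
    print(f"Range for a 667 ns round trip: {tof_range_m(667e-9):.1f} m")
    # Being off by 1 ns moves the target by about 15 cm.
    print(f"Range error for 1 ns: {range_error_m(1e-9) * 100:.1f} cm")
```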

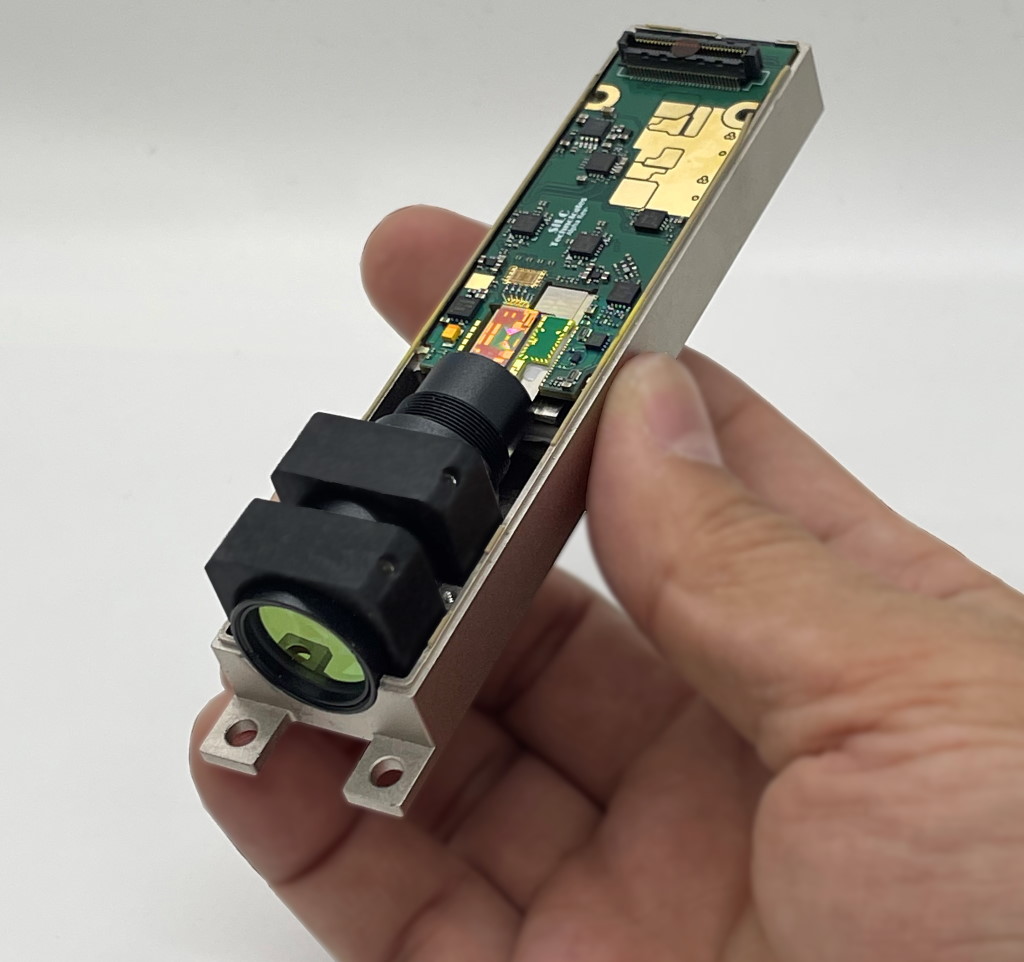

This was the point when Ralf playfully whipped out his Eyeonic vision sensor, in a manner of speaking. The Eyeonic features a frequency-modulated continuous-wave (FMCW) lidar. Indeed, Ralf says that it’s the most compact FMCW lidar ever made and that it’s multiple generations ahead of any competitor with respect to the level of optical integration it deploys.

The Eyeonic vision sensor (Image source: SiLC)

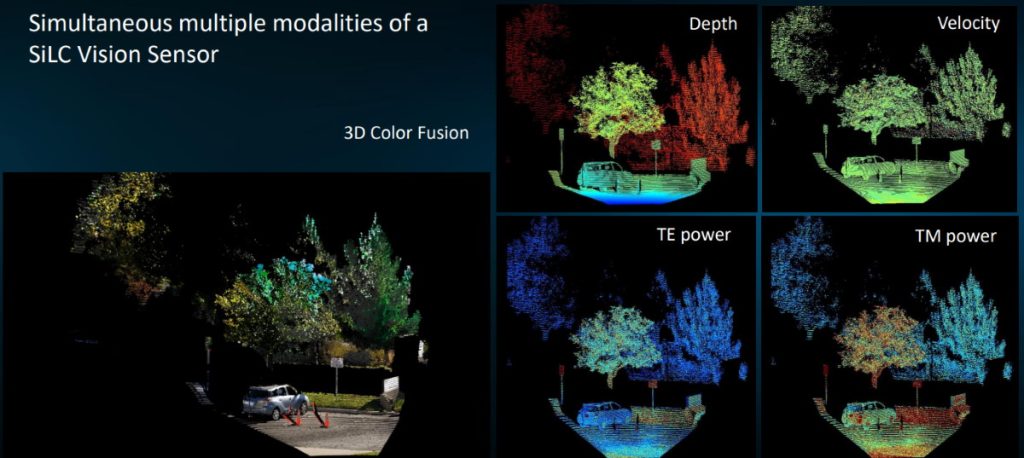

But what exactly is an FMCW lidar when it’s at home? Well, first of all, the Eyeonic sends out a continuous, frequency-modulated laser beam at a much lower intensity than its pulsed TOF cousins. It then mixes any reflected light with a local-oscillator copy of the light generated by its coherent laser transmitter. By means of some extremely clever digital signal processing (DSP), it’s possible to extract instantaneous depth, velocity, and polarization-dependent intensity while remaining fully immune to any environmental and multi-user interference. Furthermore, the combination of polarization and wavelength information facilitates surface analysis and material identification.
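For anyone wondering how mixing the reflected light with the transmitted light can yield both depth and velocity, here is a minimal Python sketch of the textbook FMCW calculation. I should stress that this is a generic illustration and not SiLC's actual signal processing. It assumes a triangular (up-then-down) frequency sweep, and the function names, the chirp slope (1 GHz swept in 10 µs), and the 1550 nm wavelength are all values I've picked purely for the example. The idea is that the beat frequency contains a range term and a Doppler term, and these combine with opposite signs on the up-sweep and the down-sweep, so the two can be separated.

```python
# Generic triangular-chirp FMCW sketch; all parameter values are assumptions.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0

CHIRP_SLOPE_HZ_PER_S = 1e9 / 10e-6  # hypothetical: 1 GHz swept in 10 microseconds
WAVELENGTH_M = 1550e-9              # hypothetical laser wavelength


def beat_frequencies(range_m, velocity_m_per_s,
                     slope=CHIRP_SLOPE_HZ_PER_S, wavelength=WAVELENGTH_M):
    """Return (f_up, f_down) beat frequencies in Hz for one target.

    Convention: positive velocity means the target is approaching.
    Assumes the range term exceeds the Doppler term, so both beats are positive.
    """
    f_range = 2.0 * range_m * slope / SPEED_OF_LIGHT_M_PER_S  # from round-trip delay
    f_doppler = 2.0 * velocity_m_per_s / wavelength           # from target motion
    return f_range - f_doppler, f_range + f_doppler


def range_and_velocity(f_up, f_down,
                       slope=CHIRP_SLOPE_HZ_PER_S, wavelength=WAVELENGTH_M):
    """Invert the two beat frequencies back into (range_m, velocity_m_per_s)."""
    f_range = (f_up + f_down) / 2.0     # Doppler cancels in the sum
    f_doppler = (f_down - f_up) / 2.0   # range cancels in the difference
    range_m = SPEED_OF_LIGHT_M_PER_S * f_range / (2.0 * slope)
    velocity_m_per_s = wavelength * f_doppler / 2.0
    return range_m, velocity_m_per_s


if __name__ == "__main__":
    f_up, f_down = beat_frequencies(range_m=100.0, velocity_m_per_s=20.0)
    print(f"Beat frequencies: {f_up / 1e6:.1f} MHz up, {f_down / 1e6:.1f} MHz down")
    print("Recovered (range, velocity):", range_and_velocity(f_up, f_down))
```

Note that the velocity falls out of a single up/down sweep pair rather than from comparing successive frames, which is why this kind of sensor can report motion on a per-measurement basis.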

As yet one more aside, the material identification aspect of this caused me to consider creating robots that could target people sporting 1970s-style polyester golf pants and teach them the error of their ways, but then I decided that my dear old mother would be dismayed by this train of thought, so I promptly un-thought it.

Modalities of Eyeonic vision sensors (Image source: SiLC)

It’s difficult to overstate the importance of the velocity data provided by the Eyeonic vision sensor because the ability to detect motion and velocity is critical when it comes to performing visual cognition. By making available direct instantaneous motion/velocity information on a per-pixel basis, the Eyeonic vision sensor facilitates the rapid detection and tracking of objects of interest.

Of course, the FMCW-based Eyeonic vision sensor is not going to replace traditional camera vision sensors, which will continue to evolve in terms of resolution and the artificial intelligence systems employing them. On the other hand, I think that traditional TOF lidar-based systems should start looking for the “Exit” door. I also think that the combination of camera-based vision systems with FMCW-based vision systems is going to fling open the doors to new capabilities and possibilities. I cannot wait! What say you?

Max, what’s the significance of the change in the baseline of your photoreceptor sensitivity plot—that kink between ~470 and 575 nm?

Wow, well spotted! Do you know that you are the first person who ever asked me that? The short answer is that I drew it this way because I can’t stop over-engineering everything. The longer answer is that, even though I say I normalized the Y-axis, I was trying to indicate that the human eye is most sensitive to green in low-light conditions. Have you noticed that if you are out and about when dusk falls, just before everything devolves into different shades of gray, the last color you see is a hint of green?

Actually, my first response is a bit of a simplification — there’s a better answer on Quora (https://www.quora.com/What-is-the-most-visible-color-in-the-dark) that says:

The most visible color in the dark is traffic-light green, or 500–505 nm, which is perceptually halfway between green and blue-green. (For traffic lights, they do that on purpose so that people with red-green colorblindness can more easily see the difference between red and green.)

The rod-cell opsin (rhodopsin) has a peak sensitivity of about 495 nm, but gets scooted up to about 505 nm because of the yellowness of the macular pigments and the lens.

When seeing with both rods and cones (in twilight vision) the rod cells act as green-blue-green color receptors through the rod-cone opponent neurons. However, when rod cells alone are stimulated, things get complicated.

Our rod cells are incapable of operating in pure grayscale mode, just because of the way they’re wired. As a result, everything we see with our night vision will have a tinge of color, usually green-blue-green. However, depending on the visual environment, it can be other colors, such as slightly red, yellow, green, or blue. The result is still monochrome, just with faint color where gray would normally go.

The most visible color in the daytime is optic yellow, also known as tennis-ball green (555 nm).

Your humor and puns make the article even more interesting to read, and I completely agree with you that AI and robotic applications can definitely improve at a great pace by utilizing FMCW-based vision systems.

Hi Harvansh — thank you so much for your kind words — I for one cannot wait to see how these things develop in the next few years.