I’ve said it before and I’ll say it again: I think that one of the biggest game-changers heading our way is the combination of artificial intelligence (AI) and augmented reality (AR). Together, these two tantalizing technologies are going to change the ways in which we interact with our systems, the world, and each other.

Before we plunge into the fray with wild abandon — which really is the only way to plunge into a fray (plunging into a fray with meek forbearance, for example, is almost certainly fated to end in tears) — for those who need a quick refresher, may I make so bold (I fear I may have watched Sense and Sensibility one time too many) as to remind you about my What the FAQ are AI, ANNs, ML, DL, and DNNs? and What the FAQ are VR, MR, AR, DR, AV, and HR? columns?

As I said in the latter of these two pieces: “[AR] refers to an interactive experience of a real-world environment in which the objects that reside in the real world are enhanced by computer-generated perceptual information, sometimes across multiple sensory modalities, including visual, auditory, haptic, somatosensory, and olfactory.”

Of these, the visual modality is arguably the most important when we consider that the vast majority of information that we take in from the outside world is via our eyes. “Exactly how much does ‘the vast majority’ mean?” I hear you cry. Well, to be honest, this is a bit of a tricky question because (a) how does one measure something like this? and (b) different sources tell us different things. According to this Vision is our Dominant Sense column on Brainline.org, for example: “Research estimates that eighty to eighty-five percent of our perception, learning, cognition, and activities are mediated through vision.” Meanwhile, in The Mind’s Eye article on the University of Rochester Review, the William G. Allyn Professor of Medical Optics, David Williams, is quoted as saying: “More than 50 percent of the cortex, the surface of the brain, is devoted to processing visual information.” And, just to add a hint of a sniff of a whiff of confusion, from this Perception of Vision column, we are informed that: “[…] it is now estimated that visual perception is 80 percent memory and 20 percent input through the eyes. In other words, sensory information is not transmitted to the brain; it comes from it.” None of these points of view actually contradicts the others — they are focusing on different facets of the visual experience — they do, however, leave us wondering exactly what it all means.

But we digress… When a lot of people think about AR, they picture real-world scenes being overlaid with scrolling textual information, as seen through the eyes of the cyborg assassin played by Arnold Alois Schwarzenegger in the 1984 American science fiction action film The Terminator. In reality, in addition to textual information, real-world scenes can also be augmented by graphical elements.

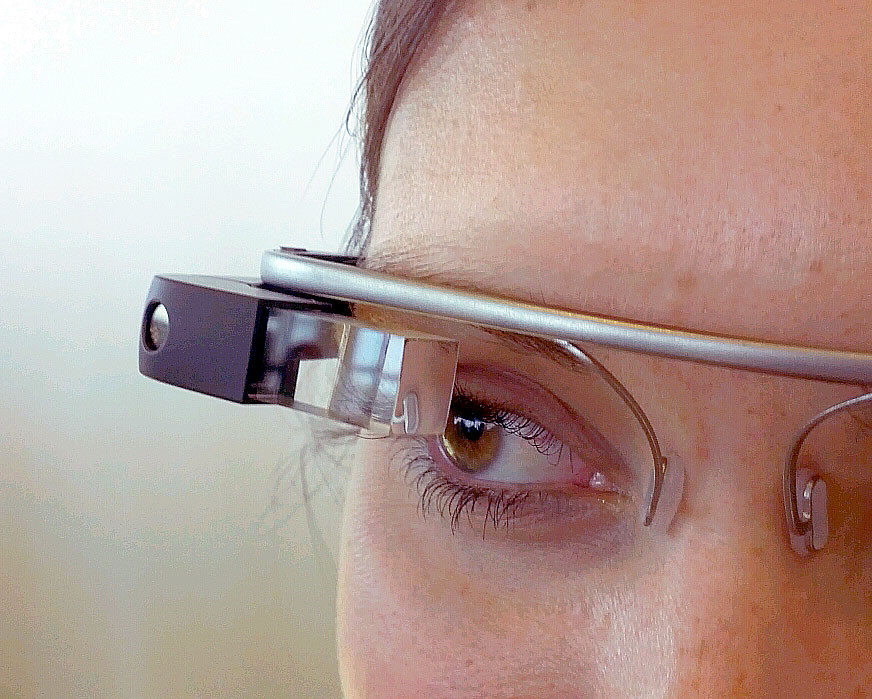

The next point to ponder is how all of this information is going to be offered to our orbs. One of the original contenders was Google’s Glass, an early prototype of which is seen below.

A Glass prototype as seen at Google I/O in June 2012

(Image source: Wikipedia/Antonio Zugaldia)

A lot of people assume that Glass was a failure that has faded into obscurity, but the current Enterprise Edition 2 version is going strong. The way Glass is currently being presented is as “a small, lightweight wearable computer with a transparent display for hands-free work.” As far as I’m concerned, however, Glass does not provide AR in a form for which I yearn.

At the other end of the spectrum, we might choose to have our AR presented to us by means of virtual reality (VR) style headsets as illustrated below.

AR presented by VR-style headsets.

Now, you might think that, although this VR-style headset would be OK for a work environment, it would be a tad too outré for casual wear. For example, you’d never be caught sporting this sort of headgear whilst strolling around your local supermarket. Personally, I beg to differ. I agree that you probably wouldn’t want to be the first person on your street to start flaunting this form of headset outdoors. On the other hand, I would argue that if 80% of the people you saw strolling around were thus equipped, it wouldn’t take long before you started to worry about all the information with which they were feasting their eyes and to which you had no access.

Mayhap a slightly more acceptable solution on the style-front would be something like a Microsoft HoloLens, although — costing between $3,500 and $5,000 depending on the accoutrements — it has to be acknowledged that these are a mite expensive for casual use.

The ideal solution, of course, would be some form of display mechanism that’s relatively unobtrusive while being affordable enough to support widespread deployment for consumer use.

The reason I’m waffling on about all of this here is that I was just chatting with Phil Garfinkle, who is President and CEO of NewSight Reality (NSR). Phil started by explaining that most of today’s smart glasses and AR solutions employ waveguide or projection-based approaches. He added that they use holographic coatings, diffractive or reflective waveguides, prisms, or Fresnel surfaces as combiners, and that they use things like lasers or LCoS/DLP for light sources (where LCoS stands for Liquid Crystal on Silicon and DLP stands for Digital Light Processing, which works by reflecting light off microscopic, mirrored panels). My head hurts.

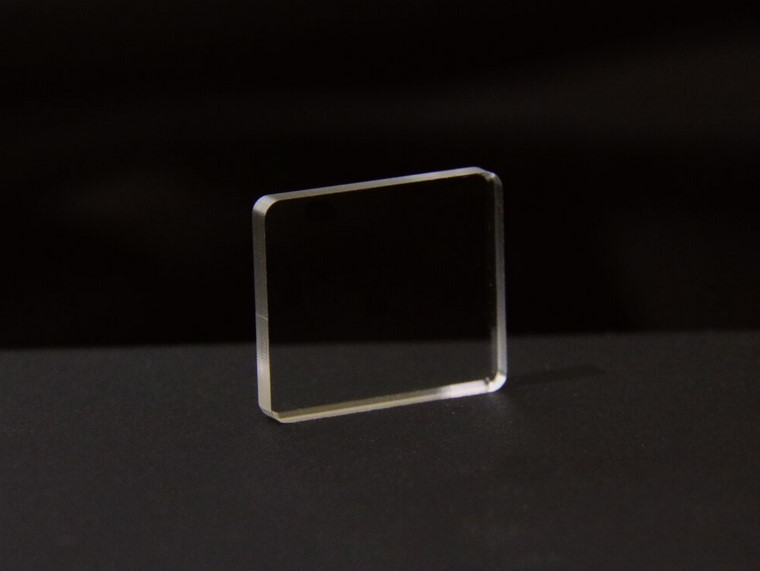

By comparison, the bodacious boffins at NSR have come up with something they call a TOM, which stands for Transparent Optical Module.

Say hello to a TOM (Image source: NewSight Reality)

Only 1 to 2 mm thick, the TOM is a multilayer, hermetically sealed transparent module that provides both light engine and optical engine functionality. Inside the TOM is an array of LEDs, which can be monochromatic or tricolor, depending on the application. These little scamps are only 3.5 microns in size, and so are essentially invisible to the naked eye. The greater the density of the LEDs, the greater the resolution, but even a relatively high density makes itself visible only as a slight darkening of the TOM.
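For anyone who likes to see the sums, here is a minimal back-of-the-envelope sketch in Python of why LEDs this small should be so hard to spot. Only the 3.5-micron LED size comes from the figures above. Everything else is my own assumption for illustration, not anything from NSR: a lens-to-eye distance of about 20 mm, the commonly quoted figure of roughly 1 arcminute for the finest detail a normal eye can resolve, square LEDs laid out on a square grid, and a purely hypothetical 35-micron spacing between LED centers.

```python
import math

LED_SIZE_M = 3.5e-6      # LED size quoted in the article (3.5 microns)

# Everything below is an assumption for illustration only (not from NSR).
EYE_DISTANCE_M = 0.02    # assumed eyeglass-lens-to-eye distance (~20 mm)
ACUITY_ARCMIN = 1.0      # commonly quoted resolving limit of normal vision
PITCH_M = 35e-6          # hypothetical center-to-center LED spacing


def angular_size_arcmin(size_m: float, distance_m: float) -> float:
    """Angle (in arcminutes) subtended by an object at a given distance."""
    angle_rad = 2 * math.atan(size_m / (2 * distance_m))
    return math.degrees(angle_rad) * 60


def fill_fraction(led_size_m: float, pitch_m: float) -> float:
    """Fraction of area covered by square LEDs on a square grid."""
    return (led_size_m / pitch_m) ** 2


angle = angular_size_arcmin(LED_SIZE_M, EYE_DISTANCE_M)
coverage = fill_fraction(LED_SIZE_M, PITCH_M)

print(f"One LED subtends about {angle:.2f} arcmin "
      f"(assumed acuity limit: {ACUITY_ARCMIN} arcmin)")
print(f"LEDs cover about {coverage:.1%} of the module area")
```

Under these assumptions, a single LED subtends a little over half an arcminute, which is below the assumed acuity limit, and the LEDs block only around 1% of the area, which fits with the idea of a "slight darkening." Shrinking the assumed pitch (that is, raising the density) increases the covered fraction with the square of the ratio, so the real trade-off depends entirely on numbers only NSR knows.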

The LEDs can be implemented using organic LED (OLED), transparent OLED (TOLED), or iLED technologies. To be honest, this is the first I’ve heard of iLEDs, but Phil says they are smaller than OLEDs and generate a tremendous amount of light. He also says that their yields are not so great at the moment, making it cost-prohibitive to deliver mass quantities of iLEDs today, but that — for brightness and power consumption reasons — iLEDs will ultimately be the way to go in the future.

One thing that initially confused me was the concept of focus. That is, if I’m sometimes looking at something far away and other times looking at something close up, how can whatever information is being displayed on the TOM remain in focus? The answer is that the TOM also incorporates a micro-lens array (MLA), which basically means that there’s a lens for each LED (or group of LEDs, depending on the application).

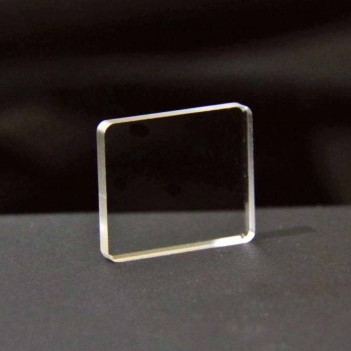

The great thing about TOMs is that they can be integrated into all sorts of things, including prescription eyeglasses, safety goggles, microscopes, telescopes… the list goes on. In the case of eyeglasses, TOMs can be used to provide binocular displays or monocular versions as shown below. Even better, TOMs are scalable and can be created in curved forms, potentially replacing the entire eyeglass lens.

Monocular TOM-enabled eyeglass deployment

(Image source: NewSight Reality)

Phil says that NSR is not currently planning on providing complete end-to-end solutions. Instead, they will provide TOM modules for other companies to design into their products. NSR’s timeline is for TOMs to become available on a consumer-scale basis circa 2024 or 2025. In the meantime, NSR is currently building these display modules for the US military because it’s been awarded a firm-fixed-price contract for the design and test of a functional prototype-level TOM with the US Special Operations Command (SOCOM) Directorate of Science and Technology.

I don’t know about you, but I think that real-world AI+AR deployments are closer than we think, and that technologies like NSR’s TOMs are going to make AI+AR devices ubiquitous in our daily lives. I, for one, cannot wait. What say you to all of this?

I say… I want some of those normal looking AR glasses!

Me Too!!!!!