I must admit that I’m starting to feel quite enthused and excited. Of course, these are my usual states of being, so it might be tricky for a casual observer to tell if there’s any difference. The reason for my current invigoration and vivification is that I spent much of the weekend past starting to sketch out the presentations I will be giving in Trondheim, Norway, around the beginning of September.

Did you know that Norway’s national bird is the white-throated dipper? The reason I drop this tidbit of trivia into our conversation is that the capital of Norway, Oslo, is on the south coast of Norway, and Trondheim is about 390 km (approximately 242 miles) north of Oslo as the white-throated dipper flies.

Located on the south shore of Trondheim Fjord, the city was founded in 997 CE as a trading post. A beautiful metropolis replete with history, Trondheim served as the capital of Norway during the Viking Age until 1217. Today, Trondheim is the third most populous municipality in Norway. Amongst many other things, the city is home to the Norwegian University of Science and Technology (NTNU), which is where I’ll be giving a guest lecture to a gaggle of MSc electrical and electronic engineering students.

My lecture at NTNU will take place on Tuesday 6 September. The following day, I’ll be giving the keynote presentation at the FPGA Forum, which is the premier annual event for the Norwegian FPGA community. This is where FPGA designers, project managers, technical managers, researchers, final-year students, and the major vendors gather for a two-day deep dive into the latest and greatest happenings in FPGA space (where no one can hear you scream).

It’s been 10 years since I last presented the keynote at the FPGA Forum. That august occasion took place in February 2012, which is not the warmest month on the Norwegian calendar, let me tell you. On the bright side, following my talk at the forum, I got to take a magical train ride through the snow-festooned landscape from Trondheim to Oslo. While there, I got to give a guest lecture at the University of Oslo, I managed to visit the Kon-Tiki Museum that holds the balsa wood raft that the Norwegian explorer and writer Thor Heyerdahl and friends used to cross the Pacific Ocean, and I found myself wandering through Vigeland Park at midnight where – under the light of the moon – I was surrounded by hundreds of snow- and ice-covered statues reminiscent of a scene from The Lion, the Witch, and the Wardrobe by C. S. Lewis.

By comparison, at this year’s FPGA Forum in September, I can look forward to a heady 55°F (13°C) accompanied by wind and rain (much like my holidays in England when I was a kid), which means I won’t need to devote much space in my suitcase to sunscreen.

I’m really looking forward to both of these presentations. In the case of the university talk, which will span two 45-minute periods, I have so many potential topics bouncing around my poor old noggin that I think I’m going to be hard-pushed to squeeze everything in.

When it comes to the FPGA Forum itself, I’ve been cogitating and ruminating on how much things have changed over the past 10 years. For example, although they were lurking in the wings, as it were, things like artificial intelligence (AI), machine learning (ML), and deep learning (DL) were not topics on everybody’s lips. Thinking about it, neither were virtual reality (VR), augmented reality (AR), diminished reality (DR), augmented virtuality (AV), mixed reality (MR), or hyper reality (HR).

Furthermore, although it has been only 10 years, there were some interesting FPGA companies that are sadly no longer with us, such as Tabula, which ranked third on the Wall Street Journal’s annual “Next Big Thing” list in 2012, but which closed its doors in 2015. On the other hand, some new players have appeared on the scene, such as Efinix, with its Trion FPGAs, and Renesas, with its ForgeFPGA family.

In the case of my keynote, I have no doubt that FPGAs will work their way into the conversation, but I don’t intend to spend overmuch time waffling on about their nitty-gritty details. Instead, we will be looking at some of the exciting new applications that can benefit from the capabilities offered by FPGA technologies. Also, I plan on talking about some of the amazing new technologies that may dramatically change the way we do things in the future.

For example… one company I plan on introducing is CogniFiber, whose claim to fame (well, tagline) is “Computing @ The Speed of Light.” Recently, I had a very interesting chat with Dr. Eyal Cohen, who is CEO and co-founder of CogniFiber.

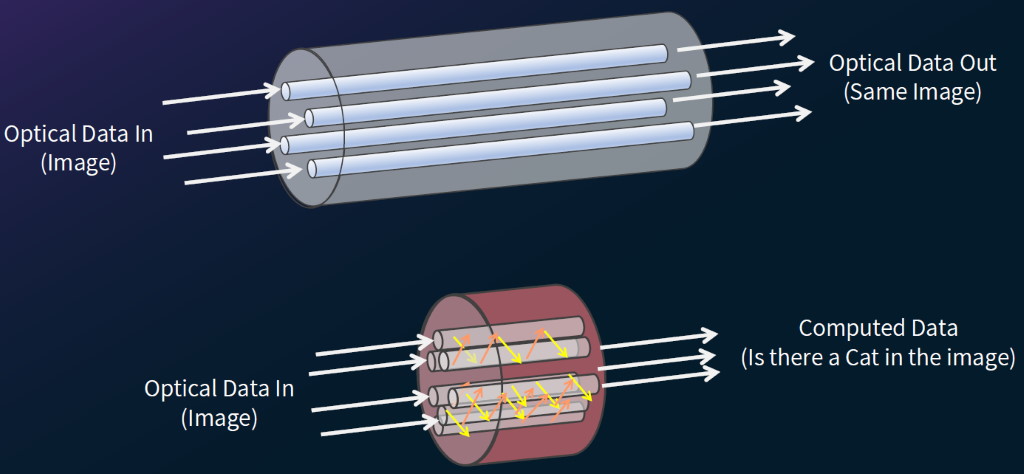

This takes a little bit of wrapping one’s head around — to be honest, I’m still wrapping mine around it – but I’ll explain it as best I can. Let’s start with the following image. My knee-jerk reaction when someone says “fiber optics” is to think of an optical cable containing multiple optical fibers, but my understanding is that, in this case, we are actually talking about optical fibers containing hundreds or thousands of optical cores. In traditional applications, we try to keep any crosstalk between cores as low as possible. In the upper part of the image, for example, our input is an optical image, each of whose pixels is transmitted down a core, and our output is the same image.

By comparison, what CogniFiber are doing is trying to maximize the crosstalk between cores and to use this crosstalk to perform AI/ML type computations. The idea is that you can feed an image into one end of the fiber and receive computed data (“is there a cat in this image?”) out of the other end. So, what we essentially have here is a neural network implemented inside a single optical fiber composed of thousands of cores.

Turning optic fibers into processors (Image source: CogniFiber)

I really am still trying to wrap my brain around all of this. It all seemed so clear when Dr. Cohen was explaining it to me, but it all seems so fuzzy now (which makes me think of Fuzzy Logic, but I refuse to let myself become distracted).

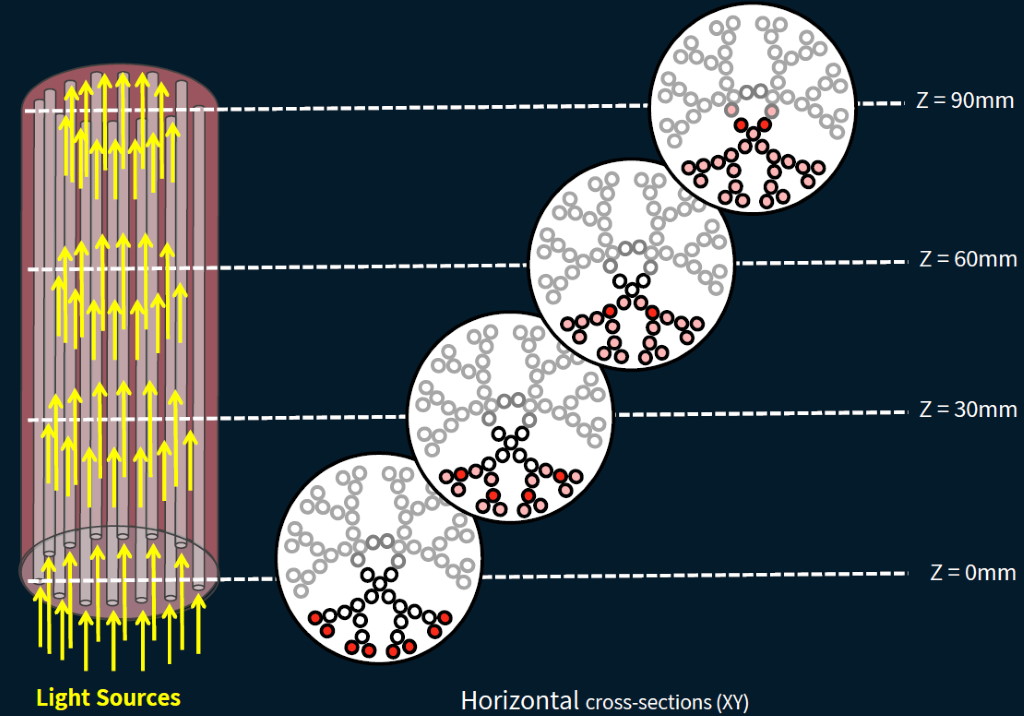

The main things I remember are that it’s possible to establish the parameters and coefficients of these neural networks and to optimize these parameters during training in much the same way one can do for FPGA/GPU implementations, excepting – of course – that everything is done using light. Also, that depending on the diameters and proximity of the cores, it’s possible to perform computations using fibers with a Z value from as long as 1 meter to as short as a few microns.

Think about the way an artificial neural network (ANN) used in a deep learning application usually works. Each layer in the network requires a humongous matrix multiplication involving myriad parameters. The results generated by each layer are fed as inputs to the next layer, but this involves temporarily storing the intermediate results. Now, consider the CogniFiber equivalent as illustrated below.

Computing on the fly with no read/write to memory (Image source: CogniFiber)
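For contrast, here is a minimal Python sketch of the conventional layer-by-layer flow described above. It is only an illustration of the general idea, not anything from CogniFiber: the layer sizes, the random weights, the ReLU activation, and the helper names (`matvec`, `relu`, `forward`, `random_layer`) are all assumptions made up for this example. The point to notice is the list of intermediate results, each of which has to be written to memory and read back before the next layer can run.

```python
import random


def matvec(weights, vector):
    """Multiply a matrix (a list of rows) by a vector (a list of floats)."""
    return [sum(w * x for w, x in zip(row, vector)) for row in weights]


def relu(vector):
    """A common activation function: negative values are clamped to zero."""
    return [max(0.0, x) for x in vector]


def forward(layers, inputs):
    """Run the inputs through each layer in turn.

    Returns the final output along with every intermediate result, to make
    the per-layer storage explicit.
    """
    intermediates = []
    activations = inputs
    for weights in layers:
        activations = relu(matvec(weights, activations))
        # Each layer's result is stored here and read back by the next layer.
        intermediates.append(activations)
    return activations, intermediates


def random_layer(n_out, n_in, rng):
    """Build an n_out x n_in weight matrix of illustrative random values."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]


rng = random.Random(0)
layer_sizes = [8, 6, 4, 2]  # hypothetical: 8 input "pixels", 2 outputs
layers = [
    random_layer(n_out, n_in, rng)
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
]
pixels = [rng.random() for _ in range(layer_sizes[0])]

output, intermediates = forward(layers, pixels)
print(len(intermediates), [len(v) for v in intermediates])  # prints: 3 [6, 4, 2]
```

Real networks have millions of parameters rather than a few dozen, so those stored intermediates add up to a lot of memory traffic, which is exactly the step the in-fiber approach is said to avoid.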

The result of this in-fiber processing is to deliver a 100-fold boost in computational capabilities while consuming a fraction of the power of a traditional semiconductor-based solution.

I must admit to being rather excited by all of this, but we must remind ourselves that we are still in the very early days of this technology. The current roadmap is for an Alpha Prototype Launch in Q3 2022, Beta Prototype Acceleration in Q4 2022, Beta Deployment in Q2 2023, and Production in Q3 2023.

The thing is that I’m seeing all sorts of new optical technologies appearing on the scene at the current time. In fact, the folks at CogniFiber have themselves announced the development of a glass-based photonic chip that they say will bring their technology one step closer to revolutionizing edge computing. When I think of everything that’s changed in the last 10 years, I’m excited to see what the next 10 years will bring. How about you? Do you have any thoughts you’d care to share about any of this?

Sounds like witchcraft, or mumbo jumbo, which means it will probably feature in mobile phones and laptops within the next 5 years, lol.

The way things are racing along at the moment, I’m learning to believe three impossible things before breakfast LOL