I’m a digital logic designer by trade. In an ever-changing and increasingly unreliable world, I find the certainty of Boolean equations to be extremely reassuring. You know where you are with a Karnaugh map, you can trust a De Morgan transformation, and you can gratify your desire for single-bit transitions with a Gray code. By comparison, I find the wibbly-wobbly nature of analog electronics to be somewhat disconcerting, which is unfortunate because there’s so much of it about these days.

One of the funny things is that “analog” was supposed to be the stuff of yesteryear. When I was coming up in electronics, digital functions were expensive in terms of transistors, and transistors were expensive in terms of cold, hard cash. That’s one of the reasons why analog computers hung on for decades after their digital counterparts first arrived on the scene. It was possible to implement analog signal processing (ASP) functions using a handful of transistors (or vacuum tubes) that would have required hundreds or even thousands of transistors to be realized as digital signal processing (DSP) equivalents. Even more demeaning (to a digital engineer) was the fact that many analog operations functioned faster and consumed less power than did their digital counterparts.

Over time, of course, the art of creating integrated circuits flourished, and transistors became astoundingly smaller, cheaper, and faster, thereby promoting the evolution of DSP in the 1960s and 1970s, driving the growth of DSP in the 1980s and 1990s, and feeding the explosion of DSP in the 2000s and beyond. Once real-world signals have been transported, transformed, and transmogrified from the analog realm into the digital domain, it is possible to do anything you want with them. This caused pompous industry pundits in the 1980s and 1990s to proclaim the imminent death of analog engineering, with the result that many students entering universities spurned analog and succumbed to the siren song of digital.

Not surprisingly, this resulted in a dearth of analog engineers, which was unfortunate because analog refused to die. In reality, of course, analog is all over the place, especially in the case of things like high-speed interconnects and interfaces and PHYs. It’s also a sad fact of life that analog engineers can rarely restrain themselves from smugly informing anyone who will listen to them that digital is a subset of analog, but no one likes people who are smug, so we will instead pity them as being digitally disadvantaged and move on with our lives.

Actually, I was just looking at The Decadal Plan for Semiconductors. This is a 150-page report that was published in January 2021 by the Semiconductor Research Corporation, which is a leading non-profit industry-government-academia microelectronics research consortium funding academic research tasks selected and directed by industry and government members. This report details “Five Seismic Shifts” that they believe will define the future of semiconductors and information and communication technologies.

Sitting on the top of the pile, the first of these seismic shifts is “The Analog Data Deluge,” which is summarized as follows: “Fundamental breakthroughs in analog hardware are required to generate smarter world-machine interfaces that can sense, perceive, and reason…”

Where things start to become extremely interesting as far as I’m concerned is the current resurgence of analog in the context of artificial intelligence (AI) and machine learning (ML) applications. As you may recall, deep in the mists of time we used to call September 2020, I penned a column — A Brave New World of Analog Artificial Neural Networks (AANNs) — about the activities of a company called Aspinity.

I don’t want to rehash that column in its entirety (you can read it at your leisure), so let’s briefly summarize things as follows:

Today’s edge processing is inefficient. There are expected to be more than 40 billion connected IoT devices by 2025. Many of these devices will be always-on, and a large proportion of these always-on devices will be battery-operated. Somewhere around 80 zettabytes (ZB) of data will be generated and digitized, and roughly 80 to 90% of this digitized data will be irrelevant to the task at hand.

In the case of wake-word detection, for example, only 10 to 20% of an audio signal is voice, while 80 to 90% is ambient noise. Constantly monitoring 100% of the signal using digital techniques consumes far too much power. The solution is to use an inherently low-power analog-based AI to constantly monitor the audio stream, only activating higher-level digital systems when a human voice is detected.
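To make the gating idea a little more concrete, here is a minimal Python sketch. It is purely illustrative and is not Aspinity’s algorithm: a crude short-term-energy threshold stands in for the analog classifier, the sine-wave “noise” and “voice” frames are synthetic, and the names `frame_energy` and `gate_frames` are hypothetical. The point is only the architecture: a cheap always-on detector decides which portions of the stream are worth waking the power-hungry digital back end for.

```python
import math

# Hypothetical illustration only: a short-term-energy detector stands in for
# the always-on analog classifier. Frames it flags are handed ("wake up") to
# the expensive digital back end; all other frames are ignored.

def frame_energy(frame):
    """Mean-square energy of one block of audio samples."""
    return sum(s * s for s in frame) / len(frame)

def gate_frames(frames, threshold):
    """Return the indices of frames whose energy exceeds the threshold.

    In the architecture described above, only these frames would cause the
    digital system to wake; everything else never reaches it.
    """
    return [i for i, f in enumerate(frames) if frame_energy(f) > threshold]

if __name__ == "__main__":
    # Synthetic stream: 8 quiet "noise" frames and 2 louder "voice" frames,
    # i.e., a 20% voice fraction, in line with the 10 to 20% quoted above.
    noise = [0.01 * math.sin(0.3 * n) for n in range(160)]
    voice = [0.5 * math.sin(0.05 * n) for n in range(160)]
    stream = [noise] * 4 + [voice] * 2 + [noise] * 4
    woken = gate_frames(stream, threshold=0.01)
    print(woken)                     # frames handed to the digital stage
    print(len(woken) / len(stream))  # fraction of the stream processed digitally
```

A real detector must tell voice apart from loud non-voice sounds, which a simple energy threshold cannot do; that discrimination is precisely the job the analog ML classifier is meant to perform.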

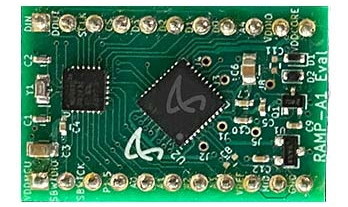

All of which leads us to Aspinity’s AnalogML Core, which is a teeny-tiny device that’s based on Aspinity’s Reconfigurable Analog Modular Processor (RAMP) technology platform. By combining the sophisticated functionality of a tinyML chip with low-power analog neuromorphic computing concepts, the AnalogML Core can be used to constantly monitor the signals from audio (microphone) or vibration (accelerometer) sensors and perform AI/ML-based feature extraction and classification (inferencing), all in the analog domain while drawing only 25 microamps (µA) of current.

The teeny-tiny AnalogML Core (Image source: Aspinity)

The AnalogML Core can be used for a wide variety of tasks, including voice activity detection, acoustic event detection, and vibration monitoring. Next, we have the EM1 breakout board (BOB), which makes it easier to access the functionality of the AnalogML Core for prototyping purposes.

The EM1 breakout board (Image source: Aspinity)

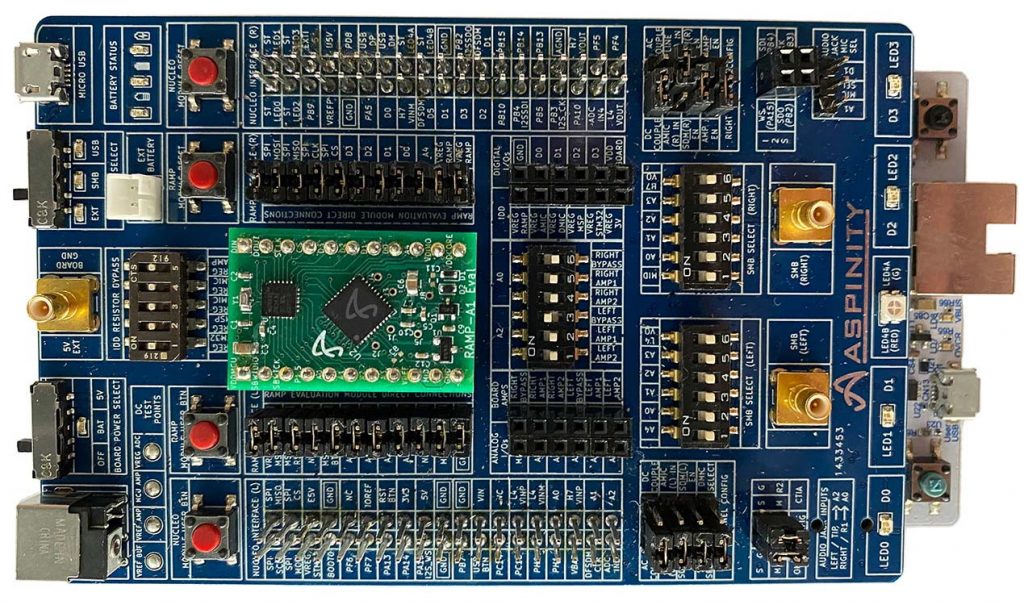

Now, this is where things can get a little confusing unless you pay careful attention (observe that at no time do my hands leave the ends of my arms). In January 2020, the folks at Aspinity announced their Voice Activity Detection Evaluation Kit in the form of the EVK2. This little rascal provides a front-to-back solution for analog voice activity detection and system wake-up using the AnalogML Core via the EM1 BOB.

The EVK2 voice activity detection evaluation kit (Image source: Aspinity)

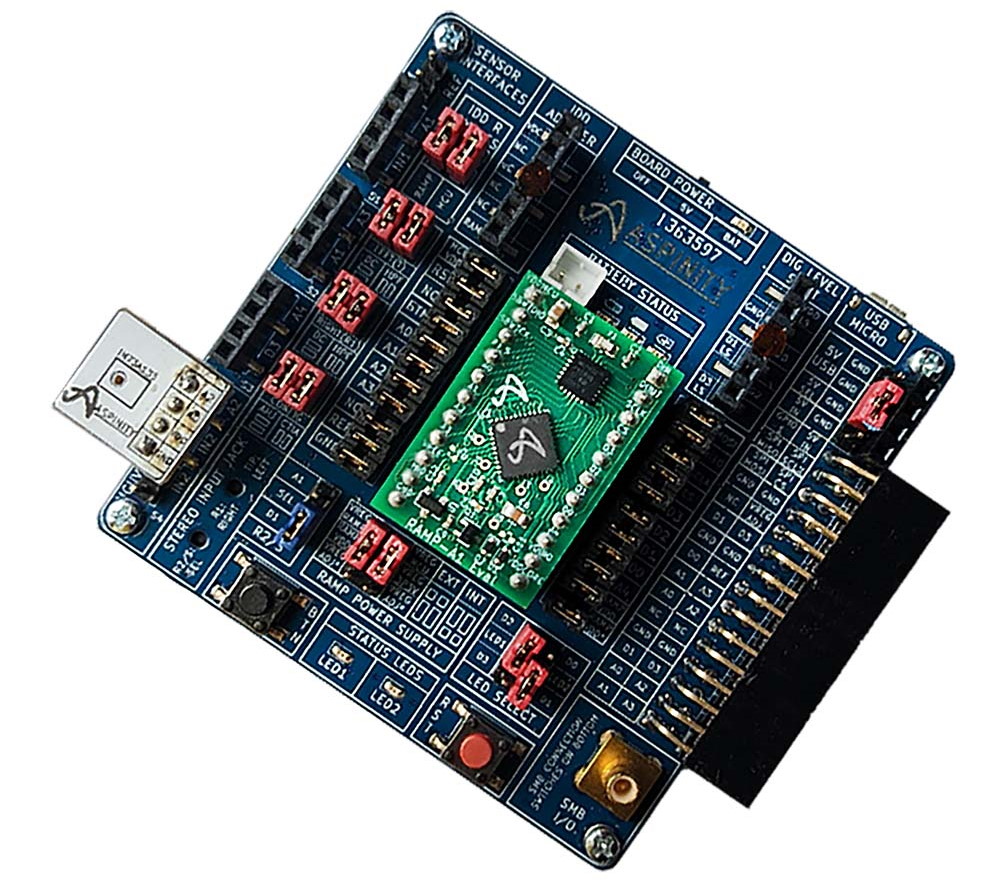

But wait, there’s more, because just a couple of weeks ago as I pen these words, the folks at Aspinity announced the introduction of their Acoustic Event Detection Evaluation Kit in the form of the EVK1. This little scamp provides a complete evaluation platform for battery-operated smart home devices that are always listening for acoustic triggers such as window glass breaks, voice, or other acoustic events.

The EVK1 acoustic event detection evaluation kit (Image source: Aspinity)

“Hang on,” I hear you cry. “Why did they release the EVK2 a year before the EVK1?” That’s a good question. I’m glad you asked (it shows you are paying attention), but I don’t know the answer. I felt it would be impolite of me to inquire. I was going to say that the one thing of which I feel we can be reasonably confident is that, since the folks at Aspinity work in the rarefied realms of analog AI/ML, they aren’t numerically challenged. On the other hand, now that I come to think about it (and returning to our discussions at the beginning of this column), it might be that spending their lives swimming in the wibbly-wobbly analog waters means they no longer care to acknowledge digital foibles like the fact that the number 1 traditionally precedes the number 2.

Moving on, as the folks from Aspinity say: “Traditional acoustic event detection devices are notoriously power-inefficient because they continuously monitor the environment and immediately digitize all microphone data for analysis — even though most of that data are simply noise. A window glass break, for example, may happen only once a decade, but the typical glass break sensor uses high-power digital analysis of 100% of the ambient sound data to detect a trigger that rarely (or never) occurs. Aspinity’s EVK1, on the other hand, demonstrates a power-saving alternative. By using an analogML core to detect acoustic events at the start of the signal chain while the microphone data are still analog, the downstream digital system can remain in an ultra-low-power sleep mode until an event is detected. This architectural approach allows designers to build acoustic event detection devices with batteries that last years, instead of months, on a single charge.”
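A back-of-envelope calculation shows why this architecture stretches battery life from months to years. In the following Python sketch, only the 25 µA AnalogML Core figure comes from this column; the battery capacity, the digital active and sleep currents, and the 0.1% wake fraction are hypothetical placeholders, and the microphone’s own current is ignored in both cases for simplicity.

```python
# Back-of-envelope battery-life estimate for an always-listening sensor.
# Only the 25 uA analog figure comes from the text; every other number is a
# hypothetical placeholder. Microphone current is ignored in both designs.

HOURS_PER_YEAR = 24 * 365

def battery_life_years(capacity_mah, avg_current_ma):
    """Years until a battery of the given capacity is drained at a given average current."""
    return capacity_mah / avg_current_ma / HOURS_PER_YEAR

def avg_current_ma(always_on_ma, active_ma, sleep_ma, active_fraction):
    """Time-weighted average current: an always-on part plus a digital stage
    that is awake for `active_fraction` of the time and asleep otherwise."""
    return always_on_ma + active_fraction * active_ma + (1 - active_fraction) * sleep_ma

CAPACITY_MAH = 1000.0     # hypothetical battery capacity
DIGITAL_ACTIVE_MA = 1.0   # hypothetical: ADC + DSP analyzing the audio
DIGITAL_SLEEP_MA = 0.005  # hypothetical: 5 uA deep-sleep current
ANALOG_MA = 0.025         # 25 uA AnalogML Core figure from the text
EVENT_FRACTION = 0.001    # hypothetical: digital side awake 0.1% of the time

# Conventional design: digital stage analyzes 100% of the audio, all the time.
always_digital = avg_current_ma(0.0, DIGITAL_ACTIVE_MA, DIGITAL_SLEEP_MA, 1.0)
# Analog-gated design: analog core always on, digital stage mostly asleep.
analog_gated = avg_current_ma(ANALOG_MA, DIGITAL_ACTIVE_MA, DIGITAL_SLEEP_MA, EVENT_FRACTION)

if __name__ == "__main__":
    print(f"always-on digital: {battery_life_years(CAPACITY_MAH, always_digital):.2f} years")
    print(f"analog-gated:      {battery_life_years(CAPACITY_MAH, analog_gated):.2f} years")
```

With these made-up numbers, the always-digital design drains the battery in about 0.11 years (roughly six weeks), while the analog-gated design lasts about 3.7 years. The specific figures mean nothing; the shape of the result follows from the average current being dominated by whichever stage is running all the time.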

All I can say is that I’m currently seeing a surge in the use of analog for AI/ML applications. For example, in addition to the chaps and chapesses at Aspinity, who are focusing on sound and vibration, we have the guys and gals at AIStorm, who are integrating analog AI/ML directly into vision sensors (see also An AI Storm is Coming as Analog AI Surfaces in Sensors by yours truly and AIStorm Makes AI Sensors Cheap & Easy by Jim Turley). To be honest, and I hate to hear myself saying this, if I were poised to commence my career today — perusing and pondering university courses to pursue — I would seriously think about including analog signal processing in my curriculum. What say you?
