It can be a funny old world when you come to think about it. I remember when I was a young engineer and industry pundits portentously predicted the passing of analog electronics, which resulted in students not wanting to study a dying field, which resulted in universities not bothering to offer courses. Perhaps not surprisingly, the fact that analog continued to go from strength to strength proved to be a tad embarrassing to all concerned, not least because, with so few people studying it, there quickly grew to be a dearth of engineers with an analog clue.

I think it’s fair to say that I know as much analog as the next digital engineer; that is, not a lot. I find the logical certainty of the digital domain to be reassuring. Contrariwise, the wibbly-wobbly uncertainty of the analog arena brings little comfort to my soul. This is sad, in a way, because a little bit of analog can go an awfully long way… if you know what you are doing.

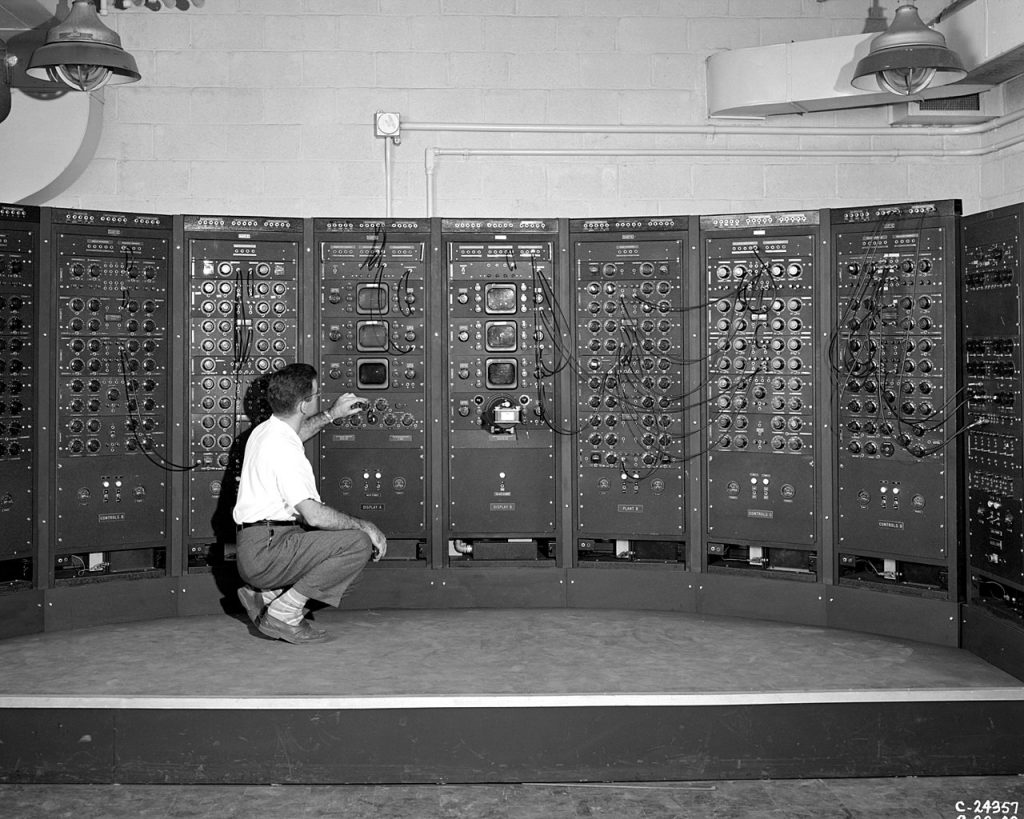

As an aside, when I started my degree in 1975, the only computer we had in the engineering department was an awesome analog asset. As I recall, it was not dissimilar to the bodacious beauty shown in the photograph below.

Analog computing machine at the Lewis Flight Propulsion Laboratory circa 1949 (Image source: NASA)

This machine comprised a large number of analog modules: multipliers (amplifiers), dividers, adders, subtractors, integrators, differentiators, comparators, and so forth. We used flying leads reminiscent of an old telephone exchange to connect the outputs of some modules to the inputs of others, and then we turned knobs, toggled switches, crossed our fingers, muttered arcane oaths, and (if the fates smiled upon our endeavors) simulated things like the temperature changes one might expect to see if one were to open a refrigerator door. Exciting times!

Looking back, I wish I’d taken a photograph of myself standing next to the little scamp, but — of course — I had no idea that I would one day be writing this column.

We also had access to an unbelievably large and incredibly slow digital mainframe computer, but that was located in its own building across town. We used Teletype terminals in the engineering building to capture our programs on punched cards, and then we walked across town to hand these card decks over the counter in reception and be curtly instructed to “come back next Tuesday.” When we did return, it was only to discover a scrap of paper attached to our card deck by an elastic band carrying a message along the lines of, “Missing comma, line 2,” at which point we would start the cycle all over again. As you can imagine, it could easily take a semester to get a “Hello World” program up and running.

Based on the lack of digital computing resources when I was attending university, it is perhaps not too surprising that no one talked about digital signal processing (DSP). Recently, I was surprised to discover that the concept of using digital computers to process signals was around as far back as the 1960s. In fact, TI created a transistorized computer for the digital signal processing of seismic data as early as 1961.

The real game changers came with the discovery of the fast Fourier transform (FFT) in the mid-1960s followed by the introduction of microprocessors in the early 1970s. It must have been soon after this that the enthusiasm for all things digital took hold, along with the corresponding gloomy predictions for the demise of analog, because I just ran across a reference to a 1984 report, Analog, Still Without Fear, that begins, “This report is an update of one issued in 1977, which predicted that the predicted death of analog circuitry (also called linear) would not occur…”

I must admit that it took me a little time to wrap my brain around this sentence, but eventually I realized that the 1977 report was saying that the death of analog that had been previously predicted was not actually going to come to pass. Wow! People certainly had it in for poor old analog — and for my poor old noggin — for a long time, didn’t they? If only they knew then what I now know and what I’m about to tell you now (if I cannot be as tautologically hyperbolic as a 1984 update to a 1977 report, then my name’s not Max the Magnificent). We will return to the analog conundrum in a moment, but first…

Don’t Fall Off the (Intelligent) Edge

If we were to go back in time five to ten years, we would discover that everyone was talking about pushing data and processing up into the cloud. Over the past five years, however, there’s been an increasing move to push as much processing as we can to the edge. Analyzing data and making decisions at the site where the data is captured and/or generated may also be referred to as the “intelligent edge” (see also What the FAQ is the Edge vs. the Far Edge?).

The reason this is important is that, according to the International Data Corporation (IDC) in its Worldwide Global DataSphere IoT Device and Data Forecast, 2019–2023 (doc #US45066919, June 2019), by 2025, there will be 41.6 billion connected Internet of Things (IoT) devices merrily generating 79.4 zettabytes (ZB) of data, where the prefix zetta indicates multiplication by the seventh power of 1,000 or 10^21 in the International System of Units (SI). Whichever way you look at things, that’s a lot of bytes!

As another aside, do you feel that numbers like 79.4 are just a smidgen too precise when we are talking about predictions for five years in the future? By some strange quirk of fate, earlier today as I pen these words, I was chatting to my chum, analog expert Bill Schweber, about this very topic, and he noted that he often sees predictions that manage to be both extremely precise and highly inaccurate at the same time. But we digress…

Currently, one of the fastest growing IoT segments is that of always-on battery-operated devices, whose key challenges are providing sophisticated processing while using little power and maintaining user privacy. The problem is that today’s architectures waste anywhere from 70% to 90% of their battery life processing irrelevant data.

To put this another way, there’s a danger that up to 50 ZB of the 79.4 ZB of data generated in 2025 will be processed unnecessarily. For the 1.6 billion battery-operated voice-first devices alone, that’s equivalent to 36 million AA batteries being wasted every day!

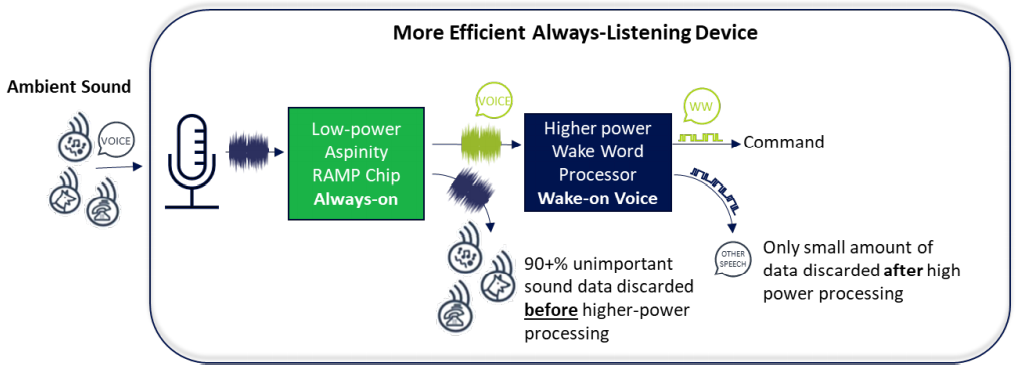

If this seems a little extreme, consider an always-listening device that’s waiting for a wake word to trigger it to leap into action. The problem is that the world is full of ambient sounds — dogs barking, cats barfing (I speak from experience), birds chirping, systems “binging” inside the house, cars and trucks rumbling by outside the house, wind, thunder, rain… the list goes on. Unfortunately, the high-power wake word processor is always-on, processing every sound that comes its way to determine if that sound is human speech and, if so, if the sound corresponds to the wake word. As a result, 95+% of sound data is discarded AFTER the high-power processing has been performed.

Typical always-listening device (Image source: Aspinity)

There’s an old saying that if your only tool is a hammer, then every problem looks like a nail. Much the same thing applies to the problem of processing signals — if the only tool with which you are familiar is DSP, then every signal processing problem looks like a candidate for a DSP solution. People rarely think of analog signal processing (ASP) because so few of us truly understand the analog domain.

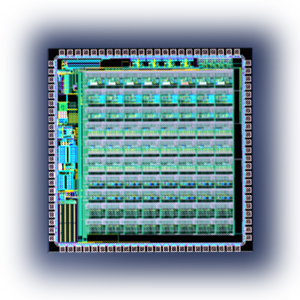

As a prime example, most of the time when we hear about things like artificial intelligence (AI) and machine learning (ML) in the context of artificial neural networks (ANNs), we think of the neurons forming the networks in the form of digital implementations (see also What the FAQ are AI, ANNs, ML, DL, and DNNs?). Well, the clever guys and gals at Aspinity have come at things from a completely different direction — analog ANNs (dare we call these AANNs, or would that be pushing things a tad too far?).

What we’re talking about here is Aspinity’s Reconfigurable Analog Modular Processor (RAMP) family of devices. These little beauties monitor the signals from audio (microphone) or vibration (accelerometer) sensors and perform AI/ML-based feature extraction and classification (inferencing), all in the analog domain. In the case of an always-listening device, for example, adding a RAMP chip results in a massively more efficient solution.

More efficient always-listening device (Image source: Aspinity)

To cut a long story short, in this more efficient implementation, the higher-power wake word processor spends most of its time asleep, during which it consumes almost no power whatsoever. Meanwhile, the always-on RAMP listens to every sound, using its analog AI/ML neural network to determine if that sound is human speech, and doing this while drawing only 25 microamps (µA) of current. As soon as the RAMP detects human speech, it wakes the higher-power wake word processor.
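For those who like to see the sums, here is a rough back-of-the-envelope sketch of why gating helps. Only the 25 µA figure for the RAMP comes from this column; the wake word processor's active and sleep currents and the fraction of time spent hearing speech are placeholder assumptions chosen purely for illustration, not Aspinity's numbers.

```python
def average_current_ua(always_on_ua, active_ua, sleep_ua, active_fraction):
    """Average current (in µA) of an always-on detector plus a processor
    that is awake for `active_fraction` of the time and asleep otherwise."""
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    gated = active_fraction * active_ua + (1.0 - active_fraction) * sleep_ua
    return always_on_ua + gated


RAMP_UA = 25.0           # from the column
WAKE_ACTIVE_UA = 1000.0  # ASSUMPTION: hypothetical active current of the wake word processor
WAKE_SLEEP_UA = 5.0      # ASSUMPTION: hypothetical sleep current of the wake word processor
SPEECH_FRACTION = 0.05   # ASSUMPTION: speech is present 5% of the time

# Conventional design: no analog front end, processor awake all the time.
always_awake_ua = average_current_ua(0.0, WAKE_ACTIVE_UA, WAKE_SLEEP_UA, 1.0)

# Gated design: RAMP always on, processor awake only when speech is detected.
gated_ua = average_current_ua(RAMP_UA, WAKE_ACTIVE_UA, WAKE_SLEEP_UA, SPEECH_FRACTION)

print(f"always awake: {always_awake_ua:.2f} µA, gated: {gated_ua:.2f} µA")
```

With different assumed currents the ratio will change, of course, but the shape of the argument stays the same: the less often the big processor wakes, the closer the average creeps toward the always-on detector's own draw.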

“Just a minute,” I hear you cry, “doesn’t this mean that by the time the higher-power wake word processor has woken up, the wake word itself has come and gone?” That’s a good question. I’m glad you asked because it shows that you are, yourself, awake. In fact, in addition to the wake word itself, the wake word processor requires about 500 ms of preceding sound (this is called “pre-roll”). To address this, the RAMP chip constantly records and stores (in compressed form) the pre-roll. When it detects human speech and wakes the wake word processor, it decompresses the pre-roll and feeds it to the wake word processor with the live audio stitched onto the back.
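The pre-roll idea can be sketched in software as a fixed-length circular buffer. To be clear, this is only an illustration of the concept and not how the RAMP does it: the class and function names are made up, the compression step is left out entirely, and the speech detector is whatever callable you care to pass in.

```python
from collections import deque


class PreRollBuffer:
    """Keeps only the most recent `pre_roll_ms` worth of samples."""

    def __init__(self, sample_rate_hz, pre_roll_ms=500):
        self.capacity = sample_rate_hz * pre_roll_ms // 1000
        # A deque with maxlen silently drops the oldest sample when full.
        self._samples = deque(maxlen=self.capacity)

    def push(self, sample):
        self._samples.append(sample)

    def snapshot(self):
        return list(self._samples)


def stream_to_wake_processor(samples, is_speech, sample_rate_hz, pre_roll_ms=500):
    """Yield nothing until `is_speech` fires; then yield the stored pre-roll
    followed by the live samples stitched onto the back."""
    buffer = PreRollBuffer(sample_rate_hz, pre_roll_ms)
    triggered = False
    for sample in samples:
        if triggered:
            yield sample              # live audio after the trigger
        elif is_speech(sample):
            triggered = True
            yield from buffer.snapshot()  # the pre-roll comes first
            yield sample              # then the sample that caused the trigger
        else:
            buffer.push(sample)       # background sound: remember, but stay quiet


# Toy demonstration: 1 kHz sample rate, 5 ms pre-roll, "speech" starts at sample 10.
delivered = list(
    stream_to_wake_processor(range(20), lambda s: s >= 10, sample_rate_hz=1000, pre_roll_ms=5)
)
```

In the toy run, the downstream processor never sees samples 0 through 4; it receives the five samples of pre-roll and then everything from the trigger onward.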

Now, you may be wondering how the RAMP’s analog ANN can offer such a power-efficient solution. Well, do you recall right at the beginning of this column when I noted that “a little bit of analog can go an awfully long way… if you know what you are doing”? As one simple example, a large proportion of signal processing (including AI and ML) involves vast numbers of multiply-accumulate operations, each of which consists of a multiplication and an addition, with the result being stored in an accumulator register. The hardware unit that performs such an operation is known as a multiplier-accumulator (MAC), and things like ANNs can contain humongous numbers of these little rascals.
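For anyone who hasn't met a multiply-accumulate in the wild, the following minimal sketch shows one artificial neuron's weighted sum written as an explicit MAC loop, along with a count of how many MACs a fully connected layer needs. The function names and the layer sizes are illustrative assumptions and say nothing about the RAMP's actual architecture.

```python
def multiply_accumulate(weights, inputs, bias=0.0):
    """Weighted sum of one neuron, one multiply-accumulate per input.
    Returns the accumulator value and the number of MAC operations used."""
    if len(weights) != len(inputs):
        raise ValueError("weights and inputs must be the same length")
    accumulator = bias
    mac_count = 0
    for w, x in zip(weights, inputs):
        accumulator += w * x   # one multiply, one add, result kept in the accumulator
        mac_count += 1
    return accumulator, mac_count


def dense_layer_macs(n_inputs, n_neurons):
    """MACs for one pass through a fully connected layer:
    every neuron performs one MAC per input."""
    return n_inputs * n_neurons


total, macs = multiply_accumulate([1.0, 2.0, 3.0], [4.0, 5.0, 6.0])
layer_macs = dense_layer_macs(n_inputs=64, n_neurons=32)  # hypothetical layer size
```

Even this modest hypothetical layer needs a couple of thousand MACs per inference, which is why the cost of each individual MAC matters so much.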

Those of us who don digital trousers in the morning have grown used to having vast quantities of logic gates and functions at our disposal. We pride ourselves on how small and low power are our transistors, with the result that we throw them around like confetti. The problem is that a MAC implemented using digital techniques consumes a lot of transistors and an ANN consumes a lot of MACs. Even if these transistors consume little power individually, the overall power consumption mounts when you are talking about tens or hundreds of thousands of the little scamps. By comparison, an analog MAC requires only a handful of transistors to perform its magic.

To be honest, we’ve only scratched the surface of what is possible with this technology. Another example would be a battery-powered audio or vibration sensor for a burglar alarm whose purpose is to detect the sound of breaking glass. Once again, the RAMP’s analog ANN can be trained to recognize the signature of breaking glass, at which point it can hand things over to a higher-power, more capable processor.

I don’t know about you, but I’m jolly excited about the prospects for Aspinity’s technology. I should point out that the folks at Aspinity are themselves jolly excited by the fact that earlier this month they closed $5.3M of Series A funding, which does sound as though it could be exciting if I had a clue what “Series A funding” meant, but I don’t (I’m trying to not lose sleep over my lack of knowledge). How about you, what are your thoughts on all of this?

Further Reading

For your delectation and delight, Bryon Moyer wrote about Aspinity for EEJournal on September 16, 2019 in his column: RAMPing Up Always-On AI: Aspinity’s Analog Detector Reduces Power.

Great Article! I suspect analog will rise again 😉

We trained in a similar era

Hi Max,

Great article.

I always thought analogue computing would rise to the top again, sadly at a time when my biological computer is degrading.

It was amazing what you could achieve as a computing function with the very humble 741 op-amp.