Every now and then, I run across something that causes me to exclaim, “Brilliant!” Of course, my job is made somewhat easier when the thing I run across is a company called Brilliant, and easier still if the thing they’ve developed is… dare I say it… BRILLIANT!

It probably goes without saying, but I’ll say it anyway, that this reminds me of the classic UK Guinness video ads built around the catchphrase “Brilliant!”—especially the ones featuring the deadpan delivery and understated surrealism that made the punchline funny precisely because everyone was acting as though something completely ordinary was astonishingly impressive.

But we digress… The tale I’m about to tell has multiple facets. From my own perspective, it started when I took possession of one of the first Oculus Rift virtual reality (VR) headsets in 2016. Around that same timeframe, various players were making noise in augmented reality (AR) space (where no one can hear you scream). Two that immediately spring to mind are Microsoft, with its HoloLens (they had a real shipping product in 2016), and Magic Leap, with its hollow hype (they didn’t ship a real product until 2018, and—while technically impressive—that was disappointing in the context of their preceding promises of delight).

Also, it would be remiss of us to fail to mention Google Glass, which dates back to 2013-ish. This was more of a heads-up notification display than true spatial AR, and it famously ran into social backlash (“Glassholes”) and privacy concerns before eventually pivoting toward industrial and medical use cases.

Throughout the late 2010s, whilst speaking at events like the Embedded Systems Conference (ESC), I often mentioned my vision (no pun intended) for a comfortable, unobtrusive, lightweight headset combining AI and AR capabilities.

One example I often used was my being able to say to my headset, “Do you remember the name of the book I was reading about six months ago, the one that mentioned Lady Ada Lovelace in the context of AI?” And for the headset to respond, “Yes, it was the classic Tea, Crumpets, and Computational Engines by Professor Cuthbert Dribble.” I could then ask, “Where is that book?” And the headset would tell me where I’d left it (or hidden it behind a pile of other books).

Now, it seems that we are poised to take a giant leap forward with the Halo headset from Brilliant Labs. I was just chatting with Bobak Tavangar, who is the company’s CEO. As we see in the photo below, Bobak was sporting a pair of black glasses almost exactly like my own, if we exclude fiddling little details like the fact that his also feature sophisticated AI+AR capabilities.

Bobak Tavangar looking nonchalant while flaunting his Halo AI+AR glasses (Source: Brilliant)

We will return to Halo in a moment, but I’d first like to share with you how I came to be chatting with Bobak in the first place. This all started deep in the mists of time we used to call 2023, when I wrote a column: New MCUs Provide 10^2 the Performance at 10^-2 the Power. This column introduced a company called Alif Semiconductor that develops ultra-low-power AI-enabled microcontrollers and fusion processors with integrated wireless connectivity for edge and wearable devices.

It was around that time that I started working on the second incarnation of my Countdown Timer, whose job it will be to count down the years, months, days, hours, minutes, and seconds to the commencement of my 100th birthday celebrations (the first version met an unfortunate end when it fell off a shelf and experienced a rapid unscheduled disassembly (RUD) event).

The new version (which I’ll doubtless be writing more about in the future) features vacuum fluorescent display (VFD) tubes, which offer an awesome retro-futuristic aesthetic. Unfortunately, I’d already taken possession of the little rascals and commenced work on the design when I finally took the time to read the datasheet, only to discover that they had a quoted lifetime of only around 10 years under continuous operation, but it’s still ~30 years until the big event. “Oh dear,” I said to myself (or words to that effect).

This is when I came up with the idea of augmenting my timer with machine-vision capabilities. The idea was that the tubes would be activated only when someone was actually looking at the display. If the room was empty—or if its occupants were looking elsewhere—the tubes could be put to sleep, thereby extending their operational lifespan. In turn, this led me to post my column, Issuing a Challenge to Edge AI Processor Manufacturers, in which I posed my problem and invited suggestions and solutions.
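To put some rough numbers on this, here is a minimal Python sketch of the lifetime arithmetic and the gating idea. The 10-year and 30-year figures come from the discussion above; the `TubeGate` class, its `hold_seconds` linger time, and the `someone_looking` flag (which would come from whatever the vision system reports) are purely illustrative assumptions, not part of any real design.

```python
from dataclasses import dataclass

RATED_LIFE_YEARS = 10.0  # quoted tube lifetime under continuous operation
YEARS_TO_EVENT = 30.0    # approximate time until the countdown completes


def max_duty_cycle(rated_life_years: float, years_needed: float) -> float:
    """Largest fraction of the time the tubes can be lit and still last."""
    return min(1.0, rated_life_years / years_needed)


@dataclass
class TubeGate:
    """Keeps the tubes lit while someone is looking, plus a short linger time."""

    hold_seconds: float = 10.0          # hypothetical linger after the last glance
    last_seen: float = float("-inf")    # time (seconds) someone last looked

    def update(self, now: float, someone_looking: bool) -> bool:
        """Return True if the tubes should be powered at time `now` (seconds)."""
        if someone_looking:
            self.last_seen = now
        return (now - self.last_seen) <= self.hold_seconds


if __name__ == "__main__":
    budget = max_duty_cycle(RATED_LIFE_YEARS, YEARS_TO_EVENT)
    print(f"Tubes may be lit at most {budget:.0%} of the time")
```

In other words, under these assumptions the tubes can be lit for no more than about a third of the time, which is the budget the gaze detection would need to stay within.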

You can only imagine my surprise when, a couple of days later, Henrik Flodell from Alif got in touch to say that they had just what I was looking for and he was poised to drop one in the post to me. As I described in my column, My AI Will Be Watching You, we’re talking about Alif’s postage-stamp-sized Vision App Kit, which pairs a tiny camera with one of the company’s Ensemble AI-equipped MCUs.

Sadly, something came up, causing me to put my Countdown Timer project on the back burner for a while. However, I’m delighted to report that, just a few weeks ago as I pen these words, I resurrected the project and we’re now “full steam ahead.”

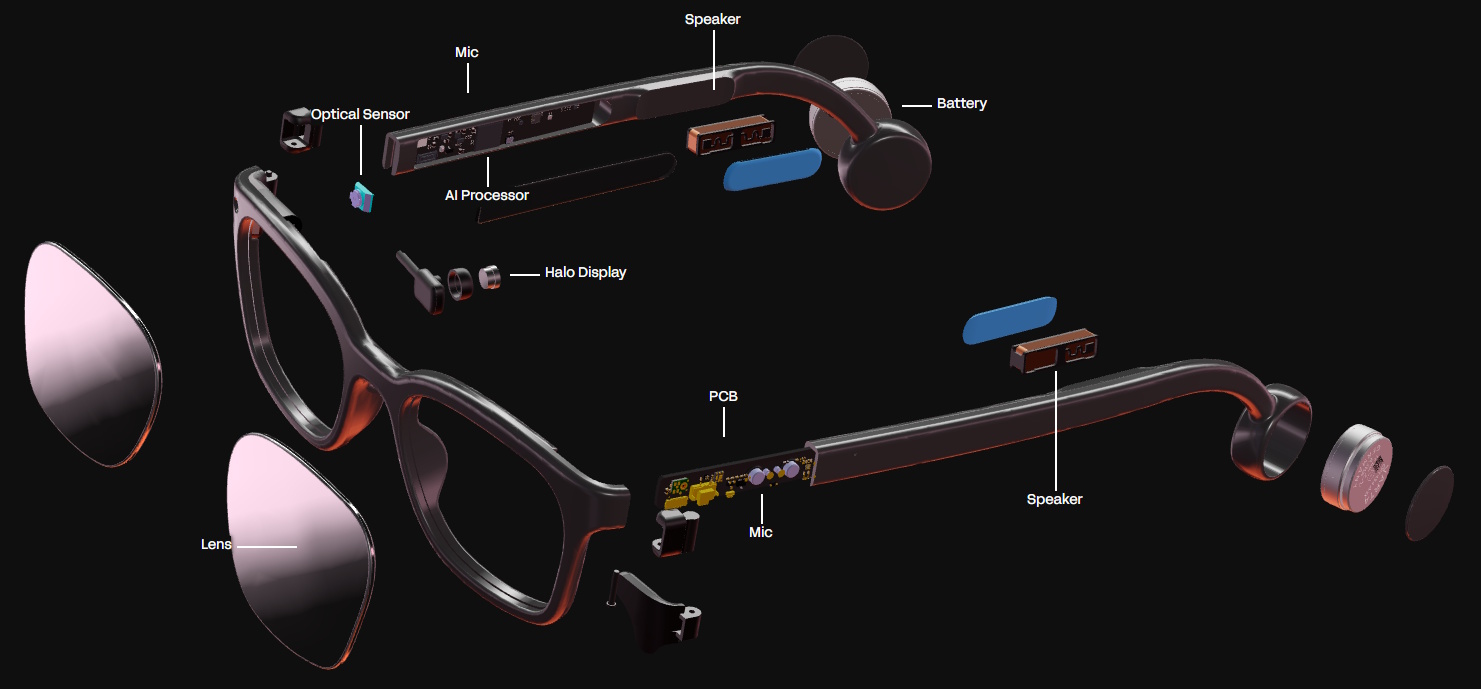

The reason I mention all this here is that I have a soft spot for the folks at Alif, so I was more than interested when they emailed me to say their Balletto B1 wireless AI MCU was a key element in Brilliant’s Halo glasses. This was when they offered to make an introduction between Bobak and me. Speaking of which, this is probably a good time to present the following image, which shows an exploded view of the glasses Bobak was wearing during our chat.

Exploded view of Halo glasses (Source: Brilliant)

Although the writing is really small (it’s bigger on Brilliant’s website), the “AI Processor” annotation points to Alif’s chip, whose WLCSP120 package is a teeny-tiny 3.9mm × 3.9mm. I’ll tell you more about all this in a moment, but first…

I saw something in a BuzzFeed column a few months ago. A lady said that sometimes, when she is annoyed with her husband, she adds imaginary items to the shopping list she gives him, then berates him for not finding them when he returns home. My wife, Gigi the Gorgeous, doesn’t do this (at least, I hope she doesn’t), but it’s certainly safe to say that I find it extraordinarily difficult to find many of the items she asks for at our local supermarket.

I’m not saying they aren’t there—I usually manage to track them down—but they are invariably tucked away in places no sane person would think to look. And when I do find what I think is the thing I’m looking for, I’m typically racked with doubt (“Is this really what she wants?”). All of which makes this video of someone wearing a pair of Halo glasses as he tries to satisfy his wife’s request particularly poignant.

A key part of all this is Noa, which is Brilliant Labs’ multimodal AI assistant. In this context, “multimodal” means Noa can simultaneously process information from multiple sources, including what the wearer is seeing through the camera, hearing through the microphones, and saying in conversation.

According to Brilliant Labs, Noa can engage in real-time contextual conversations, answer questions about the surrounding environment, translate text and speech, and—perhaps most intriguingly—act as a kind of long-term memory assistant by remembering things the wearer previously saw, heard, or discussed.

Brilliant Labs also says that Noa employs what the company calls a “Narrative” memory system. Rather than merely responding to isolated prompts, the system incrementally builds a personalized knowledge base derived from the wearer’s experiences and interactions over time. This is what enables use cases like asking, “Where did I leave my car keys?” or “What was the name of the person I met at last week’s conference?”

What makes Halo particularly interesting is that it’s not trying to be another bulky, all-encompassing virtual reality headset that makes users look like they’ve strapped a toaster oven to their faces. Instead, Brilliant Labs has focused on creating something lightweight, stylish, and—most importantly—useful.

As Bobak explained during our chat, Halo contains a surprisingly rich collection of technology hidden inside what otherwise appears to be an ordinary pair of spectacles. There’s a camera tucked into the frame that continuously observes the world around the wearer, dual microphones that listen to conversations and ambient audio, bone-conduction speakers resting near the temples (see also Headphones for a Zombie Apocalypse), and a tiny full-color OLED display that projects information into the wearer’s field of view when they glance downward.

The display itself is fascinating. Rather than attempting the sort of full immersive augmented reality experience favored by some earlier entrants in this space, Halo takes a far more pragmatic approach. When the wearer tilts their head slightly downward, the display appears as a floating virtual screen, roughly equivalent to viewing an iPad at arm’s length. When the wearer looks straight ahead, the display essentially disappears from view.

In many ways, this feels less like traditional augmented reality and more like a highly intelligent heads-up companion system. And I must admit that the more I think about this approach, the more sensible it seems. Instead of attempting to plaster graphics over every square inch of reality (see also the classic Hyper Reality video), Halo focuses on delivering useful information just when it’s needed.

Of course, squeezing all this functionality into a lightweight pair of glasses requires some rather cunning engineering. This is where Alif’s Balletto B1 wireless AI MCU enters the stage. As Bobak explained, earlier prototypes relied on combinations of processors and FPGAs sitting in the video path between the camera and the display. Unfortunately, this apparently resulted in design cycles that, in Bobak’s words, “felt like running through peanut butter.”

By contrast, the tiny Balletto B1 integrates processing, AI acceleration, Bluetooth connectivity, camera interfacing, audio handling, and display control into a single low-power device small enough to disappear into the frame of the glasses. Bobak was especially enthusiastic about the package size, noting that fashionability and thinness matter enormously for wearable devices. He’s absolutely correct. Nobody wants to wander around looking like they escaped from a low-budget 1970s science fiction movie (never again!).

Another aspect of Halo that I’m enthusiastic about is Brilliant Labs’ commitment to open source. Not just “sort of” open source. Not “we’ll release a few SDKs if we’re feeling generous” open source. We’re talking hardware, firmware, software, and even the associated smartphone applications being fully open source.

Now, at first blush, hearing that Brilliant Labs consists of only five core team members may sound somewhat alarming. After all, creating advanced AI-enabled wearable hardware isn’t exactly the sort of thing most people tackle over a quiet weekend with a soldering iron and a packet of McVitie’s chocolate digestives (which are arguably the best cookies ever—just sayin’).

However, the story becomes considerably more interesting when you learn that the company has cultivated a community of more than 13,000 developers, engineers, makers, and enthusiasts spread across GitHub and Discord. In effect, Brilliant Labs has transformed itself into something akin to an open-source ecosystem rather than merely a conventional startup.

As Bobak pointed out, communities like this cannot simply be purchased. They grow organically over time. Many of these contributors have participated through multiple generations of products, starting with Monocle, progressing through Frame, and now arriving at Halo. The result is a kind of massively distributed co-development model in which ideas, code, applications, experiments, and enhancements continuously flow back into the platform.

Power consumption and battery life are also critical in devices like this. Happily, Halo appears to have avoided the “operate for seventeen minutes before bursting into tears” battery model favored by some modern gadgets. The glasses employ rechargeable coin cells hidden near the rear of the frame, and Bobak says the system is designed to last all day before being recharged overnight via a magnetic USB-C cable.

Equally fascinating is Brilliant Labs’ partitioning of AI processing across multiple layers of compute. Some AI functionality happens directly inside the glasses themselves using the Alif device. For example, the glasses can perform low-power audio activity detection locally. If the system detects that the wearer is engaged in conversation, it can wake up additional processing functions and begin streaming relevant data to the user’s smartphone over Bluetooth.
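For readers who like to see this sort of thing in code, the following is a minimal Python sketch of one common way to do low-power audio activity detection: a simple energy threshold with a run-length check. I have no idea what detector actually runs on the Alif device, so the function names, the `threshold` value, and the `min_active_frames` count are all assumptions chosen purely for illustration.

```python
import math
from typing import Iterable, Sequence


def frame_rms(frame: Sequence[float]) -> float:
    """Root-mean-square level of one frame of audio samples (range -1.0..1.0)."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def should_wake(
    frames: Iterable[Sequence[float]],
    threshold: float = 0.05,      # hypothetical RMS level that counts as "active"
    min_active_frames: int = 3,   # hypothetical run length before waking up
) -> bool:
    """Return True once enough consecutive frames exceed the RMS threshold.

    Requiring a run of active frames keeps a single click or bump from
    waking the more power-hungry parts of the system.
    """
    run = 0
    for frame in frames:
        run = run + 1 if frame_rms(frame) > threshold else 0
        if run >= min_active_frames:
            return True
    return False
```

The appeal of a cheap gate like this is that the expensive work (streaming over Bluetooth, inference on the phone) only happens after something inexpensive has decided there is probably something worth listening to.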

The smartphone, meanwhile, performs significantly more sophisticated AI inference using the handset’s own neural processing hardware. According to Bobak, Brilliant Labs has managed to run models directly on the phone’s NPU for both speech and image processing. Only compact numerical representations—known as embeddings—are then transmitted to cloud services for higher-level contextual reasoning and memory retrieval.

This architecture is actually rather clever. It reduces latency, lowers cloud processing costs, conserves bandwidth, and significantly improves privacy. And, speaking of privacy, this is one area where Brilliant Labs appears eager to differentiate itself from certain larger technology companies who shall remain nameless (we all know who you are), but whose business models may perhaps be described as “vacuum up absolutely everything and ask awkward questions later.”

Interestingly, Halo does not include an LED that lights up whenever the camera is active. At first glance, this might sound alarming. However, Bobak explained that the company deliberately chose not to continuously record and store raw audio or video data. Instead, information is rapidly transformed into the aforementioned embeddings, which are essentially mathematical summaries capturing the important features of what was seen or heard. Rather than storing actual photographs, conversations, or video recordings, the system stores numerical vectors that represent the semantic characteristics of the information.

Bobak likened this to the way fingerprint systems often store extracted feature information rather than complete raw images. This means that even if, against all odds, someone somehow managed to access the stored embeddings, they would not find meaningful, human-readable recordings waiting to be viewed.
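To make the idea of storing embeddings rather than recordings a little more concrete, here is a minimal Python sketch of a memory that keeps only timestamped vectors and finds the closest match using cosine similarity. This is not Brilliant Labs’ implementation, which I haven’t seen. The `EmbeddingMemory` class and its methods are hypothetical, and the embeddings themselves are assumed to come from some model running elsewhere (on the phone, say).

```python
import math
from dataclasses import dataclass, field
from typing import List, Optional, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


@dataclass
class EmbeddingMemory:
    """Stores (timestamp, embedding) pairs; no raw audio, images, or text."""

    records: List[Tuple[float, Tuple[float, ...]]] = field(default_factory=list)

    def remember(self, timestamp: float, embedding: Sequence[float]) -> None:
        """Keep only the numerical summary and when it was captured."""
        self.records.append((timestamp, tuple(embedding)))

    def recall(self, query: Sequence[float]) -> Optional[Tuple[float, float]]:
        """Return (timestamp, similarity) of the closest stored embedding."""
        best: Optional[Tuple[float, float]] = None
        for timestamp, vector in self.records:
            score = cosine_similarity(query, vector)
            if best is None or score > best[1]:
                best = (timestamp, score)
        return best
```

A question like “Where did I leave my car keys?” would first be turned into a query embedding by the same (assumed) model, after which `recall` would point to the moment whose stored vector is most similar. Anyone peeking at `records` would see only lists of numbers.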

Now, obviously, some readers will still have concerns regarding privacy, and that’s entirely understandable. Personally, however, I find Brilliant Labs’ architectural approach considerably more reassuring than systems whose entire business model depends upon hoovering vast quantities of personal data into centralized storage silos.

Finally, there’s manufacturing. Halo isn’t some speculative laboratory prototype assembled by hand beneath a flickering light bulb in a mysterious basement workshop. According to Bobak, the glasses are currently being mass-produced by Foxconn, which certainly lends a degree of industrial credibility to our story.

At the time of writing, Halo glasses are priced at $349 in the United States, which is surprisingly approachable considering the technology involved. In fact, when you compare this against the eye-watering prices attached to some competing AI/AR systems, Halo starts looking remarkably affordable.

Ironically, one of the killer applications for this sort of technology may be something magnificently mundane: remembering where we left things like our car keys. When I think of the countless hours of frustration I’ve spent looking for things like breadboards (“In the white container in the garage”), sensors (“In the gray container in the closet under your hiking boots”), and tools (“You just covered your wire strippers with your notepad, you bozo!”), I quickly conclude that a pair of Halo glasses would pay for themselves in just a couple of months.

Will devices like Halo ultimately become as commonplace as smartphones? I honestly have no idea (although I have a sneaking suspicion they will). But I do know this: for the first time in quite a while, I find myself looking at a wearable AI platform and thinking, “This doesn’t feel like yet another technology demo; it actually feels like a tempting taste of things to come!”