As I’ve mentioned on occasion, I predate many of the technologies that now surround us. I remember the heady days of the first 8-bit microprocessor units (MPUs) and early single board computers (SBCs) that were based on these little rascals. Glancing at the bookshelves in my office, I see my trusty companions of yesteryear in the form of 6502, Z80, etc. data books.

One thing that reflects how far things have progressed from those days of yore circa the late 1970s is the fact that those microprocessor data books assumed minimal prior knowledge. Tomes of this ilk typically started off by explaining the binary number system, for goodness’ sake.

These days, I spend a lot of time “doing stuff” with microcontroller units (MCUs), which we can think of as MPUs augmented with on-chip memory (SRAM and some form of non-volatile memory (NVM) like Flash) and peripheral functions like counter/timers, analog-to-digital converters (ADCs), digital-to-analog converters (DACs), and so forth.

I love today’s MCUs. Many of them are like supercomputers compared to what I started out with. On the other hand, it must be acknowledged that there’s a lot of inertia with respect to the MCU silicon architectures that have been developed by large, established companies. What delights could a small, nimble, young company bring to the party?

Well, by some strange quirk of fate, I happen to be in a position to answer that question because I was just chatting with Henrik Flodell, who is the Senior Marketing Director at Alif Semiconductor. From the company’s inception in 2019, the folks at Alif decided to start with a clean slate, pick the right technology, and develop a scalable architecture capable of powering future generations of products.

One thing that impresses me is that, from the get-go, they identified support for artificial intelligence (AI) and machine learning (ML) as being key capabilities for future MCUs to provide. Depending on your point of view, four years ago may seem to be a lifetime away or close enough to sniff, but I think it predates much of the AI/ML hype.

Their goal was to create a family of off-the-shelf MCUs that could cover not just simple time-series AI/ML use cases, like vibration data, that can be addressed with standard Cortex-M0 and Cortex-M4 microcontrollers, but also more advanced use cases like voice processing and even video, all on an MCU without the need for external memory and suchlike.

In a crunchy nutshell, Alif’s mission was to provide highly integrated processors enabling intelligent end-point applications requiring:

- Scalable Processing: Single to Multi-Core; RTOS and Linux

- Long Battery Life: Architected for Lowest Power Consumption

- Strong Security: Built in from the Ground Up

- High Integration: Large On-Die Memories in Extremely Small Packages

- Edge AI/ML: First MCUs that can handle Vibration, Voice, and Vision

Well, they succeeded. Just a few days ago as I pen these words, the folks at Alif Semiconductor announced full availability of their Ensemble product line through global distribution channels. The Ensemble family is claimed to offer the most secure, power-efficient, Edge AI-enabled MCUs and fusion processors on the market.

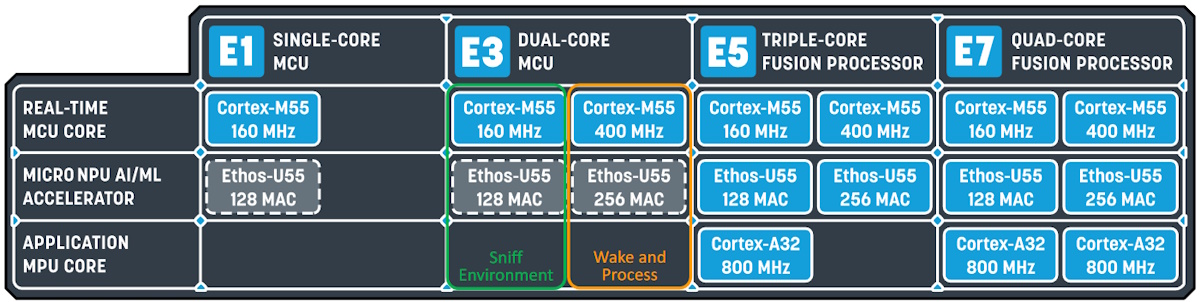

The easiest way to wrap our brains around this family is to start with the image below. The Ethos-U55 is a micro neural processing unit (microNPU), which is optional in the E1 and E3 devices.

High-level view of the Ensemble MCU family (Source: Alif)

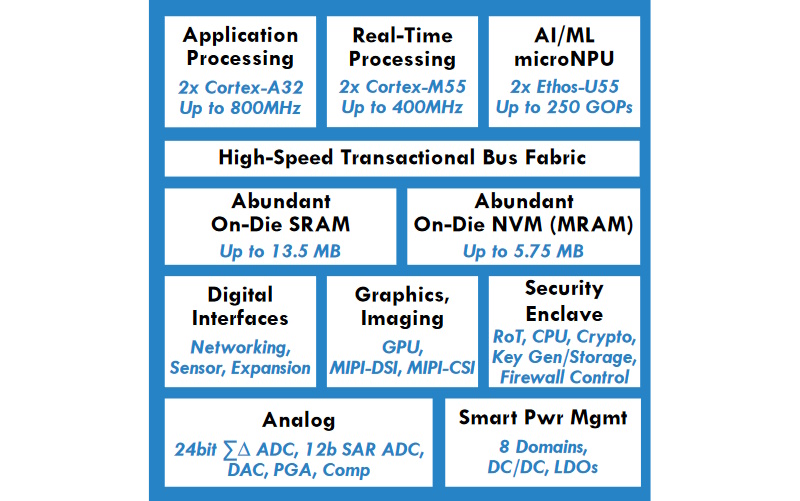

A slightly more detailed view of the E7 Quad-Core Fusion Processor is shown below. Observe that this is presented as a single monolithic die. Also, observe the abundant on-die SRAM (up to 13.5MB) and the generous on-die NVM in the form of magnetoresistive random-access memory (MRAM) (up to 5.75MB).

Medium-level view of the Ensemble Quad-Core Fusion Processor (Source: Alif)

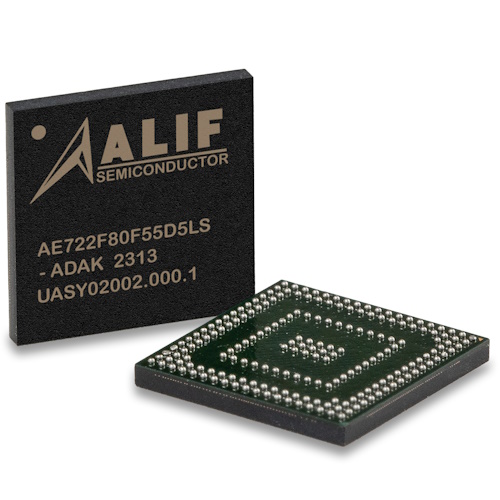

Going back to the days of my misspent youth, if I had seen an MCU specification reflecting the block diagram shown above, I would have assumed that this device would have been the size of a refrigerator* and that it would have required the services of two strong engineers to move it into position. (*Coincidentally, the 1MB hard disk drive I used in 1981 was indeed the size of a refrigerator). So, just how big is an Ensemble MCU? Well, as always, a picture is worth a thousand words.

Ensemble MCU (left) vs. matchstick (Source: Alif)

Did I really need to add the “(left)” qualifier to the Ensemble MCU in the image above? Well, do you recall the classic jape someone played with respect to the Scottish National Antarctic Expedition of 1902-1904 as presented on Wikipedia? One of the images in that article is of a Scottish guy playing the bagpipes. The original caption was as shown on the left below.

Piper and penguin (Source: Wikipedia)

The original caption with its “indifferent penguin” was funny enough (which may explain why it’s since been removed), but then some wit added one word “(right)” as shown on the right above. I’m still laughing! But we digress…

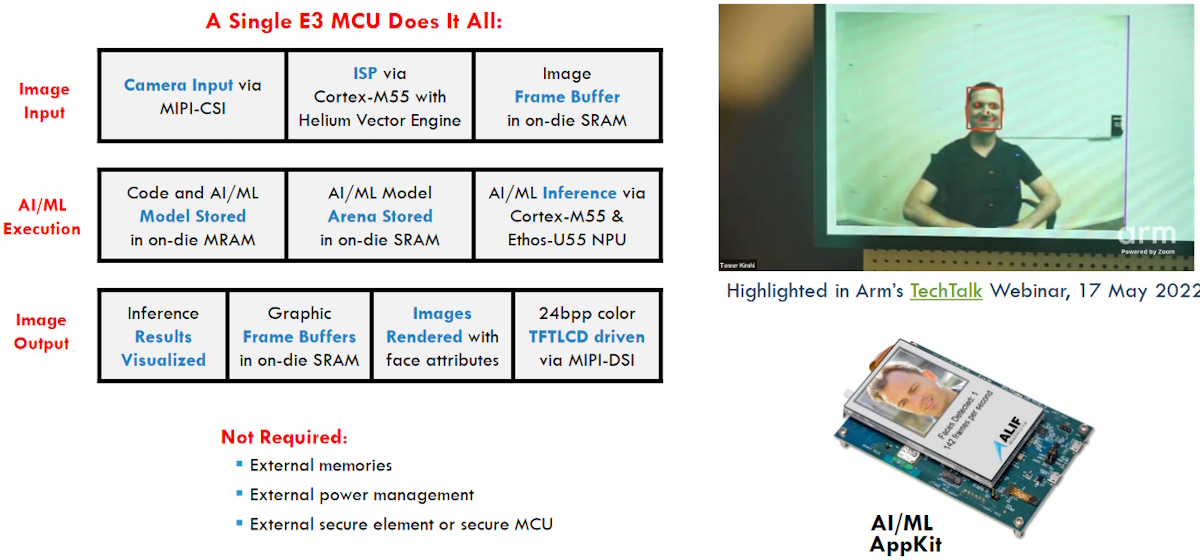

So, what can we do with one of these bodacious beauties (an Ensemble MCU, not a Scottish piper or his enamored penguin)? Well, consider a task like object detection, such as working out which portion of an image contains a human face. This isn’t the sort of task one would undertake on a regular MCU, but it’s well within the capabilities of a dual-core Ensemble E3.

One chip and done (Source: Alif)

The really telling points are summarized on the bottom left: No external memory is required, no external power management is needed, and anything required for security is already on-chip. Speaking of which, the isolated security enclave subsystem embraces the following features:

- Isolated Security Enclave Subsystem.

- Separate dedicated core, memory, crypto HW, OTP memory.

- Hardware-based Root of Trust (RoT).

- Unique device ID and Key Pair for each individual chip.

- TrustZone expanded to handle multiple CPU cores.

- Configurable firewalls regulate access of each CPU to sections of memories and individual peripherals.

- Security services: Secure boot, key management, cryptographic services.
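To make the idea of per-CPU firewalls a little more concrete, here’s a minimal Python sketch of the general concept: a table that grants each CPU specific rights on specific memory regions or peripherals, with everything else denied by default. To be clear, this is a hypothetical model of the idea, not Alif’s actual firewall hardware or its register interface; the CPU names, region names, and addresses below are invented purely for illustration.

```python
from dataclasses import dataclass
from enum import Flag


class Perm(Flag):
    """Access rights a firewall rule can grant (hypothetical model)."""
    NONE = 0
    READ = 1
    WRITE = 2


@dataclass(frozen=True)
class Region:
    """A contiguous address range, e.g. a slice of SRAM or a peripheral."""
    name: str
    base: int
    size: int

    def contains(self, addr: int) -> bool:
        # The end of the range is exclusive.
        return self.base <= addr < self.base + self.size


class Firewall:
    """Per-CPU access table: (cpu, region) -> permissions, default deny."""

    def __init__(self) -> None:
        self._regions: list[Region] = []
        self._rules: dict[tuple[str, str], Perm] = {}

    def add_region(self, region: Region) -> None:
        self._regions.append(region)

    def grant(self, cpu: str, region_name: str, perm: Perm) -> None:
        # Accumulate rights so repeated grants widen access rather than replace it.
        key = (cpu, region_name)
        self._rules[key] = self._rules.get(key, Perm.NONE) | perm

    def check(self, cpu: str, addr: int, perm: Perm) -> bool:
        for region in self._regions:
            if region.contains(addr):
                allowed = self._rules.get((cpu, region.name), Perm.NONE)
                # True only if every requested right is present in the rule.
                return perm in allowed
        # Addresses outside any configured region are blocked.
        return False


if __name__ == "__main__":
    fw = Firewall()
    # Hypothetical regions and addresses, for illustration only.
    fw.add_region(Region("shared_sram", 0x2000_0000, 0x1000))
    fw.add_region(Region("uart0", 0x4000_0000, 0x100))
    fw.grant("cpu0", "shared_sram", Perm.READ | Perm.WRITE)
    fw.grant("cpu1", "shared_sram", Perm.READ)
    fw.grant("cpu0", "uart0", Perm.WRITE)

    print(fw.check("cpu1", 0x2000_0010, Perm.READ))   # True
    print(fw.check("cpu1", 0x2000_0010, Perm.WRITE))  # False: read-only for cpu1
    print(fw.check("cpu1", 0x4000_0000, Perm.WRITE))  # False: no rule for cpu1 on uart0
```

The default-deny fall-through is the key design choice here: a CPU gets no access to a region or peripheral unless a rule explicitly grants it.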

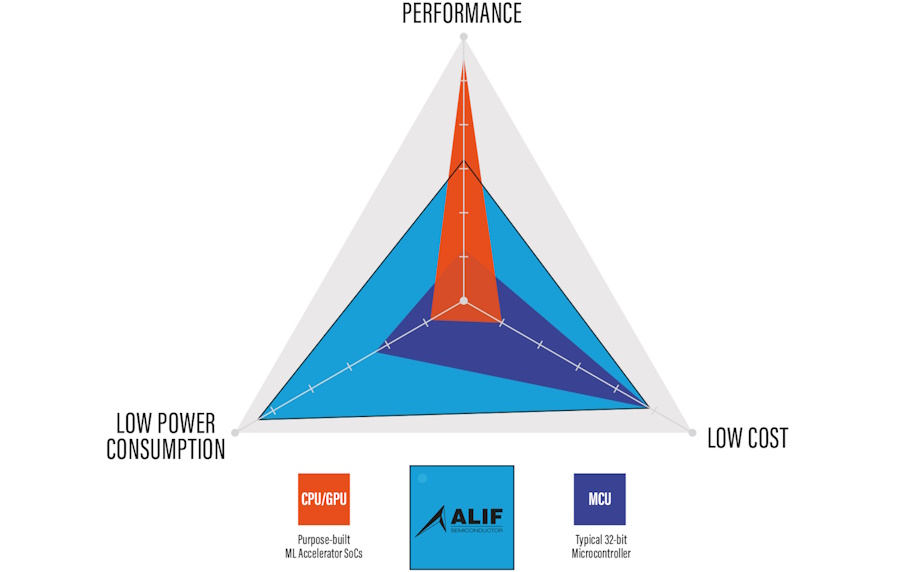

I don’t know about you, but I really like spider charts (a.k.a. radar charts), which display multivariate data as a two-dimensional chart of three or more quantitative variables, each plotted on an axis radiating from the same central point. For example, consider the spider chart below, which compares the performance, power consumption, and cost of traditional MCUs, custom-built CPU/GPU-based SoCs, and Alif’s Ensemble devices.

Comparison of performance, power consumption, and cost (Source: Alif)

As Henrik told me, when compared to typical MCUs, Alif’s Ensemble MCUs and fusion processors provide two orders of magnitude more performance while consuming two orders of magnitude less power. I just ran across a more formal presentation of this in the associated press release, as follows:

The Ensemble 32-bit microcontrollers and fusion processors are designed to deliver an uplift in performance of at least two orders of magnitude compared to executing similar workloads on traditional 32-bit MCUs across a wide range of AI models. The uplift stems from Alif’s architecture that efficiently integrates a microNPU next to each CPU core. A clear example: using one microNPU with one CPU core to run an object detection model reduces inference time by 74x compared to using its CPU core alone. Since Alif’s CPU core already represents a 10x AI/ML performance increase when compared to the core used in traditional MCUs, the total performance uplift reaches 740x. This uplift in performance is not at the expense of energy consumption because in this example each object detection inference consumed only 0.27 mJ, which is also two orders of magnitude less than typical MCUs.
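For those who like to see the sums, here’s a minimal Python sketch of the arithmetic in the quote above. The 74x, 10x, and 0.27 mJ figures come straight from the press release; the rate of 10 inferences per second used for the average-power line is purely an illustrative assumption, and that figure ignores whatever the chip consumes between inferences.

```python
import math

# Figures quoted in Alif's press release (object-detection example).
NPU_SPEEDUP_VS_OWN_CORE = 74      # microNPU + CPU core vs. that CPU core alone
CORE_SPEEDUP_VS_TRADITIONAL = 10  # Alif CPU core vs. a traditional MCU core (AI/ML)
ENERGY_PER_INFERENCE_MJ = 0.27    # millijoules per object-detection inference


def total_uplift(npu_speedup: float, core_speedup: float) -> float:
    """Speedups from successive improvements multiply."""
    return npu_speedup * core_speedup


def orders_of_magnitude(ratio: float) -> float:
    """Express a ratio in decades (powers of ten)."""
    return math.log10(ratio)


def average_power_mw(energy_mj: float, inferences_per_second: float) -> float:
    """Inference-only average power: mJ per inference x inferences/s = mJ/s = mW."""
    return energy_mj * inferences_per_second


if __name__ == "__main__":
    uplift = total_uplift(NPU_SPEEDUP_VS_OWN_CORE, CORE_SPEEDUP_VS_TRADITIONAL)
    print(f"Combined uplift: {uplift:.0f}x")                           # 740x
    print(f"Orders of magnitude: {orders_of_magnitude(uplift):.2f}")   # 2.87
    # Assumed rate of 10 inferences per second (illustrative only).
    print(f"Inference power at 10/s: {average_power_mw(ENERGY_PER_INFERENCE_MJ, 10):.1f} mW")  # 2.7 mW
```

Since log10(740) is about 2.87, describing the uplift as “at least two orders of magnitude” is, if anything, a conservative way of putting it.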

As is often the case, I’m left contemplating what the expressions would be on my old university lecturers’ faces if I could ever get my time machine to work and use it to take one of Alif’s Ensemble devices back in time to show them. How about you? What do you think about all of this?

One thought on “New MCUs Provide 10^2 the Performance at 10^-2 the Power”