With the promises and challenges of artificial intelligence (AI) and, more specifically, machine learning (ML), this is a time of great architectural innovation as developers compete to provide the best ML solutions. All engineering solutions have trade-offs; the trick here is to find the solution(s) with the fewest bad trade-offs.

In that spirit, we have a new architectural proposal from Aspinity, a start-up company. It’s called RAMP, or Reconfigurable Analog Modular Processor. Yes, you read that right: analog. Whoa, whoa, whoa – don’t run away. Hear this out first! If it helps, the analog is already buried inside their chip. It will look practically digital to you.

Attacking Always-On Power

Aspinity is addressing the problem of always-on detection of events (often, but not exclusively, voice) using ML. The problem is that ML is pretty power-intensive, which makes it tough to use in an always-on implementation. The applications they’re addressing include wake-word detection for so-called smart speakers, as well as vibration analysis. In both cases, your detector has to be on all the time, or else you’ll miss something. Hence the conundrum: how to do this at the edge with a limited power budget?

From the time we conquered signal analysis in the digital domain, the mantra has been the same: take inputs from the stubbornly and uncooperatively analog world, digitize them as soon as possible, and then work within the digital domain up until the point when (and if) an analog result is needed. And, honestly, it’s worked pretty well for us. Analog-block power is often continuous, while it can be easier to duty-cycle digital blocks – which helps to compensate for the relatively high power required to digitize the analog signals in the first place.

But, in an always-on application, you can’t do the power cycling that reduces the digital power below that of an equivalent analog circuit. If left on all the time, that implementation will drain more energy from the battery than an analog version. Which raises the question: why not stay analog for longer?

That’s what Aspinity is doing, and they claim the resulting power is an order of magnitude lower than what a standard digital implementation would require. Granted, there’s only so much you would want to do with analog, so they’re not proposing to do everything in the analog domain; just the always-on portion.

Analog In-Memory Computing

But how do they do machine learning in analog? One of the keys to ML math is the multiply-accumulate (MAC) operation needed for dot products and other matrix functions. In the digital domain, this is most effectively done using a sea-of-MACs architecture (so to speak). But we’ve also seen that in-memory computing can simplify things if you have an analog memory.
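To make the MAC idea concrete, here is a minimal digital sketch of a dot product built from multiply-accumulate steps, plus a layer that runs one dot product per row of weights. The function names `mac_dot` and `dense_layer` are made up for illustration; this says nothing about how Aspinity's analog block is actually built.

```python
def mac_dot(weights, inputs):
    """Dot product computed as a chain of multiply-accumulate (MAC) steps."""
    if len(weights) != len(inputs):
        raise ValueError("weights and inputs must have the same length")
    acc = 0.0
    for w, x in zip(weights, inputs):
        acc += w * x  # one MAC: multiply, then add into the accumulator
    return acc


def dense_layer(weight_rows, inputs):
    """One layer's outputs: a separate dot product for each row of weights."""
    return [mac_dot(row, inputs) for row in weight_rows]
```

In an in-memory scheme, the weights would stay put in the memory array and the inputs would be applied to them, rather than both being shuttled to a separate arithmetic unit as in this loop.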

We’ve looked at RRAM as a possible approach (which is still a long way from being proven viable), but flash also has that capability to some extent (which we’ll look at more closely in the future). And Aspinity has developed their own custom analog flash memory block.

This means that, at least for the limited functionality needed during the always-on phase, everything can stay in the analog domain – until an event of interest is detected. At that point, they wake up the digital circuit, and it can take over. They say that the audio of interest is typically only 10-20% of the overall audio that’s out there, meaning that the digital circuits can sleep through the roughly 80-90% of the audio that’s just a buncha noise (from the application’s point of view).
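A back-of-the-envelope way to see why the wake-up fraction matters: average power is the always-on front end plus the digital side weighted by how often it is awake. The sketch below is only that arithmetic; the function name and every parameter are hypothetical, and no actual Aspinity power figures are implied.

```python
def average_power(always_on, digital_active, digital_sleep, wake_fraction):
    """Average power when the digital side is awake only part of the time.

    All power values share one (arbitrary) unit. `wake_fraction` is the
    share of time the digital circuits are awake, between 0 and 1.
    """
    if not 0.0 <= wake_fraction <= 1.0:
        raise ValueError("wake_fraction must be between 0 and 1")
    digital = (wake_fraction * digital_active
               + (1.0 - wake_fraction) * digital_sleep)
    return always_on + digital
```

With a wake fraction of 0.2 (the upper end of the 10-20% range quoted above), the digital block contributes only a fifth of its active power to the average, plus whatever it leaks while asleep.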

Three Key Apps

There are three main applications that Aspinity has targeted, although these aren’t exclusive. That said, the RAMP block has specific features that enable those applications.

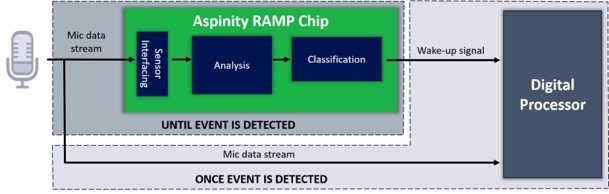

The simplest is audio event detection. An example of this would be the sound of a window breaking. If the analog detector decides that the event has occurred, it simply wakes the digital circuit and informs it of the event.

(Image courtesy Aspinity)

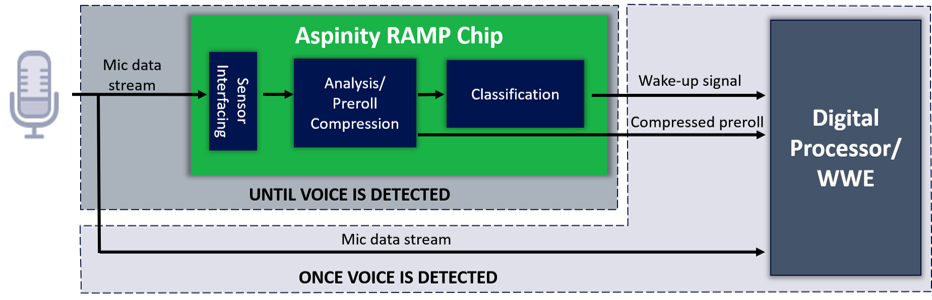

Wake-word recognition is the second app, and it’s similar except for one thing: the data that caused the wake-up must also be sent to the cloud for analysis by Siri or Alexa or whoever happens to be on duty that day. And it’s not just a matter of saying, “OK, digital circuits, turn on and send everything you hear, starting from now, to the cloud.” First of all, that would work only if you could guarantee that this would all happen between the time the wake-word ended and the rest of the speech started. Not so sure about that…

But, more importantly, the voice recognition engines need a so-called pre-roll: the half-second or so of audio that precedes the wake-word. This is effectively a sample of the background noise, and the cloud engines use it to clean up the speech for easier recognition. So the Aspinity solution captures the pre-roll, compresses it, and sends it to the digital circuits for forwarding to the cloud.
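The pre-roll idea amounts to keeping a rolling window of recent audio so that, when the detector fires, the sound from just before is still on hand. Here is a minimal digital sketch using a fixed-length buffer. The class name `PreRollBuffer` and its parameters are hypothetical, compression is left out, and this is not a description of how Aspinity captures the pre-roll.

```python
from collections import deque


class PreRollBuffer:
    """Keeps only the most recent `seconds` of audio samples."""

    def __init__(self, sample_rate_hz, seconds):
        self.capacity = int(sample_rate_hz * seconds)
        # A deque with maxlen silently discards the oldest item when full.
        self._samples = deque(maxlen=self.capacity)

    def push(self, sample):
        """Add the newest sample, dropping the oldest if the buffer is full."""
        self._samples.append(sample)

    def snapshot(self):
        """Return the buffered samples, oldest first, e.g. on a wake event."""
        return list(self._samples)
```

A real design would presumably size the window to cover the wake-word itself plus the half-second or so that precedes it, so that the pre-roll has not already been overwritten by the time detection completes.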

(Image courtesy Aspinity)

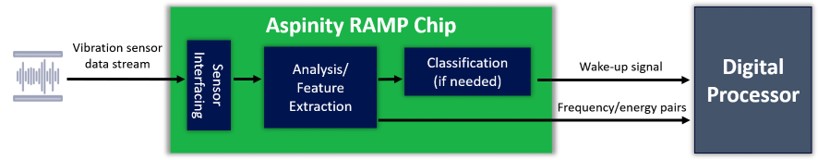

The third app is vibration analysis. This has been one of the classic desirable applications in industrial settings. Every moving thing creates some pattern of vibrations. There’s no right or wrong pattern; it’s more a matter of learning how a particular machine “sounds” when running normally. If that sound starts to change, then it’s an indication of some problem – maybe simply aging. Caught quickly, it can allow for an ordered shut-down and replacement, without the chaos resulting from the machine literally dying when no one expects it.

A classic way of characterizing the sound is through frequency analysis, which, effectively, means figuring out the sound energy at a variety of frequencies. Frankly, this is yet another specialized event-detection application, with the event being the change of tone of the vibration. But, in this case, in order for the digital circuits to make sense of what’s going on, the analog portion needs to tell them what the sound changed to. So it passes the frequency/energy pairs to the digital circuits.
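For a feel of what frequency/energy pairs look like, here is a small sketch that runs a naive discrete Fourier transform over a block of samples and reports the energy in each frequency bin. It is a plain digital illustration with made-up function names, not the analog method the RAMP block uses, and a practical implementation would use an FFT rather than this slow loop.

```python
import cmath
import math


def frequency_energy_pairs(samples, sample_rate_hz):
    """Return (frequency_hz, energy) pairs from a naive DFT of `samples`."""
    n = len(samples)
    if n == 0:
        raise ValueError("need at least one sample")
    pairs = []
    for k in range(n // 2 + 1):  # bins from 0 Hz up to the Nyquist frequency
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(samples)
        )
        pairs.append((k * sample_rate_hz / n, abs(coeff) ** 2))
    return pairs


def dominant_frequency(samples, sample_rate_hz):
    """Frequency of the bin holding the most energy."""
    pairs = frequency_energy_pairs(samples, sample_rate_hz)
    return max(pairs, key=lambda pair: pair[1])[0]
```

Comparing these pairs against a baseline recorded while the machine was running normally is one way the digital side could tell what the sound changed to.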

(Image courtesy Aspinity)

Model Development

Even though this is a way of implementing a neural net far different from what “everyone else” is doing, the basic training approach starts out in a familiar fashion. The neural-net structure is provided to one of the classic training frameworks, where the model is developed. From there, the model can be downloaded to the Aspinity tools for further refinement into the Aspinity architecture. You may end up iterating the model a few times within the Aspinity tools or even by sending a modified network back up to the original framework for retraining.

So we have yet another twist on how to optimize systems that leverage machine learning. And, in this case, one that might make analog cool again. (Oh, what am I saying? Analog has always been cool; just hard. But, in this case, Aspinity has taken care of the hard bits.)

Sourcing credit:

Tom Doyle, CEO/founder, Aspinity

Brandon Rumberg, CTO/founder, Aspinity

Marcie Weinstein, PhD, Strategic and Technical Marketing, Aspinity
