At this week’s Xilinx Developers Forum (XDF) in San Jose, California, Xilinx announced “Vitis” – a new framework for developing applications that use Xilinx programmable logic devices such as FPGAs, ACAPs, MPSoCs, RFSoCs, and all the other acronyms they can come up with that refer to what we’d call “FPGAs.” With an abundance of grandiosity, the company proclaimed that Vitis was “five years and a total of 1,000 man-years in the making.” Whoa! OK. We don’t frequently encounter person-millennia metrics for new product announcements. Even if we assume that they started this in 1984 when Xilinx was founded, they would still have needed at least 28 engineers writing code full time for Vitis over that 35-year span.

This should be good!

Spoiler: engineering-eons aside, Vitis IS impressive, and more importantly, is likely to mature to be even more impressive over the coming years. But, before we jump into the “what” of Vitis, it is important to understand the “why.”

Modern FPGAs (yes, and ACAPs and all the rest) are possibly the most complex semiconductor devices ever created. The Versal device Xilinx was showing at XDF comprises a staggering 36 billion transistors. But transistor count alone doesn’t begin to capture the essence of the problem. These devices contain loads of programmable logic LUT fabric, thousands of high-performance arithmetic units, multiple 64-bit application processors, specialized adaptable vector processors, various types and sizes of memory resources, high-speed serial IOs, a plethora of hardened memory and standard interfaces… The list goes on and on.

With all of that hardware sitting there on your chip looking at you expectantly, one thing screams out: “How do I program this thing?” You’ll need to partition your application into parts that need to run in software versus hardware, on vector versus applications processors, with various allocations of memory resources, parallelism, pipelining, lions, tigers, bears. It’s terrifying!

For many application development teams, the most significant challenge is presented by the FPGA fabric itself. The pot of gold at the end of the programmable logic rainbow is massive parallelism that can generate spectacular throughput, miserly power consumption, and all kinds of knock-on benefits for your system performance and efficiency. But, once you’ve decided which parts of your system can benefit most from dedicated hardware acceleration, you face the sobering task of designing complex digital hardware, which requires engineers with ever-so-rare expertise in hardware description languages, synthesis, place and route, and other mystical arts.

FPGA companies have struggled with this problem for decades. The adoption of FPGA technology in the market has always been limited by the severe learning curve required to take advantage of it. Still today, the big FPGA companies have more engineers dedicated to the development of design tool software than to the development of the chips themselves. On top of that, they command vast armies of field-applications engineers ready to swoop in and help flailing customers figure out how to get their FPGA designs from perpetual “almost there” status to the finish line. Finally, there are large third-party ecosystems of consultants and add-on tool suppliers who make their living helping to make FPGA design just a little bit easier.

Now, these devices are moving into the rapidly expanding markets for compute acceleration, network acceleration, and storage acceleration from the edge to the cloud as well as in stand-alone embedded systems – in markets like 5G, data center, and automotive. Most of the development teams in those arenas do not have access to FPGA design expertise, and most of the applications are designed in the software domain, with an increasing reliance on AI functions.

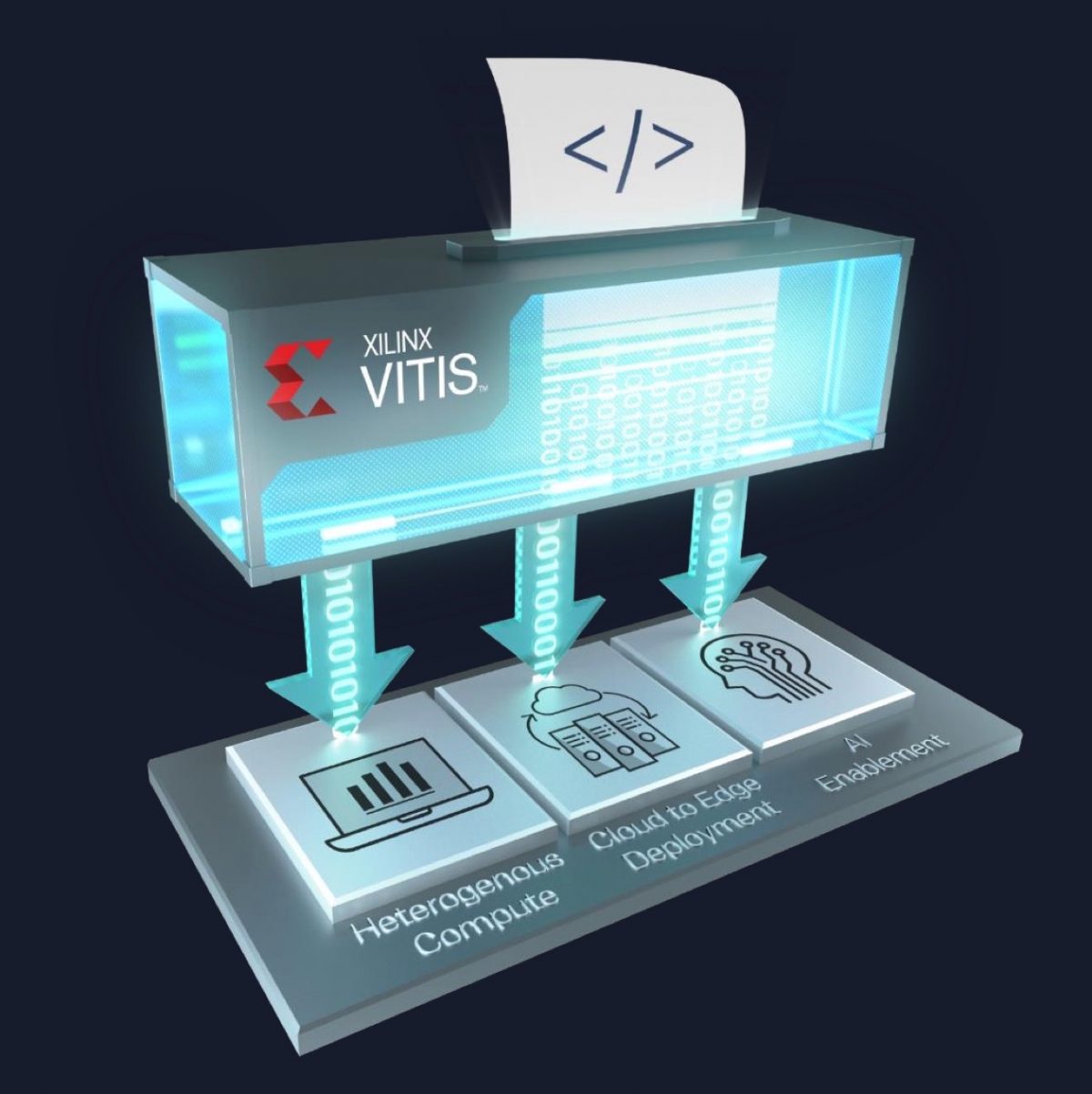

What this all means is that the world desperately needs development flows that allow domain-specific software (and AI) developers to harness the power of complex heterogeneous computing hardware that includes FPGA fabric – without having to understand the underlying details of that hardware, and without having to bring in FPGA experts to design the hardware acceleration bits.

Gratissimum Vitis! (Welcome, grapevine!)

Vitis is Xilinx’s answer to unified software development for complex, heterogeneous compute systems (that happen to contain Xilinx devices). Xilinx touts Vitis as “free” and “open.” And, while we agree with the “free” part – the “open” claim has at least a small asterisk, which we’ll explain in a bit. Vitis sits on top of Xilinx’s Vivado suite of tools for FPGA hardware design, providing the necessary utilities for software developers to work in their own choice of software IDE to develop and debug applications that will ultimately run on Xilinx-powered systems.

Vitis does not impose its own IDE (although it does offer one), which is a smart move on Xilinx’s part. Instead, it plugs into common software developer tools and frameworks and relies on a set of open source libraries to connect to the underlying Xilinx ecosystem. Xilinx says that the libraries, as well as the hardware IP, are all open source – a semi-bold move on the company’s part. The asterisk, of course, is that these are open-source libraries and IP blocks that were designed specifically for Xilinx hardware architectures. That means the system is “open” – as long as you’re designing with Xilinx hardware as part of the target platform. While it might technically/legally be possible to use Vitis to design for a competitor’s chips, or for an ASIC implementation – you’d likely have to do a lot of work to get reasonable results with that approach, and there are definitely other, better options.

The Vitis core development kit includes compilers, analyzers, and debuggers that sit on top of a runtime library. The runtime library is open source, and it manages the data movement between various computing domains and subsystems. The runtime library sits on top of a “base layer” Vitis target platform that includes a board and pre-programmed I/O.

Sitting on top of that core development kit is a third layer, which consists of eight libraries aimed at various application domains, containing a total of more than 400 pre-optimized open-source applications. The eight libraries are the Vitis Basic Linear Algebra Subprograms (BLAS) library, the Vitis Solver library, the Vitis Security library, the Vitis Vision library, the Vitis Data Compression library, the Vitis Quantitative Finance library, the Vitis Database library, and the Vitis AI library. The goal of all these pre-optimized libraries, of course, is to dramatically ease the workload of developers in these targeted application areas.

That third layer is where the most value is likely to be noticed by most high-level application developers. You should be able to grab one of those libraries and very quickly have a working prototype, or at least a rough sketch, of your application that takes advantage of the enormous computing power of these Xilinx devices, and that also provides you a roadmap to partitioning your own application between the various computation, memory, and data movement resources.

Behind the scenes of that third layer, however, is where all the real magic happens. Functions that are best implemented in FPGA fabric hardware, for example, are distributed as open source C/C++ blocks pre-optimized for Xilinx’s high-level-synthesis (HLS) compiler. This allows these functions to be flexibly implemented in hardware with no need for RTL development, debug, synthesis, place and route, and timing closure (OK, all these things still happen, but they occur in the background, where they are less likely to terrify innocent application developers).
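To make the HLS idea a little more concrete, here is a minimal sketch of the kind of C++ function an HLS compiler can turn into hardware. The kernel name `vadd`, its arguments, and the `run_vadd_testbench` helper are hypothetical and are not taken from any Vitis library. The `#pragma HLS PIPELINE` line is a hint aimed at the HLS tool; an ordinary C++ compiler ignores it (possibly with a warning), so the same source also runs as plain software.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical element-wise vector add, written in the plain C/C++ style
// that HLS tools accept. Each loop iteration is independent, which is what
// lets a hardware implementation overlap iterations in a pipeline.
extern "C" void vadd(const int* a, const int* b, int* out, int n) {
    for (int i = 0; i < n; ++i) {
#pragma HLS PIPELINE II=1
        out[i] = a[i] + b[i];
    }
}

// Software-only testbench (an illustrative helper, not part of any toolkit):
// runs the function on the CPU and checks every result.
void run_vadd_testbench(int n) {
    std::vector<int> a(static_cast<std::size_t>(n));
    std::vector<int> b(static_cast<std::size_t>(n));
    std::vector<int> out(static_cast<std::size_t>(n), 0);
    for (int i = 0; i < n; ++i) {
        a[static_cast<std::size_t>(i)] = i;
        b[static_cast<std::size_t>(i)] = 2 * i;
    }
    vadd(a.data(), b.data(), out.data(), n);
    for (int i = 0; i < n; ++i) {
        assert(out[static_cast<std::size_t>(i)] == 3 * i);
    }
}
```

Calling `run_vadd_testbench(16)` exercises the function as ordinary software, which is the point of the approach: the algorithm can be checked on a CPU long before anyone thinks about synthesis or timing closure.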

Finally, sitting on top of the third layer is a fourth layer called “Vitis AI,” aimed specifically at AI developers. Vitis AI integrates a domain-specific architecture (DSA), which configures Xilinx hardware to be optimized and programmed using standard AI frameworks such as TensorFlow, PyTorch, and Caffe. Vitis AI provides tools for the optimization of trained AI models. Typically, AI models are initially trained in a data center environment using high-precision floating point. When these models are deployed into the field to be used in inference, there is tremendous opportunity for performance, latency, and power optimization by parallelizing, quantizing, pruning the network, and performing other time-, space-, and power-saving optimizations. Vitis AI can facilitate those optimizations, which should be able to deliver orders-of-magnitude improvement in inference on Xilinx hardware.
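For readers who have not met quantization and pruning before, the sketch below shows the generic ideas in a few lines of C++. It is not Vitis AI’s implementation, whose internals are not described here; the type and function names (`QuantizedTensor`, `quantize_int8`, `prune_by_magnitude`, `dequantize`) are hypothetical. It assumes the simplest common scheme: symmetric 8-bit quantization with one scale per tensor, and pruning by zeroing weights below a magnitude threshold.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical container: real value is approximately values[i] * scale.
struct QuantizedTensor {
    std::vector<std::int8_t> values;
    float scale;
};

// Symmetric per-tensor quantization: map [-max_abs, max_abs] onto [-127, 127].
QuantizedTensor quantize_int8(const std::vector<float>& weights) {
    float max_abs = 0.0f;
    for (float w : weights) {
        max_abs = std::max(max_abs, std::fabs(w));
    }
    QuantizedTensor q;
    q.scale = (max_abs > 0.0f) ? max_abs / 127.0f : 1.0f;  // avoid divide-by-zero
    q.values.reserve(weights.size());
    for (float w : weights) {
        long r = std::lround(w / q.scale);
        r = std::max(-127L, std::min(127L, r));  // clamp to the int8 range used
        q.values.push_back(static_cast<std::int8_t>(r));
    }
    return q;
}

// Recover an approximate floating-point value from the 8-bit code.
float dequantize(const QuantizedTensor& q, std::size_t i) {
    return static_cast<float>(q.values[i]) * q.scale;
}

// Magnitude pruning: weights smaller than the threshold are set to zero,
// so hardware can skip the corresponding multiplications.
std::vector<float> prune_by_magnitude(std::vector<float> weights, float threshold) {
    assert(threshold >= 0.0f);
    for (float& w : weights) {
        if (std::fabs(w) < threshold) {
            w = 0.0f;
        }
    }
    return weights;
}
```

The appeal for FPGA-style hardware is that 8-bit integer arithmetic needs far less logic and memory bandwidth than 32-bit floating point, and zeroed weights are work that never has to be done. The price is a small approximation error (at most half of `scale` per weight in this scheme), which is why such tools are normally applied to an already-trained model and then checked for accuracy.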

Vitis is a huge step forward for Xilinx’s tool suite. It cleans up their (previously very confusing) tool offering into a much easier-to-understand external view, with clear entry points and familiar environments for the three “care-about” engineering types – hardware experts, software experts, and AI experts. Now that Xilinx is building silicon that requires all three of these groups to be intimately involved with creating applications, this clear, simple, user-oriented external view should provide an enduring, scalable infrastructure for the company to build upon.

Looking across the aisle at major competitors, Intel and NVidia immediately come to mind. Vitis, in a way, is analogous to what NVidia did years ago with CUDA, creating a programming framework that allows normal software engineers to take advantage of an unconventional hardware architecture. With CUDA, NVidia opened an entirely new market for GPUs as data center accelerators. Vitis could do for Xilinx what CUDA did for NVidia, making programmable logic more accessible for acceleration applications.

Intel, in a similar vein, has announced what they call “One API” – which, on the surface, appears to be very similar to Xilinx’s Vitis. Intel says One API “offers a common developer environment for CPUs, GPUs, and FPGAs (not just Intel) with the ability to tune and target for a specific architecture. One API also provides the ability to efficiently connect to XEON with UPI and in the future CXL.” Intel’s answer to Vitis AI is their distribution of the OpenVINO toolkit, which they say “enables deep learning inference and easy heterogeneous execution across multiple Intel platforms… providing implementations across cloud architectures to edge devices.”

Probably, partnership and “ecosystem” niceties aside, neither Xilinx nor Intel would shed any tears if NVidia suddenly vanished entirely in a virtual puff of parallel pixel processing. Vitis and One API should give Xilinx and Intel sturdy weapons to counter NVidia’s current dominance in acceleration applications. Against each other, however, more research is needed. Both companies are flaunting their “openness,” but clearly both are also creating software development ecosystems that assume that they are supplying the underlying hardware. It is possible/likely that each of these environments is extremely “sticky” (despite Intel’s assertion that they support non-Intel hardware targets), in that developing your application on one would make it very difficult to port to the other. Intel’s much broader portfolio of processing products (which also includes Xeon) would seem to give them the advantage in that scenario, but it is too early to assess the whole situation with the information available to date. It will be interesting to watch.
