I think most of us have come to appreciate how incredibly useful AI can be, and it’s getting more efficacious every day. The funny thing is that it’s becoming harder to remember a time before AI (much like younger people being unable to visualize a world without high-definition flat-screen TVs, smartphones, wireless connectivity, and the internet).

Although researchers from many fields—computer scientists, mathematicians, neuroscientists, cognitive scientists, and linguists—have been beavering away on various flavors of this technology in the background for yonks and yonks (that’s a lot of yonks), most regular folks remained blissfully unaware of what was going on in AI space (where no one can hear you scream) until the public launch of ChatGPT in November 2022. That’s just a little over three years ago as I pen these words—but my, how rapidly things have changed.

As an aside, many people are surprised to learn just how far back AI’s foundations go. For example, the first artificial neuron model was the McCulloch–Pitts neuron, proposed by neuroscientist Warren McCulloch and logician Walter Pitts in 1943. The first natural language chatbot, ELIZA, was developed in 1966 by Joseph Weizenbaum at MIT. ELIZA simulated a Rogerian psychotherapist, and people were surprisingly willing to treat it as if it really understood them. Then there was Terry Winograd’s natural-language-driven SHRDLU program in the late 1960s and early 1970s, which could understand and manipulate blocks in a virtual “blocks world”—primitive by today’s standards, perhaps, but astonishing for its time. However, we digress…

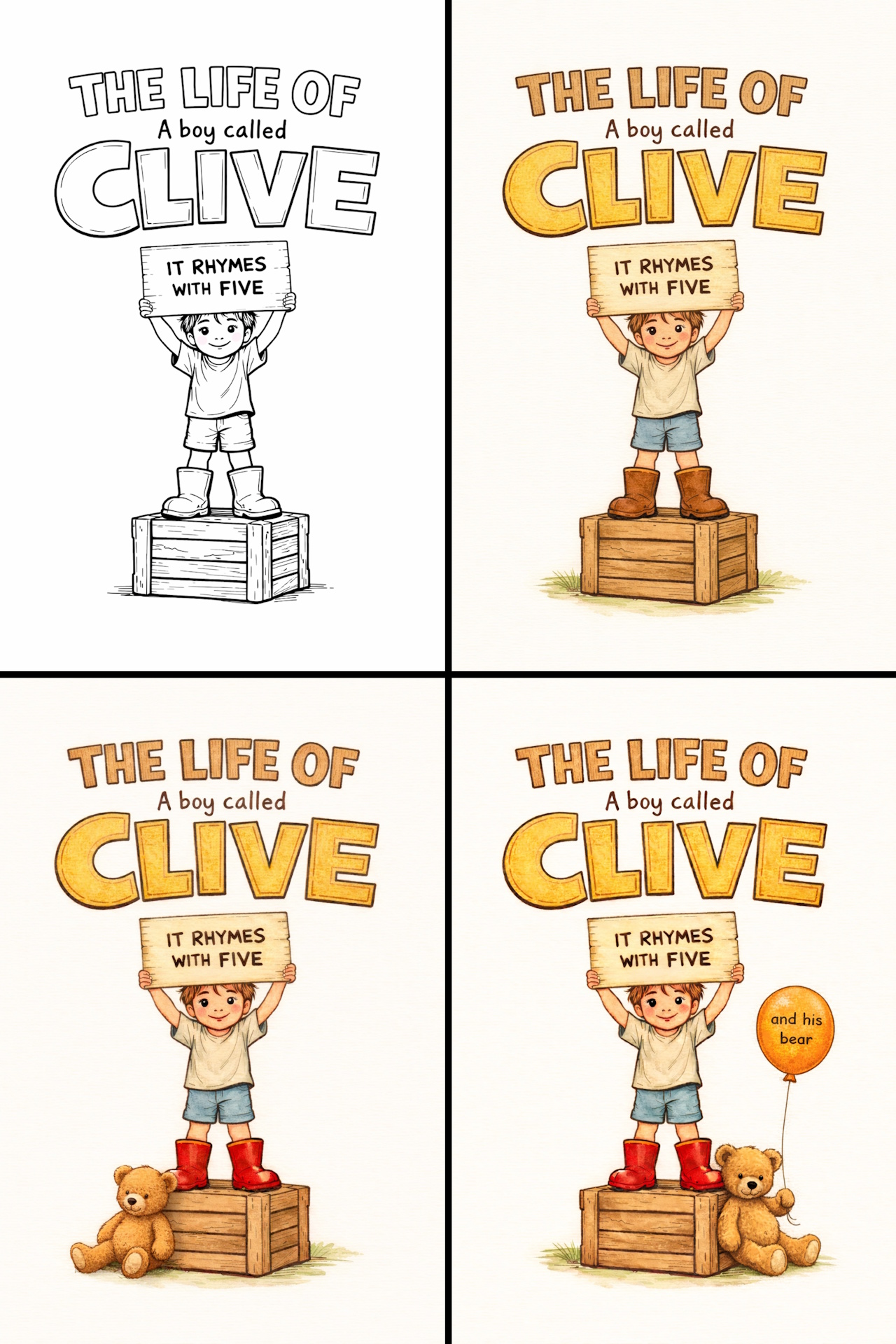

I wonder what the early pioneers would have thought if they could see us now. Just today, for example, I had a little dabble myself (it’s alright, no one was looking). As I’ve mentioned before, I’ve been working on a book called “The Life Of (a boy called) Clive” about growing up in England in the 1950s and 1960s. This book contains many vignettes about your humble narrator, many of which also feature my partner in crime, “Big Ted.”

I’m currently on the final edit. Ideally, I’d like to festoon the book with lots of little pencil sketches reminiscent of Winnie-the-Pooh books, but this level of illustration is beyond my feeble skills. At a minimum, I need something for the cover.

Since “Clive” is not a common name in America (many hear it as “Clyde,” so I often end up saying, “Like ‘Olive,’ but swap the ‘O’ for ‘C’”), I’ve long envisaged a picture of a six-year-old boy in shorts, a T-shirt, and Wellington boots, standing on a wooden packing crate, holding a cardboard sign saying, “It rhymes with five.”

I’ve not been able to find a graphic artist willing to sit down and talk to me about all this. So, earlier today, I described what I was thinking to ChatGPT and asked if it could rough out a line-art illustration. I must admit that I was really impressed by what it came up with (the upper-left corner of the composite image below). A little later, I was video-chatting with my chum Joe Farr, who hangs his hat in the UK (the wonders of today’s connected world). I emailed him a copy of my image, saying I was thinking of adding a bit of color. While we were still chatting, Joe ran this through ChatGPT at his end, resulting in the upper-right corner of the image below.

I know it sounds daft, but it simply hadn’t occurred to me to ask ChatGPT to do this. While we were still talking, I suggested to Joe that it might be a good idea to make the Wellington boots bright red to stand out a bit. I also mentioned that I’d been thinking of adding my teddy bear, Big Ted, into the image. Well, before you could say “supercalifragilisticexpialidocious,” the boots were red, and Big Ted had graced us with his presence (in the lower-left corner of the image above).

I’m never satisfied. Next, we asked ChatGPT to tweak the image to have Big Ted holding a string attached to an orange balloon bearing the words “and his bear.” The result is shown in the lower-right corner of the image above. At first, we were surprised to see that Big Ted had moved to the right of the wooden crate, but then we realized this better served the “top-to-bottom” and “left-to-right” flow of the text across the page (clever ChatGPT).

The bottom line is that it’s amazing that we can use AI to generate something this sophisticated in just a few minutes. Now I’m feeling bad that I didn’t employ a human graphic artist, but as I said, I’ve not been able to find one willing to sit down and discuss this with me.

The reason for my current waffling (yes, as always, of course there’s a reason) is that I was just chatting with Ananda Roy, who is Senior Product Manager for Low-Power Edge AI at Synaptics.

As you may recall from my previous columns—Reimagining How Humans Engage with Machines and Data (2022), BYOD or BYOM to Synaptics’ AI-Native Edge Compute Party (2024), and Next-Gen Multimodal GenAI Processors for the AIoT Edge (2025)—Synaptics has a storied history on the AI front. In fact, the company has been developing AI-enhanced technologies since before most people even knew there was such a thing as AI.

For decades, Synaptics has focused on how humans interact with machines—touch, voice, vision, and other sensory inputs. Along the way, the company quietly incorporated machine-learning techniques into its products to make devices smarter, more responsive, and more intuitive to use. Long before generative AI burst onto the public stage, Synaptics engineers were already using AI to interpret sensor data, improve human-machine interfaces, and enable devices to understand what users were trying to do.

More recently, Synaptics has taken things to the next level with its AI-native edge computing strategy. Rather than sending everything to the cloud, the company is building platforms that enable intelligent processing to happen locally on devices—everything from smart cameras and industrial systems to consumer electronics and IoT devices. At the heart of this effort is Synaptics’ Astra platform, which brings together heterogeneous processors, AI accelerators, and software tools designed specifically for the emerging world of multimodal AI at the edge.

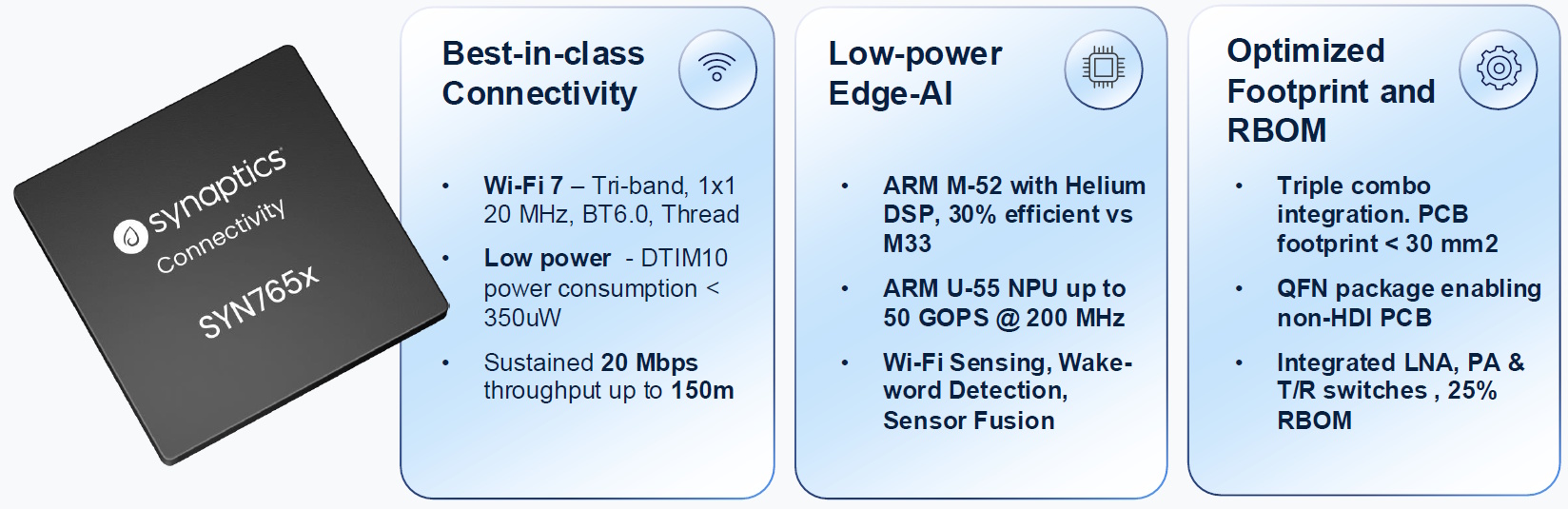

The reason for our chat was for Ananda to bring me up to date with Synaptics’ latest-and-greatest offering, the SYN765x. This little scamp (the SYN765x, not Ananda) is best described as a “Wi-Fi 7 AI-native connected MCU.” That may sound like a bit of a mouthful, but the concept is actually quite straightforward… and rather clever.

At its heart, the SYN765x combines three increasingly important components in modern edge systems: high-performance wireless connectivity, local AI compute, and a general-purpose microcontroller, all in a single compact chip.

The SYN765x Wi-Fi 7 AI-native connected MCU (Source: Synaptics)

Let’s start with the connectivity side of the story. The SYN765x supports tri-band Wi-Fi 7, along with Bluetooth 6.0 and an 802.15.4 radio for Thread and Zigbee. This means a single chip can talk to the home network, communicate with nearby devices over Bluetooth, and participate in emerging smart-home ecosystems built around Thread and Matter.

According to Synaptics, the device can sustain 20 Mbps throughput at distances exceeding 50 meters, which is more than enough for most smart-home or IoT scenarios—and it maintains that performance over longer ranges than many competing solutions.

Of course, connectivity is only part of the story. The “AI-native” part of the description refers to the on-chip compute subsystem. This includes a 200 MHz Arm Cortex-M52 MCU with Helium DSP extensions, along with a dedicated Arm Ethos-U55 neural-processing unit (NPU) capable of delivering up to 50 GOPS of AI performance.
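To get a feel for what 50 GOPS might mean in practice, here is a quick back-of-the-envelope sketch in Python. The 50 GOPS figure comes from the paragraph above; everything else is my own assumption for illustration. In particular, I am counting one multiply-accumulate (MAC) as two operations, which is a common convention but not necessarily how this peak figure was derived. The 10-million-MAC model and the 30% utilization figure are hypothetical. The result is a best-case lower bound, not a benchmark.

```python
PEAK_GOPS = 50.0  # peak NPU figure quoted in the article


def min_inference_ms(macs: float, utilization: float = 1.0,
                     peak_gops: float = PEAK_GOPS) -> float:
    """Best-case time (in milliseconds) for one inference pass.

    macs        -- multiply-accumulates per inference (hypothetical model size)
    utilization -- fraction of peak throughput actually achieved (assumption)
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in the range (0, 1]")
    ops = 2.0 * macs  # assumption: 1 MAC counted as 2 operations
    seconds = ops / (peak_gops * 1e9 * utilization)
    return seconds * 1e3


if __name__ == "__main__":
    model_macs = 10e6  # hypothetical small model: 10 million MACs
    print(f"At peak:            {min_inference_ms(model_macs):.3f} ms")
    print(f"At 30% utilization: {min_inference_ms(model_macs, 0.3):.3f} ms")
```

Real-world numbers will depend on memory bandwidth, operator support, and quantization, so treat this purely as a sanity check on orders of magnitude.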

In practice, this means developers can run powerful machine-learning models directly on the device rather than sending data to the cloud. Typical applications include sensor fusion, wake-word detection, and environmental sensing.

One particularly interesting example Ananda mentioned during our conversation was Wi-Fi sensing. The idea is for the system to analyze subtle changes in Wi-Fi signal patterns—what engineers call channel-state information—to detect, for example, presence or movement in a room. In other words, if the Wi-Fi signals are already bouncing around your home anyway, why not use them as a sensing mechanism? With the help of the on-chip NPU running machine-learning algorithms, the device can detect presence, motion, and potentially other environmental changes without requiring dedicated sensors.
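To make the Wi-Fi sensing idea a little more concrete, here is a deliberately simplistic Python sketch. It is not Synaptics’ method (the real thing uses machine-learning models running on the NPU). Instead, it swaps in a plain variance threshold on the amplitude of a single subcarrier: when nothing is moving, the amplitude stays fairly steady; when someone walks through the signal path, it fluctuates. The function name, window size, threshold, and sample values are all hypothetical.

```python
from statistics import pvariance


def motion_flags(amplitudes, window=5, threshold=0.5):
    """Flag each non-overlapping window whose amplitude variance exceeds
    the threshold. A crude stand-in for a trained model (assumption)."""
    flags = []
    for start in range(0, len(amplitudes) - window + 1, window):
        chunk = amplitudes[start:start + window]
        flags.append(pvariance(chunk) > threshold)
    return flags


if __name__ == "__main__":
    # Made-up amplitude samples for one subcarrier (arbitrary units)
    still = [10.0, 10.1, 9.9, 10.0, 10.1]   # empty room: steady signal
    moving = [10.0, 12.5, 8.0, 11.5, 7.5]   # someone walking: fluctuating
    print(motion_flags(still + moving))      # prints [False, True]
```

A practical system would look at many subcarriers at once and would need to cope with interference and slow environmental drift, which is where a learned model earns its keep over a fixed threshold like this one.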

Another key differentiator is the level of integration. In many connected appliances today, you’ll typically find a connectivity chip, a companion MCU for user-interface tasks, and sometimes additional processors for sensing or control. The SYN765x collapses all these functions into a single device, allowing designers to simplify their system architecture, reduce board space, and lower the overall bill of materials.

The chip also integrates RF front-end components such as the low-noise amplifier, power amplifier, and transmit/receive switching, and it comes in a QFN package that allows designers to use simpler four-layer PCBs rather than more expensive HDI boards.

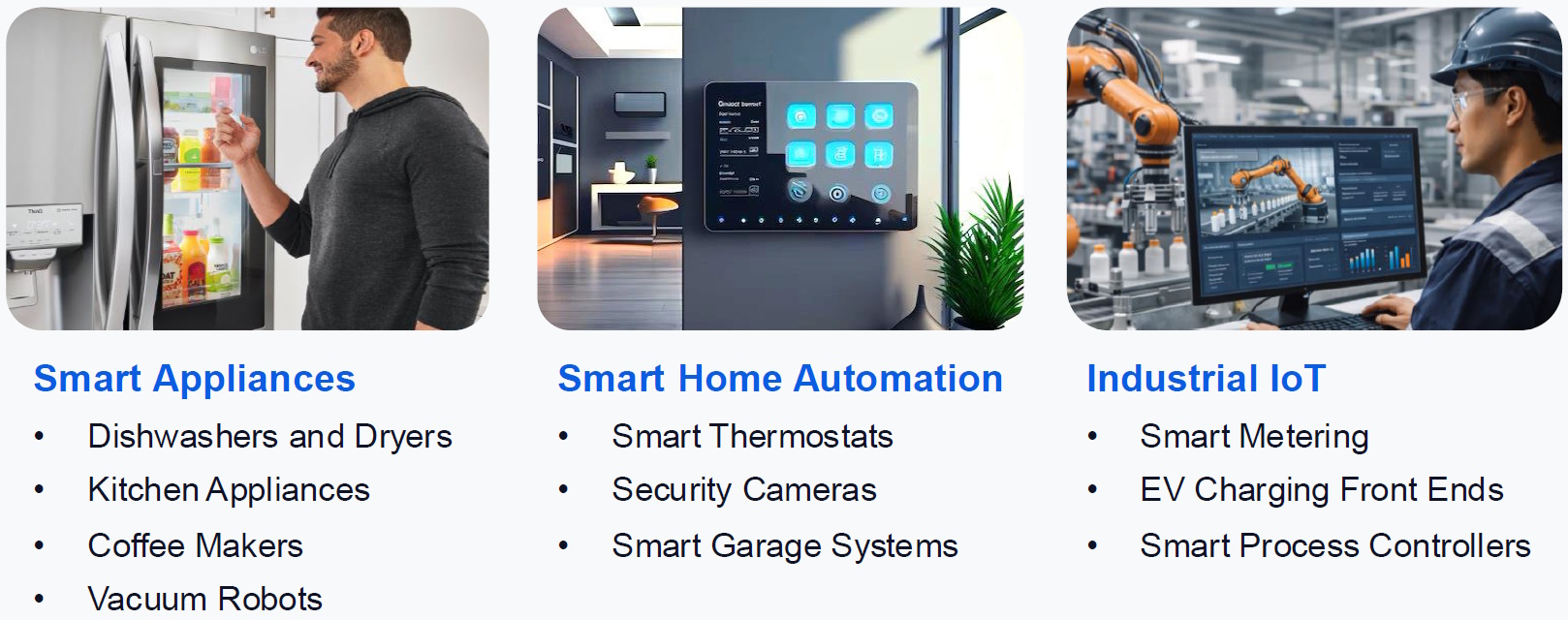

The target markets for this little rascal are exactly the sorts of systems we increasingly encounter in everyday life: smart appliances, home-automation devices, and industrial IoT equipment; think smart thermostats, security cameras, EV-charging front ends, and connected kitchen appliances.

Some target market examples for the SYN765x (Source: Synaptics)

Perhaps the most important point, however, is what this device represents in the broader evolution of edge AI. Instead of requiring expensive processors or cloud-based computation, the SYN765x enables local intelligence directly inside everyday connected devices. That means faster responses, improved privacy, reduced network traffic, and (best of all) practical AI capabilities in products that previously couldn’t justify the cost or power consumption.

In short, the SYN765x provides yet one more example of how AI is steadily migrating outward, from massive cloud servers to the tiny embedded systems that quietly run the devices around us.

It’s really not so long ago that, if you wanted to do anything remotely “intelligent,” you had to send data up to the cloud, wait for some distant server to chew on it, and then wait again for the answer to come back. Increasingly, however, that intelligence is moving closer to where the data is actually generated—in our homes, our appliances, our factories, and our cities.

And that’s the real story here. Devices like the SYN765x aren’t just “adding a bit of AI to the edge.” Instead, they’re helping redefine what the edge actually is. When even a humble thermostat, security camera, or washing machine can run its own AI models, the line between “smart device” and “intelligent system” begins to blur.

If the AI pioneers of the 1940s, 1950s, 1960s, and 1970s could see us now, I suspect they would be amazed—not just that AI works, but that it’s becoming so ubiquitous and finding its way into everything. Even, perhaps, into the image of a little boy in Wellington boots standing on a crate next to a teddy bear holding an orange balloon.

I’m afraid Big Ted is no longer with us, but I have a feeling he’d approve.

Postscript: I just had a FaceTime call with my mum and asked her what happened to Big Ted. She says he’s busy travelling the world and exploring things (apparently, she still receives occasional postcards). Well, that certainly sounds like him.