I was just chatting with the folks at Synaptics about their recently launched FlexSense family of teeny-tiny multi-modal smart sensor processors. Actually, I was thinking about using “Teeny-Tiny Multi-Modal Smart Sensor Processors” as the title for this column, but I saw “Reimagining How Humans Engage with Machines and Data” on some of their materials and I ended up flipping a metaphorical coin. (I defy anyone to tell me that this opening paragraph won’t bring tears of joy to the bean counters who stride the corridors of the SEO domain, especially those interested in human-machine interfaces (HMIs), the Internet of Things (IoT), and artificial intelligence (AI) on the edge, Ha!)

Just to set the scene and remind ourselves how we came to be here, Synaptics was founded in 1986 by Federico Faggin and Carver Andress Mead, both of whom are legends in their own lifetimes (thus far, I’ve managed only to become a legend in my own lunchtime, but I’m striving for greater things).

As you are doubtless aware, Italian-American physicist, engineer, inventor, and entrepreneur Federico Faggin is best known for his role in designing the first commercial microprocessor, the Intel 4004 (actually, Faggin headed the design team along with Ted Hoff, who focused on the architecture, and Stan Mazor, who wrote the software). Meanwhile, Carver Mead is an American scientist and engineer who is a pioneer of modern microelectronics, making contributions to the development and design of semiconductors, digital chips, and silicon compilers, to name but a few. In the 1980s, Carver turned his attention to the electronic modeling of human neurology and biology, creating what are now known as “neuromorphic electronic systems.”

Carver and Federico’s interest in, and research on, neural networks and the use of transistors on chips to build pattern recognition products are what led them to found Synaptics, whose name is a portmanteau of “synapse” and “electronics.” In 1991, Synaptics patented a refined “winner take all” circuit for teaching neural networks how to recognize patterns and images. In 1992, Carver and Federico used these pattern recognition techniques to create the world’s first touchpad. As we read on Wikipedia:

A touchpad or trackpad is a pointing device featuring a tactile sensor, a specialized surface that can translate the motion and position of a user’s fingers to a relative position on the operating system that is made output to the screen. Touchpads are a common feature of laptop computers as opposed to using a mouse on a desktop, and are also used as a substitute for a mouse where desk space is scarce. Because they vary in size, they can also be found on personal digital assistants (PDAs) and some portable media players. Wireless touchpads are also available as detached accessories.

Following its founding, in addition to the touchpad, the folks at Synaptics invented the click wheel on the classic iPod, the touch sensors on Android phones, touch and display driver integration (TDDI) chips, and biometric fingerprint sensors. Over the years, they’ve expanded their touch (I couldn’t help myself) into all aspects of how humans communicate with machines, and vice versa.

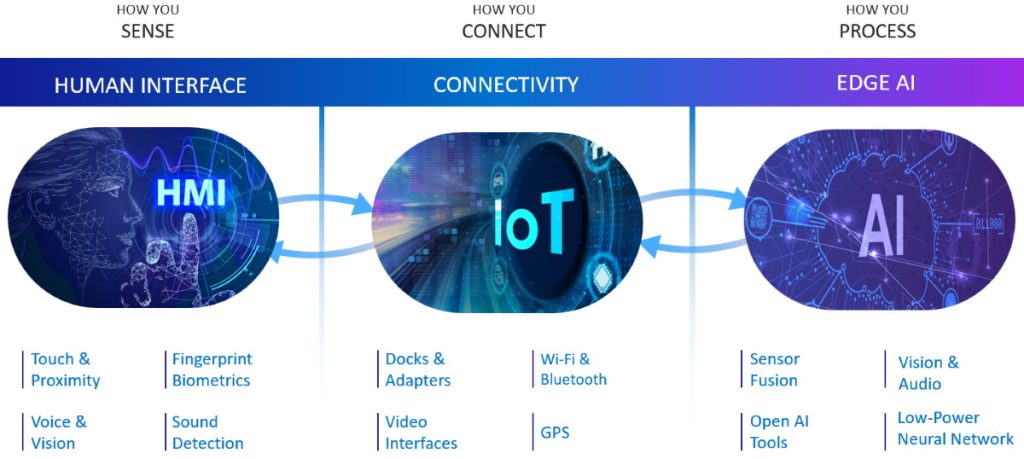

If you want to communicate with a machine, the folks at Synaptics have you covered (Image source: Synaptics)

Before we proceed, I just wanted to remind you about my July 2020 column, “DSP Group Dives into the Hearables Market.” At that time, more than a billion products boasted having the DSP Group’s technology inside. In August 2021, we heard the news, “Synaptics to Acquire DSP Group, Expanding Leadership in Low Power AI Technology.” This was followed by my October 2021 column, “Meet the Tahiti ANC+ENC+WoV SoC Solution,” in which I waffled on about the evolution of HMIs, starting with the technologies featured in the 1936 incarnation of Flash Gordon and the 1939 introduction of Buck Rogers, transitioning to Synaptics’ interest in high-end audio processing for headsets, leading to its introduction of the AS33970 Tahiti SoC (Phew!).

We left that latter column with me drooling with desire over the thought of my flaunting a 21st century Tahiti-powered headset featuring no-boom microphones, thereby allowing me to conduct business in a crowded public place without looking out of place, as it were (I’m just dropping this into the conversation to remind the guys and gals at Synaptics that they said they would send a pair of these bodacious beauties for me to review when they become available LOL).

Moving on… In my recent chat with the folks at Synaptics, they were explaining how they are expanding their footprint in all sorts of areas. One of the things that’s of particular interest to them is the explosion of devices that need to be intuitively interactive, which is best achieved using multiple sensors combined with the intelligence to understand what they are sensing.

In addition to home automation devices like thermostats, and automotive applications like touchscreens and knobs and buttons, other examples are controllers for gaming, augmented reality (AR), and virtual reality (VR), along with wearables like true wireless stereo (TWS) earbuds. All of these devices would be able to serve us better if they were intuitively interactive, which means that they don’t just need to know that the user is touching them; rather, they need to know how the user is touching them.

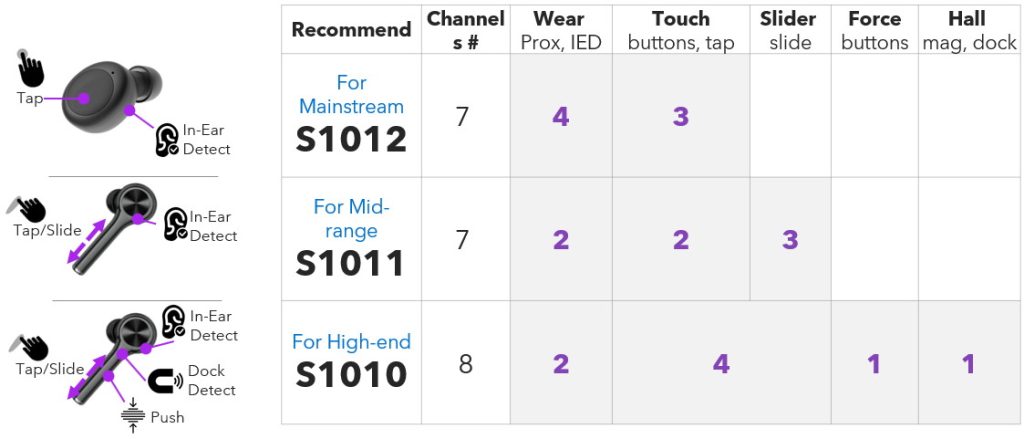

Example wearable interactions (Image source: Synaptics)

I must admit that I hadn’t given this much thought, but now that I do think about it, it makes sense. In the case of something like TWS earbuds, it would be useful to be able to detect touch (e.g., single tap = pause/play, double tap = next track, triple tap = previous track) and slide (e.g., swipe down = volume down, swipe up = volume up), to have in-ear detection that works on all shapes of ears (so you can save power when the buds are simply sitting on a table, for example), and to detect whether the buds are in their dock being charged (and also whether the dock lid is closed or has just been opened, the latter case indicating that this might be a good time to start syncing with the host device so as to be ready for action when called upon).
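Just to make those example interactions a little more concrete, here is a minimal Python sketch of how recognized gestures might be mapped to actions. Everything in it is my own invention for illustration: the gesture names, the `GESTURE_ACTIONS` table, and the `handle_gesture` function are hypothetical and are not taken from any Synaptics API. Ignoring gestures while the bud is out of the ear is likewise just an assumed policy.

```python
from typing import Optional

# Hypothetical gesture-to-action table mirroring the examples in the text.
GESTURE_ACTIONS = {
    "single_tap": "play_pause",
    "double_tap": "next_track",
    "triple_tap": "previous_track",
    "swipe_up": "volume_up",
    "swipe_down": "volume_down",
}


def handle_gesture(gesture: str, in_ear: bool) -> Optional[str]:
    """Return the action for a gesture, or None if it should be ignored.

    Assumed policy: gestures only count while the earbud is in the ear,
    so handling a bud on the table does not skip tracks by accident.
    """
    if not in_ear:
        return None
    # Unknown gestures fall through to None rather than raising an error.
    return GESTURE_ACTIONS.get(gesture)
```

A table like this keeps the mapping in one place, so changing what a triple tap does would be a one-line edit rather than a hunt through nested conditionals.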

As we’ve already noted, the folks at Synaptics are famous for their capacitive touch technology. As they told me, the biggest benefits of capacitive sensing are responsiveness and low latency, which is why people love things like touch screens. Paradoxically, the biggest disadvantage of capacitive sensing is that it can be too responsive; that is, responding when you didn’t actually want it to respond. (This reminds me of my dear old mother, whose memory is so sharp that she can remember things that haven’t even happened yet!)

What this means is that the system needs to be able to reliably distinguish a real interaction from a fake interaction. A fake interaction is known as a mistouch (verb [transitive] “to touch wrongly or by mistake”), while dealing with such an event is officially referred to as “accidental contact mitigation.”

The situation is further complicated by the fact that things like capacitive sensors are affected by environmental conditions like temperature, humidity, and moisture. This means that — in addition to being small, lightweight, rugged, and parsimonious with power — sensing systems need to be able to employ multiple sensors of different types, varying the “weight” (perhaps “significance” is a better word) accorded to the data from each sensor depending on situational awareness.
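One way to picture this idea of varying the weight accorded to each sensor is a simple weighted vote. The Python sketch below is only that, a sketch under stated assumptions: the sensor names, the `WEIGHTS` values, the "dry" and "wet" conditions, and the `is_real_touch` function are all hypothetical, and I am not suggesting this is how FlexSense does it internally.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Reading:
    name: str          # sensor label, e.g. "capacitive" (hypothetical)
    confidence: float  # 0.0 (no touch seen) to 1.0 (definite touch)


# Hypothetical per-condition weights (illustrative values only).
# Assumption: moisture makes the capacitive channel less trustworthy,
# so a second sensor type gets more say when conditions are wet.
WEIGHTS = {
    "dry": {"capacitive": 0.7, "inductive": 0.3},
    "wet": {"capacitive": 0.2, "inductive": 0.8},
}


def is_real_touch(readings: Iterable[Reading], condition: str,
                  threshold: float = 0.5) -> bool:
    """Fuse sensor confidences using weights chosen for the condition."""
    readings = list(readings)
    weights = WEIGHTS[condition]
    total = sum(weights.get(r.name, 0.0) for r in readings)
    if total == 0:
        return False  # no sensor we know how to weigh
    score = sum(weights.get(r.name, 0.0) * r.confidence
                for r in readings) / total
    return score >= threshold
```

For example, a water droplet might light up the capacitive channel (confidence 0.9) while the second sensor sees almost nothing (0.1). With the "dry" weights, that would be accepted as a touch; with the "wet" weights, it would be rejected as a mistouch.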

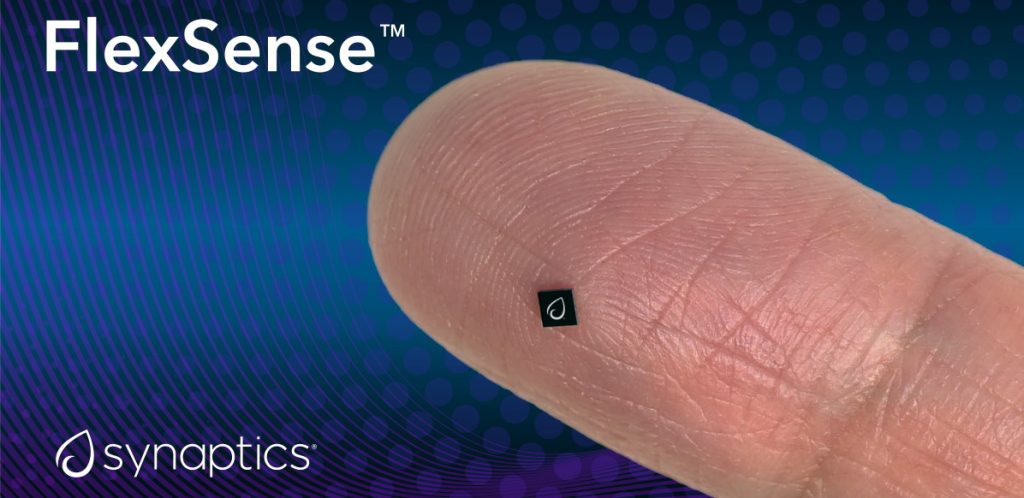

All of this leads us to the latest Synaptics offerings — which are available for use by OEMs (original equipment manufacturers) and ODMs (original design manufacturers) — in the form of FlexSense integrated sensor processors (I feel this deserves a “Tra la!”). These are the teeny-tiny multi-modal smart sensor processors referred to in the opening paragraph to this column. “How small?” you cry. Well, feast your orbs on this one, but try not to sneeze lest you blow it away:

FlexSense integrated sensor processor (black) sitting on human finger (pink) (Image source: Synaptics)

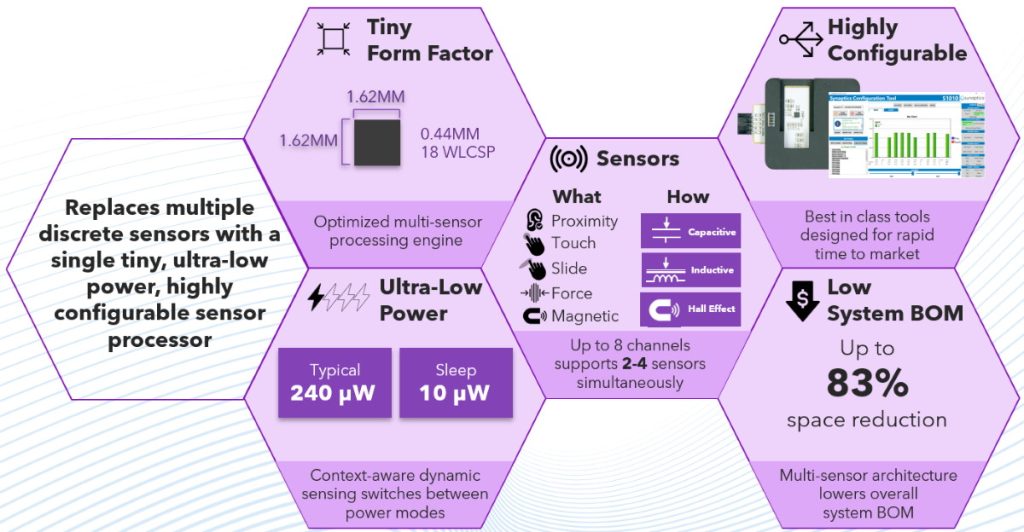

In addition to an ultra-low-power 16-bit processor, this little scamp boasts an integrated temperature sensor, integrated Hall-effect (magnetic) sensors, multiple analog hardware engines to accept input from external sensors, an integrated power management controller, an I2C interface for communicating with a host system, and a bunch of programmable general-purpose input/outputs (GPIOs). (Different family members boast different features and functions as noted below.)

What all this means in a crunchy nutshell is that a FlexSense integrated sensor processor supports a wide range of sensor inputs. It can perform low-power, low-latency signal conditioning and conversion to reduce noise and other artifacts associated with the sensor signals; it can dynamically optimize power by turning sensor channels on and off as required; it can perform automatic environment calibration to compensate for drift; and it can intelligently analyze the data from multiple sensor inputs, perform sensor fusion, and send the results to the host.
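If you are wondering what compensating for drift might look like in practice, one common textbook approach is to track a slowly moving baseline and report a touch only when the raw signal jumps well above it. The Python sketch below illustrates that general technique. The `BaselineTracker` class, its parameter names, and its default values are my own assumptions for illustration; the source doesn't describe the actual FlexSense calibration algorithm.

```python
class BaselineTracker:
    """Track a slowly drifting baseline for one sensor channel.

    Hypothetical illustration: the baseline follows the raw signal with
    an exponential moving average while the channel is idle, and it is
    frozen while a touch is in progress.
    """

    def __init__(self, initial: float, alpha: float = 0.01,
                 touch_delta: float = 50.0) -> None:
        self.baseline = float(initial)
        self.alpha = alpha              # adaptation rate (0 < alpha <= 1)
        self.touch_delta = touch_delta  # jump above baseline that counts

    def update(self, raw: float) -> bool:
        """Feed one raw sample; return True if it looks like a touch."""
        delta = raw - self.baseline
        touched = delta > self.touch_delta
        if not touched:
            # Idle: nudge the baseline toward the raw value so that slow
            # changes (temperature, humidity) are absorbed over time.
            self.baseline += self.alpha * delta
        return touched
```

The design choice here is that slow environmental changes get absorbed into the baseline, while a fast, large change is treated as an event. Freezing the baseline during a touch stops a long press from being "calibrated away."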

FlexSense integrated sensor processors in a crunchy nutshell (Image source: Synaptics)

For developers, the FlexSense family accelerates time-to-market (TTM) by providing a simple, out-of-the-box solution with a single processor and up to eight analog input channels. As was noted earlier, some FlexSense processor versions can also include a magnetic field sensor to enable Hall-effect sensing integrated directly on the chip. This can be used for dock detection, contactless buttons, and other applications. Consider the FlexSense TWS product family as illustrated below:

The FlexSense TWS product family (Image source: Synaptics)

The S1012 targets mainstream deployments, the S1011 is of interest for mid-range devices, and the S1010 is intended for high-end implementations. These durable, reliable, and robust sensor processors are sampling now, with mass production coming in Q4 CY2022.

If you are a developer who is raring to get started, an S1010 evaluation kit (EVK) is available on request. In addition to featuring touch, slider, and flick-slider gestures, along with inductive and Hall-effect sensors, this little beauty is accompanied by a Synaptics Configuration Tool that allows signals from the various channels to be viewed, thresholds to be updated, and features to be enabled and disabled.

I don’t know about you, but I’m envisioning a future in which sensors are embedded in pretty much anything with which humans come into contact, from home appliances (and doors and furniture) to tools to automobiles to… everything. What say you? Do you have any thoughts you’d care to share with the rest of us?

As always, great post.

Thanks

Hi Mihir — thank you for your kind words 🙂