“The benchmark of quality I go for is pretty high.” – Jimmy Page

By now it will have escaped no one’s attention that the fastest computer in the entire world is powered by… ARM processors.

Yup, the little Acorn that could is all growed up now and playing with the big boys. The biggest, in fact. The same CPU architecture that powered your old iPod is now plotting global takeover. Or making weather predictions. Or curing cancer, or whatever it is the fastest supercomputers do. An impressive career arc.

What’s also remarkable about ARM’s ascendancy is that it was so sudden. In the 25 years or so that Top500 has been keeping track of the world’s fastest computers, there were never any ARM-based machines whatsoever until two years ago, and even now there are only two (in 1st and 244th place) out of 500.

It’s safe to say this is not what ARM was originally designed for. It’s also safe to say that the folks at ARM are thrilled by this turn of events, coming, as it does, on the heels of Apple’s announcement that it’s also switching its high-end machines to ARM. And away from Intel’s x86.

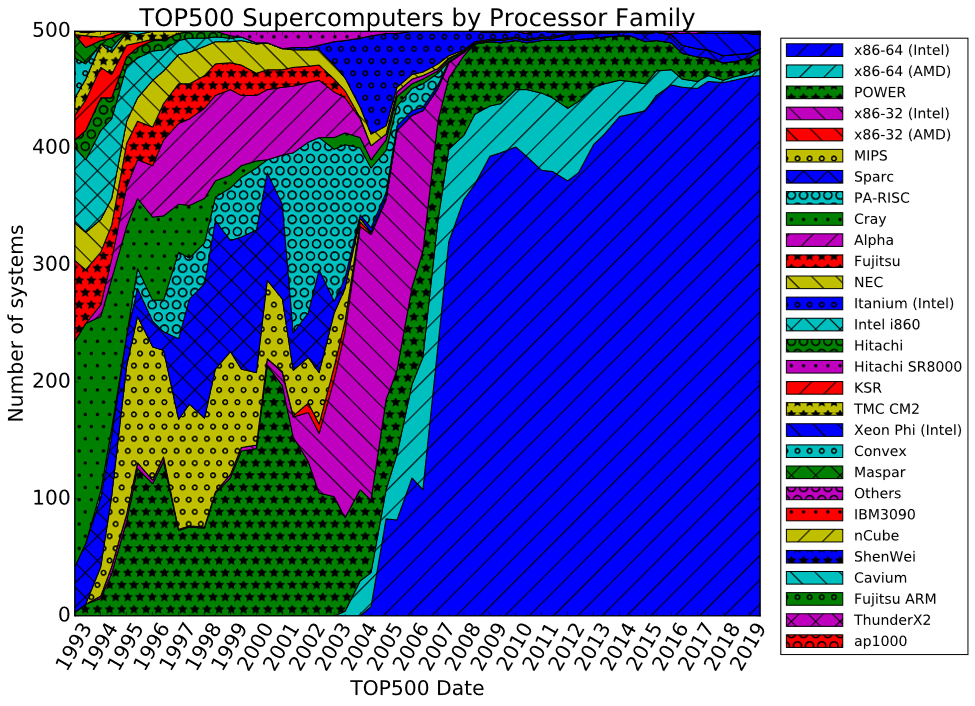

Speaking of which, x86 has utterly, completely, and wholly dominated the supercomputer rankings for most of living memory. It’s not even close. Until last month’s update, a whopping 480 of the top 500 supercomputers were x86-based, and the overwhelming majority of those were Intel chips, not AMD’s. As the graph shows, ARM barely even makes it onto the legend.

It’s almost as if computer history were divided into two parts. The most recent dozen years are all Intel, all the time. But before that there was diversity. There was change. There was competition. I miss those days.

Looking at the first dozen years from the mid-90s onward, the mix is so jumbled that it’s hard to pick out the winners from the losers. (“Loser” here being defined as merely the 100th fastest computer in the entire world.) We’ve got SPARC chips, we’ve got Alpha chips, we’ve got PowerPC chips, we’ve got MIPS chips. We’ve even got Cray, Convex, and Maspar machines. The lead changed every time the chart got updated, and the overall mixture of architectures changed constantly. Oddsmakers would’ve had a tough time picking the winners and losers in the CPU sweepstakes back then. Just ask any VC on Sand Hill Road.

We can tease a few interesting trends out of this data. IBM’s Power family (green stars on the chart) started out small in the early 1990s, then rocketed up to the top spot 15 years later. Indeed, the #2 and #3 machines today are both IBM Power System AC922s. Power has held the top spot, or nearly the top spot, for more than a decade. That’s pretty incredible staying power, given how much the goalposts keep moving, and how so many other architectures have risen and fallen over a similar span of time.

It’s also surprising how few of the top machines are custom designed. Almost all of them are based on well-known CPU architectures. Most even use actual, commercial chips that you and I can buy. That big blue chunk of the chart is almost all Xeons, Pentiums, and Athlons. Apart from Cray and a few short-lived interlopers, they’re all “normal” processors with part numbers and price tags.

Which is encouraging. It’s good to know that you, too, can buy the very same technology that powers massive room-sized supercomputers. Just add water.

That’s especially true now, with Intel’s utter dominance of all but the top few spots. It’s got the 99th percentile all sewn up. What’s the reason for that dominance, and why the sudden shift around 2006 or so?

In a word: commerce. Twenty years ago, there was fierce competition among RISC and CISC computer vendors, as the Top500 results demonstrate. It was anybody’s game. Most industry analysts, pundits, and engineers figured that Intel’s days were over, and that RISC (of some flavor) would usher in a whole new era. After all, the x86 dated from the Late Cretaceous period of CPU design. How could it possibly compete with the newer, more modern, more evolved product families? Even RISC might not be aggressive enough; maybe VLIW is the way to go.

But that very competition led to fragmentation. Alpha, MIPS, SPARC, and all the others each sold to a small niche of users. Meanwhile, Intel was selling x86 processors by the truckload to every PC maker on the planet while backing up forklifts full of cash into its bank vaults. The x86 franchise was an obscenely lucrative cash cow, even if some engineers ridiculed it as outmoded technology. Intel sold more processors than all the other vendors put together. And CPU development is expensive. Very expensive. One by one, the boutique CPU makers (Sun, Digital, Silicon Graphics, Intergraph, et al.) gave up on their in-house designs and started buying commercial processors, often from Intel. And, one by one, those companies failed anyway. PCs running Windows on x86 were ubiquitous and cheap. Artisanal Unix workstations running proprietary RISC processors were expensive – and not much faster than a PC anyway. The full-custom route just didn’t add up.

The huge surge in x86-based supercomputers starting around 2005 wasn’t because Intel chips got a lot faster (although they did). It’s because most of the other competitors defaulted. They left an empty field for Intel to dominate. If the brains behind Alpha (to pick just one example) had had Intel levels of R&D money to work with, they probably would have stayed near the top of the performance heap for as long as they cared to. But that’s not reality or how the game is played. If my grandmother had wheels she’d be a wagon.

Now the same story is playing out again, but in ARM’s favor. ARM has the volume lead, outselling even Intel by orders of magnitude in units shipped. And, although ARM collects only a small royalty on each processor, not the entire purchase price, it also doesn’t have the crushing overhead costs that a manufacturer like Intel carries. ARM’s volume encourages a third-party software market to flourish, and that fuels a virtuous feedback loop that makes ARM’s architecture even more popular. There’s nothing inherently fast about ARM’s architecture – it definitely wasn’t designed for supercomputers – but there’s nothing wrong with it, either. If the x86 can hold the world’s performance lead, any CPU can. All it takes is time and volume.