“Your car goes where your eyes go.” – Garth Stein

A brand-new survey of autonomous-vehicle designers tells an interesting story about their progress, their concerns, and their outlook for fully autonomous vehicles.

If I may offer a brief summary: they’re scared $#!%-less.

The survey was conducted by Forrester Consulting and paid for by microprocessor company ARM, so we can assume that ARM had a hand in designing the survey and vetting its results. ARM has had its eye on the automotive business for a while, and autonomous vehicles (AVs) should be a big growth market, so there’s some natural interest there. For its part, Forrester distributed questionnaires to 54 “global AV practitioners” (and personally interviewed five of them), ranging from CEOs and CTOs, to vice presidents, directors, managers, and lowly engineers.

Fifty-four respondents isn’t a big group for a survey – that’s only about four elevator-loads – so the results could be skewed a bit by an oddball response here or there. On the other hand, this isn’t Forrester’s first rodeo; the company knows how to select representative sample groups and how to compile the statistics. The respondents were pretty evenly distributed by geography (North America, Asia, etc.) and by company size, from specialized technology firms all the way to the big automakers you’d expect.

Not surprisingly, their concerns were all over the map, but the top-ranked worry was reliability. When asked to list the criteria needed for a production-ready design, nearly half of them (48%, or 26 respondents out of 54) identified “creating a reliable system” as their #1 concern. In third place, with a 37% response, was “dealing with functional safety needs.” Another 37% picked “cyberthreats.” In all, three of the top four concerns had to do with safety and reliability. Issues of cost, performance, power efficiency, weight, and space constraints ranked much further down.
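To put a rough number on how far a 54-person sample can wobble, here is a minimal Python sketch that computes the margin of error on the 48% figure (26 of 54). It is not from the Forrester report. It assumes a simple random sample and a 95% confidence level using the normal approximation (z = 1.96), which a hand-picked panel of practitioners may not satisfy, and the function name is made up for illustration.

```python
import math


def proportion_margin_of_error(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Return (observed share, margin of error) for a survey proportion.

    Assumption: simple random sampling and the normal (Wald) approximation;
    z = 1.96 corresponds to a 95% confidence level.
    """
    p = successes / n
    margin = z * math.sqrt(p * (1 - p) / n)
    return p, margin


# 26 of 54 respondents ranked "creating a reliable system" first.
p, margin = proportion_margin_of_error(26, 54)
print(f"share: {p:.1%}, margin of error: +/-{margin:.1%}")
```

Under those assumptions the margin works out to roughly 13 percentage points either way, so "nearly half" could plausibly be anywhere from about a third to about three-fifths of the wider population.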

For consumers, that’s probably a good sign that developers are focused on their safety. For automakers, it means that developers’ biggest anxiety is just getting the sucker to work, never mind what it costs, weighs, or looks like. Those are the marks of a very early-stage design, where basic feasibility is still in question. Once you get the machine to work, you can worry about cost-optimizing it. For now, developers just want the box to hold together and not tip over.

Some follow-up questions of my own confirmed this. Robert Day, Director of Automotive Solutions & Platforms at ARM, told me that the developers he’s met with are still running prototypes to test what/how many sensors they need, and then getting the software to interpret the sensor information and make the right decisions. Very few are preparing designs for production; they’re still figuring out what’s needed, how to connect everything, and how to program it all. It’s still early days.

Sensors, not processors, were widely cited as a bottleneck. “Many of the engineers we spoke to during the qualitative interview phase said that the sensor technology needed for production-ready AV systems is still nascent,” reports Forrester. One of the five developers interviewed said, “…sensor technology [for full automation] doesn’t exist. Computing horsepower and algorithms don’t exist yet.”

No less an authority than the CTO of Ford Motor Company agrees. In an unrelated interview, he threw shade on the current crop of AV systems, citing a lack of sensors and data. “That’s why these vehicles that don’t have LIDAR, that don’t have advanced radar, that haven’t captured a 3D map, are not self-driving vehicles. Let me just really emphasize that. They’re consumer vehicles with really good driver-assist technology.”

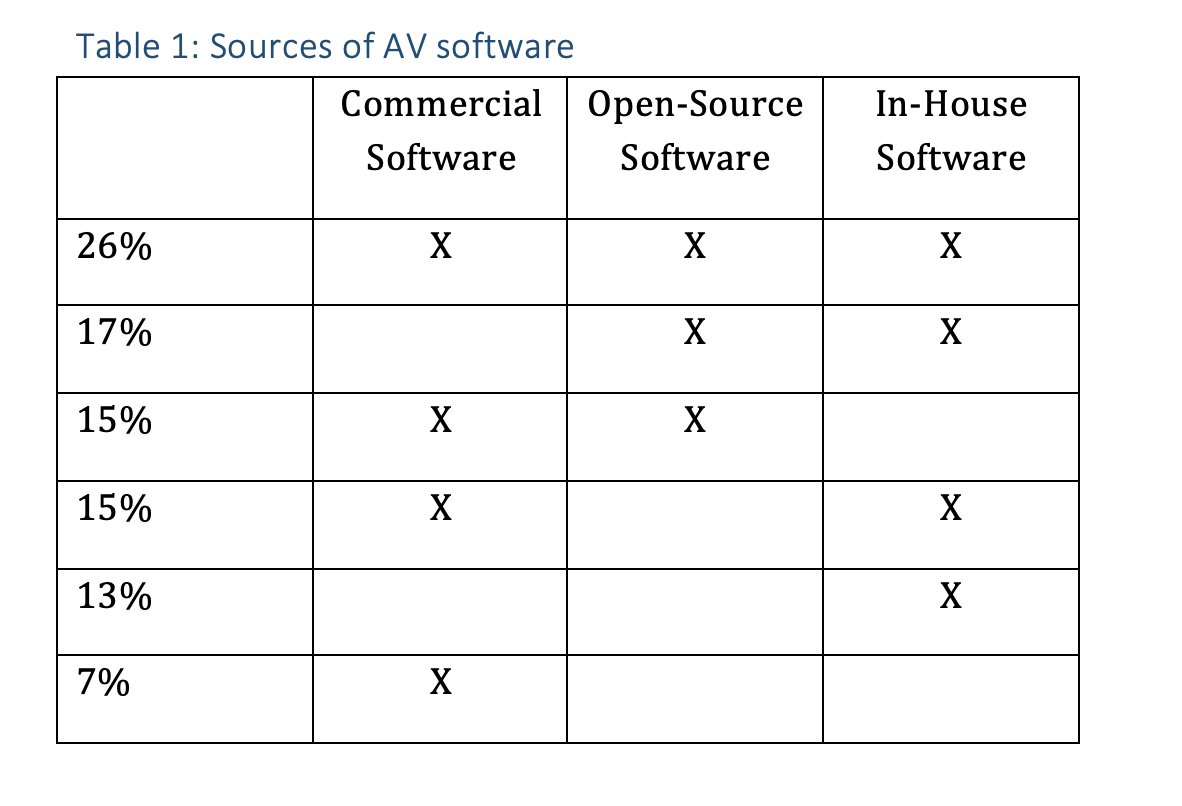

Where does the software for self-driving vehicles come from? Just about anywhere, according to Forrester’s subjects. About one-quarter of respondents said they use a combination of commercial software, open-source software, and in-house software (see Table 1). Others had different mixes, with some eschewing commercial code, some bypassing open-source, and a few that apparently develop no code in-house.

The survey doesn’t specify what open-source software developers are using, but Linux would be a good guess.

The AV angst extends beyond the usual prototyping and development problems. Once the system works, who decides when you’re allowed to ship it? Automobile safety is heavily regulated in all parts of the world, but what agency determines when a self-driving system is ready for commercial release? What regulatory hurdles do you have to cross?

Nobody knows, and that’s a lurking problem in developers’ minds. One interviewee said that national safety standards “are completely individualized. There are no centralized standards or methods and tools to follow to validate the systems.” Another complained that “NIST is nowhere near the capability of certifying this stuff themselves. They’re looking to the industry to develop best practices that evolve into standards over time.” In other words, you guys make up your own standards and the legislators will think about adopting them.

When standards do exist, they’re often irrelevant or out of date. “Everyone wants to have their algorithm certified according to the [ISO-26262] standard — [but] that standard does not contain anything about deep learning.”

How do you develop a complex system when you don’t know what the pass/fail criteria will be? Regulation and certification are “something we’re thinking about and nervous about. We don’t know what we’re going to run into. How resistant do the sensors have to be to weather conditions? How do you even derive a safety test for an autonomous vehicle? Which scenario do you run it through? A lot of people are trying to leverage simulation. Simulation works, but you can’t simulate everything.”

Then there’s the marketing challenge, which is not necessarily the development team’s problem, but could greatly affect the commercial success of all this work. If car buyers aren’t buying it, all that effort is for naught.

The consensus is that self-driving cars need to be better – a lot better – than human drivers before we’ll trust them. And that’s a tough goal to define, never mind achieve. Says one developer, “The amount of human judgment in driving in boundless conditions is such that it will take over 10 years before a computer can make all the judgments in a guaranteed safer way than a human in all situations. The computer is going to have to be at least a factor of 10 better than what a human can do before the public will accept it.”

Here’s Ford’s CTO again: “Getting the AI right in a vehicle is really hard. It involves a lot of testing and a lot of validation, a lot of data gathering, but most importantly, the AI that we put into our autonomous vehicles is not just machine learning. You don’t just throw a bunch of data and teach a deep neural network how to drive. You build a lot of sophisticated algorithms around that machine learning, and then you also do a lot of complex integration with the vehicle itself.”

At least one engineer interviewed wasn’t optimistic we’d see fully self-driving cars anytime soon – or maybe ever. Echoing Zeno’s Paradox, he could “…see a path to 96–97% of the way to full autonomy, but the final 3–4% would be exponentially harder to achieve. Indeed, given the technologies currently available, he couldn’t see how the ultimate goals of self-driving vehicles would be achievable.”

Humans are terrible at assessing risk (mosquitos kill 200× more people than sharks) and really good at distorting hazards and probabilities. How realistically will we evaluate self-driving technologies? How would we even quantify their effectiveness? And would it matter? A single Instagram or Facebook post about a fiery crash involving a self-driving car could tank autonomous vehicle sales, never mind the hundreds of similar crashes that happen every day. We’re just not good at gauging risk and we’re quick to develop superstitions and misconceptions about scary new technologies. Consider cell phones, microwave ovens, and vaccines.

In the meantime, here’s a simple test. If a carmaker offered to sell you a $10,000 box that turned your current daily driver into a fully autonomous vehicle, would you buy it?

Or to turn that around, how much would a full-time chauffeur cost? You could pay someone to drive your car for you, celebrity-style, and not have to switch cars. Or, that same amount of money could pay for a lot of Uber, Lyft, or taxi drivers. Plus, you could sell your car and save money on insurance, fuel, and license fees. Maybe New Yorkers have got this self-driving thing already figured out.

I’m still strongly of the view that the execs and senior developers/managers who sign off on these designs need to go to jail for manslaughter when their cars kill in situations where a human would have avoided the accident, or reduced it to a minor injury. And for really stupid designs where they know that it is not ready, or where it kills bystanders like pedestrians or children, murder 1 should be on the table.

None of this plausible deniability BS. None of this “it works most of the time” BS. None of this “we thought it was ready” BS. None of this “it wasn’t in the test set” BS.

We hold human drivers accountable; we need to hold the management and developer teams accountable too. And that doesn’t mean that we as a society should let companies throw millions at victims’ families as a way to shortcut design and test.

And I strongly mean that Murder 1 is on the table when the design is fatally flawed.

I.e., when sensor resolution and processing power lack the ability to see and act on clear, normal situations.

I.e., that LIDAR, if used as the primary vision sensor, be able to spot small children in a walkway AND STOP before reaching them.

I.e., that optical vision, if used as the primary vision sensor, be able to spot children/adults in camo pants/shirts, or any other clothing choice, against ANY background that a human would. This generally means that the pixel resolution be high enough that hands, shoes, and head all CAN BE isolated, without relying on the clothing/background differentiation.

This applies to poor-visibility cases as well … rain, sunrise/sunset glare, where a human is EXTRA cautious.

This applies to side-entry cases too, where a human’s broad field of view lets them see and avoid obvious potential accidents. That includes train tracks, non-standard side entries like lane closures and cones, and other common changes to lane usage, including temporary conditions where public safety workers (construction, police, fire, etc.) are directing traffic or blocking a lane.

If the car kills, those responsible need to face jail.

We should not care how deep the company’s pockets are for throwing money at the victims’ families and regulators.

No more than we would allow a very rich driver to kill, pay and walk away without jail time for avoidable accidents, or purposeful disregard for others.

This should never be about means; this should always be about basic responsibility and being held fully accountable, as a human driver would be.

Undoubtedly, autonomous driving is a step into the future for which we are not yet ready. Engineers see adaptation as a problem, because each region, and indeed each person, has its own driving style. I would say that the most important problem, which engineers also name, is public skepticism, and it is not being solved. Nothing is being done to improve the statistics (75% against). Making promises about a car that drives better and more safely than a person (although they promise it only in 5 years https://blog.andersenlab.com/de/can-self-driving-cars-drive-better-than-we-do), or about the availability and popularity of such a car, is useless. Everything that I have listed above is just words and promises. It is unlikely that such cars will become hugely popular, if only because of the price. But I believe people will sooner take the opportunity to talk about the disadvantages of autonomous driving than about its achievements. And no, I’m not skeptical myself after reading many articles, but try convincing the rest, the non-tech people …