There are many things I don’t know much (if anything) about. So many, in fact, that I could write a book about them… or not, as the case might be. An example of one of the things I know very little about would be touchscreens, which makes it somewhat paradoxical that these little scamps are to be the topic of this column. Well, not touchscreens per se, but rather the sensing technology that lurks behind, within, or in front of them (for some reason, the thought “Pay no attention to the man behind the curtain” just popped into—what I laughingly call—my mind, but there’s no need for you to worry because it just popped out again).

Although I just made mention of touchscreens, what we are going to talk about is actually applicable to a wide range of sensing activities. The reason we are starting with touchscreens is that most of us engage with these little rascals multiple times each and every day.

As I’ve recently come to appreciate, there are so many aspects to touchscreens that my head is now spinning like a top. Let’s start with the fact that there are a variety of fundamentally different touchscreen technologies, the five most popular of which are surface capacitive touchscreens, projected capacitive touchscreens, resistive touchscreens, infrared touchscreens, and surface acoustic wave (SAW) touchscreens.

Another consideration is that, in addition to the underlying display technology like liquid crystal displays (LCDs), light-emitting diode (LED) displays, and organic LED (OLED) displays, we also have concepts like in-cell (touch sensor functions are embedded alongside the display elements in the form of pixels), on-cell (touch sensor functions sit “on top” of the display elements), and out-cell (the touch sensor functionality is integrated with the protective glass screen).

I was going to say that, for the purposes of these discussions, we were going to focus on projected capacitive touchscreens in out-cell presentations. However, this really isn’t the case because we’re going to use these only as an example of “the old way of doing things,” as compared to the spiffy new technology I’m poised to introduce with a fanfare of sarrusophones. (Remembering that a sarrusophone is reminiscent of an ophicleide with an oboe or bassoon reed, this is the sort of fanfare that really makes a statement, but don’t ask me what sort of statement because my ears are still ringing.)

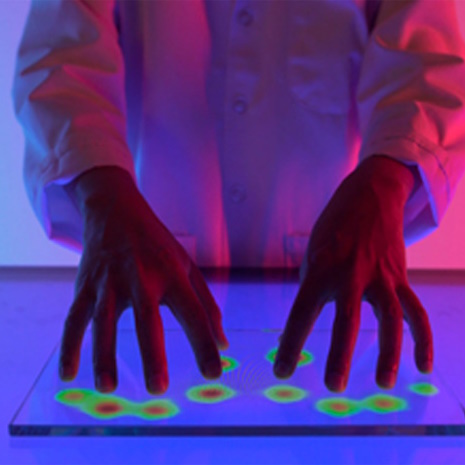

Let’s start with the projected capacitive touchscreens on smartphones (like my iPhone) and tablet computers (like my iPad Pro). Underneath the glass screen are rows and columns of conductors (think “wires”) formed from some transparent material. We can visualize just one of these rows and columns as looking something like the following:

Conceptual view of capacitive touchscreen technology (Source: Max Maxfield)

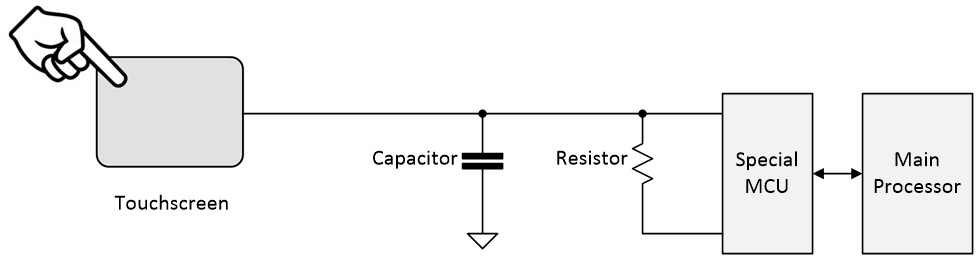

The rows and columns of conducting material on the touchscreen act like capacitors (because they are capacitors, now I come to think about it). The only reason I’ve shown this capacitance in the form of a capacitor symbol in the diagram is to give us something to envisage while we’re talking. The touchscreen controller is implemented as a special microcontroller unit (MCU). When this controller wishes to sense a row or column, it applies a voltage to charge the corresponding capacitor. After some time, it measures the voltage on the capacitor, knowing what the value should be if no one is touching the screen. When a human touches the screen, they act like an additional capacitance, thereby affecting the time it takes to charge and discharge this row or column.
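To put some numbers on the charge-and-measure idea, here is a minimal sketch that treats one row or column as a simple resistor-capacitor (RC) circuit. Every value below (supply voltage, resistance, capacitances, wait time, threshold) is an illustrative assumption of mine rather than a figure from any real touchscreen controller, and the function names are hypothetical.

```python
import math

def voltage_after(t_s, supply_v, resistance_ohm, capacitance_f):
    """Voltage on a capacitor charged through a resistor for t_s seconds."""
    tau = resistance_ohm * capacitance_f          # RC time constant
    return supply_v * (1.0 - math.exp(-t_s / tau))

def is_touched(measured_v, baseline_v, threshold_v=0.2):
    """Report a touch when the reading falls far enough below the baseline."""
    return (baseline_v - measured_v) > threshold_v

# Illustrative assumptions only, not values from a real device.
SUPPLY_V = 20.0        # drive voltage
RESISTANCE = 10_000.0  # series resistance of the line, in ohms
C_LINE = 100e-12       # capacitance of an untouched line, in farads
C_FINGER = 10e-12      # extra capacitance contributed by a finger
SAMPLE_TIME = 1e-6     # how long the controller waits before measuring

baseline = voltage_after(SAMPLE_TIME, SUPPLY_V, RESISTANCE, C_LINE)
touched = voltage_after(SAMPLE_TIME, SUPPLY_V, RESISTANCE, C_LINE + C_FINGER)

print(f"untouched: {baseline:.2f} V, touched: {touched:.2f} V")
print("touch detected:", is_touched(touched, baseline))
```

The extra capacitance slows the charging, so the voltage read after the same wait comes out lower than the known no-touch value, and that shortfall is what gets interpreted as a touch.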

As we will see, the voltages used are relatively high in the scheme of things, which increases the amount of time it takes to charge and discharge the capacitor. Another consideration is that each row and column is sampled one after the other, which—if the finger is moving—can cause distortion problems (similar to a rolling shutter on an image sensor). All of this limits the rate at which the touchscreen can be sampled. And, just to rub salt into the wound, as it were, this form of sensing results in a relatively large amount of noise.
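The rolling-shutter effect is easy to estimate with a little arithmetic. The sketch below assumes a hypothetical panel with 100 lines that each take 80 microseconds to charge, measure, and discharge, plus a finger moving at 500 mm/s; none of these numbers come from a real device.

```python
def sequential_scan_rate_hz(num_lines, seconds_per_line):
    """Frames per second when every line is sampled one after the other."""
    return 1.0 / (num_lines * seconds_per_line)

def scan_skew_s(num_lines, seconds_per_line):
    """Delay between sampling the first and the last line of one frame."""
    return (num_lines - 1) * seconds_per_line

# Hypothetical values chosen purely for illustration.
NUM_LINES = 100             # rows plus columns
SECONDS_PER_LINE = 80e-6    # time to charge, measure, and discharge one line
FINGER_SPEED_MM_S = 500.0   # a brisk swipe

rate = sequential_scan_rate_hz(NUM_LINES, SECONDS_PER_LINE)
skew = scan_skew_s(NUM_LINES, SECONDS_PER_LINE)
smear_mm = FINGER_SPEED_MM_S * skew

print(f"scan rate: {rate:.0f} Hz")
print(f"first-to-last skew: {skew * 1e3:.2f} ms")
print(f"finger travel during one frame: {smear_mm:.2f} mm")
```

The point is that the per-line time multiplies by the number of lines, so a bigger panel (more lines) or a slower charge cycle (higher voltage) drags the frame rate down and stretches the skew.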

I recently hosted the virtual RT-Thread 2023 Technology Conference. One of the speakers made the point that artificial intelligence (AI) was becoming the new user interface (UI). The thing is that the efficacy of AI processing systems is degraded by the digitization of analog data in general, and noisy analog data in particular (the reason I mention this will become apparent in a moment).

Now, before we go further, I feel as though I’ve been a little mean. I think it’s only fair to acknowledge the fact that traditional capacitive touchscreens are really rather awesome. If I could get my time machine working and go back 45 years to visit me as a student to flaunt the touchscreen on my iPad, then I (from now) would say “What on earth are you wearing and you need a haircut,” while I (from then) would say “What on earth are you wearing and what happened to our hair?” But we digress…

I know that I (from then) would have been blown away by the capabilities of my iPad’s touchscreen (not to mention that it’s in glorious technicolor). On the other hand… I gave my dear old mum an iPad Pro and she loves it to bits, but I can’t tell you how many times I’ve heard her muttering under her breath as she tries to select something on the screen with no response. I think it’s because she’s 93 years old and seniors tend to be less hydrated than the rest of us (according to Visiting Angels, the amount of water in the body decreases by 20% by the age of 80), which lowers their capacitance. In turn, this degrades the touchscreen’s ability to detect them. Other things that make using capacitive touchscreens problematical are water (try using your smartphone in the rain) and gloves.

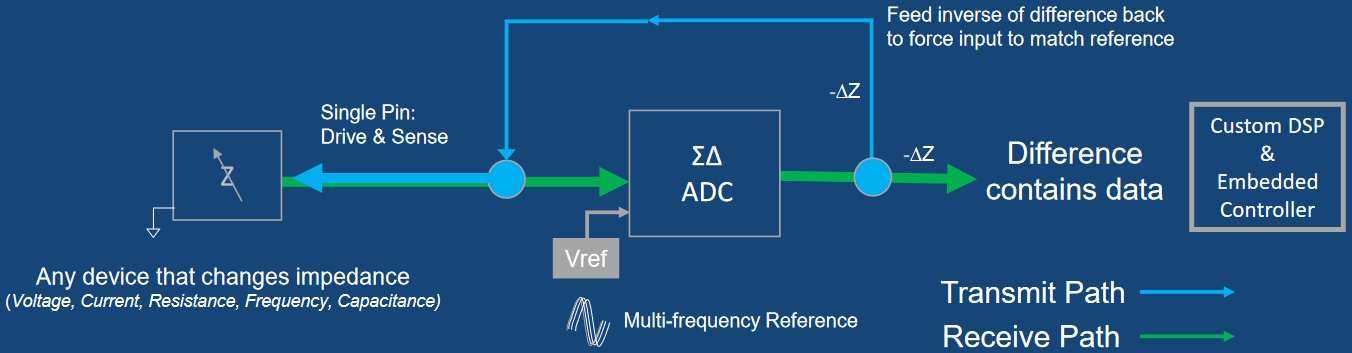

All of this leads us to the folks at SigmaSense. These clever chaps and chapesses have come up with a new approach that offers high-fidelity software-defined sensing suitable for the 21st century. Take a look at the following image:

Meet the SigmaDelta Modulator (SDM) (Source: SigmaSense)

Rather than trying to charge and discharge a capacitor, the SigmaDelta Modulator (SDM) transmits a ~1V peak-to-peak sine wave down the row or column. The presence of any object, like a human finger, will change the impedance of the signal path, thereby causing a difference between the signal that should be there and the signal that is there. The SDM monitors the situation in real-time and feeds the inverse of the difference back into the signal to force the input to match the reference.
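The “feed the inverse of the difference back” idea can be illustrated with a generic integrating feedback loop. To be clear, this is my own toy model and not SigmaSense’s circuit: the function name, the gain, and the step count are all assumptions, and a touch is reduced to a single constant disturbance added to the signal.

```python
def track_disturbance(disturbance, gain=0.5, steps=50):
    """Toy feedback loop that drives (input - reference) to zero.

    The input is modeled as reference + disturbance + correction, so the
    difference from the reference is simply disturbance + correction.
    """
    correction = 0.0
    for _ in range(steps):
        error = disturbance + correction   # input minus reference
        correction -= gain * error         # feed the inverse of the error back
    return correction

touch_effect = 0.3                         # hypothetical disturbance from a finger
correction = track_disturbance(touch_effect)

# Once the loop settles, the correction mirrors the disturbance, so the
# correction itself is the measurement.
print(f"correction: {correction:.6f}")
print(f"estimated disturbance: {-correction:.6f}")
```

The neat part of this arrangement is that nothing needs to be charged up and measured afterward; the amount of correction required to keep the input pinned to the reference tells you what is happening on the line.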

But wait, there’s more, because the controller can transmit different frequencies down each row and column simultaneously. Even more amazing, it can transmit multiple frequencies down each row and column simultaneously. The custom DSP and embedded controller device performs fast Fourier transforms (FFTs) on all of the signals simultaneously. “All of the signals?” you ask? Why yes, didn’t I mention that? That’s why I keep on saying “simultaneously.” The controller samples all of the rows and columns simultaneously (similar to a global shutter on an image sensor).
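To see why sending different frequencies at the same time works, consider the sketch below. It sums three tones, as if three lines were being driven at once, and then recovers the amplitude of each tone with a single-bin discrete Fourier transform (the same quantity an FFT computes for every bin in one go). The sample rate, frequencies, and amplitudes are my own assumptions, and modeling a touch as a simple drop in one tone’s amplitude is a deliberate simplification.

```python
import math

SAMPLE_RATE = 10_000   # samples per second (assumed)
N = 1000               # samples per frame, giving 10 Hz bin spacing

def received(amplitudes):
    """Sum of tones seen at one sense point; amplitudes maps Hz -> amplitude."""
    return [
        sum(a * math.sin(2 * math.pi * f * i / SAMPLE_RATE)
            for f, a in amplitudes.items())
        for i in range(N)
    ]

def tone_amplitude(samples, freq_hz):
    """Amplitude of one frequency, found by correlating with sine and cosine."""
    n = len(samples)
    re = sum(s * math.cos(2 * math.pi * freq_hz * i / SAMPLE_RATE)
             for i, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE)
             for i, s in enumerate(samples))
    return 2.0 * math.hypot(re, im) / n

# One frequency per line; a touch on the 200 Hz line attenuates that tone.
tones = {100: 0.5, 200: 0.4, 300: 0.5}
signal = received(tones)
measured = {f: tone_amplitude(signal, f) for f in tones}

for f, a in measured.items():
    print(f"{f} Hz: amplitude {a:.3f}")
```

Because each tone sits on its own frequency bin, the tones don’t interfere with one another in the transform, which is why everything can be measured in the same instant rather than one line at a time.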

This also explains the “software-defined sensing” portion of this column’s title, because all of the frequencies are software-defined and the sensed information is immediately available to the software in digitized form.

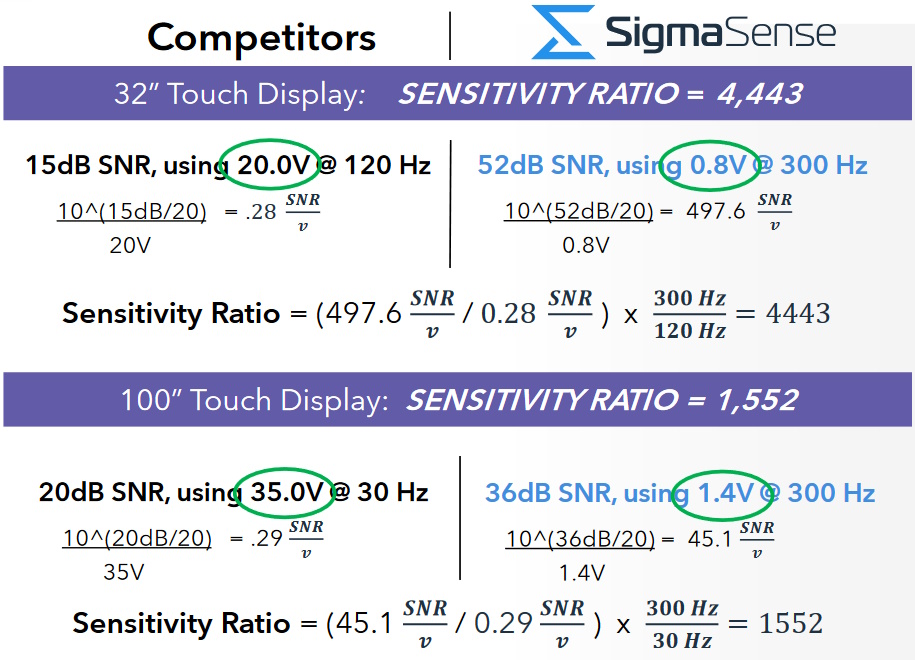

As always, the proof of the pudding is in the eating, so feast your orbs on the results from an example touch display benchmark as shown below:

Signal-to-noise ratio per volt normalized for time (Source: SigmaSense)

WTW? (“What the What?”) is all I can say. I mean, 52dB (vs. 15dB) SNR using only 0.8V (vs. 20V) at 300Hz (vs. 120Hz) for a 32” touchscreen. Wow! And they can also achieve a 300Hz sampling rate on a 100” touchscreen (vs. only 30Hz for the competitor). I have some friends who enjoy high-end gaming whose eyebrows are going to be raised when they read this.
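Decibels are logarithmic, so it’s worth converting the quoted figures into plain ratios to get a feel for the size of the gap. The sketch below uses only the numbers quoted above along with the standard decibel definitions; the function names are my own.

```python
def db_to_power_ratio(db):
    """Convert decibels to a power ratio."""
    return 10 ** (db / 10)

def db_to_amplitude_ratio(db):
    """Convert decibels to an amplitude (voltage) ratio."""
    return 10 ** (db / 20)

snr_gap_db = 52 - 15        # SNR difference for the 32-inch example
voltage_ratio = 20 / 0.8    # how much lower the drive voltage is
rate_ratio = 300 / 120      # how much faster the sampling rate is

print(f"SNR gap: {snr_gap_db} dB")
print(f"  as a power ratio:     {db_to_power_ratio(snr_gap_db):,.0f}x")
print(f"  as an amplitude ratio: {db_to_amplitude_ratio(snr_gap_db):.1f}x")
print(f"drive voltage: {voltage_ratio:.0f}x lower")
print(f"sampling rate: {rate_ratio:.1f}x higher")
```

In other words, a 37dB gap means the signal stands out from the noise by a factor of roughly 5,000 more in power terms (or about 70 in amplitude terms), and that’s while using a fraction of the drive voltage.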

Apart from anything else, this technology works in rain and if the user is wearing gloves. Also, the fact that all points in the array are sampled simultaneously at 300Hz—coupled with the fact that this sensing technology doesn’t require the user to actually be touching the screen—means the device can effectively track the user’s multi-handed gestures like a movie in 3D. Speaking of movies, maybe this would be a good time to watch one:

In fact, there’s a bunch of related videos on the SigmaSense website (I was just blown away by this offering). Sad to relate, I’ve only touched on this technology here, not least because its applications extend far beyond smartphones and tablet computers. Yes, of course there are gaming and automotive applications, but this technology can also be used to perform esoteric tasks like measuring the charge remaining in a battery.

I’ll tell you what. Rather than me waffling further, why don’t you bounce over to the SigmaSense website and have a root around, then come back here and post a comment to tell the rest of us what you think?