Did the abundance of abbreviations with which the title of this column abounds cause you to pause for a moment and think, “Say, what?” Well, that’s just what I thought when the guys and gals at Synaptics introduced me to their AS33970 Tahiti System-on-Chip (SoC) device, but first…

Human-machine interfaces (HMIs) have come a long way since I was a lad. These days, of course, we think of HMIs in the context of people and computers (mayhap “people versus computers” might be more apposite). However, I’m thinking of a time before computers as we know and love them today appeared on the scene.

For example (cue “travelling back through time” sound effects and visuals), I remember being a sprog watching old reruns of Flash Gordon and Buck Rogers on TV. I’m not talking about the Buck Rogers in the 25th Century television series that first aired in 1979. Unsurprising to a modern audience, this series, which starred Gil Gerard and Erin Gray, was presented in color. And I’m certainly not talking about the Flash Gordon television series starring Eric Johnson and Gina Holden that debuted in the USA in 2007. No, indeedy-doody, what I’m talking about is the original 13-chapter Flash Gordon science fiction film serial starring Buster Crabbe and Jean Rogers, which first hit the silver screen in 1936, and also the 12-part Buck Rogers science fiction film serial starring (once again) Buster Crabbe and Constance Moore, which launched in 1939. It probably goes without saying that both of these series were presented in glorious black-and-white.

Originally, these were shown to kids at the cinema on Saturday mornings. I just called my mom in England on FaceTime. She says it was called the “Penny Rush” because you could rush in for just one penny. There was a minimum age of about 12 years old for kids on their own, but younger kids could get in if they were accompanied by an older sibling. Each week’s show was about two hours long and, in addition to the main feature, boasted a bunch of shorter items. These might include a cartoon and a short thriller, like an episode of Flash Gordon or Buck Rogers. Each episode was about 20 minutes long, commenced with a recap of the previous week, and ended with a cliffhanger. Mom says she remembers her older brother taking her to these shows. She also remembers being riveted to her seat watching an episode of Flash Gordon where the cliffhanger left him poised to walk over a bed of burning coals.

For your delectation and delight, and much to my amazement, I’ve found compilations of all of the episodes of both Flash Gordon and Buck Rogers on YouTube.

What do you mean, “What on Earth does this have to do with HMIs?” If you’ll settle down, I’ll tell you. I recall one scene where someone said something like, “Turn the light on,” and our hero bounced across the room and proceeded to use both hands to laboriously rotate a gigantic wheel reminiscent of the wooden steering wheel on a 17th-century pirate ship. Now, that was an HMI to write home about!

I’m sorry. I just got sidetracked looking up the difference between a capstan and a windlass, neither of which — it turns out — has anything to do with a ship’s steering wheel. So, where were we? Oh, yes, I remember…

Both of the aforementioned 1930s visualizations of the future were chock-full of HMIs of this ilk, like the sliding switches on some esoteric piece of equipment, which had knobs the size of baseballs and required a tremendous amount of physical effort to use (at least, if the actions of the actors were anything to go by). It makes you wonder why the shows’ creators did this. Was it because they wanted something meaty to fill the screen, or was it because they really thought people in the future would feel the need to use oversized controls?

Let’s jump forward to the 1950s, at which time only a handful of TV channels were available. The problem was that, when you wanted to change a channel, you were obliged to get up and walk across the room and press a button or rotate a knob. It didn’t take long for inventors to realize that a remote control would be a good idea, but how to create such a beast? The first offering was the Zenith “Lazy Bones,” which involved a motor-driven mechanism that clipped over the tuning knob. A long wire connected this mechanism to the controller held by the head of the household in her command chair. Unfortunately, more than one grandparent tripped over the wire and exited the room through an inconveniently placed window (or failed to exit the room due to an even more inconveniently placed wall).

It was obvious that the wire had to go, but it’s important to remember how limited technology options were back then. The first wireless solution was introduced in 1955 in the form of the Zenith “Flash-Matic,” which involved four photoelectric cells mounted around the four corners of the screen in the TV cabinet. The person in control used a flashlight-like device to shine a beam of light onto the desired cell to control volume (higher/lower) and channel selection (up/down).

Zenith Flash-Matic (Image source: Wikipedia/Edd Thomas)

Unfortunately, the Flash-Matic needed to be precisely pointed for it to work, and the photoelectric cells were unable to distinguish between light from the Flash-Matic and that from other sources. As a result, it was not uncommon for the channel and/or volume to change of their own accord, almost invariably when the program had just reached “the good bit.”
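To see why stray light caused so much mischief, here is a minimal Python sketch of a detector that reacts to brightness alone. Everything in it is an assumption made for illustration: the mapping of corners to functions, the normalized brightness scale, the `TRIGGER_LEVEL` value, and the function name `triggered_commands` are all hypothetical and not taken from Zenith’s design.

```python
# Which corner drives which function is an assumption made for illustration.
CORNER_COMMANDS = {
    "top_left": "volume_up",
    "bottom_left": "volume_down",
    "top_right": "channel_up",
    "bottom_right": "channel_down",
}

# Hypothetical trigger level on a normalized 0.0-1.0 brightness scale.
TRIGGER_LEVEL = 0.6


def triggered_commands(light_levels, trigger_level=TRIGGER_LEVEL):
    """Return the command for every cell whose brightness reaches the trigger level.

    The detector looks only at brightness, so it has no way to tell
    the remote's beam apart from any other light source.
    """
    return [
        CORNER_COMMANDS[corner]
        for corner, level in light_levels.items()
        if level >= trigger_level
    ]


# Three illustrative scenes (brightness values are made up).
dim_room = {corner: 0.1 for corner in CORNER_COMMANDS}
aimed_beam = {**dim_room, "top_right": 0.9}        # viewer aims at one corner
stray_sunlight = {**dim_room, "bottom_left": 0.8}  # sunbeam lands on a cell
```

Calling `triggered_commands(aimed_beam)` and `triggered_commands(stray_sunlight)` each returns exactly one command, and nothing in the result says which one the viewer actually asked for.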

It just struck me that the Flash-Matic would not have looked out of place had it been wielded by Flash Gordon or Buck Rogers in their original series, but I digress…

Next up was the Zenith “Space Command,” which made its appearance in 1956. This little rascal was a handheld mechanical device containing four aluminum rods of carefully chosen dimensions. Each rod had an associated pushbutton which, when pressed, struck (or plucked) its rod, thereby causing it to vibrate at its own fundamental ultrasonic frequency (pressing the buttons produced enough audible noise that consumers began calling remote controls “clickers”). Circuits in the television detected the ultrasonic sounds and interpreted them as channel-up, channel-down, sound-on/off (mute/unmute), and power-on/off. The downside of this type of control, of course, is that it was extremely annoying to household pets like dogs, which, in turn, became extremely annoying to their owners.
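For anyone who fancies playing with the idea, the rods-and-tones scheme can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not a reconstruction of Zenith’s design: the rod lengths are hypothetical, the speed of sound in aluminum is an approximate figure, the rod is modeled as a simple bar free at both ends (f = v / 2L), and the detector uses the Goertzel algorithm as a modern digital stand-in for the analog circuitry in the 1956 television.

```python
import math

# Assumed approximate speed of longitudinal sound waves in a thin aluminum rod.
SPEED_IN_ALUMINUM_M_S = 5000.0


def rod_fundamental_hz(length_m, speed_m_s=SPEED_IN_ALUMINUM_M_S):
    """Fundamental longitudinal resonance of a rod free at both ends: f = v / (2L)."""
    return speed_m_s / (2.0 * length_m)


# Hypothetical rod lengths in meters (illustrative, not Zenith's dimensions).
ROD_LENGTHS_M = {
    "channel_up": 0.0660,
    "channel_down": 0.0640,
    "mute_toggle": 0.0620,
    "power_toggle": 0.0600,
}


def goertzel_power(samples, sample_rate_hz, target_hz):
    """Power of the DFT bin nearest target_hz, computed with the Goertzel algorithm."""
    n = len(samples)
    k = round(n * target_hz / sample_rate_hz)  # nearest DFT bin index
    coeff = 2.0 * math.cos(2.0 * math.pi * k / n)
    s_prev = 0.0
    s_prev2 = 0.0
    for x in samples:
        s = x + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    return s_prev ** 2 + s_prev2 ** 2 - coeff * s_prev * s_prev2


def decode_command(samples, sample_rate_hz):
    """Pick the command whose rod frequency carries the most power."""
    powers = {
        command: goertzel_power(samples, sample_rate_hz, rod_fundamental_hz(length_m))
        for command, length_m in ROD_LENGTHS_M.items()
    }
    return max(powers, key=powers.get)


def simulate_button_press(command, sample_rate_hz=200_000, num_samples=4000):
    """Idealized ringing rod: a pure sine wave at the rod's fundamental."""
    tone_hz = rod_fundamental_hz(ROD_LENGTHS_M[command])
    return [
        math.sin(2.0 * math.pi * tone_hz * i / sample_rate_hz)
        for i in range(num_samples)
    ]
```

With these made-up lengths, the four tones land between roughly 38 kHz and 42 kHz, and `decode_command(simulate_button_press("mute_toggle"), 200_000)` picks the matching command. A real rod’s ring decays and arrives with noise, so a practical detector would also want a minimum-power threshold before acting.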

It probably won’t surprise you to hear that I could waffle on about this stuff for hours but — sad to relate — we have other poisson to frire.

Returning to the folks at Synaptics, you — like me — probably tend to think of them in the role of an interfacing company that has historically been known for its HMIs in the form of touch controllers and display technologies. (Did you see how I just brought everything back home on the HMI front? My mom will be so proud.) Well, times have moved on, and the guys and gals at Synaptics now describe themselves as “Combining human interfaces with IoT and AI technologies to engineer exceptional experiences.” Now, that’s a mission I can sink my teeth into, much like a tasty little poisson frit (I tell you, sometimes my writings are like the circle of life writ small).

Over the past few years, the chaps and chapesses at Synaptics have added all sorts of multimedia technologies to their portfolio, including things like far-field speech and voice recognition, video interfaces, and biometric sensors, to name but a few. Do you recall my “DSP Group Dives into the Hearables Market” column from the summer of 2020? Well, just a few weeks ago at the time of this writing, I saw the “Synaptics to Acquire DSP Group, Expanding Leadership in Low Power AI Technology” press release (see also my chum Steve Leibson’s writings: “A Brief History of the Single-Chip DSP, Part I” and “A Brief History of the Single-Chip DSP, Part II”).

But let’s not wander too far out into the weeds (I know, I know; look who’s talking). The reason for this column is that the folks at Synaptics were telling me about their current focus on high-end audio processing for headsets, which led us to their new AS33970 Tahiti SoC.

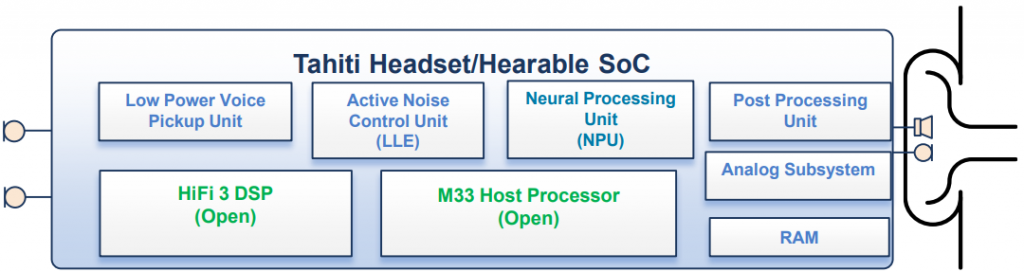

Block diagram of Tahiti SoC (Image source: Synaptics)

This is where we come to the abundance of abbreviations alluded to earlier, because the Tahiti SoC boasts mind-bogglingly awesome capabilities in the realms of things like active noise cancellation (ANC), environmental noise cancellation (ENC), and wake on voice (WoV), all of which are enhanced by the use of artificial intelligence (AI) in the form of a neural processing unit (NPU). You will be happy to hear that the folks at Synaptics have a cornucopia of additional abbreviations that they can deploy at a moment’s notice, but I think we’ll save those for another day.

The point of all this is that Tahiti offers the ideal platform to power the next generation of high-performance headsets with embedded microphones. I don’t know about you, but the headset I have in my office has a boom microphone that wraps around to the corner of my mouth. Not that there’s a problem with this per se but, given a choice, I’d prefer the boom to be… not there. Well, this is one of the headset features that will be facilitated by Tahiti. Where this becomes rather interesting is when one is working “on the road.” If you see someone ambling around an airport sporting a big pair of bodacious Beats, you think nothing of it. However, if those Beats boasted a boom microphone, people would tend to stop and stare.

Now imagine strolling around flaunting a 21st-century Tahiti-powered headset featuring no-boom microphones, thereby allowing you to conduct business in a crowded public place without looking out of place, as it were.

I’m not sure as to the exact date, but I think I purchased my current pair of noise-cancelling headphones about 10 years ago as I pen these words. Sad to relate, these little scamps have seen better days, and they are nearing the end of their existence. Even though they’ve served me well, when I come to think how technologies have evolved over the past decade, I can’t even imagine how awesome noise-cancelling headphones have grown to be today. I can easily visualize myself proudly parading around with a Tahiti-powered headset perched perkily on my noggin in the office, at home, and on the road. Should such a frabjous day arrive, have no doubt that you will be the first to hear the news in a column here on EEJournal. In the meantime, as always, I welcome your insightful questions and captivating comments.
