The first thing you should know about the new Portable Stimulus standard from Accellera is that, strictly speaking, it doesn’t provide for portable stimulus. Somehow, in the effort to solve a problem through portable stimulus, the solution morphed – but the name didn’t. That said, it does address the targeted problem, so perhaps this distinction merits no more than a shrug.

Let’s start with the problem: SoC verification. Over the long course of a project that turns ideas into working silicon, verification happens at multiple stages.

- You’ve got architects assembling blocks and ideas and checking that, at least at the abstract level, it all works. Perhaps SystemC is the language of choice here.

- Each block designer performs unit tests on his or her portion of the whole, likely with SystemVerilog and UVM.

- As the SoC blocks are assembled into a whole, some rudimentary level of chip-level simulation can be done – again, using low-level hardware languages.

- For more thorough chip-level verification, emulation is needed for its speed. Here, actual software can be executed, albeit still driven through hardware-level languages.

- At a higher level of abstraction, C or C++ stress tests can be applied, pushing the computing platform into various corners of execution to ensure that everything works at the extremes.

That’s a lot of verification. And here’s the crux of the problem: at the very beginning, one can isolate a wide range of specific cases that need to be tested. These cases will execute under a wide range of modes in which the chip can operate. These tests may be performed in ways that range from high-level at the architectural stage to cycle-accurate on an emulator. The set of tests will hopefully be hierarchical and modular so that pieces can be leveraged in unit testing.

Except for one thing: the tools and/or languages used for this verification at these different stages are all different and will require a different way of articulating the tests. Which means that the tests have to be rewritten over and over – hopefully all covering the same ground, but possibly not.

At the very least, this is a productivity problem – more simply put, a waste of time and effort. But, worse than that, it’s error-prone. Will every engineer or team in the design cycle work off of a central document defining what the tests will be? Does such a specification even exist? And, if it does, is it unambiguous enough to ensure that all actual instantiations will be faithful, isomorphic representations of that spec?

The chances are that this ideal situation hasn’t been realized, which can mean yet more time harmonizing tests and figuring out why later test results don’t match early ones: is the problem the test or the design?

This, then, is the problem that was attacked by the standard-setting team at Accellera. You’d think that the obvious solution would be to define a common stimulus format so that no rewriting would be necessary at each successive stage of verification. Hence the name, “Portable Stimulus.”

But, as so often happens, the original envisioned solution isn’t the one that results. So what we have now is not a replacement of stimulus formats with a new, common one. Instead, we have a format for expressing stimulus intent; that format can then be automatically translated into the native stimulus format of whatever type of verification is on hand at the moment.
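To make the distinction concrete, here is a minimal Python sketch. It assumes a toy model in which a test's intent is just an ordered list of abstract actions with parameters. The `Action` and `Scenario` types, the `dma_copy` action, the `bus_item` class, and the two render functions are all hypothetical illustrations. They are not the Portable Stimulus DSL or C++ API. They only show one intent description being rendered into two different native forms: a bare-metal C test and a UVM sequence.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical toy model of "stimulus intent". This is NOT the Accellera
# Portable Stimulus DSL or C++ API; it only illustrates the idea that one
# abstract description can be rendered into several native test formats.

@dataclass
class Action:
    name: str                                              # abstract operation, e.g. "dma_copy"
    params: Dict[str, int] = field(default_factory=dict)   # target-independent parameters

@dataclass
class Scenario:
    name: str
    actions: List[Action]

def to_c_test(s: Scenario) -> str:
    """Render the intent as a bare-metal C test function (hypothetical driver calls)."""
    lines = [f"void {s.name}(void) {{"]
    for a in s.actions:
        args = ", ".join(str(v) for v in a.params.values())
        lines.append(f"    {a.name}({args});")
    lines.append("}")
    return "\n".join(lines)

def to_uvm_sequence(s: Scenario) -> str:
    """Render the same intent as a UVM sequence (hypothetical item class and fields)."""
    lines = [f"class {s.name}_seq extends uvm_sequence #(bus_item);",
             "  task body();"]
    for a in s.actions:
        # Each abstract parameter becomes an inline randomization constraint.
        constraints = [f'kind == "{a.name}"'] + [f"{k} == {v}" for k, v in a.params.items()]
        body = "; ".join(constraints)
        lines.append(f"    `uvm_do_with(req, {{ {body}; }})")
    lines += ["  endtask", "endclass"]
    return "\n".join(lines)

# One intent description...
demo = Scenario("mem_copy_test",
                [Action("dma_copy", {"src": 0x1000, "dst": 0x2000, "size": 64})])

# ...rendered for two different verification stages.
print(to_c_test(demo))
print(to_uvm_sequence(demo))
```

This sketch hard-codes parameter values and ignores scheduling. Its only purpose is to show that the test intent is written once, while the output format depends on the target.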

At DAC, Accellera held a breakfast panel at which Faris Khundakjie of Intel, Sanjay Gupta of Qualcomm, and Karl Whiting of AMD answered questions and commented on the standard. The standardization process has now run its course, and an early-adopter version is under evaluation ahead of an official launch at the end of 2017 or the beginning of 2018.

Even without the portability aspect, Mr. Gupta noted, this is still a productivity enhancement for UVM. Mr. Khundakjie added that, for all the good that UVM has brought, the quality of UVM stimulus has suffered. So a standard like this preserves the benefits of UVM while shoring up its weakness in stimulus.

Two Formats

It would appear that there was ample debate over the level of abstraction the standard should address. A great many of the participants in the process were die-hard silicon folks, attuned to the needs of low-level verification. At the other end of the scale, companies like Breker (or perhaps exclusively Breker) operate at the C/C++ level.

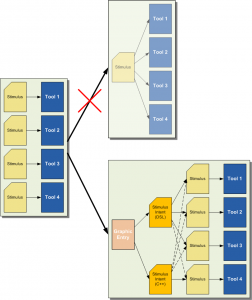

In the end, they agreed to disagree and brought forth two different ways of articulating stimulus intent. One is a so-called “domain-specific language,” or DSL (no, not that DSL). In other words, it doesn’t use any of the languages it might ultimately target, but rather defines a new language. The other format is C++-based.

Even though some tools will be better suited to the DSL and others to C++, it would appear that, so far, all tools will accept both inputs, so there’s no need for, say, a converter between the two. (This is indicated by the dashed lines in the figure above.)

There’s one more “format” that’s likely to be available, at least early on: a graphical approach that lets users assemble a set of tests from graphical blocks. The diagram then generates the underlying code.

You’d think that the graphic approach (assuming it covered all of the power of the languages) might be the easiest, but Mr. Whiting suggested that it would likely be used only in the early days while engineers became more comfortable with the DSL. After that, it will be all hand-coding. He said that, specifically at AMD, no graphic approaches were used by design engineers.

This may partially reflect engineering culture, which often eschews graphical approaches as somehow not serious enough. That sentiment has come and gone for years in both hardware and software circles, ever since Apple popularized the graphical user interface.

But there’s a more critical reason: trust. Each step along the way involves trust that the tools are working as advertised. There’s already one level of trust that has to be earned now: trust that the Portable Stimulus-enabled tools are correctly generating the native stimulus formats of the underlying targeted systems.

Using a graphic approach adds a new level of required trust: that the graphics tool is correctly generating the DSL (or C++). This doesn’t eliminate the need to trust that, from there, the correct native stimulus will result. So, the feeling goes, one might as well learn the text language and eliminate one tool from the stack. It’s for this reason that the graphic entry tool is depicted lightly in the figure above.

The DSL side of things has apparently been filled out pretty completely; the C++ side still needs more work, which is expected to be complete by the 1.0 launch. Early next year, then, the wider audience of engineers should be able to start bringing Portable Stimulus into their workflows.

As for tools supporting the new standard (in alphabetical order):

- Breker: TrekSoC and TrekUVM support the early-adopter version.

- Cadence: Perspec supports the early-adopter version (as of last June).

- Mentor: inFact currently supports the new standard (as of last June).

- Synopsys: they didn’t commit specifically, but said that they have “a great track record of supporting what our customers find useful in addressing their challenges. We collaborate very closely with our customers to continue delivering on this track record.”

I also asked whether portable stimulus would apply to test vector generation. After all, a subset of the verification sequence could also be reused for production testing. Cadence said that this was outside the scope of the working group, and Mentor said that, while they’ve had no requests for this and therefore don’t support it, they could if demand materialized.

More info:

Accellera Portable Stimulus standard

What do you think of the new Portable Stimulus work from Accellera?

Thank you for the great article, Bryon. We at Breker want to clarify a few points. Although Breker led the effort for the C++ approach, the need for C++ was driven by several large semiconductor user companies on the Portable Stimulus Working Group Committee. The one aspect of the C++ approach that did not make it into the Early Adopter release is Coverage Modeling — this will be in the 1.0 release. As you correctly note, Breker fully supports both the DSL and C++ versions of the standard.

Both DSL and C++ are a work in progress and the point of the Accellera Portable Stimulus Standard Early Adopter release is to gather feedback and fix issues before they become too ingrained into the standard.

Adnan Hamid

CEO

Breker Verification Systems

http://www.brekersystems.com