As I may have mentioned on occasion, I love reading science fiction books and watching science fiction films. A couple of tomes that really caught my imagination were A Deepness in the Sky and A Fire Upon the Deep by Vernor Vinge.

An underlying theme upon which these tales are layered is that our Milky Way galaxy (and, presumably, all other galaxies) is divided into concentric volumes called the “Zones of Thought.” These zones roughly correspond to galactic-scale stellar density. As we read in the Wikipedia (I’ve cut this down drastically):

The Zones reflect fundamental differences in basic physical laws, with one of the main consequences being their effect on intelligence, both biological and artificial. Artificial intelligence and automation is most directly affected, in that advanced hardware and software from the Beyond or the Transcend will work less and less well as a ship “descends” towards the Unthinking Depths. But even biological intelligence is affected to a lesser degree. The four zones are spoken of in terms of “low” to “high” as follows:

- The “Unthinking Depths” are the innermost zone, surrounding the Galactic Center. In it, only minimal forms of intelligence, biological or otherwise, are possible.

- Surrounding the Depths is the “Slow Zone.” The Earth (called “Old Earth”) is located in this Zone, and humanity is said to have originated there, although Earth plays no significant role in the story. Biological intelligence is possible in “the Slowness,” but not true, sentient, artificial intelligence.

- The next outermost layer is the “Beyond,” within which artificial intelligence, faster-than-light travel, and faster-than-light communication are possible. A few human civilizations exist in the Beyond.

- The outermost layer, containing the galactic halo, is the “Transcend,” within which incomprehensible, superintelligent beings dwell. When a “Beyonder” civilization reaches the point of technological singularity, it is said to “Transcend,” becoming a “Power.”

Both these books embrace myriad concepts, any one of which could have been developed into a story in its own right. A somewhat related item that keeps on tickling my imagination is the science (no fiction) book Reinventing Gravity by John W. Moffat. Galaxies spin so fast that, based on the amount of visible matter in them, their stars ought to be hurled out into the void. Cosmologists are attempting to solve the problem by positing dark matter. Moffat’s modified gravity theory (MOG) can model the movements of the universe without recourse to dark matter. In a crunchy nutshell, Moffat says that both Newton and Einstein were wrong, and then things get complicated. It was unfortunate that I read Vinge and Moffat around the same time, resulting in both concepts coalescing in my cranium.
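The "stars ought to be hurled out" argument comes down to one line of Newtonian physics. This is a standard textbook result added here for illustration; it is not taken from Moffat's book. For a star in a circular orbit of radius $r$ around an enclosed mass $M(r)$, gravity has to supply the centripetal force:

$$
\frac{v^2}{r} = \frac{G\,M(r)}{r^2}
\quad\Longrightarrow\quad
v(r) = \sqrt{\frac{G\,M(r)}{r}},
\qquad
M(r) = \frac{v^2\,r}{G}
$$

Beyond the bulk of a galaxy's visible matter, $M(r)$ is roughly constant, so orbital speeds should fall off as $v \propto r^{-1/2}$. Observed speeds stay roughly flat instead. That means the mass implied by $M(r) = v^2 r / G$ keeps growing with $r$. Dark matter supplies that extra mass, whereas MOG changes the gravity side of the equation.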

A big part of Vernor's Zones of Thought concept is the way in which the power of artificial and biological intelligence increases the farther removed one is from the galactic center. This makes so much sense: I've long believed that (what I laughingly call) my mind is not working at its full potential. I could be so much more if I were in possession of a faster-than-light spaceship and, much like Captain James T. Kirk, were able to boldly travel behind the beyond, behind which no man has boldly travelled behind, beyond, before. But we digress…

The reason all these thoughts pertaining to artificial intelligence are currently rattling around my poor old noggin is that I was just chatting with Kim Lee, who is Senior Director of Systems Applications Engineering for Sensors, Solutions, and IoT at Infineon Technologies Americas. Kim’s responsibilities include “Managing a team of software, hardware, and algorithm engineers focusing on IoT and sensor solutions, utilizing the company’s product-to-solution (P2S) solution strategy” (try saying that ten times quickly).

This all started when one of Infineon’s Minions (I couldn’t help myself) reached out to tell me, “We just announced a partnership with Archetype AI to develop sensor chips with artificial intelligence functionality. Infineon will pilot Archetype AI’s Large Behavior Model (LBM), which is designed to generate AI agents that automatically create code for customer-specific sensor use cases to run as edge models on customer devices. For example, sensors built by Infineon with help of the LBM platform will make devices like TVs, smart speakers, and smart home appliances aware of people and the world around them.”

They had me at “sensor chips with artificial intelligence functionality.” After that, I was “putty in their hands.” I just bounced over to the Archetype AI website to discover that they are on a mission to UNDERSTAND THE REAL WORLD IN REAL TIME.

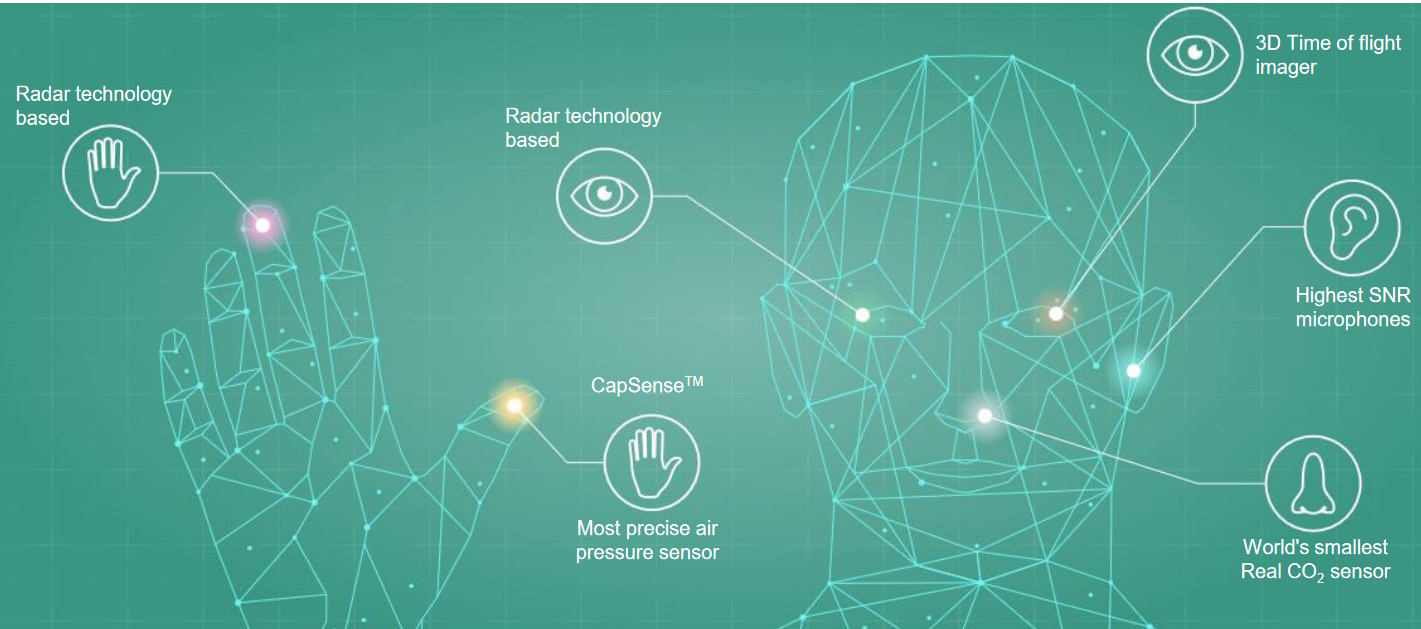

It’s no secret that the folks at Infineon design and manufacture a wide variety of XENSIV sensors, including MEMS microphones, 3D Time-of-Flight (TOF) image sensors, and high-precision, small form-factor environmental sensors. However, the sensors that were the focus of our conversation were Infineon’s ultra-low-power 3D radar sensors.

Giving “things” human senses (Source: Infineon)

When it comes to things like object detection and recognition, many people's knee-jerk reaction is to think of optical, camera-based technology, but radar can offer many advantages. For example, radar can detect even the smallest motions, such as the rise and fall of someone's chest as they breathe. This means there's no need to periodically jump up and down waving your arms to keep the lights on (yes, I'm thinking of my frustrating experiences with traditional passive infrared (PIR) sensors).
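To put a number on just how small a motion radar can notice, here's a minimal Python sketch. It assumes a 60 GHz carrier and uses the standard radar relation that moving a target by a distance d changes the round-trip path by 2d, which shifts the received phase by 4πd/λ. It then estimates a breathing rate by picking the strongest frequency in an assumed 0.1 to 0.6 Hz band. The signal is synthetic, and the function names and band limits are hypothetical. A real sensor pipeline would also need phase unwrapping, clutter removal, and a lot more besides.

```python
import math

# Assumption: a 60 GHz carrier (a band commonly used by compact radar sensors);
# this is an illustrative value, not the spec of any particular product.
C = 299_792_458.0           # speed of light, m/s
F_CARRIER = 60e9            # carrier frequency, Hz
WAVELENGTH = C / F_CARRIER  # roughly 5 mm


def phase_shift(displacement_m: float) -> float:
    """Round-trip phase change (radians) when a target moves by displacement_m."""
    return 4.0 * math.pi * displacement_m / WAVELENGTH


def breathing_rate_bpm(phase: list[float], fs: float,
                       f_lo: float = 0.1, f_hi: float = 0.6) -> float:
    """Return the strongest frequency in [f_lo, f_hi] Hz, in cycles per minute.

    Uses a plain DFT over an (already unwrapped) phase signal sampled at fs Hz.
    """
    n = len(phase)
    mean = sum(phase) / n
    x = [p - mean for p in phase]  # remove the static (DC) phase offset
    best_f, best_power = 0.0, -1.0
    for k in range(1, n // 2):
        f = k * fs / n
        if not f_lo <= f <= f_hi:
            continue  # only consider a plausible breathing band
        re = sum(x[i] * math.cos(2 * math.pi * k * i / n) for i in range(n))
        im = sum(x[i] * math.sin(2 * math.pi * k * i / n) for i in range(n))
        power = re * re + im * im  # energy in DFT bin k
        if power > best_power:
            best_f, best_power = f, power
    return best_f * 60.0


if __name__ == "__main__":
    # Synthetic signal (not measured data): 1 mm chest motion at 0.25 Hz,
    # i.e., 15 breaths per minute, sampled at 20 Hz for 32 s.
    fs = 20.0
    samples = [
        phase_shift(1e-3 * math.sin(2 * math.pi * 0.25 * i / fs))
        for i in range(int(fs * 32))
    ]
    print(f"1 mm of motion -> {phase_shift(1e-3):.2f} rad of phase")
    print(f"Estimated rate: {breathing_rate_bpm(samples, fs):.1f} breaths/min")
```

Under these assumptions, a mere 1 mm of chest movement swings the phase by roughly 2.5 radians. That helps explain how radar can pick up something as subtle as breathing.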

Radar is versatile and flexible (it can sense distance and velocity, breathing and heart rate, people and gestures), it's environmentally robust (it works in both sunlight and darkness and is resilient to dust, fog, and temperature), and it can detect anonymously (it captures no images) and through obstacles, which means it can be inconspicuously embedded in end products (see also Radar Sensors for the IoT).
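Radar's distance-and-velocity trick can be sketched with two textbook formulas. The sketch assumes a frequency-modulated continuous-wave (FMCW) design, which is a common architecture for this class of sensor; whether any specific Infineon part works exactly this way is an assumption here. The echo from a target at range R arrives slightly delayed, so mixing it with the outgoing chirp yields a "beat" frequency proportional to R, while motion adds a Doppler shift. The chirp bandwidth, chirp duration, and carrier below are hypothetical values, and the function names are made up for illustration.

```python
# Assumption: an FMCW radar; all parameters below are illustrative, not datasheet values.
C = 299_792_458.0  # speed of light, m/s


def range_from_beat(f_beat_hz: float, bandwidth_hz: float, chirp_s: float) -> float:
    """Target range from the beat frequency: R = c * f_beat / (2 * slope)."""
    slope = bandwidth_hz / chirp_s  # chirp slope, Hz per second
    return C * f_beat_hz / (2.0 * slope)


def range_resolution(bandwidth_hz: float) -> float:
    """Smallest separable difference in range: c / (2 * B)."""
    return C / (2.0 * bandwidth_hz)


def velocity_from_doppler(f_doppler_hz: float, carrier_hz: float) -> float:
    """Radial velocity from the Doppler shift: v = wavelength * f_d / 2."""
    wavelength = C / carrier_hz
    return wavelength * f_doppler_hz / 2.0


if __name__ == "__main__":
    B, T_CHIRP, F_C = 5e9, 64e-6, 60e9  # hypothetical chirp settings
    print(f"Range resolution: {range_resolution(B) * 100:.1f} cm")
    print(f"1 MHz beat -> {range_from_beat(1e6, B, T_CHIRP):.2f} m")
    print(f"400 Hz Doppler -> {velocity_from_doppler(400.0, F_C):.2f} m/s")
```

With a hypothetical 5 GHz sweep, two reflectors only about 3 cm apart in range could in principle be told apart. At 60 GHz, a 400 Hz Doppler shift corresponds to something moving at about 1 m/s, which is roughly a slow walk.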

The combination of Archetype AI's AI (if you see what I mean) with Infineon's 3D radar sensors will allow developers to uncover hidden patterns of behavior in unstructured sensor data and to blend real-time sensor data with natural language, creating a living view of the world with which to analyze complex sensor-based data (again, try saying that ten times quickly).

I can easily envision the day when AI-augmented sensors surround us, powering products that hang onto every word and gesture, poised to pounce into action to sate and satisfy our every whim. How about you? Are you, like me, preparing yourself for a life of intelligent sensor-powered delectation and delight?