[A previous version of this article contained a metaphor involving watching pornography, which has been removed, with our apologies.]

For two days in late August, an estimated 1,000 engineers crowded into Stanford Memorial Hall in Palo Alto, Calif., for Hot Chips 2019. #HotChips19, aka #HotChips31, featured some of the most impressive microprocessors seen in Silicon Valley in some time. That’s because we are in a new golden age of chip architecture, according to tech gurus John Hennessy and David A. Patterson, who co-wrote a leading textbook on computing and were among the engineers at the annual event.

The golden age was born when two opposing trends smashed into each other – the decline of Moore’s law and the rise of deep learning. The decline in Moore’s law basically means tomorrow’s semiconductor process technologies won’t speed up yesterday’s processor designs anymore.

That’s bad timing because, since about 2012, the computer industry has discovered a whole new way to compute stuff with deep learning – aka AI. It can identify your face in a picture, translate an article like this one into Hebrew, and even make occasionally smart dinner recommendations faster than your significant other. The trouble is, it needs lots of high-performance microprocessors running mountains of linear algebra equations.

So, silicon-savvy venture capitalists and entrepreneurs have cold-called every chip architect in their Rolodexes over the last few years. They’ve formed a few dozen startups and as many projects in major companies to create the new Holy Grail – the AI accelerator. This new breed of chips comes in two flavors – training (chocolate) and inference (vanilla), though, eventually, there will be several flavors of inference.

Reveals of these AI accelerators made up about half of the two-day program of Hot Chips 2019. As it turns out, there’s a boatload of other things happening in tech these days, so the other half was a Baskin Robbins of silicon.

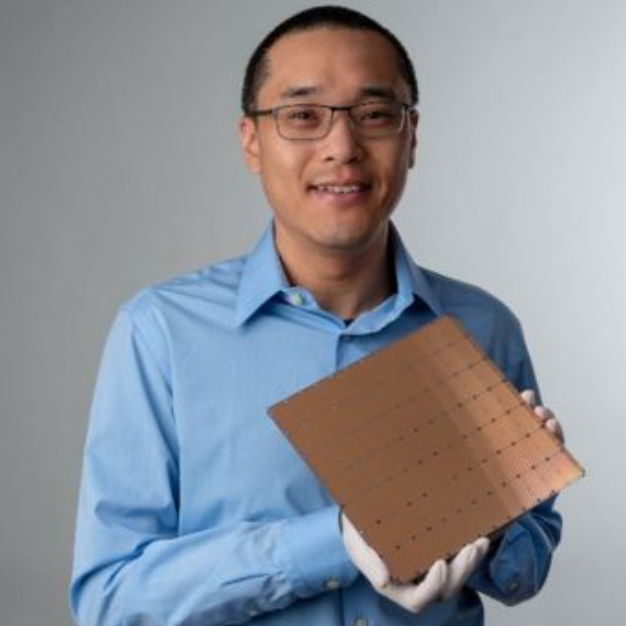

Startup Cerebras won the award for the biggest reveal – an entire wafer (minus shaved-off silicon moustaches) dedicated to AI training. It was the talk of the show, with one investor calling its approach a whole new way to build computers.

Time will tell whether Cerebras can actually ship and sell its 46,225 mm² processor profitably, let alone whether anyone else will be able to master its technique of wafer-scale integration. Others have tried and failed.

Huawei in some ways had an even more evocative treat for the crowd. It laid out details of its entire family of AI processors and the systems they go into. A top Huawei engineer did this from the safety of a video hookup from China, lest the Trump administration arrest him for indecency or some other trade violation.

China’s communications-systems giant made its point that it could be almost entirely self-sufficient in this new ultra-competitive area of AI. It needs only a leading-edge, high-volume foundry, and the main country with one of those is Taiwan, which China apparently hopes to annex, along with Hong Kong, someday.

Alibaba, the Amazon of the East, doesn’t need any AI accelerators from the West either, it seems. It described a part that could process voice commands for a smart speaker in 1/50th the time of a top GPU. So watch out, the Party is listening, and it can understand what you are saying.

Not to be outdone, Intel announced two AI accelerators at the event – one chocolate and one vanilla. One of its veteran firefighters, Gadi Singer, was in the wings making sure the talks came off without a hitch. Intel even staged a nearby tongue-in-cheek show one evening after Hot Chips with two of its top architects working on a third chip known so far only by a showbiz name meant to inflame the imagination of techies – Xe. The “e” apparently stands for exascale, in homage to the Aurora supercomputer expected to be the first system using it.

Rounding out the AI part of the program, startup Habana described a chocolate chip that can scale out cheaply using standard interfaces such as Ethernet. Xilinx described its chip that can make chocolate or vanilla, and Nvidia, which leads in data center AI, disclosed a research project – sorry, no peeks at its 7nm Ampere until 2020!

Like Nvidia, Facebook just teased the crowd with a talk on Zion, its accelerator-agnostic system. No word on what, if any, silicon it is designing, although it is working on an open-source AI compiler to pour over Zion. O happy day!

In a sign of how fast AI is moving, the MLPerf non-profit that is developing benchmarks suggested that it wants to double the number of metrics it has created so far. In addition, it is broadening its purview to include repositories of data sets and best practices.

At this rate, the organizers of Hot Chips 2020 will have to turn away good AI papers or rename the event AI Chips. Luckily, that was not the case this year.

Tesla gave a talk on its accelerator for self-driving cars. Intel and Hewlett Packard Enterprise described advances in storage-class memory with Optane chips and the first Gen-Z chipset, respectively.

A handful of talks described emerging packaging technologies that are cropping up almost as fast as new architectures. (Arguably we are also in a golden age of chip packaging.) And AMD even revealed a new x86 core – the sort of talk that would have been the highlight of the show back in the golden age of PCs.

At the end of the second day, organizers scheduled a fun talk to help engineers cool down before they were released back into the daylight of the Stanford campus. Microsoft described the silicon guts of its latest HoloLens mixed-reality headset. Yes, Virginia, all this and VR, too!

Attendance broke historical records for the event that was created back in the heyday of the 386. Red wine flowed at evening receptions as engineers reconnected with former colleagues and bosses and networked with potential new ones.

There were plenty of reasons to celebrate. Salaries for AI-savvy engineers are going through the roof, and lifetime employment is virtually guaranteed for anyone who can spell RTL.

It’s a little tougher for those of us who write about all this stuff, given the broken print advertising model (thank you, Google). But hey, even we non-technical folks downstream picked up on the excitement and had a bit too much wine.

Of all the analogies you could pick to describe attendee enthusiasm, you pick porn?

Could this be a reason why women are leaving engineering?

It could be a reason I leave this magazine.

@SK: It sounds like you were strongly offended by my choice of metaphor, which was not my intention. It would be really unfortunate if anyone chose to stop reading EE Journal – or worse – to leave or not enter engineering due to that choice.

I am offended. I’m an engineer and I am excited by brilliant advances in science and technology. To suggest that I’d be equally excited by a porn festival is an insult, pure and simple. And, to the degree that other male engineers are NOT offended by your analogy, it’s understandable that women don’t feel comfortable in engineering.

When I was in engineering school, 45 years ago, there were six women in my class of 150. They were bright, hard-working, and as interested in understanding how things worked as anyone. It was a pleasure to learn alongside them. In the four decades since, I’ve been saddened to see that their ranks have not increased. Engineering is still a boys’ club, and your analogy is disheartening evidence of it.

Were your daughter an engineer, would you ask her if the Hot Chips conference was as exciting to her as a porn film festival?

Yes, it would be unfortunate for a woman to not enter engineering because of misogyny. So stop promoting it.

SK – I agree with you.

As editor in chief of EE Journal, I would like to apologize. Our EE Journal team is mostly women and we take issues like gender disparities in engineering seriously.

We also have a tradition of hiring highly-skilled and knowledgeable writers, and giving them a lot of trust and discretion in their work. We will revisit that policy when trying out new writers, and we are re-writing this article with that in mind.

Thank you … for responding to SK and moving EEJ into a better place.

I’ve mentored more than a few women in engineering over the last 55 years, all of whom have struggled badly against the boys’ club. This includes my daughter, who struggled with a career as a MechEng in the all-boys club of a very large US company. Great annual reviews, but no advancement opportunity. She finally changed companies after being locked out of advancement after advancement for years.

This is certainly getting worse as we have more foreign-born and -raised engineers and managers who clearly, and often vocally, object to women taking a man’s job because it conflicts with the culture they were raised in.

And people wonder why retention of women engineers in the industry is so bad.

Prompt, responsible, & productive solution. Thank you for stepping up quickly to rectify and adjust going forward.