In IoTland, communication is king. It puts the Internet in the IoT. But how to communicate? LTE? Bluetooth? WiFi? It partly depends on the application and the location, but it also depends on how far you are from a wall plug.

Because many IoT devices are small, portable, and – this is key – battery-powered, they will forever be infinitely far away from the wall plug. WiFi has featured in IoT discussions for years, but there’s always been that bit about it consuming too much energy for thrifty tastes. So what to do about that? One approach is to use purpose-built protocols like ZigBee. But, man, when something is as ubiquitous as WiFi – it’s on our phones and in our routers – it’s hard to compete with, even if you have a lower-power solution.

The other approach, of course, is to reduce the energy consumption of the WiFi implementation (which WiFi folks would be well motivated to do to avoid losing out to something with lower power). Obviously, you can do only so much when constrained by a protocol that was invented long before the IoT was a gleam in anyone’s eye. But what if you could cut the WiFi power in half? Would that ease the pain enough to forgo swapping protocols?

Silicon Labs claims to have done just that. Through a number of means – which we’ll address – they say that the energy requirements for their WiFi chip are 50% lower than those of a low-power WiFi competitor (for DTIM=3 – more on this later). Let’s walk through what they credit for these substantial savings.

Respect

When you ask them how they did it, one of their early answers is, “We respect the network, and we respect the data.” So… what does that mean? First, they’ve architected for fewer retries. How does that matter? WiFi can be a crowded environment (even worse if there are other protocols at play in the same spectrum). If you try to send, you might be blocked – so you back off and try again later. Or, if a message isn’t received correctly, you may have to resend. Each sending attempt uses energy.
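To see why retries matter so much, here’s a back-of-the-envelope model; it’s a sketch under stated assumptions, not Silicon Labs’ analysis. If each transmission attempt succeeds independently with probability p, the number of attempts follows a geometric distribution with mean 1/p, so the expected transmit energy per delivered message scales as (energy per attempt)/p. The function names, the 50-µJ per-attempt energy, and the success probabilities are all hypothetical.

```python
def expected_attempts(p_success, max_attempts=None):
    """Mean number of transmit attempts per message.

    Assumes each attempt succeeds independently with probability p_success.
    With no retry limit this is 1/p (geometric distribution); with a limit
    of N attempts it is (1 - (1 - p)**N) / p.
    """
    if not 0.0 < p_success <= 1.0:
        raise ValueError("p_success must be in (0, 1]")
    if max_attempts is None:
        return 1.0 / p_success
    q = 1.0 - p_success
    return (1.0 - q ** max_attempts) / p_success


def expected_energy_uj(energy_per_attempt_uj, p_success, max_attempts=None):
    """Expected transmit energy per message, in microjoules."""
    return energy_per_attempt_uj * expected_attempts(p_success, max_attempts)


if __name__ == "__main__":
    # Hypothetical: 50 uJ per attempt on a clean vs. a crowded channel.
    for p in (0.9, 0.5):
        print(f"p={p}: {expected_energy_uj(50.0, p):.1f} uJ per message")
```

The shape is the point: as the per-attempt success rate drops, energy per delivered message climbs as 1/p, so anything that raises first-time success pays off directly in battery life.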

Features that contribute to fewer retries include:

- Well-managed antenna diversity for clearer reception (and therefore fewer failed transmissions).

- A 115-dB link budget, increasing the likelihood that a message is received successfully the first time.

- Better coexistence with other 2.4-GHz protocols like Bluetooth, which they claim to have achieved. They say that, in IoT devices, the transceivers often clobber each other. And, of course, a clobbered message isn’t a received message, so it triggers a retry.
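A link budget is simply transmit power minus receiver sensitivity, both in dBm, which is why the result is in dB. As a rough illustration, and assuming ideal free-space propagation (real indoor paths lose far more), the sketch below turns a budget into a maximum range at 2.4 GHz using the free-space path-loss formula, 20·log10(4πdf/c). The +17-dBm/−98-dBm split is hypothetical; only the 115-dB total comes from Silicon Labs, and the function names are illustrative.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def link_budget_db(tx_power_dbm, rx_sensitivity_dbm):
    """Link budget in dB: transmit power minus receiver sensitivity (both dBm)."""
    return tx_power_dbm - rx_sensitivity_dbm


def free_space_path_loss_db(distance_m, freq_hz):
    """Free-space path loss: 20*log10(4*pi*d*f/c)."""
    return 20.0 * math.log10(4.0 * math.pi * distance_m * freq_hz / SPEED_OF_LIGHT_M_S)


def max_free_space_range_m(budget_db, freq_hz):
    """Distance at which free-space path loss uses up the entire budget."""
    return SPEED_OF_LIGHT_M_S / (4.0 * math.pi * freq_hz) * 10.0 ** (budget_db / 20.0)


if __name__ == "__main__":
    # Hypothetical split adding up to 115 dB: +17 dBm out, -98 dBm sensitivity.
    budget = link_budget_db(17.0, -98.0)
    freq = 2.44e9  # roughly the middle of the 2.4-GHz band
    print(f"budget {budget:.0f} dB -> free-space range ~"
          f"{max_free_space_range_m(budget, freq):,.0f} m")
```

The useful intuition is the last relation: every extra 6 dB of budget roughly doubles free-space range or, at a fixed distance, buys margin against fades and interference that would otherwise cause retries.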

Then there’s security. They point specifically to a WiFi exploit called Broadpwn, which brought malware worms back into our world after a couple of OS improvements had made them mostly a thing of the past. And there was one specific reason why this was possible: the Broadcom WiFi chip that the attackers exploited did no code attestation. That meant the attackers could change the chip’s code without the system noticing.

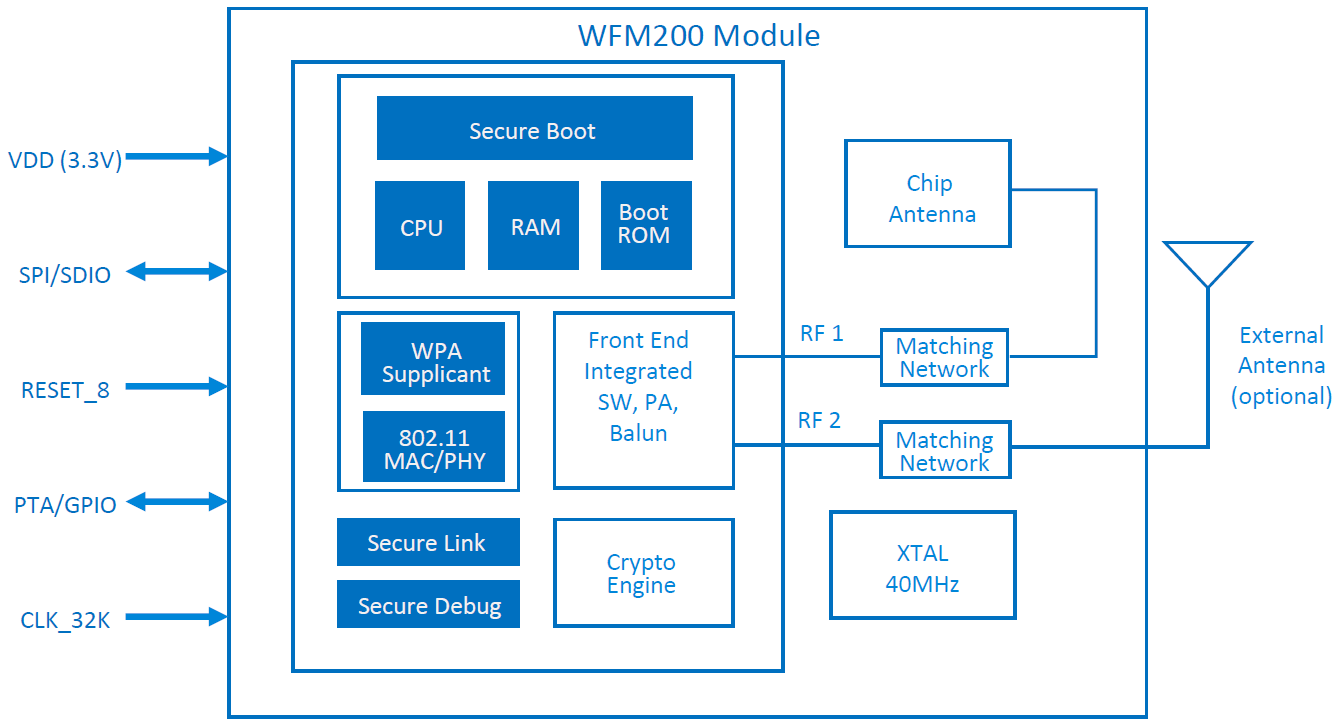

So the Silicon Labs parts have a secure bootloader: the trusted software fails to boot if it has been changed, and updates are secured as well.
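The idea behind code attestation fits in a few lines. This is a minimal sketch, not Silicon Labs’ bootloader: a real secure boot would most likely verify a public-key signature anchored in hardware, whereas here an HMAC-SHA256 over the firmware image, keyed with a hypothetical device secret, stands in for that signature so the example runs on Python’s standard library alone. `DEVICE_KEY`, `sign_image`, `verify_image`, and `boot` are hypothetical names.

```python
import hashlib
import hmac

# Hypothetical device secret; on real hardware it would live in protected
# storage, never in source code.
DEVICE_KEY = b"example-device-key-0123456789abc"


def sign_image(image: bytes, key: bytes = DEVICE_KEY) -> bytes:
    """Produce an authentication tag over the image (stand-in for a signature)."""
    return hmac.new(key, image, hashlib.sha256).digest()


def verify_image(image: bytes, tag: bytes, key: bytes = DEVICE_KEY) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = hmac.new(key, image, hashlib.sha256).digest()
    return hmac.compare_digest(expected, tag)


def boot(image: bytes, tag: bytes) -> str:
    """Refuse to run any image whose tag doesn't match."""
    if not verify_image(image, tag):
        raise RuntimeError("firmware attestation failed; refusing to boot")
    return "booted"


if __name__ == "__main__":
    firmware = b"\x01\x02 original firmware"
    tag = sign_image(firmware)
    print(boot(firmware, tag))
    try:
        boot(firmware + b" patched by attacker", tag)  # tampered image
    except RuntimeError as err:
        print(err)
```

The same check could gate an update, accepting a new image only if its tag verifies; that’s the property Broadpwn’s target lacked.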

Then there’s the possibility of sniffing data on the board or module. The WiFi chip handles the lower layers of the protocol; the upper layers are handled by the host processor. That means that, after the lower layers have been processed, the packet gets transferred across a physical connection to the host. If someone can access that data while it’s transferred, then you have a breach.

So Silicon Labs has implemented an encrypted connection on the SPI link between the WiFi chip and the host MCU. The chip comes with a pre-installed master key, stored in one-time-programmable (OTP) memory and unique to each device, that’s used for key validation. Bringing up the connection involves the usual public-key exchange, which yields shared symmetric keys for the AES encryption. During authentication, the public key is “tagged and then authenticated with” that stored key. Meanwhile, standard network encryption protects traffic to and from the outside world. All of the cryptography is accelerated in hardware.


(Image courtesy Silicon Labs.)
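The link bring-up described above (exchange public keys, authenticate each one against the per-device master key, derive a symmetric session key) can be sketched as follows. Every algorithm here is an assumption for illustration, since Silicon Labs’ actual choices aren’t covered: toy Diffie-Hellman over the prime 2^127 − 1 (far too weak for real use), HMAC-SHA256 as the “tag” on each public key, and an HMAC-based derivation of a 128-bit key. We also assume the host holds a copy of the master key. The AES step itself is omitted because Python’s standard library has no AES.

```python
import hashlib
import hmac
import secrets

# Toy parameters: 2**127 - 1 is prime, but a 127-bit group is NOT secure.
P = 2**127 - 1
G = 3


def keypair():
    """Return (private, public) for toy Diffie-Hellman."""
    priv = secrets.randbelow(P - 3) + 2
    return priv, pow(G, priv, P)


def tag_public_key(pub: int, master_key: bytes) -> bytes:
    """Tag a public key with the per-device master key."""
    return hmac.new(master_key, pub.to_bytes(16, "big"), hashlib.sha256).digest()


def accept_peer(pub: int, tag: bytes, master_key: bytes) -> bool:
    """Accept a peer's public key only if its tag verifies."""
    return hmac.compare_digest(tag_public_key(pub, master_key), tag)


def session_key(own_priv: int, peer_pub: int, master_key: bytes) -> bytes:
    """Derive a 128-bit symmetric key (e.g. for AES) from the DH shared secret."""
    shared = pow(peer_pub, own_priv, P)
    material = b"spi-session" + shared.to_bytes(16, "big")
    return hmac.new(master_key, material, hashlib.sha256).digest()[:16]


if __name__ == "__main__":
    master = secrets.token_bytes(32)  # hypothetical OTP key, also known to the host
    chip_priv, chip_pub = keypair()
    host_priv, host_pub = keypair()
    # Each side checks the other's tagged public key before trusting it.
    assert accept_peer(chip_pub, tag_public_key(chip_pub, master), master)
    assert accept_peer(host_pub, tag_public_key(host_pub, master), master)
    k_chip = session_key(chip_priv, host_pub, master)
    k_host = session_key(host_priv, chip_pub, master)
    print("keys match:", k_chip == k_host)
```

The tag is what blocks a snooper on the board: without the master key, an attacker can’t produce a valid tag for a substituted public key, so neither side will derive a session key with it.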

These architectural features account for much of the power savings. But they say that low-power circuit design also had a part. On that score, however, they aren’t pointing to any specific circuit-design breakthroughs. It’s more a matter of ensuring that as many of the known low-power design techniques as possible are leveraged.

The results? They claim transmit current of 138 mA and receive current of 48 mA, with average current (over time) of 200 µA – with DTIM of 3. So there’s that DTIM thing again. What is that?
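The average figure is the one that matters for battery life, because the radio spends nearly all its time asleep. Here’s a minimal sketch of the arithmetic: average current is the time-weighted mean of each state’s current, and idealized runtime is capacity divided by that average. Only the 138-mA, 48-mA, and 200-µA figures come from Silicon Labs; the time fractions, the 0.05-mA sleep current, and the 1,000-mAh battery are hypothetical, and the model ignores self-discharge and the rest of the system’s draw.

```python
def average_current_ma(states):
    """Time-weighted average current.

    states: iterable of (current_mA, fraction_of_time); fractions must sum to 1.
    """
    states = list(states)
    total = sum(frac for _, frac in states)
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"time fractions sum to {total}, not 1")
    return sum(current * frac for current, frac in states)


def battery_life_hours(capacity_mah, avg_current_ma):
    """Idealized runtime: capacity divided by average draw."""
    return capacity_mah / avg_current_ma


if __name__ == "__main__":
    # Hypothetical duty cycle: 0.05% transmitting, 0.2% receiving, rest asleep.
    profile = [(138.0, 0.0005), (48.0, 0.002), (0.05, 0.9975)]
    print(f"average: {average_current_ma(profile) * 1000:.0f} uA")
    # Silicon Labs' quoted 200-uA average (DTIM 3), hypothetical 1,000-mAh cell:
    hours = battery_life_hours(1000.0, 0.200)
    print(f"{hours:.0f} h, about {hours / 24:.0f} days")
```

Under those assumptions, the quoted 200 µA works out to 5,000 hours, roughly 208 days.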

Mapping Message Intervals

For those of you who, like me, haven’t been steeped in WiFi protocol details, this turns out to be related to how multicast or broadcast messages are sent. You might wonder, do people really send multicast messages so frequently that such a feature would impact average power? Surprisingly, the answer is, “Yes” – but not for the reasons you might think.

Some such messages aren’t explicitly sent by a user: they could be system messages. A good example is when the system knows a recipient’s IP address but not its hardware (MAC) address. So it broadcasts an address request using the Address Resolution Protocol (ARP). That could happen more often than you think.
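For reference, an ARP request is a small, fixed-layout packet (RFC 826): it carries the sender’s MAC and IP addresses plus the target IP, with the target MAC left as zeros because that’s the unknown being asked about, and it goes out addressed to the broadcast MAC address ff:ff:ff:ff:ff:ff. The sketch below builds one with Python’s `struct` module, shown in Ethernet framing for simplicity; the addresses and helper names are made up for illustration.

```python
import socket
import struct

BROADCAST_MAC = b"\xff" * 6


def mac_bytes(mac: str) -> bytes:
    """Convert 'aa:bb:cc:dd:ee:ff' to 6 raw bytes."""
    return bytes(int(part, 16) for part in mac.split(":"))


def arp_request(sender_mac: str, sender_ip: str, target_ip: str) -> bytes:
    """Build the 28-byte ARP payload asking 'who has target_ip?'."""
    return struct.pack(
        "!HHBBH6s4s6s4s",
        1,            # hardware type: Ethernet
        0x0800,       # protocol type: IPv4
        6,            # hardware address length
        4,            # protocol address length
        1,            # operation: 1 = request (2 = reply)
        mac_bytes(sender_mac),
        socket.inet_aton(sender_ip),
        b"\x00" * 6,  # target MAC: unknown, which is why we're asking
        socket.inet_aton(target_ip),
    )


def ethernet_frame(src_mac: str, payload: bytes) -> bytes:
    """Wrap the ARP payload in a frame addressed to every station on the link."""
    return BROADCAST_MAC + mac_bytes(src_mac) + struct.pack("!H", 0x0806) + payload


if __name__ == "__main__":
    req = arp_request("02:00:00:aa:bb:cc", "192.168.1.20", "192.168.1.1")
    frame = ethernet_frame("02:00:00:aa:bb:cc", req)
    print(len(req), "byte ARP payload; destination", frame[:6].hex(":"))
```

Because the destination is the broadcast address, every station on the network, sleeping IoT devices included, is a potential recipient, which is exactly the kind of traffic the DTIM governs.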

A sleeping device doesn’t receive a unicast message the moment it arrives. Instead, the access point holds the message and announces it in the beacons it sends out at regular intervals; each beacon carries a Traffic Indication Map (TIM) that tells devices whether something is waiting for them. (It’s a map because it’s literally a bitmap, with one bit per associated device.) For multicast and broadcast messages, however, there’s a separate interval, governed by the Delivery TIM (DTIM) – yeah, these names don’t seem (at least at first glance) to be particularly helpful in understanding what they do.

The idea is that you don’t necessarily have to alert recipients to broadcast traffic as often as to unicast traffic. You could do so with every beacon, or you could let some number of beacons go by between broadcast advisories. That’s what the DTIM period specifies: how many beacons (each carrying a TIM) elapse per DTIM. A DTIM of 1 means that every beacon can carry broadcast advisories; a DTIM of 3 means that only every third beacon does.
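To make the counting concrete: beacons go out at a fixed beacon interval measured in 802.11 time units (TUs) of 1,024 µs, with 100 TU a common default, and every DTIM-period-th beacon is a DTIM beacon. This sketch assumes a device with no unicast traffic pending, which only needs to wake for DTIM beacons to catch buffered broadcasts; the function names are hypothetical.

```python
TU_US = 1024  # one 802.11 time unit, in microseconds


def dtim_beacon_indices(num_beacons, dtim_period):
    """Indices of beacons that carry a DTIM, counting the first beacon as one."""
    if dtim_period < 1:
        raise ValueError("DTIM period must be >= 1")
    return [i for i in range(num_beacons) if i % dtim_period == 0]


def wake_interval_us(dtim_period, beacon_interval_tu=100):
    """Time between DTIM beacons: how often a broadcast-only listener wakes."""
    return dtim_period * beacon_interval_tu * TU_US


if __name__ == "__main__":
    for period in (1, 3):
        print(f"DTIM {period}: DTIM beacons {dtim_beacon_indices(10, period)}, "
              f"wake every {wake_interval_us(period) / 1000:.1f} ms")
```

With DTIM 3, only beacons 0, 3, 6, and 9 of the first ten are DTIM beacons, and the wake interval stretches from 102.4 ms to 307.2 ms.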

Having a higher DTIM number means a device wakes for broadcast advisories less often, which results in lower power. But it also adds to the latency of those broadcast/multicast messages. And if one of those, for example, is an ARP intended to properly route a unicast message, then a longer-latency ARP results in a longer-latency unicast message. So… as always, it’s a tradeoff.
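The tradeoff can be put in numbers. Assuming broadcast frames arrive at random times, a frame waits at the access point half a DTIM interval on average and a full interval at worst, while wake-ups per second fall in proportion to the DTIM period. The sketch keeps the hypothetical 100-TU beacon interval and counts wake-ups rather than energy, since the energy per wake-up isn’t given; `dtim_tradeoff` is a hypothetical name.

```python
TU_US = 1024  # one 802.11 time unit, in microseconds


def dtim_tradeoff(dtim_period, beacon_interval_tu=100):
    """Return (wakeups_per_second, mean_latency_ms, worst_latency_ms).

    Assumes broadcasts arrive uniformly at random between DTIM beacons.
    """
    interval_us = dtim_period * beacon_interval_tu * TU_US
    wakeups_per_s = 1_000_000 / interval_us
    return wakeups_per_s, interval_us / 2 / 1000, interval_us / 1000


if __name__ == "__main__":
    for period in (1, 3, 10):
        w, mean_ms, worst_ms = dtim_tradeoff(period)
        print(f"DTIM {period:2d}: {w:5.2f} wake-ups/s, broadcast latency "
              f"~{mean_ms:.1f} ms avg, {worst_ms:.1f} ms worst")
```

Going from DTIM 1 to DTIM 10 cuts wake-ups tenfold but stretches worst-case broadcast latency from 102.4 ms to 1,024 ms.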

What’s for Sale?

What does this all mean in terms of actual product? Well, technically, nothing’s for sale at the moment. Samples are ready now, but full production starts in Q4 of 2018. And there are three components to the offering: chips, a module, and software.

The WF200 transceiver chips will be available for those building their own boards or modules. The WFM200 SiP modules, on the other hand, are good to go as is – including pre-certification for the US (FCC), Europe (CE), Canada (IC), South Korea, and Japan. The module itself is 6.5 mm on a side, not much bigger than the solo transceiver, which is 4 mm on a side.

(Image courtesy Silicon Labs.)

Finally, there are two software options. One runs the upper MAC layer (UMAC) on the chip itself, in hardware. The other runs the UMAC in software on the host. Now, if you’re like me – knowing just enough to look stupid – then you might ask, “If I can do it in hardware, why would I ever opt to do it in software instead?”

Well, I guess if the WiFi standard were iron-clad and unambiguous, with only required features, you never would. But… according to Silicon Labs, that’s not the case. Some features aren’t implemented in hardware, and other aspects of the standard aren’t particularly clear – and therefore might be implemented differently by different people. So if the hardware implementation suffices, you’re good. If not, you have the software option. Doing it in software, of course, requires more computing resources than using the hardware. So, again, a tradeoff.
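The decision boils down to a subset check: use the on-chip UMAC if it covers everything the application needs, otherwise fall back to the host. Here’s a minimal sketch; the feature names and `choose_umac` are hypothetical placeholders, not a list of what the WF200’s on-chip UMAC actually supports.

```python
# Hypothetical feature set, for illustration only.
ONCHIP_UMAC_FEATURES = frozenset({"station", "wpa2_personal", "power_save"})


def choose_umac(required_features, onchip=ONCHIP_UMAC_FEATURES):
    """Prefer the on-chip UMAC; fall back to the host only when something is missing.

    Returns (placement, missing_features).
    """
    missing = set(required_features) - set(onchip)
    if not missing:
        return "on-chip", set()
    return "host", missing


if __name__ == "__main__":
    print(choose_umac({"station", "power_save"}))
    print(choose_umac({"station", "vendor_extension_x"}))
```

Returning the missing features makes the cost explicit: each one is a reason to spend host MCU cycles and memory on the software UMAC.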


If WiFi consumes half the energy, will it work for battery-powered Things?