I tell you, I’m starting to feel like I’m riding the crest of the wave when it comes to AI in all its multifaceted glory. Back in the 2010s, I thought it was astonishing that you could show an AI images of cats, dogs, chickens, and penguins, and it could tell these little rascals apart. I know that may seem “old hat” now, but as recently as ten years ago, it was considered nothing short of mind-boggling.

Since that time, which I’ve come to think of as the true dawn of perceptive AI (everything before paled by comparison), we’ve raced through enhancive AI, assistive AI, generative AI, and—most recently—agentic AI. Now we live in the heady days of vibe coding (see also What the FAQ are Perceptive, Enhancive, Assistive, Generative, Agentic, and Physical AI (and Vibe Coding)).

It’s also increasingly common to see mention of human-in-the-loop (HITL). This refers to an AI development approach in which humans interact with machine learning models for training purposes and to improve accuracy, safety, and ethical practices. The idea is that by providing feedback, training data, and decision oversight, humans ensure that AI systems are reliable. I wonder how long it will be before this term is supplanted by human-out-of-the-loop (HOOTL). (In the days of darkness that are to come, remember you heard this term here first.)

I also have a ringside seat when it comes to watching new companies emerge into AI space (where no one can hear you scream). Some of these entities are with us for too short a time, while others seem to hit the ground running and start to soar before I’ve caught my breath.

An example of such a soaring company is EnCharge which officially launched in December 2022 (less than four short years ago as I pen these words). I had a chat with two of the company’s founders at the start of 2023, only a month or so after they emerged from stealth mode (see Performing Extreme AI Analog Compute Sans Semiconductors).

In a crunchy nutshell, AI can be largely split into training on the frontend and inference at the backend. Training is performed once (well, relatively few times), while inference is performed millions or billions of times. Around 95% to 99% of inference involves matrix operations, at the core of which are multiply-accumulates. The folks at EnCharge have come up with a novel way to perform these multiply-accumulates in low-power, high-performance analog, the nitty-gritty details of which are discussed in my Performing Extreme AI Analog Compute Sans Semiconductors article.

Well, here we are, just a couple of years later, and EnCharge is going from strength to strength. I was just chatting with CEO Naveen Verma, who was kind enough to bring me up to speed with the company’s latest developments.

What makes EnCharge especially intriguing is that they are not simply building yet another digital AI accelerator. Their secret sauce lies in performing the core multiply-accumulate operations directly in memory using analog techniques. By reducing the need to shuttle data back and forth between memory and compute blocks—a major source of power loss in conventional architectures—they can achieve dramatically better efficiency.

Of course, clever silicon on its own is only half the battle. Since we last spoke, EnCharge has also built out the software stack—quantization tools, compiler flows, runtime support, and firmware—to ensure that real-world AI workloads can actually take advantage of all this analog wizardry.

The chaps and chapesses at EnCharge are still pursuing an edge-to-cloud strategy. Their target is outside the data centers, close to the edge, but not at the extreme edge. Their original focus was large-scale automation tasks that require state-of-the-art AI/ML models, including industrial, smart manufacturing, smart retail, warehouse logistics, robotics, and so forth.

Large-scale automation is still a major focus, but a lot has happened since 2023, resulting in greater urgency to put serious AI horsepower onto end users’ local compute platforms. I know I would love to be in a position to have my own high-end AIs running locally on my home and office workstations.

So, you can only imagine my surprise and delight to be introduced to the EN100, which the folks at EnCharge describe as “The game-changing AI accelerator that delivers 200 TOPS of AI computer power in an ultra-efficient chip. Engineered to bring advanced AI capabilities to laptops, workstations, and other compute appliances, EN100 enables a new era of personalized AI at the edge.”

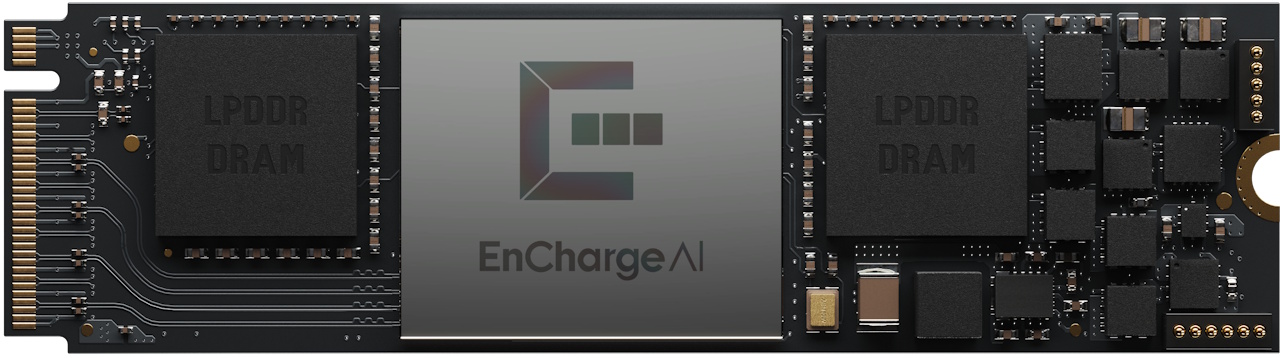

As shown below, a single EN100 device, which is implemented in the 16nm CMOS process node, is available on an M.2 2280 card for use in laptop computers. This bodacious beauty offers 200+ TOPS while consuming only ~8.25W. It’s equipped with 32GB of on-board memory with up to 68GB/s bandwidth (wow!).

Single EN100 presented on an M.2 card for use in laptops (Source: EnCharge)

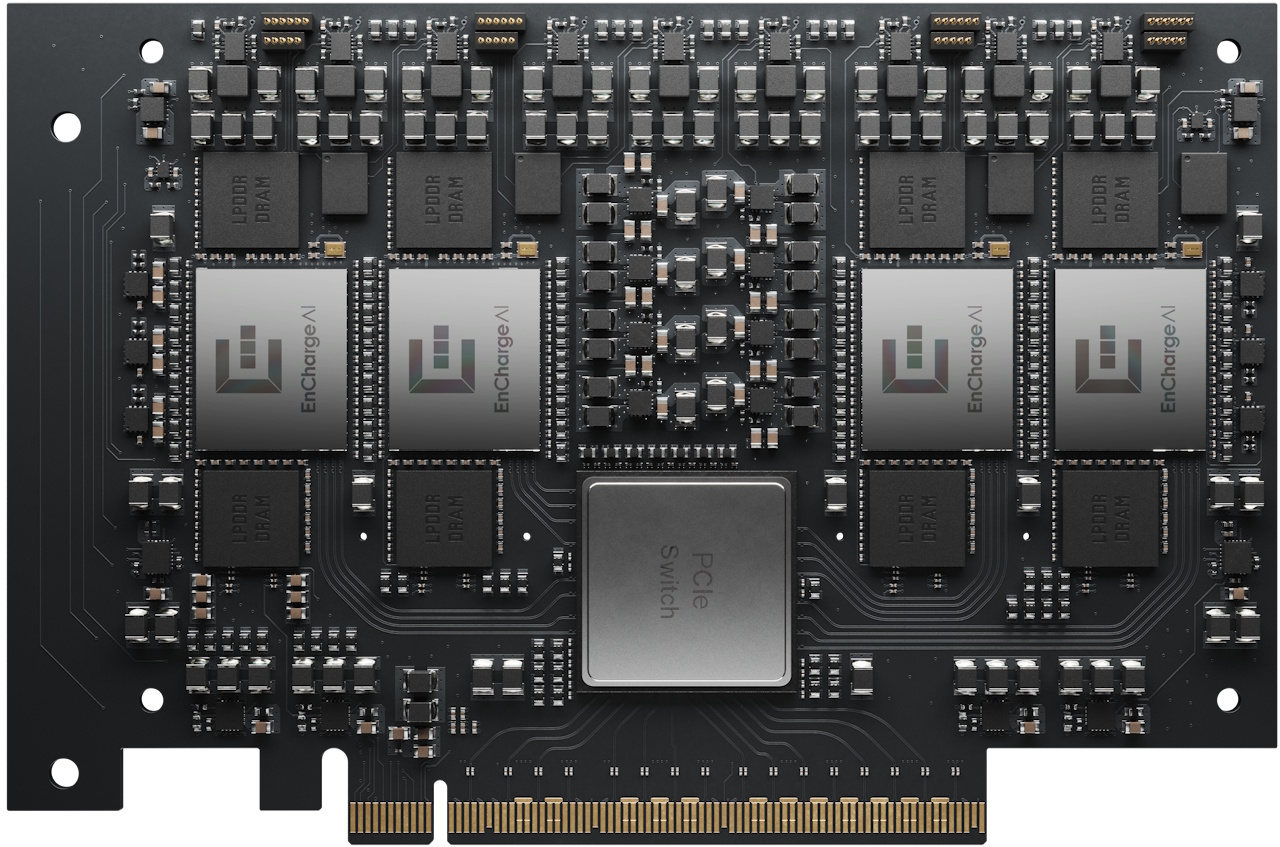

Alternatively, you can deploy four EN100 devices on a PCIe card as shown below. This awesome scamp offers ~1 PetaOPS of peak TOPS while consuming only ~40W. It’s equipped with 128GB of on-board memory with a cumulative bandwidth of 272GB/s (wow^2!).

This allows you to bring data center AI performance to your desktops, workstations, and on-prem servers with GPU-level compute capacity at a fraction of the cost and power consumption. As the folks at EnCharge say, this is “ideal for professional AI applications in secure and sovereign multi-user environments.”

Four EN100s presented on a PCIE card for use in desktops, workstations, and on-prem servers (Source: EnCharge)

And this could be just the beginning. As Naveen pointed out, physical AI—robots, autonomous machines, and other systems that interact with the real world—is a natural next step. On many robotic platforms, AI compute can consume a surprisingly large chunk of total system power. Cut that compute power dramatically, and you can also reduce cooling, battery size, weight, and even motor requirements. In short, making AI more efficient doesn’t just improve a robot’s brain; it can transform its whole body.

When I stop and think about it, what really strikes me is how quickly our expectations have changed. Barely a decade ago, we were marveling that machines could distinguish cats from chickens (I don’t know about you, but I still am). Now we’re comfortable talking about fitting serious AI acceleration into laptops, workstations, robots, and who knows what else. If the past few years have taught us anything, it’s that tomorrow has a habit of arriving far sooner than expected. I think it’s safe to say that the future may be even more extraordinary—and more exciting—than we can currently imagine (and you can quote me on that).