As I’ve mentioned on several previous occasions, when I commenced my degree course in Control Engineering at Sheffield Hallam University in England in the mid-1970s, the only computer available to students that physically resided in the engineering department building was a room-sized analog beast. We implemented our algorithms on this machine by using plug-in cables to connect its various analog functions.

We did have access to a time-sharing digital monster that resided in its own environmentally controlled building. In this case, we captured our programs on perforated paper products like paper tape and punched cards, and then we hand-carried these creations to the computer building to be processed whenever its surly gatekeepers felt moved to do so.

As the years passed by, digital computing and digital signal processing (DSP) rose to ascendancy while analog computing and analog signal processing (ASP) largely faded from the collective consciousness. Then along came artificial intelligence (AI) and machine learning (ML). Surprise! Although digital inferencing engines currently dominate the scene, we are seeing an ever-increasing number of analog technologies entering the fray.

When one thinks about it, inferencing using an artificial neural network (ANN) is essentially signal processing, irrespective of whether we employ digital or analog techniques. Suppose we are looking for a relatively small number of keywords or command phrases, like “TV On,” “Channel 20,” “Volume Up,” and so forth. Each of these commands will have an associated output neuron that reports its confidence level that it has detected the keyword or phrase to which it’s dedicated.
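To make the "one output neuron per command" idea a little more concrete, here is a minimal Python sketch of how a host might turn a set of confidence levels into a decision. The keyword list, the 0.0 to 1.0 confidence scale, and the 0.8 threshold are all assumptions of mine for illustration; none of them comes from Blumind.

```python
# Hypothetical command set, one entry per output neuron (illustrative only).
KEYWORDS = ["TV On", "Channel 20", "Volume Up"]


def detect_keyword(confidences, threshold=0.8):
    """Pick the most confident output neuron, if it is confident enough.

    `confidences` holds one value per keyword, assumed to lie in 0.0..1.0.
    Returns (keyword, confidence), or (None, confidence) when even the
    best neuron falls below the (assumed) threshold.
    """
    if len(confidences) != len(KEYWORDS):
        raise ValueError("expected one confidence value per keyword")
    # Index of the neuron reporting the highest confidence.
    best = max(range(len(confidences)), key=lambda i: confidences[i])
    if confidences[best] >= threshold:
        return KEYWORDS[best], confidences[best]
    return None, confidences[best]
```

Calling `detect_keyword([0.05, 0.92, 0.10])` picks the second command, while a set of uniformly lukewarm confidences yields no detection at all, which is what we want from something that is always listening.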

I am, of course, a devotee of the digital domain. It must be acknowledged, however, that performing many computational and signal processing tasks using digital techniques can be horrendously inefficient. By comparison, analog computation and signal processing are inherently fast, low power, and low latency.

What really amazes me is how quickly things are moving in this area. For example, I was just chatting with Roger Levinson, who is Co-Founder and CEO, and Martin Mason, who is Head of Product Marketing, at Blumind. This company, whose tagline is “AI For Everyone, Everywhere,” was established in 2020, which is only three years ago at the time of this writing. Even so, the folks at Blumind are already exiting stealth mode and entering the fray with two products: the BM110 SoC for always-on audio and time series data applications, and the BM210 for always-on video and image classification applications.

Some of Blumind’s always-on applications and markets (Source: Blumind)

For the purposes of the remainder of these discussions, we will focus on an application to detect, say, ten different spoken commands, in which case we would probably opt for one of Blumind’s off-the-shelf BM110 SoCs. The device is accompanied by an associated ANN architecture (with PyTorch, TensorFlow, Caffe, etc. representations) that can be trained in the cloud using an appropriate data set. The Blumind translator will then perform any necessary coefficient quantization, compression, and mapping into the BM110.

Roger and Martin told me that their long-term vision for this incarnation of their technology is to get the power, size, and cost so low that it can replace buttons, switches, sliders, and a wide variety of other human interface/touch interface type products.

Although I’m primarily focused on technology, and I’m less enthused by the business side of things, I was interested to learn that, in addition to their own off-the-shelf offerings, the folks at Blumind are happy to work with customers who wish to create their own devices, either in the form of complete SoCs or as chiplets (see Are You Ready for the Chiplet Age?). Furthermore, they are also happy to provide their technology in the form of IP, so you could incorporate the functionality of a BM110 (for example) directly into your own microcontroller unit (MCU), application processor (AP), or SoC.

Hang on. Wait a moment. If this is analog technology, then won’t we need to implement it using a horrendously complex process technology with lots of extra layers? I’m sure that—like me—you are thinking of some of the analog AI technologies we’ve seen that rely on boutique processes using exotic non-volatile memory type solutions or similar capabilities. If so, then I’m also sure that—like me—you are remembering some of the issues that can be associated with those technologies, like the need to address their sensitivities to process, voltage, and temperature (PVT) variations and drift.

This is where things start to get really interesting because, instead of using floating gates to store the network’s coefficients (weights), the guys and gals at Blumind are using the high-k dielectric insulating layer on the gates of the MOS transistors used in today’s advanced CMOS nodes (they store their weights as 8-bit sign-magnitude values). This means that, while traditional* memory-based analog AI inferencing solutions start to stall at older process nodes and face real challenges getting to 32nm and below, Blumind’s technology kicks in at 32nm with a roadmap all the way down to 5nm and below. (*I can scarce believe I’m saying “traditional” in the context of technologies that were radically new just a couple of years ago.)
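For anyone who hasn’t bumped into sign-magnitude representations recently, the following Python sketch shows one way a floating-point weight could be squeezed into an 8-bit sign-magnitude value and back again. The bit layout (sign in the most significant bit, seven magnitude bits below it) is the usual textbook convention, and the `max_abs` scaling is my own assumption; I have no idea how the Blumind translator actually performs its quantization.

```python
def to_sign_magnitude(weight, max_abs=1.0):
    """Quantize a float to 8-bit sign-magnitude (1 sign bit + 7 magnitude bits).

    Assumes weights lie in -max_abs..+max_abs; anything outside is clipped.
    """
    clipped = max(-max_abs, min(max_abs, weight))
    magnitude = round(abs(clipped) / max_abs * 127)  # 7 bits -> 0..127
    sign = 1 if clipped < 0 else 0
    return (sign << 7) | magnitude


def from_sign_magnitude(code, max_abs=1.0):
    """Recover the approximate float value from an 8-bit sign-magnitude code."""
    magnitude = code & 0x7F
    value = magnitude / 127 * max_abs
    return -value if code & 0x80 else value
```

One quirk of sign-magnitude is that it has two encodings for zero (`0x00` and `0x80`); this sketch only ever generates the former, although `from_sign_magnitude` will happily accept either.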

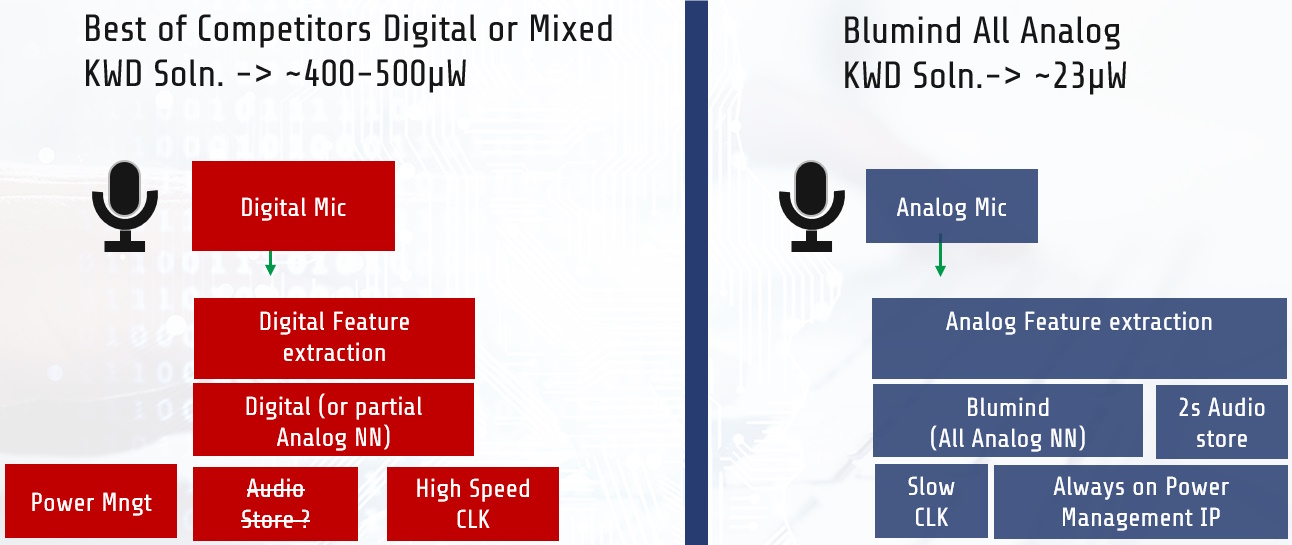

So, how does Blumind’s all-analog technology compare with conventional digital keyword/phrase detection? Let’s consider power consumption, for example, as shown below.

Audio example of Blumind’s all-analog total system advantage (Source: Blumind)

Roger and Martin gave me a detailed breakdown of the nitty-gritty numbers, but they want to keep a lot of details under an NDA umbrella. Suffice it to say that their all-analog solution consumes less than 1/20 the power of their nearest digital competitor.

Let’s say we are looking for one of ten keywords or phrases. How can we tell when a keyword or phrase has been detected and which one it is? Well, this depends on your implementation. In the case of the BM110, it will raise an interrupt flag when it detects one of its assigned words or phrases. When you see this interrupt, you would use an SPI or I2C interface to read a register inside the device that will tell you “What you’ve got,” as it were.
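The interrupt-then-read flow described above might look something like the following Python sketch on the host side. Please note that everything specific here is hypothetical: the register address, the keyword IDs, and the `FakeBM110Bus` class (which stands in for a real SPI or I2C link so the example can run on its own) are my inventions and are not taken from any Blumind documentation.

```python
KEYWORD_ID_REG = 0x10  # hypothetical register address, not from a datasheet

# Hypothetical mapping from register value to command.
KEYWORDS = {1: "TV On", 2: "Volume Up", 3: "Channel 20"}


class FakeBM110Bus:
    """Stand-in for an SPI/I2C connection to the device (simulation only)."""

    def __init__(self):
        self.irq_asserted = False
        self._registers = {KEYWORD_ID_REG: 0}

    def simulate_detection(self, keyword_id):
        # Pretend the device heard something: latch the ID, raise the flag.
        self._registers[KEYWORD_ID_REG] = keyword_id
        self.irq_asserted = True

    def read_register(self, address):
        return self._registers[address]

    def clear_interrupt(self):
        self.irq_asserted = False


def service_interrupt(bus):
    """If the interrupt flag is raised, read which keyword was detected."""
    if not bus.irq_asserted:
        return None  # nothing detected; the host can stay asleep
    keyword_id = bus.read_register(KEYWORD_ID_REG)
    bus.clear_interrupt()
    return KEYWORDS.get(keyword_id, "unknown")
```

The attraction of this arrangement is that the host does nothing at all until the flag goes up, at which point a single register read tells it everything it needs to know.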

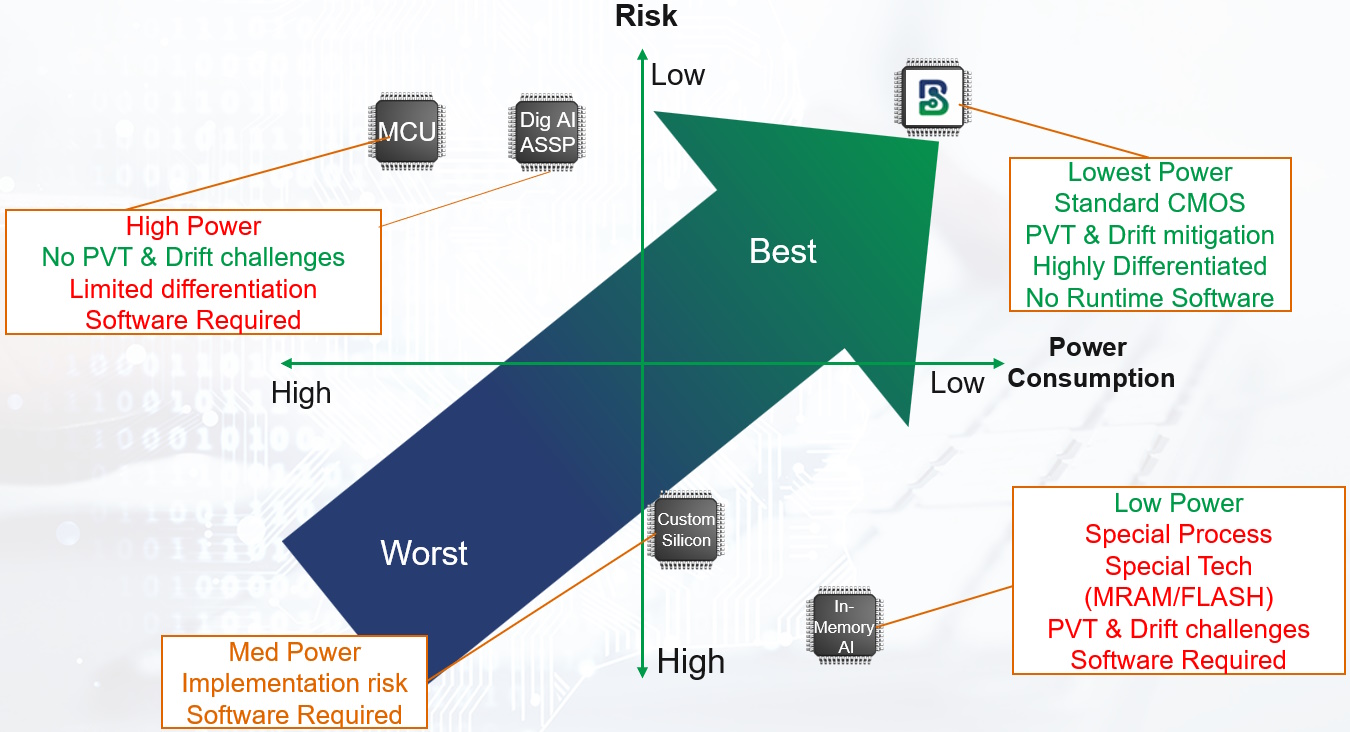

Every technology has its advantages and disadvantages, its risks and rewards, and its… you know the sort of thing. One way in which the chaps and chapesses at Blumind compare and contrast their technology to their competition is as depicted below.

Depiction of relative risk and power consumption for various solutions (Source: Blumind)

I don’t know about you, but I can’t stop thinking about the fact that they are implementing an always-on, all-analog neural network using a standard CMOS process, and that they’ve managed to mitigate all the PVT and drift issues that have plagued their predecessors.

All I can say is that I certainly didn’t see this one coming. As always, I will end by presenting you with my usual poser, which is: What do you think about all of this?