Which chip is faster: Apple’s M1 or Intel’s Core i7? The new or the old? ARM or x86? The mobile gadgets company or the major microprocessor maker?

Spoiler alert! It’s a trick question – a Rorschach test. You’ll see what you want to see in the benchmark results, and what you take out of the scores is, as Yoda says, “only what you take with you.”

Computer benchmarking, like auto racing, has been around ever since they made the second one. It’s natural to try to find out which chip is “better,” for some definition of better. Drag racing a couple of CPUs seems easy, but once you get into it you realize it’s fraught with problems. What, exactly, are you measuring? Computers do different things differently, so deciding what to measure is just as important as the actual measurements, if not more so.

Apple clearly felt that designing its own ARM-based chips was better than buying Intel’s x86-based processors. But better how? Was the Cupertino company simply looking to save money? To reduce power consumption? Improve performance? Gain control over its CPU roadmap? All of the above?

As soon as M1-based Macs went on sale, people started benchmarking them. And the results were… pretty good! The M1 seems fast and it appears to deliver good battery life. A home run, then? A big technological win for Apple? Maybe… maybe not.

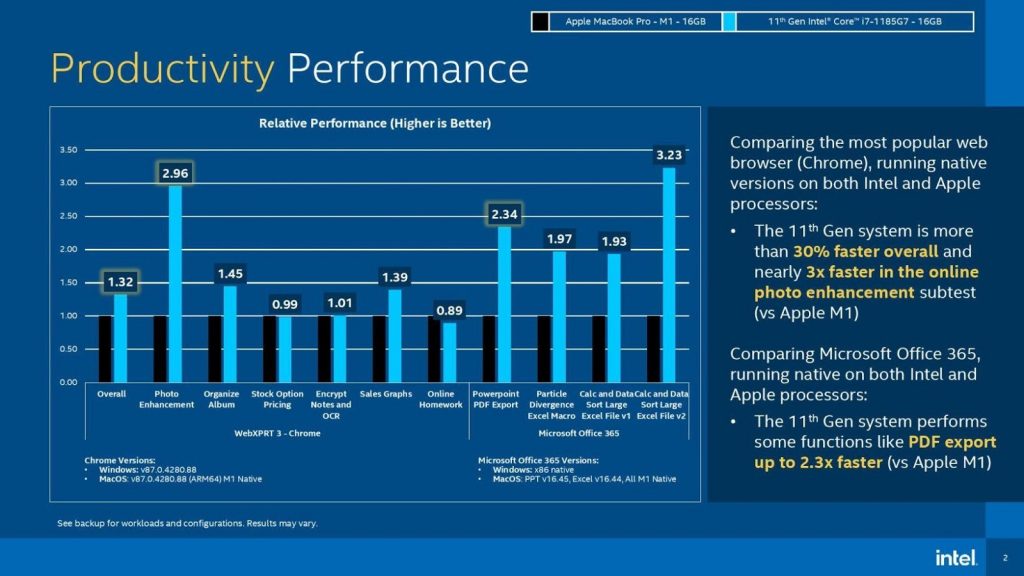

It was equally inevitable that x86 partisans would benchmark equivalent Intel processors. And those results were… also pretty good! Then Intel itself weighed in with a very Apple-specific presentation comparing M1 against its own Core i7 processor. Well, processors, plural, as we’ll see.

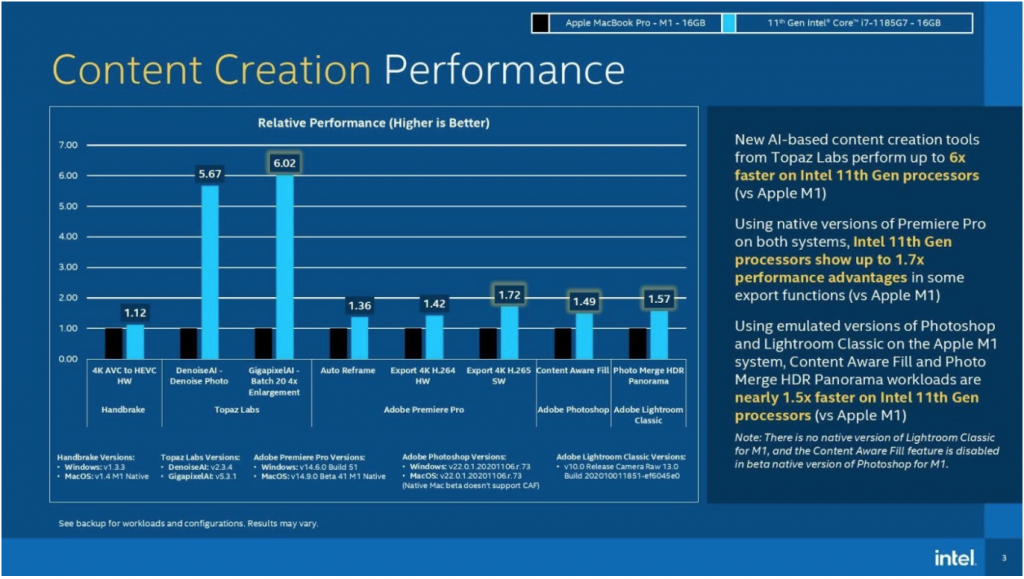

As you might expect, the Intel-supplied benchmarks showed Intel on top, sometimes outperforming M1 by ratios of 3:1 or even 6:1. Somewhat less expectedly, Intel also took on Apple in a battery-life test, graciously admitting defeat by the barest 1% margin (10 hours, 6 minutes of battery life vs. 10 hours, 12 minutes).
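
That 1% figure is easy to sanity-check. Here is a minimal sketch in Python that uses only the two run times quoted above; the variable names are mine, not Intel's.

```python
def to_minutes(hours, minutes):
    """Convert an hours/minutes run time to total minutes."""
    return hours * 60 + minutes

intel_minutes = to_minutes(10, 6)    # Intel-based laptop, per Intel's slide
apple_minutes = to_minutes(10, 12)   # Apple laptop, per Intel's slide

gap_minutes = apple_minutes - intel_minutes
margin_pct = 100 * gap_minutes / apple_minutes  # gap relative to Apple's run time

print(f"{gap_minutes} minutes, {margin_pct:.2f}% of Apple's run time")
# prints "6 minutes, 0.98% of Apple's run time"
```

Six minutes out of 612 works out to just under 1%, so the stated margin holds up.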

Source: Intel

Seems pretty straightforward, right? Surely you can’t fake such large differences in performance, and Intel’s candid willingness to concede a tiny advantage to Apple shows that their heart’s in the right place. Bonus points for honesty.

Well, yes and no. Benchmarks show what you want them to show, and Intel’s benchmarks were… unusual. Nowhere do we see the standard benchmarks like Geekbench, 3DMark, Cinebench, CoreMark, PassMark, SPECmark, or even the hoary old Dhrystone. Instead, we’re treated to Microsoft Office 365 apps running so-called RUG: Intel’s own definition of “real-world usage guidelines.” Not a benchmark I’m familiar with. You?

The numbers also show browser tests using the WebXPRT 3 benchmark, which was developed by Principled Technologies in cooperation with Intel. It relies heavily on x86 extensions, so guess how that one turned out. For the record, AMD processors do well on WebXPRT 3, too.

Intel’s most impressive performances came from Topaz Labs’s AI tests. But like WebXPRT, Topaz’s software leans heavily on x86-specific architecture extensions. And this highlights a fundamental question about benchmarks. Should we use tests deliberately designed to favor one CPU architecture over another? On one hand, optimizing code is A Good Thing, and there’s every reason to design a test to exercise unique parts of the hardware. And vice versa. Plenty of CPU designs get tweaked to improve software performance. We call that progress. Should a benchmark highlight such progress or hide it under a blanket? Intel’s thrashing of Apple’s M1 in this case is wholly deserved. But is it relevant? That’s up to you.

Flipping from performance to efficiency, we find some “benchmarketeering” going on. Intel changed both sides of the equation for the battery tests, swapping out both its hardware and its competitor’s. For the abovementioned performance tests, Intel used a Core i7-1185G7 processor running Windows 10 Pro “on [an] Intel white-box system.” In other words, we don’t know anything about the motherboard, core logic, disks, or any other hardware that may have been involved. We do know it was running at 3.0 GHz with 16 GB of LPDDR4-4266. On the Apple side, Intel chose a MacBook Pro running MacOS 11.0.1 and outfitted with Apple’s 3.2-GHz M1 processor and the same 16 GB of LPDDR4-4266.

That’s for the performance tests. For the battery tests, Intel switched to a commercial Acer laptop with a slightly different Core i7-1165G7 processor. No explanation was given. More significantly, it also swapped out the MacBook Pro for a MacBook Air, which has a smaller battery. (Both Apple machines use the same M1 processor and DRAM.) The two dueling laptops were able to play Netflix videos on battery power for essentially the same amount of time, with a difference of just 6 minutes over 10 hours. Had Intel used the same MacBook Pro that it used in the performance tests, however, it certainly would have been trounced.
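
To put a rough number on that, here is a back-of-the-envelope sketch in Python. The battery capacities come from Apple's published specs, not from Intel's slides, and the estimate assumes the MacBook Pro draws the same average power as the MacBook Air during video playback. That is a simplification, since the two machines differ in display and cooling, so treat the result as an illustration and not a measurement.

```python
# Battery capacities from Apple's published specs (not from Intel's slides).
AIR_WH = 49.9   # MacBook Air (M1, 2020)
PRO_WH = 58.2   # 13-inch MacBook Pro (M1, 2020)

air_runtime_min = 10 * 60 + 12  # MacBook Air result quoted above

# Assumption: the Pro draws the same average power as the Air,
# so run time scales linearly with battery capacity.
est_pro_runtime_min = air_runtime_min * PRO_WH / AIR_WH

hours, minutes = divmod(round(est_pro_runtime_min), 60)
print(f"Estimated MacBook Pro run time: {hours} h {minutes} min")
# prints "Estimated MacBook Pro run time: 11 h 54 min"
```

Under that assumption, the Pro would last nearly two hours longer than the Acer's 10 hours, 6 minutes, which is a far bigger gap than six minutes.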

Benchmarks aren’t everything, and Intel offered more than just scores. The company also promoted qualitative differences between Apple’s MacBooks and the world of x86-based laptops. In fact, only three or four slides of Intel’s 21-slide presentation covered actual benchmarks. Most of the rest was devoted to squishier points about “new experiences” and “day in the life responsiveness scenarios” and “choice.” (Plus four slides of small print on disclaimers and configuration details.)

Intel’s main point here was that there are a lot of PC makers in the world, but only one Apple. PCs have more peripherals; PCs have more games; PCs come in more shapes and sizes; PCs can have multiple monitors; PCs cover a broader price spectrum. In the end, Intel’s “benchmark” presentation was really about lifestyle. Do you want to be part of the PC ecosystem or the MacBook ecosystem?

And that’s the real question, and the one that makes all the CPU benchmarks irrelevant. Who cares if Apple’s M1 processor is slightly faster or slower than Intel’s current laptop processors? Who actually chooses an Apple, Acer, Dell, or Lenovo system based on that?

Apple doesn’t sell M1 chips, so the distinction is irrelevant to engineers. We can’t design around M1 even if we wanted to. We’ll never have to decide between the two. There’s exactly zero cross-shopping between Intel processors and Apple processors.

There’s also little cross-shopping between Apple systems and Intel-based systems. End users pick the ecosystem, not the CPU. Do they want MacOS or Windows? Once you’ve made that call, the rest fades into the background. The choice is between buying a Mac or not buying a Mac. In the former case, you’re getting M1 (or its successors), and if not, you’re not.

That said, Intel needed to save face and remind the world that it still makes good laptop processors. It just went about it in a typically Intel way. Maybe this is a good time to resurrect the “Intel Inside” ad campaign on TV.

For us technology nerds who benchmark CPUs for recreation, the results are interesting but not very useful. Vendor-supplied benchmarks are always suspect, no matter the vendor. The term “cherry picking” comes to mind, and these tests seem well picked over. Yes, they show the old stalwart outperforming the new media darling, but is anyone really convinced? Will it sway anyone’s design decision? Or did we already know the results before we even looked?

“Had Intel used the same MacBook Pro that it used in the performance tests, however, it certainly would have been trounced.”

Why does the MacBook Pro’s battery life beat the MacBook Air’s? Is it primarily because the MacBook Pro has a larger battery?

I recall a comment associated with Intel’s battery life comparison test about it using a more reasonable screen brightness than Apple used in their own battery life PR. Wouldn’t that be expected to have a lot to do with the times being closer in these tests?

Are there expected trade-offs between battery life and support for features such as touch screens, PCIe 4 storage, multiple external monitors, Thunderbolt 4, and eGPUs?

Yup, the batteries are different. Screen brightness was the same in the M1 vs. Core i7 benchmarks that Intel ran.

And yet both are afraid to compare their benchmarks against AMD.

Funny choice of Intel benchmarks. First, they use Windows and Office 365. These huge apps are of course optimized for Intel processors. Microsoft has a long, long history of damaging their apps running on Macs to make the Windows experience better than the (now highly damaged) Mac experience.

Next, they use Adobe apps. Adobe’s content creation apps running on Macs used to be the Standard of the Universe for quality, 20 years ago. Today, the experience of running current (subscription-priced) Adobe apps on a Mac is abysmal. Makes DOS 3.0 look like a jet.

Why not “real” benchmarks? The people who write these benchmarks go to a great deal of trouble to make them “honest” and neutral. And each benchmark tests specific capabilities.

Why is Intel inventing their own units of measure? They are not using known units, like feet and inches. These standard units may be awkward, but at least everybody agrees what they are. (Except for pounds, gallons, nautical miles, etc.) They use “Intel-feet,” which seem to vary in length depending on which way you are facing.

Microsoft has all but given up on the consumer market, focusing instead on the datacenter market, which is much more lucrative (and harder to steal).

In recent years, Windows’ stability has improved dramatically, now that Caligula Gates is no longer in charge.

But … will Microsoft end-of-life Windows? Will they brick your older computers? Would not be the first time. Apple cannot end-of-life consumer products, as that is all they sell. Although they sure can make life difficult for users.

Pick your ecosystem. Pick your poison. But please use a real ruler.

Why are you so butt hurt?

So what?

Microsoft likes to be biased. Doesn’t that just make it better to use an Intel CPU? Why be a warrior when you can win without fighting?

Just go with AMD.

What matters most is the system, not the CPU.

Intel sells CPUs that are overloaded with complexity that tries to hide the fact that data is in memory and the CPU has to get instructions and operands from memory and put the result in memory.

Starting with the GPU, Apple got on the heterogeneous computing wagon, and now they put memory and CPU on the same chip. Accessing memory is just as fast as transferring register values.

The irony is that Intel FPGAs have embedded memory that can be used for accelerators that beat CPUs that run at 10x the clock speed. Microsoft Research has a paper, “Where’s the beef,” that Project Catapult is based on.

And now the Roslyn Compiler API is available so the Syntax Walker will show the keywords, expressions, variables and value expressions used to run a program.

So what? Here’s what. Three dual-port embedded memory blocks and a few hundred LUTs can execute a C program at accelerator speed.

Instead of allocating memory in shared memory, just allocate space in the embedded memory. Also load the control memory with the key words and expressions.

There is no way to measure performance as a single quantity and there is no such thing as a general purpose benchmark. Want to try to compare a doctor to a lawyer? What is your benchmark?

If that was really true, then the MacBook wouldn’t have LPDDR4-4266 DRAM in it. The RAM is in the same package with the CPU, but not on the same chip. The only benchmarks that matter to most people are how long they have to wait on the computer to do the tasks they do day-in and day-out. In this regard, Intel is looking pretty good. Nothing against the M1, it seems snappy enough for most things.

The only reason Intel put out a benchmark was because Apple put out a bogus benchmark. In what world, knowing what we know about architecture, did Apple try to convince us that the M1 chip could compete? SMH. This is a slam dunk and Intel is Michael Jordan. ARM processors, including the “M1,” aren’t in the same league… it will take Apple many, many years just to get close, and they will give up and use someone else’s chips branded with their name again, with a slight change as usual.

What needs to be compared is two MacBooks: one with the M1 chip and another, or various others, with Intel chips. That would supply the consumer with some real, usable information.