I was 16 or so years old when I built my first brainwave amplifier circa 1973. This was prior to the widespread availability of microcontrollers. As far as I know, it was also before anyone had even coined the term DSP (digital signal processing), because—to the best of my knowledge—all signal processing at that time was ASP (analog signal processing), which was performed using analog components and techniques.

My humble brainwave amplifier artifact required a cornucopia of electrodes to be clamped around my cranium, buffered with conductive paste, and connected to my noggin by surprisingly sticky sticky tape. Why is it that such tape is always at its stickiest when you don’t want it to be while managing to be at its least sticky when you need it the most? Suffice it to say that once the sensors were firmly attached, I didn’t feel a great urge to take them off again in a hurry. The idea was to detect and isolate theta and alpha brain waves, which increase when you enter a meditative state, amplify them, and use them to stimulate a pink noise generator into outputting a relaxing “chuff, chuff, chuff…” sound a bit like an old-fashioned steam locomotive (see also Colors of Noise).

Theoretically, after practicing for only a few weeks, it should have been possible for me to out-meditate a Shaolin monk. In practice… well, that’s a tale that remains to be told. Suffice it to say that my brainwave amplifier did not distinguish itself on either the “small” or “lightweight” fronts. So, you can only imagine my surprise to discover that various groups are currently working on creating AI-augmented earbuds that can monitor your brainwaves. “Why on earth would anyone want to do this?” I hear you cry. Well, I shall expound, explicate, and elucidate shortly, but first…

I was just chatting with Martin Croome from GreenWaves Technologies, which is a fabless chip manufacturer that was founded in 2014 and is based in Grenoble, France. Martin dons a strange double hat in the company—on the one hand, he’s the VP of marketing; on the other hand, he’s the chief architect for the company’s neural network development tools (and I thought my life was confusing).

The core mission for the folks at GreenWaves is to design, develop, and market extreme processors for energy-constrained devices. In this context, an energy-constrained device may be something tiny, like an earbud that must keep running for a day or more on a single charge, or it may be something like an IoT sensor node that has larger batteries but that must truck along for years or even decades.

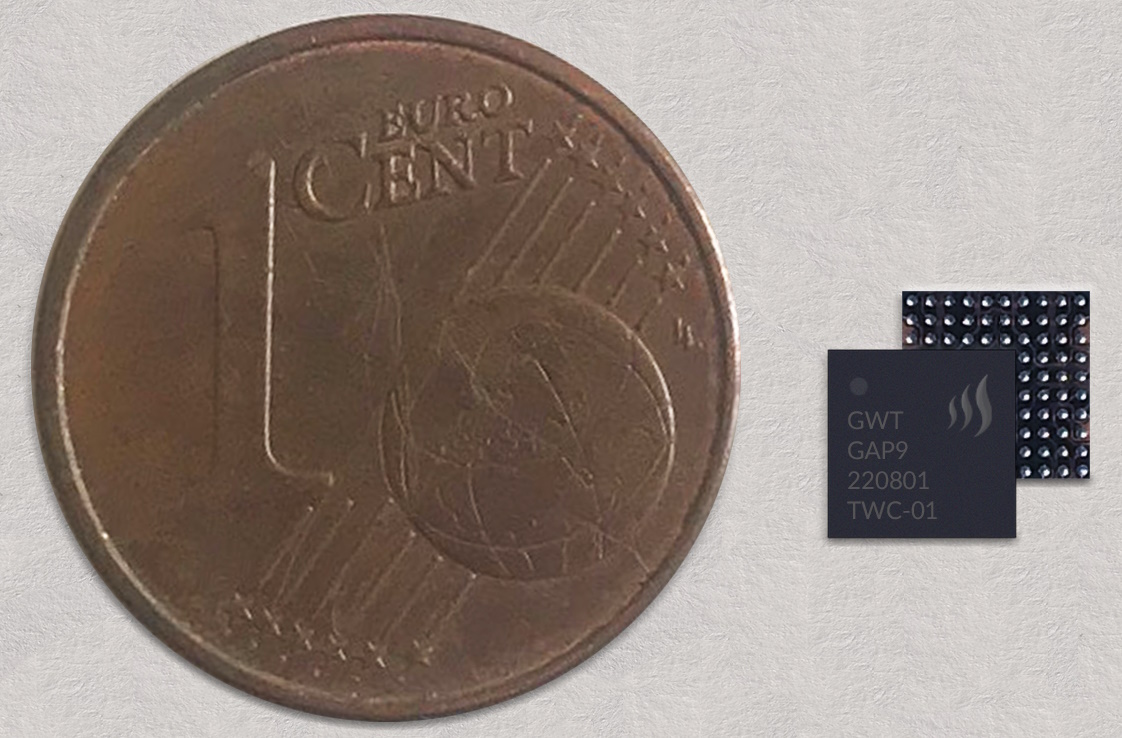

Their initial product offering, the GAP8, has been in production since 2020. As one of the very first AI-enabled RISC-V-based microcontrollers on the market, the GAP8 is currently deployed in a wide variety of products. In the case of their second-generation product, the GAP9, which is billed as an ultra-low-power AI-and-DSP-enabled RISC-V-based microcontroller, they’ve been sampling since the beginning of last year, they currently have production wafers in their hands, and their customers will be launching GAP9-based products later this year.

Just to set the scene, the GAP8 boasts 22.65 giga operations per second (GOPS) at 4.24mW/GOP, while the GAP9 flaunts 150.8 GOPS at 0.33mW/GOP. An example GAP8-based application that has been shipping for some time is shown below.
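As a quick sanity check on those figures, here is a back-of-the-envelope sketch in Python. It assumes that "mW/GOP" means milliwatts per GOPS of sustained throughput and that both numbers apply at the same peak operating point; neither assumption comes from GreenWaves, and the function name is purely illustrative.

```python
def power_mw(gops: float, mw_per_gops: float) -> float:
    """Implied power draw at peak throughput, in milliwatts.

    Assumption: the quoted efficiency is mW per GOPS at the quoted peak rate.
    """
    return gops * mw_per_gops


# Figures quoted in the column.
GAP8_GOPS, GAP8_MW_PER_GOPS = 22.65, 4.24
GAP9_GOPS, GAP9_MW_PER_GOPS = 150.8, 0.33

gap8_power = power_mw(GAP8_GOPS, GAP8_MW_PER_GOPS)   # roughly 96 mW
gap9_power = power_mw(GAP9_GOPS, GAP9_MW_PER_GOPS)   # roughly 50 mW

throughput_gain = GAP9_GOPS / GAP8_GOPS               # between 6x and 7x
efficiency_gain = GAP8_MW_PER_GOPS / GAP9_MW_PER_GOPS # between 12x and 13x

print(f"GAP8 at peak: {gap8_power:.1f} mW, GAP9 at peak: {gap9_power:.1f} mW")
print(f"Throughput gain: {throughput_gain:.1f}x, efficiency gain: {efficiency_gain:.1f}x")
```

Under those assumptions, the GAP9 delivers several times the throughput of the GAP8 while drawing roughly half the power when both are running flat out.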

Ceiling-mounted people detector (Source: GreenWaves)

Using a GreenWaves GAP8 as its brains, this little beauty, a ceiling-mounted infrared (IR) people-detecting sensor, was created by a company called Kontakt.io (there’s little wonder I have so many problems with spelling). The sensor wakes up once a minute to count the number of people in the room and convey this information “upstairs.” Each sensor is also a Bluetooth mesh node. The sensors pass their data from node to node until it reaches a gateway (e.g., Wi-Fi or cellular) that can communicate the data to a local fog or a global cloud.

There are many applications for this sort of thing, such as meeting room utilization. For example, is this meeting room really getting used? And, if it is being used, how many people typically use it at any particular time? (If it’s a 10-person room but 99% of the time there are no more than 5 people in it, then maybe it should be divided into two meeting rooms.) Other applications include things like cafeteria utilization (“Hey, this would be a good time for you to go to the cafeteria because there aren’t many people in it”) and smart cleaning (“No one has used these toilets since they were last cleaned, so there’s no point in cleaning them again”), and so forth.
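To make the meeting-room example a little more concrete, here is a minimal Python sketch of the sort of number crunching that might happen "upstairs." It assumes the once-a-minute head counts have already been gathered into a plain list; the function name, field names, and sample data are all hypothetical and have nothing to do with any real Kontakt.io or GreenWaves software.

```python
def occupancy_summary(counts, capacity, threshold):
    """Summarize once-a-minute head counts for a single room.

    counts    -- list of people counts, one per sample (hypothetical data)
    capacity  -- nominal number of seats in the room
    threshold -- head count at or below which a smaller room would do
    """
    if not counts:
        raise ValueError("need at least one sample")
    total = len(counts)
    return {
        # Fraction of samples in which anyone was in the room at all.
        "used_fraction": sum(1 for c in counts if c > 0) / total,
        # Fraction of samples in which a smaller room would have sufficed.
        "at_or_below_threshold": sum(1 for c in counts if c <= threshold) / total,
        "peak": max(counts),
        "over_capacity": any(c > capacity for c in counts),
    }


# Ten made-up samples from a 10-person room.
samples = [0, 0, 3, 4, 4, 5, 5, 2, 0, 7]
summary = occupancy_summary(samples, capacity=10, threshold=5)
print(summary)
```

If "at_or_below_threshold" stays close to 1.0 over weeks of real data, that is the cue to think about splitting the room in two.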

As I pen these words, GreenWaves’ partners are feverishly developing products based on GAP9 samples. The folks at GreenWaves say they can’t say (no pun intended) who their GAP9 customers are, but they can say that they’ve already had 10+ design wins, and this is for a device that’s not yet in production, which is rather exciting.

The GAP9 next to a Euro cent (Source: GreenWaves)

Let’s take a slightly deeper dive into this little rascal. In a crunchy nutshell, the GAP9 essentially embodies three different processing capabilities. The first is real-time, streamed, autonomous time-domain digital signal processing, which is predominantly focused on audio. This employs an in-house developed IP called the smart filtering unit (SFU), which we will discuss in more detail a little later.

The second processing capability is general-purpose digital signal processing (DSP) like codecs, FFTs, frequency-domain filtering… that kind of stuff. This is performed in what they call a multi-core RISC-V computational cluster.

Last, but certainly not least, the third processing capability involves the combination of the aforementioned computational cluster with a neural network accelerator called the NE16.

In line with our earlier discussions, all these processing capabilities are focused on delivering extreme performance whilst consuming very, very low power for energy-constrained devices. Martin told me that, in addition to offering lower latency and lower power consumption than pretty much anything else on the market, a key differentiator is that “GAP processors are easy to program, as compared to most of the alternatives.” Martin also says, “GAP processors are very flexible, which allows them to cope with accelerating the types of things that are happening today, not the things which happened last year.” By this he means that these processors can implement all the latest and greatest types of neural networks, such as recurrent, convolutional, transformer, etc.

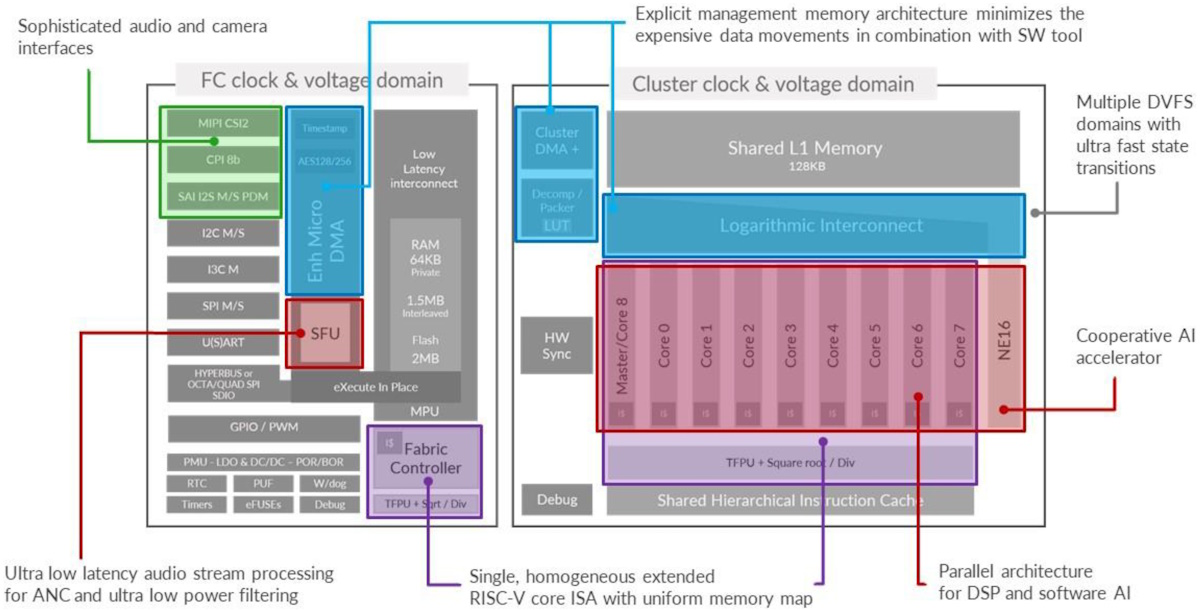

So, what does the GAP9 look like inside? Well, as we see in the diagram below, there are fundamentally two parts to the chip that we might call “the left side” and “the right side” (stop me if I’m getting too technical).

High level block diagram of the GAP9 (Source: GreenWaves)

The left side looks fundamentally like a regular MCU. What they call the “Fabric Controller” (for historical or hysterical reasons, I forget Martin’s exact wording) is basically a RISC-V core. They’ve exploited the fact that you can extend the instruction set architecture (ISA) of the RISC-V, and they’ve employed this capability to add their own custom instructions for things like DSP bit manipulation, lightweight vectorization in the cores, and what they call transprecisional floating-point, which means they can do 32-bit floating point along with two different 16-bit floating point representations in the form of IEEE FP16 and BF16 (these formats were discussed in more detail in my Mysteries of the Ancients: Binary Coded Decimal (BCD) column).
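For anyone wondering how the two 16-bit formats differ in practice, the following Python sketch may help. It uses only the standard `struct` module and runs on a desktop, not on a GAP9. FP16 has 5 exponent bits and 10 mantissa bits, while BF16 has 8 exponent bits and 7 mantissa bits. One loud assumption: the BF16 conversion here simply truncates the low 16 bits of a float32, whereas real hardware typically rounds, so treat the exact values as illustrative.

```python
import struct


def to_fp16(x: float) -> float:
    """Round x to IEEE 754 half precision (1 sign, 5 exponent, 10 mantissa bits)."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_bf16(x: float) -> float:
    """Truncate x to bfloat16 (1 sign, 8 exponent, 7 mantissa bits).

    Assumption: plain truncation of the float32 encoding, not rounding.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    return struct.unpack("<f", struct.pack("<I", bits & 0xFFFF0000))[0]


def fits_fp16(x: float) -> bool:
    """True if x is within the finite range of IEEE half precision."""
    try:
        struct.pack("<e", x)
        return True
    except (OverflowError, struct.error):
        return False


for value in (0.1, 1000.5, 1e20):
    fp16 = to_fp16(value) if fits_fp16(value) else "out of range"
    print(f"{value!r}: fp16={fp16}, bf16={to_bf16(value)}")
```

The trade-off shows up straight away: FP16 gets closer to small values like 0.1, while BF16 keeps the full float32 exponent range and so copes with very large values that FP16 cannot represent at all.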

Meanwhile, on the right-hand side we find an additional nine RISC-V cores (this is the “computational cluster” we were waffling about earlier). This means that there are 10 RISC-V cores on the chip, all identical, not only in terms of their instruction sets, but also in terms of their memory map. In turn, this means you can write a function, compile it once, and run it anywhere on the chip.

Adjacent to the computational cluster on one side is the NE16 AI accelerator. On the other side, we find a hardware synchronization block, which implements all kinds of synchronization primitives—forks, joins, barriers, mutexes, so on and so forth—in hardware. This facilitates extremely fine-grained parallelism, allowing multiple cores to be working on different facets of the same task (like an FFT) or in a pipelined fashion, or on completely different tasks.
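If fork, join, and barrier are unfamiliar terms, here is a software-only analogy in Python. To be clear, this is not the GreenWaves SDK and says nothing about how the GAP9 hardware block works; it just uses the standard `threading` module to show the pattern, with nine workers standing in for the nine cluster cores.

```python
import threading

NUM_WORKERS = 9  # stands in for the nine cluster cores; purely illustrative


def parallel_sum_of_squares(data):
    """Split one task across workers using fork, barrier, and join."""
    partial = [0] * NUM_WORKERS
    barrier = threading.Barrier(NUM_WORKERS)
    result = []

    def worker(idx):
        # Phase 1: each worker handles its own strided slice of the data.
        partial[idx] = sum(x * x for x in data[idx::NUM_WORKERS])
        # Barrier: nobody moves on until every partial result is written.
        barrier.wait()
        # Phase 2: exactly one worker combines the partial results.
        if idx == 0:
            result.append(sum(partial))

    threads = [threading.Thread(target=worker, args=(i,)) for i in range(NUM_WORKERS)]
    for t in threads:
        t.start()  # fork
    for t in threads:
        t.join()   # join
    return result[0]


total = parallel_sum_of_squares(list(range(100)))
print(total)
```

In software, each of these synchronization steps costs time, which limits how finely a task can be sliced. Doing them in hardware, as the column describes, is what makes very fine-grained slicing worthwhile.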

In addition to clock gating all over the device (there are multiple clock and voltage domains across the chip), the GAP9 also supports dynamic voltage and frequency scaling (DVFS), with all this power control taking place inside the chip.

Also, there’s an abundance of external interfaces, including a MIPI interface for a camera and three different serial audio interfaces with time-division multiplexing (TDM) support for up to 16 channels on each. There are also three pulse-density modulation (PDM) inputs and one PDM output, which can support a total of nine PDM microphones in and three PDM sources or sinks out.

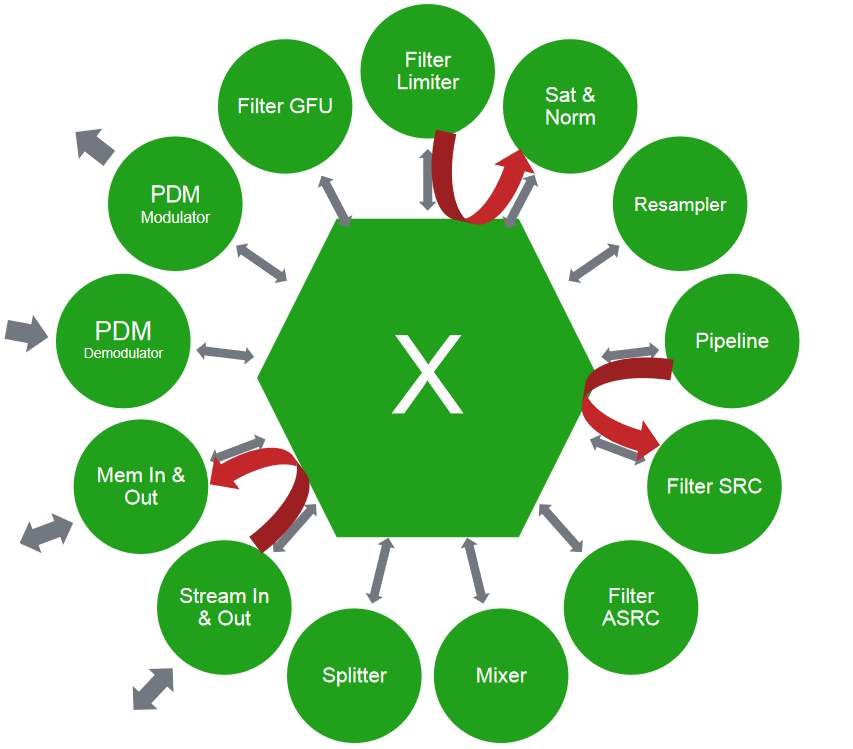

And then there’s the smart filtering unit (SFU) that we mentioned earlier. Located on the left-hand side of the device, this is a completely autonomous unit that can process from interface to interface, interface to memory, memory to interface, or memory to memory (it can also work on multiple streams simultaneously).

Block-ish diagram of the GAP9’s smart filtering unit (Source: GreenWaves)

One key aspect to this block is its interface-to-interface capability. This comes into play when we want to do something like active noise cancellation (ANC), which demands extremely low latency. One of the big markets for GAP processors is hearables, and one of the key areas for hearables is ANC. Why would you want to put ANC in a chip that can implement neural networks? Well, because you can use the neural networks to steer the ANC. To do this, the primary path of the ANC must be fast enough that, in the time the signal takes to go from the error microphone into the user’s ear, we can generate the anti-noise to cancel it. Of course, this technology can be used for lots of other things, including heavy-duty filtering, spatialization, sound effects, etc.
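To get a feel for just how low "extremely low latency" has to be, here is a rough Python sketch of the timing budget. The numbers are my own assumptions rather than anything from GreenWaves: a 2 cm acoustic path between the microphone and the eardrum, sound traveling at about 343 m/s, and a 48 kHz sample rate.

```python
SPEED_OF_SOUND_M_PER_S = 343.0  # dry air at roughly 20 degrees C


def acoustic_delay_us(distance_m: float) -> float:
    """Time for sound to travel distance_m, in microseconds."""
    return distance_m / SPEED_OF_SOUND_M_PER_S * 1e6


def budget_in_samples(distance_m: float, sample_rate_hz: float) -> float:
    """The same delay expressed as a number of sample periods."""
    return acoustic_delay_us(distance_m) * 1e-6 * sample_rate_hz


# Assumed geometry and sample rate (illustrative only).
MIC_TO_EARDRUM_M = 0.02
SAMPLE_RATE_HZ = 48_000

delay_us = acoustic_delay_us(MIC_TO_EARDRUM_M)
samples = budget_in_samples(MIC_TO_EARDRUM_M, SAMPLE_RATE_HZ)
print(f"Acoustic delay: {delay_us:.1f} us, or about {samples:.1f} samples at 48 kHz")
```

With these assumed values, the whole capture, filter, and playback chain has less than 60 microseconds to do its thing, which is fewer than three sample periods at 48 kHz. That leaves no room for buffering up blocks of audio, hence the appeal of a streaming interface-to-interface path.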

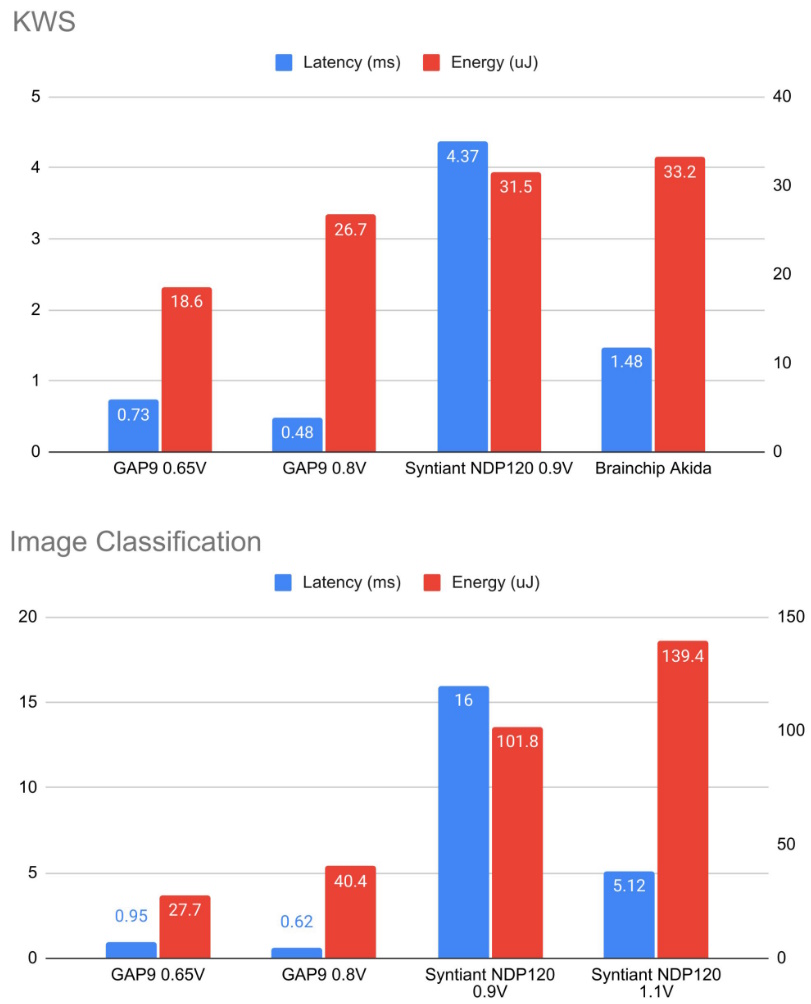

So, what are the results of all this? Well, I’m just about to show you. MLCommons is an open engineering consortium with a mission to benefit society by accelerating innovation in machine learning (ML). As part of this, MLCommons publishes the MLPerf Tiny benchmarks for embedded devices (these benchmarks are available from GitHub). The results from two of these benchmarks are shown below.

GAP9 vs. competitor benchmark results (Source: GreenWaves)

The results were that the GAP9 consumed ~2 to 3X less energy than a highly specialized analog neuromorphic chip and ~2 to 4X less energy than a specialized neural network accelerator chip, all while providing the lowest latency in all the tests.

The image above reflects only two of the four benchmarks. Martin notes that the GAP9 provided the best latency and best energy out of all the contenders on all four benchmarks except the anomaly detection benchmark. However, the company whose device reported the best latency on this benchmark didn’t report energy, which must be viewed as being a tad suspicious. So, if we limit ourselves to those companies that reported both energy and latency values, the GAP9 was the best in latency and energy on all benchmarks across the board.

All of which brings us back to thinking what can be achieved with GAP9 processors being used to power true wireless stereo (TWS) earbuds.

GAP9 deployed in TWS earbuds (Source: GreenWaves)

In turn, this brings us back to my meandering mutterings at the start of this column when I made mention of the fact that various groups are currently working on creating AI-augmented earbuds that can monitor your (well, our) brainwaves. I just had a quick Google while no one was looking and immediately found a couple of somewhat related articles: Google Spinoff Working on Earbuds That Spy on Your Brain Signals and Earbuds That Read Your Mind.

Martin tells me that the guys and gals at GreenWaves are working with another company to create earbuds for the 21st century. In addition to acting as regular TWS earbuds and/or hearing aids, these will have sensors involving flexible contacts on the rubber that you put in your ear, thereby allowing them to act like an electroencephalogram (EEG) to pick up your brainwaves.

There are obvious medical applications for this sort of thing, like epilepsy detection. But the thing that piqued my interest came in the context of hearing aids that can address the “cocktail party problem,” which involves trying to pick out one voice when multiple people are speaking at the same time, like at a party, for example.

I know several people who are hard of hearing. Can you imagine earbuds with processing power sufficient to disassemble the sound space into individual voices? Now, take this one step further and imagine using AI to monitor the brainwave signals to determine which of these voices is of particular interest to the earbud owner (well, wearer), and to selectively boost that voice whilst fading back any extraneous sounds including any other speakers.

All I can say is “Wowsers!” (and I mean that most sincerely). The more I think about this, the more applications come to mind, like gaming, for example (your earbuds could detect changes in your brainwave patterns and signal information to your game controller—I’m not sure where I’m going with this, but it’s both exhilarating and scary at the same time).

Now I’m thinking about my recent The End of the Beginning of the End of Civilization as We Know It? column. Supposing a generative AI like ChatGPT were using the signals from your EEG earbuds to monitor your brainwaves and… I’ll let your imagination run wild from here. As always, please do share any thoughts you have on anything you’ve read here in the comments below (as quickly as you can before ChatGPT gets to hear about it—again, no pun intended).