We’ve been turning it down for years.

Energy consumption has gradually grown as a concern, to the point where it’s eclipsed performance as a primary driver for many circuits. To reduce power, you can do one of two things: turn down frequency (for dynamic power) or turn down the supply voltage. We’ve already stopped driving clocks as hard as we used to, what with the shift to multicore for scaling performance. But we’re still turning down the voltage.
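To put rough numbers on why the voltage knob is so attractive, here is a minimal sketch using the standard first-order estimate for CMOS switching power, P = activity × C × Vdd² × f. The capacitance and clock values are hypothetical, picked only for illustration, and leakage is ignored entirely.

```python
def dynamic_power(c_eff, vdd, freq, activity=1.0):
    """First-order CMOS switching power: activity * C * Vdd^2 * f (watts)."""
    return activity * c_eff * vdd ** 2 * freq


# Hypothetical numbers, chosen only for illustration.
C_EFF = 1e-9   # effective switched capacitance, farads
FREQ = 100e6   # clock frequency, hertz

baseline = dynamic_power(C_EFF, 5.0, FREQ)
for vdd in (5.0, 3.3, 1.2, 0.5):
    p = dynamic_power(C_EFF, vdd, FREQ)
    print(f"Vdd = {vdd:.1f} V -> {p * 1e3:7.2f} mW ({p / baseline:.1%} of the 5 V figure)")
```

Frequency enters linearly while voltage enters squared, so in this simple model halving the supply cuts switching power to a quarter, where halving the clock only cuts it in half.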

The first move, taking logic from 5 V (where it had sat for years) to 3.3 V, happened… a long time ago. Some components still use 3.3 V, but the leading-edge stuff is all down in the 1-point-something range. And drifting south.

But there’s a problem: We’ve had this “digital” approach to using a transistor. It has an “off” state and then some sort of “on” state. For analog, “on” means biased to some delicate point with feedback keeping all the poles where they’re supposed to be so that it’s stable. For digital, we just crank the crap out of it so that it’s on “hard.”

The thing that makes the difference between “off” and “on” is the threshold voltage. We used to assume that, below threshold, the thing was “off.” In more recent years, we’ve had to deal with the annoying reality that there is current that flows even in that region. This sub-threshold leakage has not been considered a good thing. But, all in all, we’ve come to grips with the fact that, kvetch though we may, the current doesn’t actually drop to zero until the voltage does.

But this has simply been treated as a second-order effect, muddying up our definition of “off” while still treating that regime as “off.” According to that paradigm, you can bring the voltage down only to the threshold, below which, by definition, you stop turning the transistor on. And a circuit that has no transistors “on” isn’t particularly useful.

Under those assumptions, we may have to stop turning the voltage down soon; there’s not much room left to move.

But in a less-visible, somewhat scary corner of the design world, a few folks have decided to re-examine these core assumptions. Because, really, we’ve got this cognitive conflict: the state we call “off” has this nasty lingering “on” behavior. So far, we’ve pushed the technology so that we can keep pretending that it’s an “off” state. But… what if, instead, we switched our thinking: if it has “on” characteristics – that is, if current flows – then why not consider it an “on” regime?

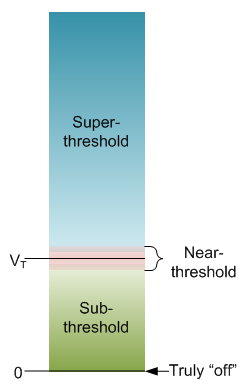

Looked at that way, the transistor is “off” only when the voltage is zero. Above that, there are three regimes: from zero to somewhere near threshold, a transition region right around threshold, and then the region we now think of as “on.” These are referred to as “sub-threshold,” “near-threshold,” and “super-threshold,” respectively.

There are at least two companies – Ambiq and PsiKick – that design circuits that reside completely in the sub-threshold regime. By today’s standards, such circuits never turn “on.” And yet they can do useful work at very low power.

Too insensitive and too sensitive

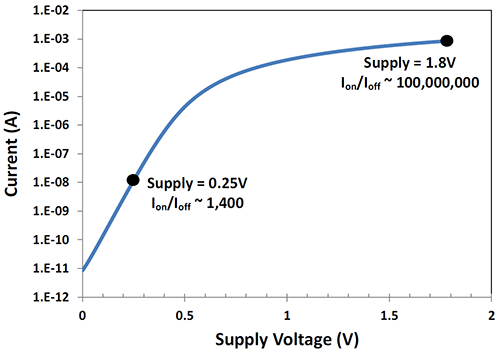

Let’s start by acknowledging that what we’re going to review here is hard. And the difficulty starts with two conflicting intrinsic characteristics of sub-threshold design as compared to super-threshold design. The first deals with defining “on” and “off” states for digital logic. It’s all about how much current flows in the transistor, and, for super-threshold design, the difference between “off” current and “on” current is many orders of magnitude. Down in the sub-threshold region? “On” current is only about 1000 times more than “off” current.

(Image courtesy Ambiq)

So right away, you have a circuit that’s not as sensitive to input swings, meaning you need a more sensitive detector.
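For a feel of where a ratio of about 1000 comes from, here is a rough sketch based on the textbook idealization that sub-threshold current changes by one decade for every S millivolts of gate voltage (the sub-threshold swing). The swing values below are assumptions for illustration, not figures from Ambiq or PsiKick, and effects such as drain-induced barrier lowering are ignored.

```python
import math


def on_off_ratio(vdd, swing_mv_per_decade):
    """Current at Vgs = Vdd divided by current at Vgs = 0, with both below threshold."""
    return 10 ** (vdd * 1000.0 / swing_mv_per_decade)


def supply_for_ratio(ratio, swing_mv_per_decade):
    """Gate swing (volts) needed to reach a given on/off current ratio."""
    return math.log10(ratio) * swing_mv_per_decade / 1000.0


# Assumed swings: 60 mV/decade is the room-temperature ideal; real devices are worse.
for swing in (60.0, 80.0, 100.0):
    v = supply_for_ratio(1000.0, swing)
    print(f"S = {swing:5.1f} mV/decade -> about {v:.2f} V of swing for a 1000x ratio")
```

Three decades is all you get from a few hundred millivolts in this model, which is why the receiving gate has to make its decision on a far smaller margin than super-threshold logic enjoys.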

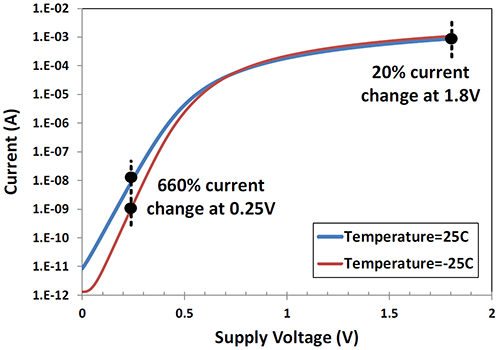

But there’s another problem. The absolute value of these currents is strongly impacted by things like temperature and process. In other words, even though the “on/off” signal is insensitive, the responsiveness to external conditions is too sensitive.
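The reason for that touchiness is the exponential. As a hedged sketch, take the usual simplified model in which sub-threshold current goes as exp((Vgs − Vth) / (n·kT/q)). The slope factor n = 1.5 and the threshold shifts below are assumptions for illustration, and the temperature dependence of the pre-factor and of Vth itself is deliberately left out.

```python
import math

K_B = 1.380649e-23      # Boltzmann constant, J/K
Q_E = 1.602176634e-19   # elementary charge, C


def thermal_voltage(temp_k):
    """kT/q in volts."""
    return K_B * temp_k / Q_E


def current_multiplier(delta_vth, temp_k=300.0, n=1.5):
    """Factor applied to sub-threshold current when Vth shifts by delta_vth volts.

    n is an assumed slope factor; a positive shift (higher Vth) reduces current.
    """
    return math.exp(-delta_vth / (n * thermal_voltage(temp_k)))


# Hypothetical process-induced threshold shifts, in volts.
for shift in (-0.05, -0.02, 0.02, 0.05):
    print(f"Vth shift {shift * 1e3:+5.0f} mV -> current x {current_multiplier(shift):.2f}")
```

A shift of a few tens of millivolts, which would be a rounding error for a gate driven on "hard," moves the current by a large multiple here. Temperature works on the same exponent through kT/q.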

The first means being at peace with the fact that, whatever the benefits of sub-threshold, it’s a pain in the tuckus. If a particular circuit, for whatever reason, won’t provide much of a power savings by going sub-threshold, then it’s just going to be easier to do standard super-threshold design.

In other cases, you may need to juice things up a bit to get the required performance. This could mean going into the near-threshold region or even all the way through to super-threshold.

So a given circuit is likely to be mostly sub-threshold, with a possible garnish of near- and super-threshold devices. And those devices will require a higher supply.

Both companies seem to be making more noise: Ambiq with a new whitepaper, PsiKick with a Series A funding round.

You might think, given the pre-eminence of power as a criterion, that everyone would be jumping into this. But these two companies have spent years getting from idea to production simply because of the nitty-gritty details – from developing a well-characterized library of circuits to cobbling together a workable design flow to achieving good yield to logistics like finding a tester with a PMU (parametric measurement unit) that can measure nanoamps. Not for the faint of heart.

And my sense of it is that all sub-threshold design – analog or digital – has that fussiness that we usually associate only with analog. Along with that comes a reliance on experienced individuals with lots of design scars to validate what it took to get that experience. When I asked PsiKick what their “secret sauce” was, CEO Brendan Richardson listed four items:

- A good understanding of sub-threshold digital systems

- Strength in extremely low-power radios

- Good knowledge of how to integrate those two together in a single chip

- Skill in dealing with sporadic power sources (vs. the constant delivery of energy typical of power supplies and batteries)

These are very specific skills that inform the kinds of projects they focus on. Sub-threshold can have value for many different areas, but, for the moment, they’re leveraging what they know best.

I also sense a similarity to analog design when it comes to EDA tools. While digital has seen rampant abstraction, analog design is mostly done by hand – with the tools helping the designers to make or implement decisions. I have a sense that this characteristic will apply to sub-threshold digital design as well, to a certain extent. That’s not to say that these guys wouldn’t love some more love from their EDA tools, but whether, for instance, automatic layout from RTL would be possible remains to be seen.

The power levels that these techniques achieve are pretty amazing. But if sub-threshold design remains the domain of specialists, then Ambiq and PsiKick appear to be well positioned as the owners.


Have you considered using sub-threshold techniques to reduce power?