Politicians used to argue about “the vision thing,” a borderline unintelligible swipe at opponents who didn’t share their view of the big picture. As a company, Synopsys may not be very political, but it’s definitely on board with the vision thing.

Embedded vision – that is, adding real-time image recognition to embedded systems – used to be a high-end, pie-in-the-sky kind of feature. Cheap systems couldn’t do image recognition, could they? They don’t have the processing power. And anyway, what would you do with it? Why does a thermostat need to recognize my face?

But like most things, we find all sorts of unexpected uses for the technology as soon as it becomes available and affordable. Who would have thought that greeting cards needed their own microcontrollers? But now we have musical birthday cards with MCUs glued into the back cover. Thermostats have their own IP address. Car keys have crypto chips. Embedded enables.

And so it is with embedded vision. Once you can make your system recognize faces, or road signs, or hand gestures, you will. Or, if you won’t, your competitor will. Or perhaps already has.

To supply that as-yet-unidentified demand, Synopsys has tweaked its in-house ARC processor architecture to produce a CPU cluster that’s geared toward low-cost image detection and recognition. It’s part of a soft-IP subsystem that allows SoC designers to add embedded vision (EV) to their next chip with a minimum of original design work.

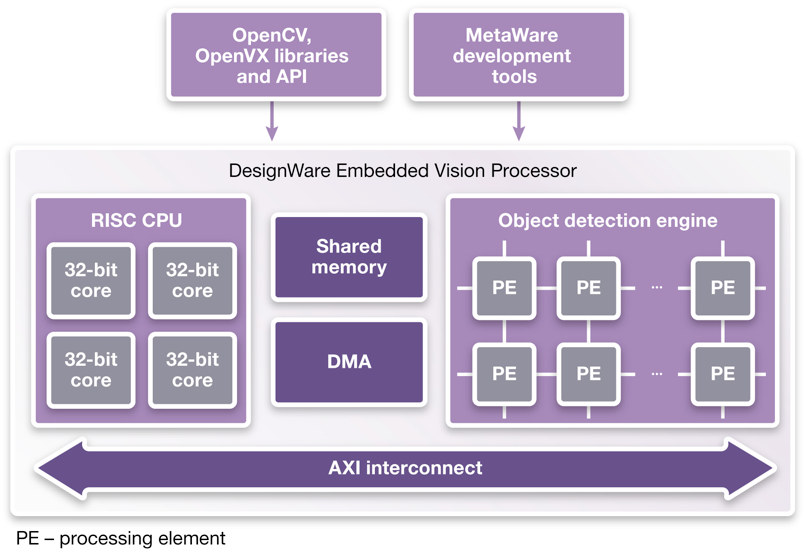

Actually, there’s quite a bit more than just the 32-bit CPUs, as the block diagram here shows. Mated with the CPUs is a matrix of video accelerators, generically named Processing Elements, or PEs. You can configure the matrix with two, four, or eight PEs, depending on how much hardware horsepower you think you need. And – let’s be honest – you probably have no idea how many PEs you want. So Synopsys gives them all to you for the same price. Once you get around to simulating your new EV subsystem, you might be able to dial in the right number of PEs, but until then you can just guess.

The PEs are a new architecture designed specifically for convolutional neural network (CNN) programming, and each PE is identical to its neighbors. They’re programmable (i.e., they’re processors), which means a whole new compiler toolchain for them. Synopsys is building up a code library for the PE matrix, but so far it includes only face-recognition and roadway sign-recognition functions. Apart from that, you’re on your own, at least for now.
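For readers new to CNNs, the core operation that such an accelerator spends most of its time on is a small sliding-window multiply-accumulate, usually followed by a simple nonlinearity. The sketch below shows that idea in plain Python. It is a generic illustration only: the function name `conv2d_relu`, the toy image, and the edge-detecting kernel are all made up for this example, and nothing here reflects Synopsys’s PE instruction set or its code library.

```python
def conv2d_relu(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1), then apply ReLU.

    Hypothetical helper for illustration; not part of any Synopsys library.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for y in range(out_h):
        row = []
        for x in range(out_w):
            # Multiply-accumulate over the window under the kernel
            acc = 0
            for ky in range(kh):
                for kx in range(kw):
                    acc += image[y + ky][x + kx] * kernel[ky][kx]
            # ReLU: keep positive responses, clamp the rest to zero
            row.append(max(acc, 0))
        out.append(row)
    return out


# Toy 3x4 "image": dark on the left, bright on the right
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]

# Toy 2x2 kernel that responds to a dark-to-bright vertical edge
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d_relu(image, kernel)
print(feature_map)
```

The output map is nonzero only in the column where the dark-to-bright edge sits. A real CNN stacks many such layers with learned kernels, which is why a matrix of identical, programmable elements is a natural fit for the workload.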

By the way, there’s nothing magical about the 2/4/8 configuration. In the future, Synopsys will probably allow configurations with up to 32 PEs, maybe even more. This is just the start.

Over on the left side of the block diagram, you get the option of either two or four CPU cores, but this is a fixed choice. Synopsys feels that most developers have a good idea whether they need two processors or four, so that detail is a different configuration option with a slightly different licensing fee.

If you’re an ARC processor aficionado (and, really, isn’t everyone?), then you’ll recognize the internal architecture and instruction set of these 32-bit CPUs. Oddly, however, Synopsys isn’t branding them as ARC processors. They’re instead called “DesignWare EV processors.” Presumably, the marketing brain trust had a good reason for tweaking the naming convention. Or they had a free afternoon.

In addition to the PE software, the company also provides code libraries for OpenCV and OpenVX, but that software runs on the left-hand CPUs, not the right-hand PE matrix. In essence, the CPUs are the interface layer between this EV subsystem and the rest of your SoC, which presumably has a processor or two of its own. That processor will dispatch OpenCV or OpenVX requests to the subsystem, which, in turn, passes off low-level CNN functions to the PE matrix. Your image sensors and a big frame buffer are not part of the subsystem. Those live elsewhere in the SoC and connect via AXI.

So… couldn’t you just use a GPU for your embedded vision system? Indeed you could; some people do. But your average nVidia or ATI GPU is geared for graphics output, not input. It’s laden with math processors, which is good, but it doesn’t have much hardware for data movement or pattern matching, which is bad. It works, but it consumes a lot of power. It’s just not an efficient use of a GPU.

The DesignWare EV Processor subsystem, on the other hand, is designed to do the opposite function: to take a frame buffer and search through it. The PE matrix is internally cross-connected and dynamically reconfigurable to support different CNN graphs. Synopsys says a four-CPU configuration with eight PEs running at 500 MHz can perform facial recognition at 30 frames/sec while burning just 175 mW of power. That’s orders of magnitude more power-efficient than using a GPU, according to the company.
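To put the company’s figures in per-frame terms, here is a bit of back-of-the-envelope arithmetic. It uses only the three numbers Synopsys quotes (175 mW, 30 frames/sec, 500 MHz). The function name `per_frame_budget` is made up for this sketch, and the cycle count is simply clock rate divided by frame rate, so it says nothing about how the work is actually spread across the CPUs and PEs.

```python
def per_frame_budget(power_mw, fps, clock_mhz):
    """Return (energy per frame in mJ, clock cycles available per frame).

    Hypothetical helper: plain division of the quoted figures.
    """
    # mW is mJ per second, so dividing by frames per second gives mJ per frame
    energy_mj = power_mw / fps
    # Clock cycles that elapse during one frame period
    cycles = clock_mhz * 1e6 / fps
    return energy_mj, cycles


energy_mj, cycles = per_frame_budget(power_mw=175, fps=30, clock_mhz=500)
print(f"{energy_mj:.2f} mJ per frame, {cycles / 1e6:.1f} M cycles per frame")
```

That works out to a little under 6 mJ and roughly 16.7 million clock cycles for each frame, assuming the quoted power is the average over the whole frame period.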

What would you use this power for? Well, Microsoft uses it for games, with its Xbox Kinect peripheral. New TVs will be using gesture recognition to replace the remote control. Security and surveillance applications are obvious. And automakers are already designing cars that detect speed limit signs by the side of the road and automatically slow the car. (Could they also accelerate it if you’re driving below the speed limit? In the left lane? Please?) In short, we’ll find uses for it, especially as the price and power budget come down. Combined with a Bluetooth earpiece, we’ll soon be able to wave our hands in the air and talk to people who aren’t there, all at the same time.

Thanks for the article, Jim. It’s especially exciting to see what companies like Xilinx are doing with embedded vision in the area of SoCs and accelerating image processing algorithms via hardware. With the growth of expectations and features common to modern systems, my guess is we’re not too far off from FPGAs/SoCs playing a central role in high-volume embedded systems.