To paraphrase Mark Twain, there are lies, damn lies, and benchmarks. People have been fudging their benchmark results for as long as there have been benchmarks. It’s easy enough to do. Indeed, it’s surprisingly hard not to distort benchmark results, even for the most scrupulously honest engineers. Measuring CPU performance ain’t like drag racing cars, and any comparison of benchmark scores inevitably boils down to an argument over what, specifically, you’re measuring.

Wading into this morass is EEMBC, the nonprofit organization that threw itself on the benchmarking grenade almost 20 years ago. EEMBC (which stands for Embedded Microprocessor Benchmark Consortium, but with a generous extra E) has produced a number of specialty benchmarks over the years. They have tests that measure real-time performance, multicore ability, automotive workloads, and much more. What the group hasn’t had until now is a straight-up floating-point benchmark.

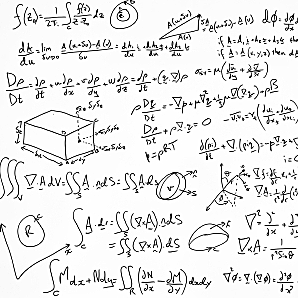

Why now? ’Cause more and more embedded processors are including FPUs, and even low-end, sub-$5 chips now have floating-point capability. It’s not that these little MCUs need to perform scientific calculations or anything; the FP comes in handy mostly for motor control. As anyone who’s done robotics, kinematics, or motion control can tell you, accurate math is absolutely mandatory when you’re keeping track of the positions of things. The inevitable rounding errors that come with integer arithmetic (even when you’re using 32-bit numbers) add up surprisingly quickly, and within a few minutes you don’t really know where your robot arm is anymore. Floating-point math virtually eliminates those rounding errors and the scary imprecision.
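To make the drift concrete, here is a minimal Python sketch of a deliberately naive position tracker. Everything in it is an illustrative assumption rather than anything taken from a real controller: the function names, the 10 mm/s axis speed, and the 3,000 Hz tick rate are all made up. The integer version throws away the fractional micrometers on every tick; the floating-point version keeps them.

```python
def integer_position_um(velocity_um_per_s, ticks_per_s, seconds):
    """Track position in whole micrometers, truncating the step size."""
    # Integer division drops the fractional micrometers of each tick's travel.
    step_um = velocity_um_per_s // ticks_per_s
    position_um = 0
    for _ in range(ticks_per_s * seconds):
        position_um += step_um
    return position_um


def float_position_um(velocity_um_per_s, ticks_per_s, seconds):
    """Track position in micrometers using floating-point accumulation."""
    step_um = velocity_um_per_s / ticks_per_s
    position_um = 0.0
    for _ in range(ticks_per_s * seconds):
        position_um += step_um
    return position_um


# Hypothetical axis: 10 mm/s, 3,000 control ticks per second, one minute.
print(integer_position_um(10_000, 3_000, 60))
print(float_position_um(10_000, 3_000, 60))
```

After one simulated minute the true position is 600,000 micrometers. The integer tracker reports 540,000, a full 60 mm short, while the floating-point tracker lands within a small fraction of a micrometer. A careful fixed-point design that carries the remainder forward would do far better than this naive version, but that is exactly the kind of extra bookkeeping an FPU lets you skip.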

Combine inexpensive processors, complex math, and real-time performance needs and you have a recipe for benchmark confusion. Until now, developers typically compared chips running either their own in-house code (which meant laying hands on the chip and porting the code), or running one of a handful of freely available FP benchmarks such as Linpack, Whetstone, or Livermore loops. Either technique might (or might not) provide a rough guide to which chips provide better FP performance than others, but neither method likely measured what you really wanted to know. Today’s FP benchmarks are really just inner loops: kernels of a larger algorithm that have been passed around from generation to generation as quick-and-dirty code samples. They’re not real benchmarks in the sense of being controlled, repeatable tests.

Moreover, nobody “owns” or controls Linpack, et al. That means you’re free to adjust the code as you see fit, as many have done. That’s fine for in-house tuning, but it does nothing to make these freebie FP nuggets useful as comparison tests. What’s needed is a fixed reference point: a benchmark that can be used to compare different chips running on different days using different compilers, and so on.

What EEMBC has done is to take the Whetstones of the world and fold them all into one bona fide benchmark suite that it oversees, called FPMark. The inner loops are pretty much the same Livermore loops that are already (ahem) floating around, but codified, sanitized, and “harnessed” in such a way that they’re impervious to ham-fisted or malicious tuning. In all, FPMark exercises 10 different floating-point algorithms (including some written from scratch just for FPMark) for a total of 53 different workloads.

Why so many different variations? Each kernel comes in both single-precision and double-precision versions, because some chips support only one or the other. Each kernel is also run through a small, medium, and large data set – again, because some chips can handle only smallish address ranges or data blocks. Most kernels get run through all six combinations (two precisions times three data-set sizes), giving a good indication of how the chip performs on that task under all conditions.
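The arithmetic behind those variations is easy to sketch. The Python snippet below simply crosses the two precisions with the three data-set sizes for a single kernel. The kernel name and the label format are hypothetical, chosen for illustration; they are not FPMark's actual workload names.

```python
from itertools import product

PRECISIONS = ("single", "double")
DATASET_SIZES = ("small", "medium", "large")


def variants_for(kernel):
    """Return one label per precision/data-set pairing for a kernel."""
    return [
        f"{kernel}-{precision}-{size}"
        for precision, size in product(PRECISIONS, DATASET_SIZES)
    ]


# "example_kernel" is a placeholder name, not a real FPMark kernel.
for label in variants_for("example_kernel"):
    print(label)
```

Ten kernels with six variants apiece would come to 60 workloads, so a total of 53 is consistent with "most," not all, kernels getting the full treatment.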

What if you’re interested only in single-precision, small-dataset results? No problem. Programmers using $5 MCUs with lightweight FPUs can run just the Lilliputian modes, while their colleagues in the next building can exercise the full fury of a Xeon 5500 by running the entire test suite. The beauty is, it’s the same code either way, so the results are directly comparable.

Got a multicore and/or multi-threaded processor? FPMark has that covered, too. Assuming your compiler supports it, FPMark will run multiple instances on multiple virtual cores. Note that it’s not parallelized; the component tasks are not split up and vectorized. Instead, a full instance of FPMark is run concurrently on each core. This more closely represents how designers are likely to run their own FP code.

When it’s all over, FPMark takes all 53 results (or fewer, depending) and weights them equally. The geometric mean of the results becomes your FPMark score. If you’ve opted for the lightweight data sets, you get a MicroFPMark score. Individual scores are available, too, if you’re interested only in certain tasks or variations.
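For anyone rusty on geometric means, the calculation can be sketched in a few lines of Python. This is only an illustration of an equally weighted geometric mean, not EEMBC's actual scoring code, and the sample scores are invented for the example.

```python
import math


def geometric_mean(scores):
    """Equally weighted geometric mean of a list of positive scores."""
    if not scores:
        raise ValueError("need at least one score")
    if any(score <= 0 for score in scores):
        raise ValueError("scores must be positive")
    # Averaging the logarithms avoids overflow from multiplying many scores.
    mean_log = sum(math.log(score) for score in scores) / len(scores)
    return math.exp(mean_log)


# Invented per-workload scores, not real FPMark results.
example_scores = [2.0, 8.0, 4.0]
print(geometric_mean(example_scores))
```

The appeal of a geometric mean is that it works on ratios: doubling any one of n scores multiplies the overall result by the same factor (the nth root of 2), no matter whether that workload's raw number was large or small. An arithmetic mean, by contrast, would let the workloads with the biggest raw numbers dominate. Python 3.8 and later also ship `statistics.geometric_mean`, which does the same job.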

As with most EEMBC benchmarks, you’re free to publish your results to the world, with or without EEMBC’s approval. If you want the gold seal of respectability, EEMBC will verify and certify your results for free; you just have to provide the hardware/software setup you used and wait. Interestingly, EEMBC’s engineers have found that certifying vendors’ results doesn’t uncover cheating as often as you might think. In fact, EEMBC gets better scores than the vendor did about half the time. That’s usually due to a change in the compiler between the time the vendor ran the tests and when EEMBC made its own run. Or it’s simple incompetence. Vendors may give the job of benchmarking to a junior intern who lacks experience in compiler tweaking or who doesn’t understand the finer points of memory allocation. Either way, an EEMBC-certified result is everyone’s guarantee that yes, this chip can do these tasks that fast.

As with most benchmarks, FPMark says as much about the compiler and software tools as it does about the chip. Software vendors are often just as interested in benchmarks as chip makers, and a few have already discovered shortcomings in their floating-point code. Even if you’re not interested in drag racing processors, it might be worth running FPMark just to see how well your compiler stacks up.