A couple of weeks ago as I pen these words, I heard that the folks from General Micro Systems had taken home three “Best of Show” awards from the recent AUSA 2022 annual meeting and exposition, which was held 10-12 October in Washington DC, and whose purpose was to highlight computing innovations for building the Army of 2030.

On the off chance you don’t keep your ear to the ground regarding this sort of thing, the Association of the United States Army (AUSA) is a nonprofit educational and professional development association serving America’s Army and supporting a strong national defense. As they say on their website: “AUSA provides a voice for the Army, supports the Soldier, and honors those who have served in order to advance the security of the nation.” The AUSA 2022 annual meeting and exposition boasted 33,000+ attendees, 650+ exhibitors, 150+ sessions, and 80+ countries (I’m not 100% sure exactly what the last number refers to, but it’s certainly a lot of countries).

The reason for my interest in hearing that the folks from General Micro Systems (GMS) had won these “Best of Show” awards is that, just a week or two before the show, I was chatting with GMS CEO and Chief Architect Ben Sharfi, who was regaling me with details about the new X9 Spider modular and super-dense X9 distributed architecture from GMS. Ben says the X9 Spider boasts the most innovative, rugged, and reliable computing technologies available for the next-generation Army.

Before we venture into the lair of the X9 Spider, let’s first set the scene. Beware! As is usual with electronics in general, and especially with anything to do with the military, there is a motley morass of acronyms we’re going to have to wade through, so let’s all don our rubber wellington boots before we proceed (as fate would have it, I’m already wearing mine).

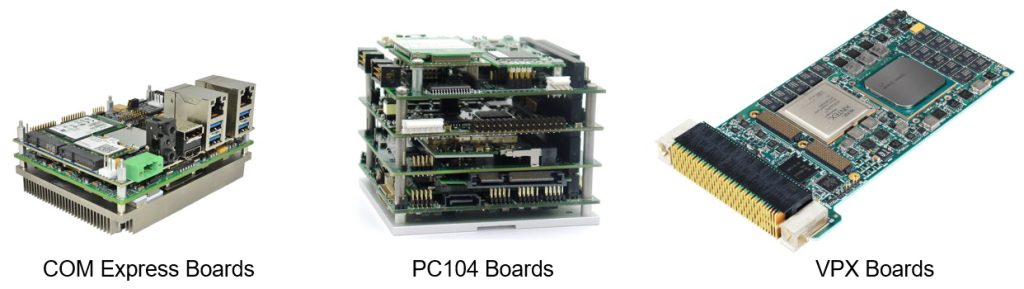

There currently exist a variety of bus-based architectures in which a collection of bus-based boards is gathered in a single chassis. This pretty much defines the concept of a centralized computing architecture. Examples of these bus-based architectures are COM Express, PC104, and VPX.

Example bus-based boards (Source: GMS)

PC/104 (or PC104) is a family of embedded computer standards in which both form factors and computer buses are defined by the PC/104 Consortium. Its name derives from the 104 pins on the inter-board Industry Standard Architecture (ISA) connector in the original PC/104 specification and has been retained in subsequent revisions, despite changes to the connectors. The PC/104 bus and form factor were originally devised by Ampro in 1987 and later standardized by the PC/104 Consortium in 1992. The specifications released by the PC/104 Consortium define multiple bus structures (ISA, PCI, PCI Express) and form factors (104, EBX, EPIC), <sarcasm mode on> so there’s no chance of confusion there <sarcasm mode off>.

The COM Express standard, which flaunts a computer-on-module (COM) form factor, was first released in 2005 by the PCI Industrial Computer Manufacturers Group (PICMG). This standard defined five module types, each implementing different pinout configurations and feature sets on one or two 220-pin connectors. It also defined two module sizes (later expanded to four), and eight different pinouts are now defined in the specification, <sarcasm mode on> so, once again, no possibility of confusion <sarcasm mode off>.

Meanwhile, the term VPX (Virtual Path Cross-Connect), also known as VITA 46, refers to a set of standards commonly used by defense contractors for connecting components of a computer. Defined by the VMEbus International Trade Association (VITA) working group starting in 2003, VPX was first demonstrated in 2004 and became an ANSI standard in 2007. The OpenVPX System Specification, which was a development of, and complementary to, VPX, was ratified by ANSI in June 2010.

The current VPX/OpenVPX standard defines 16 different profiles, where each profile has hundreds of pins of interconnect. Just for giggles and grins, I’ve been told that every supplier manages to deviate from the standard in some way. I’m reminded of the phrase “herding cats.” In turn, this reminds me of the classic cat herding video on YouTube.

The bottom line is that, although VPX/OpenVPX employs lots of very compelling buzzwords, the reality is that you cannot simply swap units from different suppliers.

Before we proceed, there are a couple of other terms that it may be handy to know at some stage in the future, so I’ll quickly cover them here. Let’s start with the Air Transport Rack (ATR), which has been the de facto standard form factor for electronic equipment in military ground vehicles and aircraft for the past 50 years. The idea here is that, much like a PC at home, everything (processor, graphics card, network card, power supply, cooling fan, etc.) is stuffed into one ruggedized box, forming a centralized computing system.

When you talk to anyone in the military about this sort of thing, you may well hear them muttering about “SOSA” and “MOSA” under their breath. The Sensor Open Systems Architecture (SOSA) consortium was formed in 2017 to define an open sensor environment for Command, Control, Communications, Computers, Intelligence, Surveillance and Reconnaissance (C4ISR) systems (try saying that 10 times quickly). Two years later, in 2019, a US Department of Defense (DoD) Tri-Service memo was published calling for a Modular Open Systems Approach (MOSA) to be used to the maximum extent possible for future weapons systems. What both of these initiatives are really saying is that it’s critically important to ensure interoperability and commonality across key hardware and software in the military systems of the future.

All of which brings us back to GMS. As Ben told me, “We already have the most complete VPX product line in the market, but we decided that we could do something better, which is why we’ve introduced a brand-new technology in the form of the X9 Spider.”

There’s a lot to cover here, so I’m going to skim over things and present only a high-level view with which I hope to tempt and tease you, after which you can bounce over to the GMS website or contact Ben to learn more if you so desire. Let’s start with the host CPU, of which there are two models. First, we have the X9 Xeon W Host CPU boasting an Intel Tiger Lake-H processor with up to 8 cores, 64GB of DDR4 ECC DRAM, 4x Thunderbolt 4 with DisplayPort and 100W power (available as copper or fiber cable with power delivery over 50 meters), an NVIDIA RTX-5000 GPGPU, dual 100GigE ports with fiber interface, quad M.2 sites to support Wi-Fi, MIL-STD-1553, Cellular, and GPS, and… so much more.

One of the Spider X9 host CPU modules (Source: GMS)

Alternatively, we have the X9 Xeon D Host CPU boasting an Intel Ice Lake processor with up to 20 cores, 64GB of DDR4 ECC DRAM, 2x Thunderbolt 4 with DisplayPort and 100W power (available as copper or fiber cable with power delivery over 50 meters), an NVIDIA RTX-5000 GPGPU, quad 100GigE ports with fiber interface, quad M.2 sites to support Wi-Fi, MIL-STD-1553, Cellular, and GPS, and… once again… so much more.

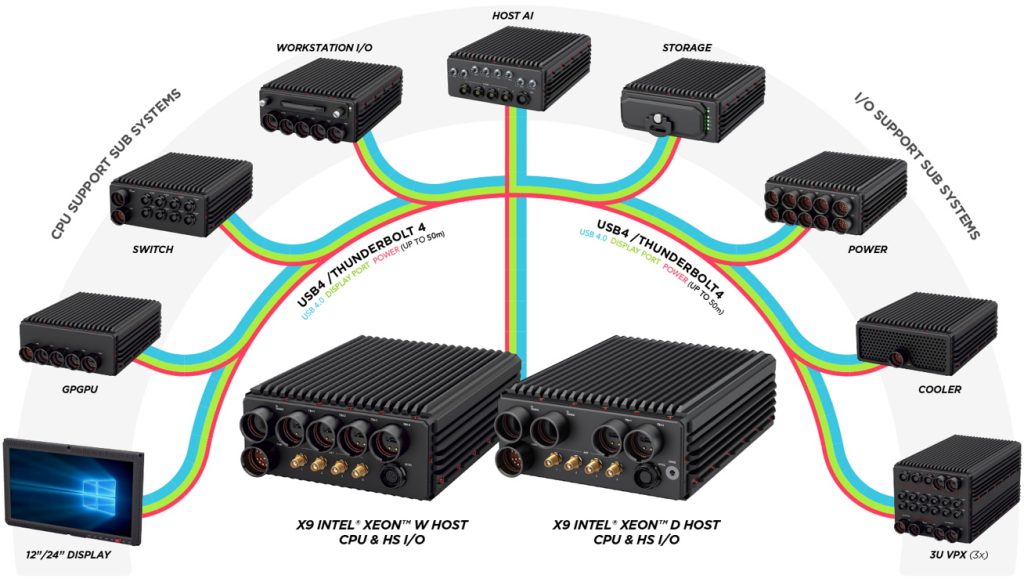

In addition to the host CPU modules, the X9 Spider suite boasts a host of other modules, including Displays, GPGPU, Switch, Workstation I/O, Host AI, Storage, Power, Cooler, and 3U VPX.

The current Spider X9 suite of modules (Source: GMS)

There are several things that aren’t readily apparent in the image above, such as the fact that all of the processing modules are sealed units, thereby allowing them to be operated in hostile environments. Also, all of the modules include voltage, temperature, shock, and tamper sensors to ensure safe operation, and they are MIL-STD-810G, MIL-STD-1275D, MIL-S-901D, MIL-STD-461F, DO-160D, and IP67 compliant.

For designers wishing to reuse existing VPX boards, the 3U VPX module accommodates three 3U OpenVPX boards. With 4x Thunderbolt 4 connectors on the front and a fully customizable rear I/O panel, this little scamp is less than a quarter of the size of a traditional ATR box.

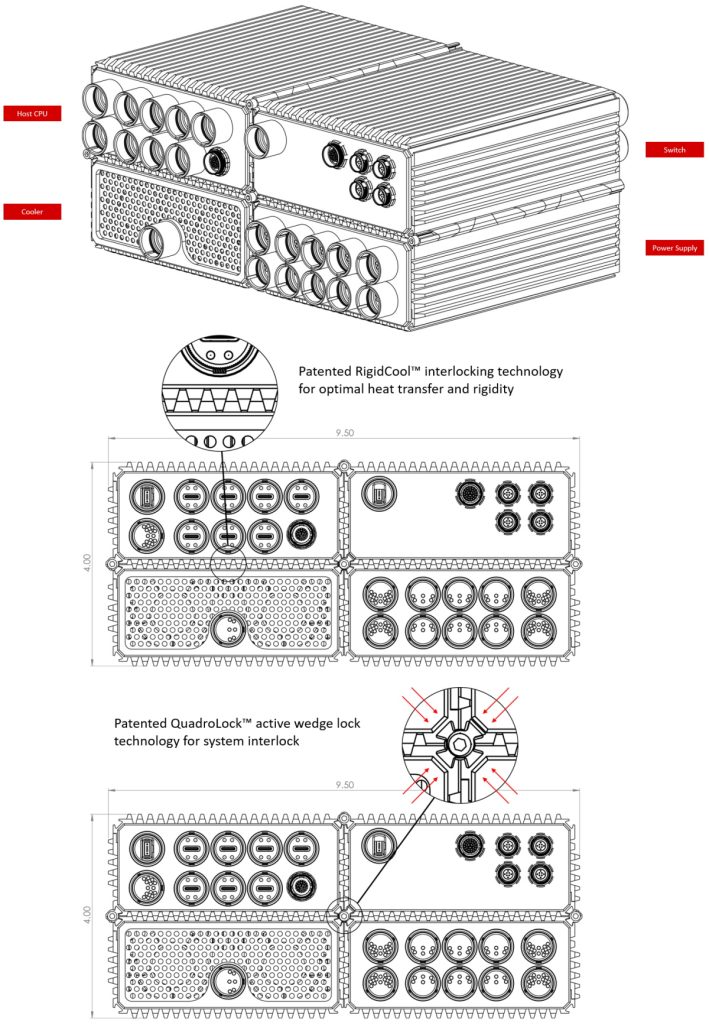

The way in which Spider X9 modules can be physically connected to realize a desired system configuration is illustrated below. Observe how, in addition to rigidity, the interlocking technology provides optimal heat transfer between the cooling module (or modules) and the other modules.

Connecting Spider X9 modules together (Source: GMS)

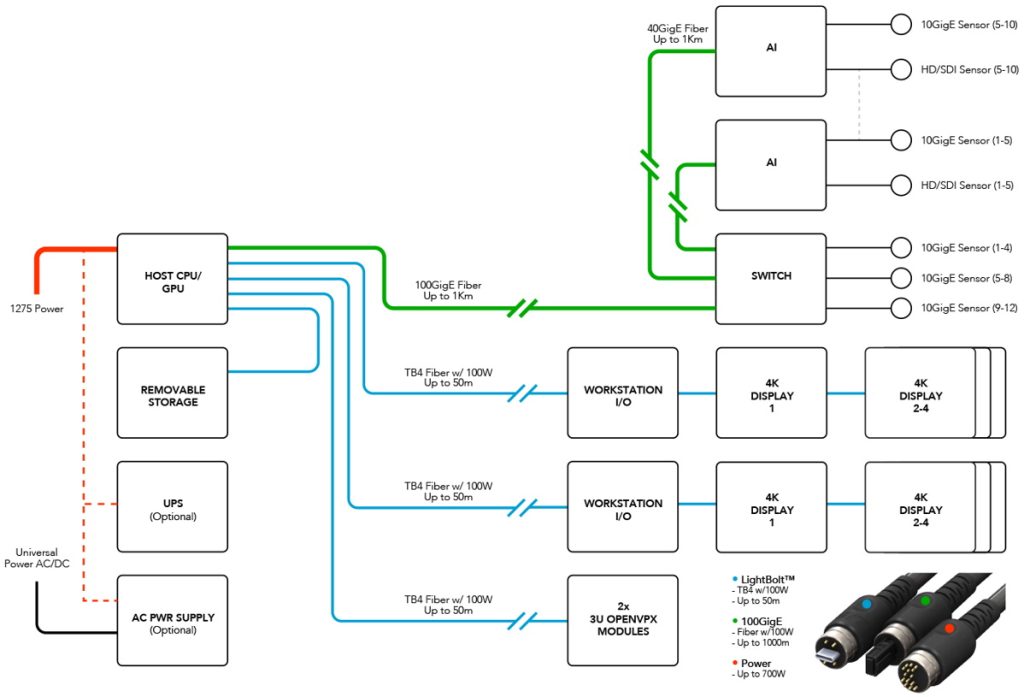

Now, although what I think of as the “Rainbow Image” shown earlier is pretty, it’s the version below that really grabbed my attention and made me say “Wow!”

Connecting Spider X9 modules together (Source: GMS)

Observe the pass-through capabilities of the Thunderbolt 4 interfaces, as illustrated by the blue lines feeding the displays.

In addition to the fact that all GMS products are proudly designed and manufactured in the USA (that’s always music to my ears), another great thing about this is that all of the high-speed interfaces used in the Spider X9 system are open standards. This means other vendors can create their own modules, which should make devotees of SOSA (of which GMS is a member) and MOSA jolly happy.

I don’t usually spend a lot of time looking at what the military is doing, but I have to say I think that those who don the undergarments of authority and stride the corridors of power should take a serious look at the Spider X9 system from GMS. How about you? Do you have any thoughts you’d care to share about anything you’ve seen here (including any tales of your own experiences with military equipment in the field)?