I remember reading a quote by a famous actor whose name I no longer recall (that’s how famous they were). It might have been Errol Flynn. It might have been Harrison Ford. It might have been someone else completely. The actual person isn’t important; it’s what they said that stuck in my mind.

And what they said was: “If the director or producer of a new film comes to you and asks, ‘Can you ride a horse?’ You say ‘Why, yes I can,’ and then you learn to ride a horse as quickly as possible.”

The reason this just popped into my mind was that I was recently asked, “Do you want to talk to someone about the coming generation of software-defined automobiles?” And, rather than reply, “What’s a software-defined automobile?” I immediately responded, “Why, yes I do!”

Thus it was that I found myself chatting with Jan Becker, who is President, CEO, and Co-Founder of Apex.AI. In his spare time, Jan also acts as the Managing Director of Apex.AI GmbH, which is Apex.AI’s subsidiary in Germany. As we will discuss, Apex.AI has developed a breakthrough, safety-certified, developer-friendly, and scalable software platform for mobility systems.

We are going to focus on automobiles for the purposes of our discussions here, but we should note that Apex.AI’s platform addresses all safety-critical mobility applications, including automated passenger vehicles, automated buses, automated trucks, robotics, mobile machines used in agriculture, construction, and mining, and… the list goes on.

Addressing all safety-critical mobility applications (Source: Apex.AI)

Just to establish Jan’s bona fides, it’s probably worth noting that, prior to founding Apex.AI, he was Senior Director at Faraday Future responsible for Autonomous Driving and Director at Robert Bosch LLC responsible for Automated Driving in North America. Jan also served as a Senior Manager and Principal Engineer at the Bosch Research and Technology Center in Palo Alto, CA, USA, and as a senior research engineer for Corporate Research at Robert Bosch GmbH, Germany.

Since 2010, Jan has been a Lecturer at Stanford University for autonomous vehicles and driver assistance. Previously, he was a visiting scholar at the University’s Artificial Intelligence Lab and a member of the Stanford Racing Team for the 2007 DARPA Urban Challenge. We could go on and on about Jan’s accomplishments, but then we wouldn’t have time for me to tell you all the cool stuff I wish to impart.

We started with Jan asking me, “How much automotive background do you have?” When I responded, “Well, I’ve driven a car,” he kindly pretended that I was joking.

Jan and your humble narrator covered a lot of ground in our conversation, but we can summarize things as follows. Let’s start with the fact that, assuming your car is not more than a couple of years old, it probably contains more than 100 small computers in the form of microcontrollers scattered throughout. In addition to big things like the engine, transmission, and brakes, these little rascals are everywhere. For example, every door and each window may contain one or more processors.

Unfortunately, for historical reasons, the software for each processor was probably developed in isolation. The traditional way of creating a car is for the automotive vendor to set the ball rolling by defining what the new vehicle is going to look like (system-wise, not aesthetically speaking), breaking things down into subsystems, and then sending these subsystems off to Tier 1 suppliers who develop both the hardware and the software. When I say, “the traditional way,” this is still the way a lot of manufacturers do things to this day.

This type of distributed implementation poses multiple issues for both the manufacturer and the end user. In the case of the manufacturer, it takes a lot of time and resources to develop and maintain myriad disparate subsystems. From the end user’s point of view, the subsystems don’t work in a coordinated way.

As one simple example, one subsystem may be in charge of forward collision warning and automatic emergency braking, while another subsystem is in charge of interfacing to the user’s smartphone. “Well, that sort of makes sense,” you may say, but what about the situation in which a distracted pedestrian ambles out in front of your car, thereby triggering a collision warning, at the same time as your phone announces an incoming call from your mother (we can only hope your dear old mom isn’t the cause of both these events)? In a smart system running on a single integrated platform, the car would hold the call until the rogue pedestrian situation was resolved.
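To make the "hold the call" idea concrete, here is a minimal sketch of what such coordination might look like once both functions share one platform. Everything in it (the `NotificationArbiter` class, its method names, and the simple hold-then-release policy) is a hypothetical illustration, not anything taken from Apex.AI's software.

```python
from dataclasses import dataclass, field


@dataclass
class NotificationArbiter:
    """Hypothetical arbiter: holds phone calls while a safety event is active."""

    safety_event_active: bool = False
    held: list = field(default_factory=list)       # calls waiting for the hazard to clear
    delivered: list = field(default_factory=list)  # calls announced to the driver

    def on_collision_warning(self, active: bool) -> None:
        """Called by the (assumed) collision-warning function when its state changes."""
        self.safety_event_active = active
        if not active:
            # Hazard resolved: release held calls in the order they arrived.
            self.delivered.extend(self.held)
            self.held.clear()

    def on_incoming_call(self, caller: str) -> None:
        """Called by the (assumed) smartphone interface when a call arrives."""
        if self.safety_event_active:
            self.held.append(caller)
        else:
            self.delivered.append(caller)
```

With this sketch, a call from "Mom" that arrives while the warning is active lands in `held` and moves to `delivered` only after `on_collision_warning(False)` is called. In two isolated subsystems, neither side would have the other's state to consult.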

This is just one of the reasons that automotive manufacturers have started to migrate from traditional domain architectures to what are known as zonal architectures in which a smaller number of powerful processors are linked by a high-speed network. Each of these zone controllers may contain one or more system-on-chip (SoC) devices, and each SoC may contain multiple processor cores. Furthermore, these processor cores aren’t the simple microcontrollers of yesteryear, but are instead state-of-the-art 64-bit microprocessors from Intel and Arm running at gigahertz clock speeds.

But this is just the hardware. What’s missing is having a single software platform. The way Jan described this to me is that typical automotive software environments are akin to trying to run Android software and iPhone software and Nokia software that was developed 10 years ago and Blackberry software that was developed 20 years ago and Windows Mobile software that doesn’t exist anymore all on your brand-new smartphone.

The reason Android has been so successful in the mobile world is that the base software is common to all manufacturers. When it comes to application developers, they don’t need to know who manufactured the processor or the sensors or the cameras in the smartphone or tablet because the base software provides an abstraction layer that shields them from all of the nitty-gritty details.

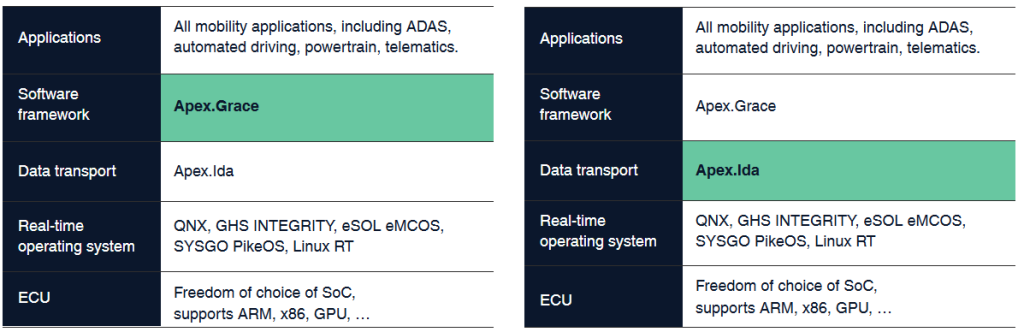

This is what the folks at Apex.AI are doing for mobility platforms in general and for automobiles in particular. They are providing a base layer in the form of Apex.OS, which is a software suite comprising the Apex.Grace SDK and Apex.Ida middleware.

As an aside, Apex.Grace honors the legacy of Grace Hopper, “the queen of code.” Hopper earned her Ph.D. in mathematics from Yale University in 1934, making her one of the first women to do so. During World War II, she joined the U.S. Navy, where she programmed the Mark I computer. She retired from the U.S. Navy as a rear admiral in 1986.

Meanwhile, Apex.Ida pays tribute to Ida Rhodes, the computer pioneer who earned her undergraduate degree in mathematics at Cornell University in 1923. In the early 1950s, Rhodes co-designed the C-10 programming language for the Census Bureau’s UNIVAC I, the first U.S.-manufactured commercial computer. She created and programmed the computers used by the Social Security Administration, and her legacy continues to impact the world of coding.

Apex.OS is a software suite comprising the Apex.Grace SDK and Apex.Ida middleware (Source: Apex.AI)

The way I think of Apex.Grace is as a mixture of a hardware abstraction layer (HAL) and a software abstraction layer (SAL). Users can write their applications in C++ using the software development kit (SDK) and application programming interface (API) provided by Apex.AI. These applications can run in any Apex.OS-equipped automobile, irrespective of the underlying software (in the form of a real-time operating system) or hardware (in the form of SoCs containing Arm or Intel x86 processors). It doesn’t matter whether a car boasts one zone or multiple zones; the same application software can run in all cases.

Hmmm, that provides a high-level view of Apex.Grace, but how can we describe Apex.Ida? Well, let’s start with the fact that traditional (or should we say “legacy”?) automotive communications employ the CAN bus, but that supports data rates of only around 500 kbps, which is almost nothing today (it’s barely enough to communicate engine data). With sensors like cameras, radar, lidar, etc., today’s vehicles need data rates that are 1,000, 10,000, or 100,000 times faster, so they support a range of communications protocols, including Ethernet.
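For a sense of scale, the multipliers above can be turned into actual numbers with a few lines of arithmetic. The sketch below starts from the roughly 500 kbps CAN figure; the camera parameters (1920×1080 pixels, 24 bits per pixel, 30 frames per second, uncompressed) are assumptions chosen purely for illustration and do not describe any particular vehicle sensor.

```python
CAN_BPS = 500_000  # the "around 500 kbps" CAN figure quoted above


def scaled_rate_bps(multiplier: int) -> int:
    """Data rate that is `multiplier` times the CAN figure, in bits per second."""
    return CAN_BPS * multiplier


def raw_video_bps(width: int, height: int, bits_per_pixel: int, fps: int) -> int:
    """Bit rate of an uncompressed video stream, in bits per second."""
    return width * height * bits_per_pixel * fps


if __name__ == "__main__":
    for m in (1_000, 10_000, 100_000):
        print(f"{m:>7,} x CAN = {scaled_rate_bps(m) / 1e9:g} Gbps")

    # Assumed camera: 1080p, 24 bits per pixel, 30 fps, no compression.
    camera = raw_video_bps(1920, 1080, 24, 30)
    print(f"raw camera stream is about {camera / 1e9:.2f} Gbps, "
          f"roughly {camera // CAN_BPS:,} x CAN")
```

The three multipliers work out to 500 Mbps, 5 Gbps, and 50 Gbps respectively. Under the stated assumptions, a single uncompressed camera alone would need about 1.5 Gbps, which is why a 500 kbps bus is a non-starter for this kind of sensor data.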

Apex.Ida abstracts all of this. The application developers make use of a single API, and Apex.Ida employs whatever communication protocols and transport mechanisms are available in that vehicle to best satisfy the unique requirements of each application.
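The general pattern of "one API, many transports" can be sketched in a few lines. To be clear, this is not Apex.Ida's API: the `Transport` and `Publisher` names, the bandwidth-only selection rule, and the example link speeds are all hypothetical, and a real middleware would weigh far more than bandwidth (latency, reliability, safety requirements, and so on).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transport:
    """A hypothetical link available in a given vehicle."""
    name: str
    max_bps: int


def pick_transport(available, required_bps):
    """Pick the slowest available transport that still meets the requirement.

    This "just enough bandwidth" rule is an assumption made for illustration.
    """
    candidates = [t for t in available if t.max_bps >= required_bps]
    if not candidates:
        raise ValueError(f"no transport can carry {required_bps} bps")
    return min(candidates, key=lambda t: t.max_bps)


class Publisher:
    """The single interface the application sees, whatever the link underneath."""

    def __init__(self, topic, required_bps, available):
        self.topic = topic
        self.transport = pick_transport(available, required_bps)

    def publish(self, payload: bytes) -> str:
        # A real implementation would hand the payload to the chosen link;
        # here we only report which link would be used.
        return f"{self.topic} via {self.transport.name}: {len(payload)} bytes"


# Hypothetical vehicle with a CAN bus and a gigabit Ethernet link.
VEHICLE_LINKS = [Transport("CAN", 500_000), Transport("Ethernet", 1_000_000_000)]
```

In this sketch, `Publisher("engine/rpm", 10_000, VEHICLE_LINKS)` ends up on CAN while `Publisher("camera/front", 200_000_000, VEHICLE_LINKS)` ends up on Ethernet, yet the application code calls the same `publish()` method in both cases.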

How is all this made possible? Well, in order to achieve real-time performance we need deterministic execution, and in order to realize deterministic execution we need containers that support deterministic execution. Historically, completely different container solutions have existed for Intel CPUs and for Arm CPUs. What the folks at Apex.AI have done is to rewrite everything from the ground up, resulting in a single container class that runs in real time in a hardware-agnostic way on both Intel and Arm processors. To the best of my knowledge, Apex.AI is the only company that provides a safety-certified solution of this kind.

I fear that I’ve only been able to provide a tempting taster with respect to the wealth of capabilities provided by Apex.OS in the form of Apex.Grace and Apex.Ida. However, the folks at Apex.AI would love to elucidate and explicate further (tell them “Max says Hi!”). In the meantime, as always, I’d love to hear what you think about all of this.