We love firsts in this industry, despite the fact that “the first” anything doesn’t necessarily win the market. Sometimes the first to market does win. Sometimes it doesn’t. When it comes to microprocessors, there’s an ongoing battle to claim bragging rights on being the first.

Usually, the argument revolves around three contenders:

- The Intel 4004 microprocessor, part of the MCS-4 chipset

- The AiResearch MP944

- The Four-Phase AL1, one chip in a microprocessor chipset

These are all microprocessors. The question is, which of these was the first?

First what?

First microprocessor? First single-chip microprocessor? Just what is a microprocessor?

Lots of questions.

I’ve been designing systems for and with microprocessors since 1975. I’ve been writing about them since 1978. I’ve served as Editor-in-Chief of two leading electronics trade publications that discussed microprocessors ad nauseam. I’ve published many articles, books, and book chapters on microprocessor IP. Unsurprisingly, I’ve got an opinion on this topic. Here it is:

A microprocessor needs:

- An ALU to perform arithmetic and logical operations

- A register set including data register(s), address register(s), control/status register(s), stack register(s) or stack pointer register(s), and instruction register(s)

- A program counter

- An instruction-fetch mechanism and program sequencer

- An instruction decoder (may use one or more microcode levels for decoding)

- A bus interface

If all six of these elements are present, I call it a microprocessor. If all six of these elements are present on one piece of silicon, I call it a single-chip microprocessor. Other people seem to have other definitions. These are mine.
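The two definitions above boil down to a simple set test. Here is a minimal Python sketch of that test. The element labels, the `classify` function, and the example designs are illustrative assumptions of this sketch, not descriptions of any real chip.

```python
# The six elements from the checklist above, as illustrative labels.
REQUIRED = frozenset({
    "alu",
    "register_set",
    "program_counter",
    "fetch_and_sequencer",
    "instruction_decoder",
    "bus_interface",
})

def classify(chips):
    """Classify a design given a mapping of chip name -> set of elements on that chip."""
    all_elements = set().union(*chips.values()) if chips else set()
    if not REQUIRED <= all_elements:
        return "not a microprocessor"
    # Single-chip only if one piece of silicon holds all six elements.
    if any(REQUIRED <= set(elements) for elements in chips.values()):
        return "single-chip microprocessor"
    return "microprocessor (multi-chip)"

# Hypothetical designs, for illustration only.
one_chip = {"cpu": set(REQUIRED)}
split = {
    "slice": {"alu", "register_set"},
    "control": REQUIRED - {"alu", "register_set"},
}
slice_only = {"slice": {"alu", "register_set"}}

print(classify(one_chip))
print(classify(split))
print(classify(slice_only))
```

The point of the sketch is that the single-chip question is asked per chip, while the microprocessor question is asked of the chipset as a whole.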

Here’s the controversy. Using my definitions, the Intel 4004, the AiResearch MP944, and the Four-Phase AL1 are all microprocessors. The Intel 4004 – developed in 1969 and 1970 but only announced publicly in November, 1971 – is the only one of the three that qualifies as a single-chip microprocessor, by my definition. I am sure that there are people who are going to argue with me about that. Bring it on, folks, but only after reading this entire article, please.

Here is my reasoning:

The Intel MCS-4 was a very elegant design and an ideal chipset for creating embedded systems. Its 4-bit orientation was perfect for Busicom’s intended purpose – making a desk calculator – because it was designed for BCD (binary-coded decimal) arithmetic. One of the very big changes Intel’s 4004 architecture made was to shift Masatoshi Shima’s calculator design from being decimal-oriented (all mechanical calculators used decimal wheels for computation, and the arithmetic algorithms were well understood), to being BCD-oriented. This was Ted Hoff’s brilliant transformation of the original problem, which was required to fit everything on one chip. Hoff’s architecture demonstrated a deep mathematical insight into the problem being solved.

The MCS-4 chipset was therefore ideal for any machine that dealt with numbers in the machine/human interaction zone. That defines pretty much all embedded systems. Machines don’t like BCD very much – they like pure binary numbers – but humans need to see decimal digits. BCD is the compromise. It’s a numeric Esperanto if you will.
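To make the compromise concrete, here is a minimal Python sketch of decimal arithmetic on 4-bit binary hardware: add the digits in binary, then add 6 whenever the result is not a valid decimal digit. The function names are hypothetical, and this illustrates the general decimal-adjust technique rather than the 4004’s actual circuitry.

```python
def bcd_add_digit(a, b, carry_in=0):
    """Add two BCD digits (0-9) using binary addition plus a decimal adjust."""
    if not (0 <= a <= 9 and 0 <= b <= 9):
        raise ValueError("BCD digits must be in the range 0-9")
    total = a + b + carry_in          # plain binary add
    if total > 9:                     # result is not a valid decimal digit
        total += 6                    # skip the six unused 4-bit codes (10-15)
    return total & 0xF, total >> 4    # (digit, carry_out)

def bcd_add(x_digits, y_digits):
    """Add two equal-length numbers given as digit lists, least significant first."""
    result, carry = [], 0
    for a, b in zip(x_digits, y_digits):
        digit, carry = bcd_add_digit(a, b, carry)
        result.append(digit)
    if carry:
        result.append(carry)
    return result

# 58 + 67, with digits listed least significant first.
print(bcd_add([8, 5], [7, 6]))
```

Each 4-bit result maps straight to a displayed digit, with no binary-to-decimal conversion step, which is why the format suits machines that talk to humans.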

The desktop computers developed at HP Loveland during the 1970s, including the HP 9825 and 9845, were called desktop calculators. They had 3-chip, 16-bit, hybrid processors with BCD arithmetic built into the third chip on the hybrid, the Extended Math Chip. Back then, BCD was used extensively for high-precision, floating-point calculations. But if you want to go fast, you need to use binary numbers with lots of bits. That’s one reason that the industry worked so hard to get to 32- and 64-bit microprocessors.

Intel made many compromises to cram the guts of the 4004 microprocessor into a 16-pin DIP. Perhaps the biggest compromise was the 4-bit bidirectional bus, which transferred addresses, instructions, and data using a multiplexing scheme. This bus didn’t have separate address and data buses like subsequent processors. It just had a 4-bit bus with a sync pin to designate the start of each instruction cycle.

During an instruction fetch, for example, a sync cycle would denote the start of an instruction fetch, and the next three bus cycles would be address transfers, in 4-bit chunks. The ROM would then place the 8-bit instruction onto the bus in 4-bit chunks over the next two cycles.

Because of the limited number of available pins and the extreme bus multiplexing, the Intel 4004 microprocessor took three bus cycles to crank out an address and needed two or more bus cycles to load an 8- or 16-bit instruction. Addresses, instructions, and data all had to traverse the 4-bit bus.
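A short Python sketch shows what that multiplexing looks like for one 8-bit instruction fetch: three 4-bit address transfers (which implies a 12-bit address) followed by two 4-bit instruction transfers. The function name is hypothetical, and the nibble ordering chosen here is an assumption of the sketch, not something stated above.

```python
def fetch_bus_cycles(address, instruction):
    """Return the sequence of 4-bit bus values for one 8-bit instruction fetch."""
    if not 0 <= address < (1 << 12):
        raise ValueError("address must fit in 12 bits")
    if not 0 <= instruction < (1 << 8):
        raise ValueError("instruction must fit in 8 bits")
    # Three address cycles; low-nibble-first ordering is an assumption.
    addr_cycles = [(address >> shift) & 0xF for shift in (0, 4, 8)]
    # Two instruction cycles; high-nibble-first ordering is an assumption.
    instr_cycles = [(instruction >> 4) & 0xF, instruction & 0xF]
    return addr_cycles + instr_cycles

cycles = fetch_bus_cycles(0x2A7, 0xD5)
print([hex(c) for c in cycles])
```

Five bus transfers move just 20 bits, where a processor with separate address and data buses could do the same job in a single cycle.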

Data fared better. Because it was a BCD-centric machine, the 4-bit bus was ideal. However, addresses for data transactions with ROM and RAM still needed three bus cycles for the address transfer. As a result, overall system performance suffered.

The Intel 4004 was slow as molasses, but that was OK for the intended use: an electronic desk calculator. It was still much faster than the electromechanical predecessors.

These compromises forced Intel to create a walled garden. Standard RAMs and ROMs available at the time had simple parallel buses with separate address and data lines. These devices were not directly compatible with the Intel 4004’s bus structure. The ROM, RAM, and I/O chips in the Intel MCS-4 chipset (the 4001, 4002, and 4003, respectively) all needed to implement special bus-interface logic to reside within the 4004 microprocessor’s walled garden.

(Note: The MCS-4 chipset had a cheat code. The 4001 ROM and 4002 RAM chips monitored the bus, identified and decoded I/O instructions, and executed the instructions that targeted their integrated I/O ports. Thus there was a slight distribution of instruction decoding across the three chips, but the 4004 microprocessor still incorporated all six of my critical processor elements. The 4004 is still the world’s first single-chip microprocessor, no matter the shortcomings forced by design compromises.)

So I am entirely happy with designating the Intel 4004 as the first single-chip microprocessor, walled garden notwithstanding. It’s got everything a microprocessor needs on one chip: ALU, register set, program counter, instruction decoder, fetch mechanism, program sequencer, and bus interface. It’s all there, on one piece of silicon, and thanks in no small part to Federico Faggin’s silicon sorcery. His brilliance on this and subsequent microprocessor designs transcends genius.

The AiResearch MP944, completed in June, 1970 after a couple of years of development, is clearly a microprocessor chipset. It’s always been called that by its co-inventor, Ray Holt, who maintains a terrific Website called The World’s First Microprocessor. The six chips in the set are the Multiplier Unit, the Divider Unit, the Special Logic Unit, the Steering Unit, the ROM, and the RAS (the RAM). The Multiplier and Divider Units were early co-processor incarnations, one of many innovations in Holt’s design. The MP944’s critical processor components are spread across multiple chips in the chipset. No one chip in the set will work as a complete processor. You want proof? Read Ray Holt’s original paper from 1971. No one knew about this microprocessor until decades later because it was used to implement the Central Air Data Computer, which controlled the wing pitch in the US Navy’s F-14 Tomcat swing-wing fighter aircraft. It was a classified project, declassified in 1998.

You have to do a lot of digging to get to the truth for the Four-Phase AL1, which first appeared in 1969. My analysis of the AL1, based on available information, is that it was designed as a bit slice (or byte slice in this case), with 8-bit slices of a register file and ALU, similar in concept to, but far more complex than, the 4-bit AMD 2901, which appeared more than five years later.

A bit slice is a piece of a microprocessor. It cannot operate as a microprocessor on its own. The AL1 was part of a 12-chip set used to build a processor for Four-Phase computers. Four-Phase used three AL1s to form a 24-bit ALU and register set, but you needed the rest of the chipset’s ICs to complete the processor. The AL1 slice wouldn’t work as a processor without the other chips, which I believe included the fetch unit, the instruction register, the instruction decoder, an interrupt controller, and the system bus interface.
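Here is a minimal Python sketch of the slicing idea: three identical 8-bit adder slices chained through their carries to form a 24-bit adder. The function names are hypothetical, and the simple ripple-carry chaining is an assumption of the sketch, not a description of the AL1’s actual carry scheme.

```python
def slice_add(a, b, carry_in):
    """One 8-bit ALU slice: add two bytes plus carry, return (sum_byte, carry_out)."""
    total = a + b + carry_in
    return total & 0xFF, total >> 8

def add24(x, y):
    """Add two 24-bit values by chaining three 8-bit slices through their carries."""
    result, carry = 0, 0
    for i in range(3):                      # slice 0 handles the least significant byte
        a = (x >> (8 * i)) & 0xFF
        b = (y >> (8 * i)) & 0xFF
        s, carry = slice_add(a, b, carry)
        result |= s << (8 * i)
    return result, carry

print(add24(0x00FFFF, 0x000001))
```

Notice what the slices do not contain: nothing here fetches an instruction, decodes it, or decides what to add next. Something outside the slices has to do all of that.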

But wasn’t there a famous lawsuit demo that proved that the AL1 was indeed a microprocessor, you ask? Yes, indeed there was. TI (Texas Instruments) had several patents based on its microcontroller and microprocessor work. The company charged licensing fees for the use of these patents and filed suit against several companies, notably Dell in this case, to extract these fees when needed. TI filed the patent lawsuit against Dell in 1990.

One of Four-Phase Systems’s founders, Lee Boysel, served as an expert witness for Dell in the lawsuit. He developed a physical demo with the AL1 to prove prior art and demonstrated the system to the court in 1992. The Four-Phase Demo made the AL1 chip look and work like a microprocessor to establish prior art.

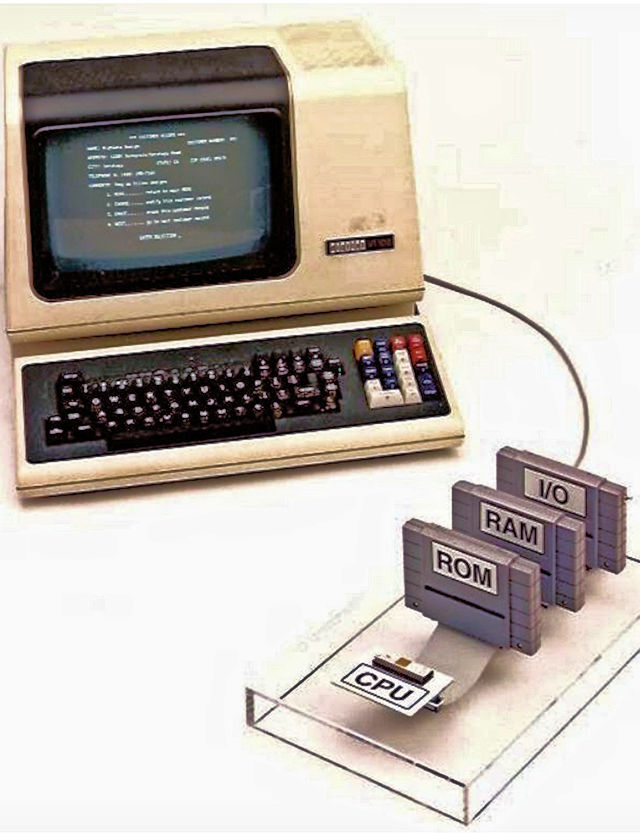

A 40-pin AL1 chip in Boysel’s Demo system connects to plastic-encased RAM, ROM, and I/O blocks over a 40-pin ribbon cable. Here’s a screen grab, taken from Boysel’s 2016 presentation titled Making Your First Million And Other Tips for Aspiring Entrepreneurs, made to a class at his alma mater, the University of Michigan, that shows a photo of Boysel’s Demo system for the lawsuit:

Photo of the AL1 Demo system that Four-Phase founder and expert witness Lee Boysel presented in the TI v Dell microprocessor lawsuit

The Demo system’s RAM/ROM and I/O blocks in this photo look to me like early Nintendo video game cartridges. They’re made of gray plastic – opaque, gray plastic. In fact, the actual Demo system is in the Computer History Museum’s archives, and the file on this artifact says the cartridge shells are actually from a Super Nintendo video game. I think the opacity of these cartridges, especially the ROM cartridge, is probably very important for the purpose of this Demo system.

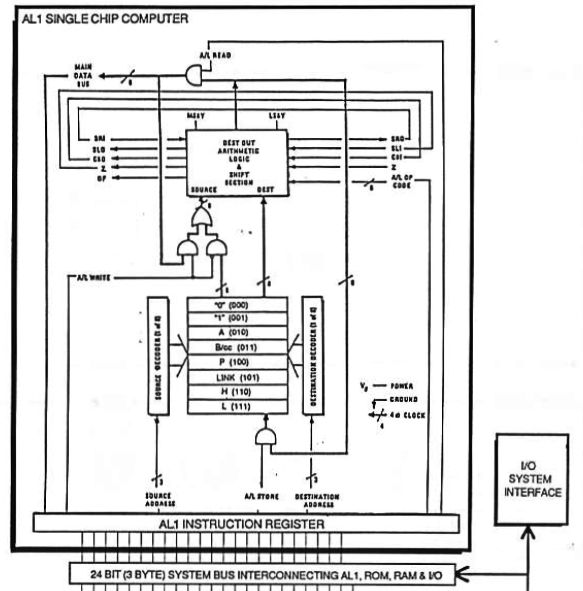

I can’t prove it, but I’m guessing that the AL1 demo was a technological magic trick. I cannot say it was a deliberate attempt to mislead, because I don’t know the motivations, but it sure looks sketchy to me. A document titled A Courtroom Demonstration System: 1969 AL1 Microprocessor published by one of Four-Phase’s founders and the AL1’s inventor, Lee Boysel, in the collection at the Computer History Museum, contains a block diagram of the demo system that shows the AL1 chip connected to demo system’s RAM, ROM, and I/O blocks. Here’s the diagram:

A Block Diagram of the Four-Phase AL1 “Single Chip Computer”

I see a classic piece of magic apparatus from this block diagram and the photo of the Demo system.

There are two parts to any magic trick: the apparatus and the performance. Magicians perform the tricks on stage, but the magic business also has a cadre of well-paid, behind-the-stage people who just dream up and build the equipment that makes the tricks possible.

One of the primary tools of performing in magic is visual misdirection: fooling people into looking at the wrong thing and thinking that they see something happening that isn’t. If you see a plastic box marked with a big sticker that says “ROM,” you naturally think there’s a ROM inside. If you see a white chip with shiny gold leads next to a drab, gray box, you focus on the shiny object and pay much less attention to the gray box. That’s human nature, and magic tricks rely on it.

The block diagram clearly shows how a 24-bit instruction word, retrieved from external ROM, enters a block labeled “AL1 Instruction Register.” The instruction stored in the “AL1 Instruction Register” then drives the register’s output pins, which directly control the chip’s ALU and select the register file’s source and destination.

Here’s what I don’t see in this diagram: an instruction decoder, a program counter, or a fetch mechanism.

From this drawing, I conclude that the box labeled “AL1 instruction register” is actually a microcode register and that the externally attached ROM in the Demo system actually operates as a microcode ROM. Is this plausible? Were at least some Four-Phase computers microcoded machines? Armed with that question, Google quickly found the answer. Yes, Four-Phase’s Series 4000 and Series 6000 computers were indeed microcoded machines with 48-bit microcode ROMs.

Here’s a quote from Lee Boysel’s biography, which appears on the University of Michigan’s Web site:

“Boysel developed some of the earliest concepts and published some of the first articles on computer architectures during the 1960s and 1970s, including MOS ROM CPU microcode control, direct video display of RAM information and single bi-directional bus structures – all staples of today’s microprocessor designs. He also worked on the design and fabrication of the semiconductor industry’s first A/D chips, the first static and dynamic MOS ROM, the first parallel ALU, and the first DRAM. Some of this technology was accomplished at Fairchild, and some at Four Phase Systems.”

Boysel obviously knew a lot about microcode.

Just where is the program counter in this Demo system? It does not appear in the AL1 block diagram, and I don’t think that it’s on the AL1 chip. I think it must be somewhere else. I’ll go out on a short limb here and say that I think the program counter must reside inside the gray plastic box labeled “ROM.”

Where’s the instruction decoder? It’s not in the AL1 block diagram, either. I cannot prove it, but I think the assembler that Boysel used to generate software for the Demo system must have generated microcode directly for the ROM. Microcode assemblers were once much more common when bit-slice processor design was popular. That was the 1980s, just before Boysel built this demo. I think the ROM in the Demo’s gray plastic “ROM” cartridge contains microcode, essentially pre-decoded instructions.

Please don’t misunderstand. Using microcode to decode microprocessor instructions is perfectly legit. Many very successful microprocessors used microcode-based instruction decoders. For example, the Motorola 68000 microprocessor used multiple microcode levels to decode machine instructions. But if that microcode lives off-chip, then the chip can’t be a single-chip microprocessor, using my definition.
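The distinction can be sketched in a few lines of Python. In the first arrangement, the program counter, fetch step, and decoder sit alongside the datapath. In the second, the ROM side steps through pre-decoded control words and the datapath merely executes them. The opcodes, the control-word format, and all function names are hypothetical inventions for this sketch and do not reflect the AL1’s real encoding.

```python
def datapath(regs, control_word):
    """ALU plus register file: executes one already-decoded control word."""
    alu_op, src, dst = control_word
    if alu_op == "mov":
        regs[dst] = regs[src]
    elif alu_op == "add":
        regs[dst] = (regs[dst] + regs[src]) & 0xFF
    else:
        raise ValueError(f"unknown ALU operation: {alu_op}")

# Hypothetical opcode-to-control-word table (the "instruction decoder").
DECODE = {0x1: ("mov", 0, 1), 0x2: ("add", 0, 1)}

def run_with_decoder(program_rom, regs):
    """Program counter, fetch, and decode all modeled alongside the datapath."""
    pc = 0
    while pc < len(program_rom):
        opcode = program_rom[pc]          # fetch
        datapath(regs, DECODE[opcode])    # decode, then execute
        pc += 1                           # sequence
    return regs

def run_predecoded(microcode_rom, regs):
    """The ROM side steps through control words; the datapath only executes them."""
    for control_word in microcode_rom:
        datapath(regs, control_word)
    return regs

a = run_with_decoder([0x1, 0x2, 0x2], [5, 0])
b = run_predecoded([("mov", 0, 1), ("add", 0, 1), ("add", 0, 1)], [5, 0])
print(a, b)
```

Both runs leave the registers in the same state, so an observer watching only the results cannot tell which arrangement is in use. The difference lies entirely in where the counter and the decoding live.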

Ken Shirriff confirms my suspicions in his extremely thorough blog titled “The Texas Instruments TMX 1795: the (almost) first, forgotten microprocessor.” (Shirriff’s blog is from 2016, so I readily acknowledge that he came to these same conclusions long before I did.) From perusing his Website, I can see that Shirriff is clearly an experienced computer historian and an expert in reverse engineering chips. Give him a die photo, and he can tell you what you’ve got on the die.

Shirriff analyzed the AL1 die microphotographs filed during the TI/Dell lawsuit and discovered another surprise. The “AL1 Instruction Register” shown on the block diagram doesn’t actually exist in silicon. There are only wires on the chip where the register is supposed to reside, which further confirms that the AL1 lacks an instruction register. This makes complete sense. According to the block diagram above, the only way into or out of the AL1 is through the “AL1 Instruction Register.” That means data from the AL1’s ALU section must pass into and out of the chip through the so-called instruction register. However, instruction registers are never bidirectional. Wires certainly are. I conclude that the ROM cartridge in the Demo system drives microcode to the ALU and register file directly over the ribbon cable.

None of this analysis diminishes Boysel’s many accomplishments. The AL1 chipset certainly advanced the state-of-the-art for computing. Boysel claims in his presentation that Four-Phase had a three-year lead on its competition. That’s probably quite accurate.

I highly recommend watching Boysel’s entertaining presentation from 2016. The final part of this presentation deals with the AL1 and associated chips used in the Four-Phase microprocessor. Boysel says during his presentation that the Intel 4004 was certainly the first commercial microprocessor. That’s because Four-Phase sold its processor chips only on a board, packaged in complete computer systems. The processor itself was not for sale. Boysel refused to sell such a valuable piece of technology to competitors.

“The microprocessor just didn’t come out of nowhere,” says Boysel in his presentation. He’s right, of course. Boysel also says that TI’s expert witnesses folded their tents and would not testify when they found out about the AL1 Demo system.

Case closed.

Was Boysel sharp enough to conjure the sort of grand technical illusion that I suspect? Was he sufficiently motivated to do that? Lee Boysel passed away just this year, on April 25, 2021. So I cannot ask him directly. However, here’s a quote from his obituary in the San Francisco Chronicle:

“These years of ensuring that this technology remained in the hands of, in his words, ‘John Q Public,’ were what Buff was proudest of and what he reminisced about the most.” (His friends called him “Buff.”)

I suggest that you watch the video and then decide for yourself. I know how I vote. Without a doubt, Boysel doesn’t get nearly the credit he deserves in the world of microprocessors.

Now, please thank and excuse the jury.

Note: A big thank you to my friend Brian Berg for inspiring me to research and write this article. He asks just the right questions, sometimes on purpose.

Lee Boysel was a great guy and a fellow Michigan alumnus (my degree: MBA, 1968).

I owned private stock in Four Phase Systems. If you are not commercially successful, you are forgotten to history. Lee’s complex chips had a terrible yield. I knew my investment was in trouble when they passed out tie clips made from the failed chips.

There were hundreds of tie clips handed out at the annual meeting. Incidentally, the HQ land was sold to Apple Computer (when Apple still had “Computer” in its name).

Another Michigan inventor was Professor Brush who had the first light bulb (arc lighting) in the 1870s before Thomas Edison’s incandescent light bulb. Not reliable.

Great analysis, Steve. What about Pico Electronics in Glenrothes, Scotland, which partnered with General Instrument to build a calculator chip called the PICO1? That part reputedly debuted in 1970, and had the CPU as well as ROM and RAM on a single chip. I can’t find much about this part.

Here’s the link to Lee Boysel’s 2016 presentation at UofM covering the years 1963-71. Just under 1 hour long.

Sorry, but I don’t see a link.

Steve, Great article. I am going to re-read it several times. Good research and good detail. Would love to discuss all this more sometime. I used the 4004 chip set in a pinball application in 1974 and the hardware required 59 TTL external chips. I just have a hard time calling the 4004 a single chip microprocessor. It certainly was not stand alone. Good to see you set down some definitions to get started. Other historical writers seem to enjoy changing definitions to fit their articles. Good work!!!

Ray Holt

Designer MP944