USB is one of the success stories in the saga of technical standards, and one of the few that lives up to its name. It truly is universal. But that’s about to go out the window.

It started off so well, too. If the funny rectangular plug fit, it worked. It was way better than the assortment of connectors we used to have on our PCs, and the even bigger assortment of device drivers that used to go with them. Before USB, jacking in your new mouse, keyboard, or printer was usually just the start of a long and painful trip down the rabbit hole. Nontechnical users were totally confused, which kept folks like us in business as the family’s (or the office’s) unofficial tech support.

Thanks to big heapings of help from Microsoft, Intel, and other vendors, USB took a big step toward brainless plug-and-play. It was complex behind the scenes, sure, but dead simple for end users, which was the whole point. My only complaint was that the rectangular connector wasn’t visually keyed, so it had the weird property of going into the socket the wrong way around 98% of the time.

Nevertheless, the bright minds behind USB have decided to tinker with success. And you know the old saying, “you can’t fix it if it ain’t broke.” Looks like they’ve found a way to “fix” it anyway.

The original USB 1.0 could do 12 Mbps and introduced the now-familiar rectangular Type-A connector. About four years later, USB 2.0 boosted speeds massively to 480 Mbps but kept the same connector. Life was beautiful.

It started to go downhill from there. USB 3.0 boosted speeds again, to 5 Gbps, and the USB 3.x era also brought a new, smaller – and reversible! – connector dubbed Type-C. (Type-B was the odd square one you rarely see.) Vendors that stuck with the Type-A connector for USB 3.0 colored the ports blue to highlight their lovely new SuperSpeed™ feature. Okay so far.

Five years after that, we got USB 3.1, which sounds like a bug fix. Speeds doubled to 10 Gbps, but you could still use either type of connector. Good luck figuring out whether your PC or your peripheral supported USB 3.0 or 3.1, but at least it was still plug-and-play. Then came USB 3.2, which doubled speeds again to 20 Gbps – we’re well past gigabit Ethernet now – but only over Type-C; the old Type-A connector can’t carry that top speed. And there’s still no way to tell USB 3.0 from 3.1 or 3.2. Or whether a port supplies power or not.

Fearing market confusion, USB’s governing body decided to make matters worse. Henceforth, the old USB 3.0 would be known as “USB 3.2 Gen 1.” That’s the actual name. Naturally, USB 3.1 was renamed USB 3.2 Gen 2, and the 20 Gbps version became… are you ready? “USB 3.2 Gen 2×2.” Oh, yeah, that’s much better.

Sensing that they were on a roll and evidently afraid that some other standards body would grab all the good names, they decreed that the next generation will be USB4. Seems simple enough, but why “USB4” and not “USB 4” or “USB 4.0?” Maybe it’s to save space on the stickers.

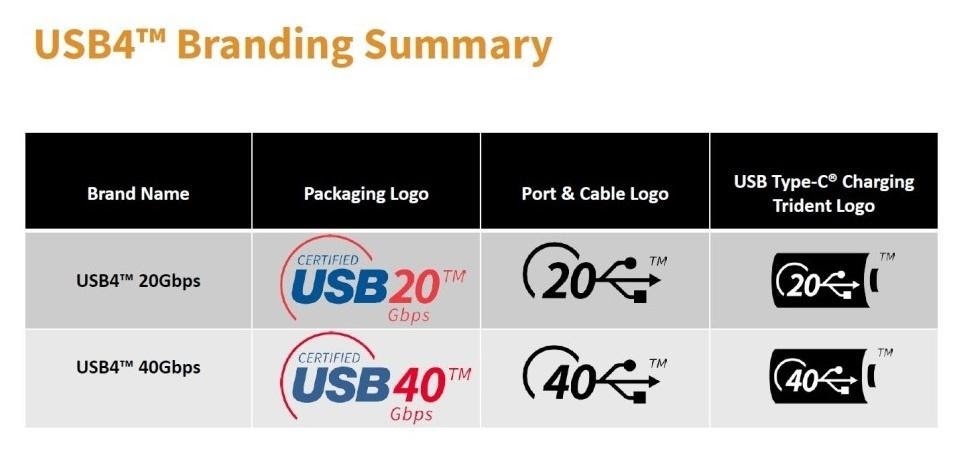

Because there will be stickers. Why? Because USB4 isn’t just one standard. That’s too easy. There will be at least two sub-brands, USB4 20 and USB4 40, with the final two digits denoting speed. So, to be clear, USB4 20 is no faster than the hideously named USB 3.2 Gen 2×2, while USB4 40 is twice as fast.

Image source: USB Implementers Forum
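For readers keeping score at home, here’s the naming mess boiled down to a small Python lookup table. It’s a sketch, not anything the USB Implementers Forum publishes: the dictionaries and the `speed` and `faster` helpers are made up for illustration, and the numbers are simply the nominal top speeds quoted above.

```python
# A hypothetical "decoder ring" for the USB names discussed in this article.
# Values are nominal top speeds in Gbps; only the names mentioned above are covered.
NOMINAL_GBPS = {
    "USB 1.0": 0.012,
    "USB 2.0": 0.48,
    "USB 3.2 Gen 1": 5,     # formerly USB 3.0
    "USB 3.2 Gen 2": 10,    # formerly USB 3.1
    "USB 3.2 Gen 2×2": 20,  # the 20 Gbps USB 3.2 mode
    "USB4 20": 20,
    "USB4 40": 40,
}

# Hypothetical alias map from the old names to the renamed ones.
OLD_TO_NEW = {
    "USB 3.0": "USB 3.2 Gen 1",
    "USB 3.1": "USB 3.2 Gen 2",
}


def speed(name: str) -> float:
    """Look up a nominal speed, translating an old name to its new one first."""
    return NOMINAL_GBPS[OLD_TO_NEW.get(name, name)]


def faster(a: str, b: str) -> bool:
    """Return True if the first name is nominally faster than the second."""
    return speed(a) > speed(b)


print(faster("USB4 20", "USB 3.2 Gen 2×2"))        # False: both top out at 20 Gbps
print(speed("USB 3.0") == speed("USB 3.2 Gen 1"))  # True: same thing, new name
```

Note that nothing in the table says whether a port supplies power; that, sadly, is not something a lookup can tell you.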

As an added bonus, USB4 incorporates the formerly separate Thunderbolt 3 standard – maybe. You see, Thunderbolt 3 support is optional. But at least power delivery is now mandatory, so no more hunting for laptop ports to charge your phone. Except that USB4 uses the same Type-C connector as previous versions, so you can’t tell a USB4 port from an older, possibly unpowered one just by looking. So yes, you’re back to trial and error to find a powered port.

Your best bet is to just plug in the cable and see if it works.

Remarkably, the USB situation is better than the train wreck happening over at HDMI. The High-Definition Multimedia Interface also started out as a comfortably reliable way to connect TVs and monitors, but that honeymoon period didn’t last. The cable probably still fits – HDMI never supported 11 different connectors like USB does – but it’s anyone’s guess what video signal you’ll get.

Does either of these groups employ marketing people? Anyone? Anyone?

We’re just now entering the era of HDMI 2.1, which should bring all sorts of lovely consumer benefits. In reality, it’s equal parts cynical branding and internecine squabbling among vendors. It’s consumers who will lose out, because almost none of them will have a clue what features they’re actually getting.

At its heart, the “HDMI 2.1” label means very little. The specification includes a lot of optional features, but few mandatory ones. Consequently, every maker of TVs, monitors, cables, PCs, and video gear gets to pick and choose whatever features they want to support, roll them up with their own proprietary tweaks, and call it HDMI 2.1. Good luck getting the guy at Costco to explain the differences.

Support for 8K video resolution is optional. So is support for 4K, for that matter. Refresh rates can vary anywhere from 50 to 240 Hz; dynamic HDR is optional; eARC (back-channel audio) is optional; VRR (variable refresh rate) is optional; QMS (quick media switching) is optional; QFT (quick frame transport, mostly for games) is optional; ALLM (auto low-latency mode, ditto) is optional; and so on.

Making matters worse, TV vendors will slap their own proprietary brand names onto each individual feature, calling QFT something like GameFast™ or whatever. There’s no requirement to support any particular mix or subset of HDMI features, nor is there any rule against renaming the ones that are supported. So… how is a consumer supposed to know what features, if any, he’s paying for?

Let’s hear from HDMI’s Vice President of Marketing, Operations, and Licensing, Brad Bramy. “Consumers should, as with any [consumer electronics] purchase, consider how they will use the TV as well as the type, source, and quality of content they will consume and what experience matters the most to them,” he told Gizmodo, and “how a TV manufacturer markets its products as good for gaming that is up to them.” Okay.

Reminds me of the damning quote, reputedly from the head of the American Federation of Teachers, “When school children start paying union dues, that’s when I’ll start representing the interests of school children.” In short, it looks like the HDMI group is focused on its members’ interests more than consumers’ interests. For a licensing authority, I guess that’s fair. But will no one think of the children?