I am constantly amazed by the vast quantities of useless nuggets of knowledge and tidbits of trivia that are rattling around in my poor old noggin. These typically resurface when I least expect them. For example, I could be discussing AI systems with someone, and (as improbable as it may sound) one of the examples they give might trigger thoughts of a man bending over tapping a railway wheel with a hammer. In fact, this very scenario just occurred.

Let’s first set the scene. When I was a kid growing up in England in the 1960s, there were a lot of working men’s clubs. These played a central part in social life in many industrial towns across Britain, especially in counties like Yorkshire (where I’m from). For many families, the club was the main place to relax, socialize, and be entertained after a week of work.

These clubs often had wonderfully peculiar names. Some were named after a street, a local industry, or the founding organization, but others ended up sounding quite odd to modern ears, like The Oddfellows Club, The Flying Horse Club, The Tempered Steel Club, and The Railway Servants’ Club.

I remember a British television variety show called The Wheeltappers and Shunters Social Club, which aired from 1974 to 1977. This sounded perfectly normal to people of that time because both these names referred to real jobs. A wheeltapper was a railway worker who tapped train wheels with a hammer to check for cracks or other defects (the sound revealed them), while a shunter was a railway worker who moved railcars around yards to assemble trains.

A wheeltapper tapping a wheel (Source: National Railway Museum/Science and Society Picture Library)

These days, modern railways rely mostly on automated inspection systems that can detect tiny cracks long before they become noticeable by tapping. These include ultrasonic testing, acoustic sensors, and wayside wheel monitoring systems. As a result, the wheeltappers have waned, and the term “wheeltapper” is now usually invoked as a metaphor for a bygone, single-purpose occupation with faintly Victorian overtones—the sort of slightly comic role that could easily have appeared in a Monty Python sketch.

Why am I waffling about waning wheeltappers here? Well, I was just chatting with Jerome Gigot (VP of Marketing, Edge AI Products) and Muneyb Minhazuddin (Customer Growth Officer) at Ambarella, so they obviously have a lot to answer for.

The guys and gals at Ambarella specialize in AI vision processors for edge applications. Earlier this year, at CES 2026 in Las Vegas, the company announced two significant developments: a new edge AI vision processor called the CV7, and a new Developer Zone (DevZone) intended to broaden its ecosystem. More recently, at Embedded World 2026 in Nuremberg, the company expanded the story further by introducing an Agentic AI framework for edge devices.

Taken together, these pieces form an interesting picture: powerful edge-AI silicon, a developer ecosystem to make it easier to build applications on that silicon, and an emerging software model that moves from perception to automation. Let’s unpack this a little.

Ambarella has been building video-processing chips for more than two decades. The company originally made its name in high-efficiency video compression, enabling everything from broadcast equipment to consumer cameras. Over time, however, the emphasis has shifted from simply capturing video to understanding what the video contains.

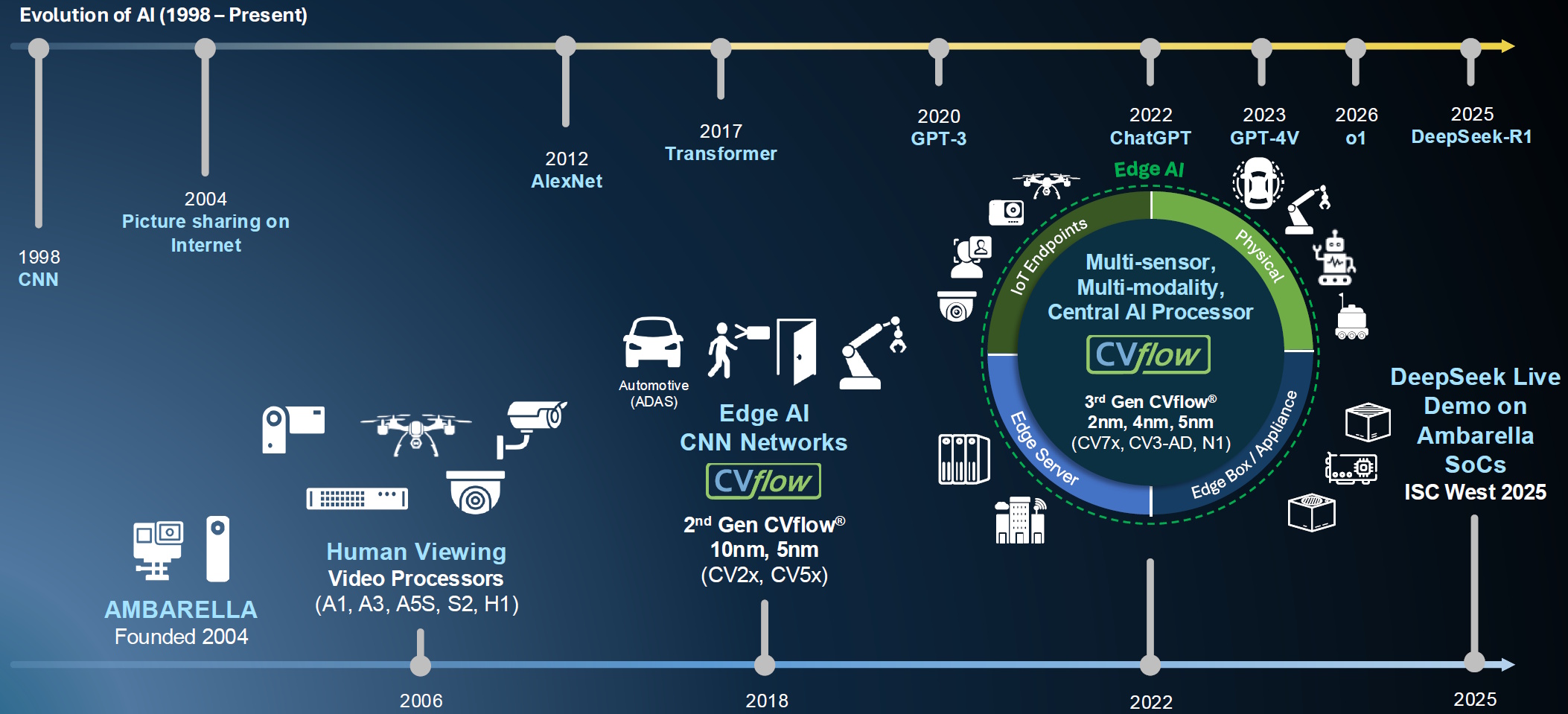

Ambarella is well placed for what it sees as the golden age of AI (Source: Ambarella)

The new CV7 processor continues that trajectory. Fabricated on a 4-nm process, the chip combines high-resolution video processing with a third-generation AI architecture known as CVflow. Compared with the previous generation, the CV7 delivers roughly 1.5× the AI performance while also supporting transformer-based neural networks, which are the same class of models that power many modern AI systems.

In practical terms, that means the chip can run LLMs (Large Language Models), VLMs (Vision-Language Models), and VLAs (Vision-Language-Action Models), all directly on the device.

The chip can ingest video from up to twelve sensors, process those streams with Ambarella’s sixth-generation image signal processor, encode high-resolution video (up to 8K at 60 frames per second), and run AI inference in parallel. The architecture is designed to minimize trips to DRAM—because shuttling data back and forth to memory is one of the biggest power consumers.

This combination of video, vision, and AI acceleration makes the CV7 suitable for a range of edge devices, including security cameras, drones and action cameras, edge infrastructure systems, and automotive fleet and aftermarket solutions.

One of the key ideas here is that AI processing is moving closer to where the data is captured. Instead of sending enormous video streams to the cloud for analysis, much of the intelligence can now run directly on the camera or embedded device at the edge where the “internet rubber” meets the “real-world road.”

Hardware is only half the story, of course. The other half involves software and developers. Historically, Ambarella’s chips were primarily deployed through a traditional semiconductor supply chain: the company sold silicon to module makers and OEMs, who integrated the chips into products such as cameras and automotive systems.

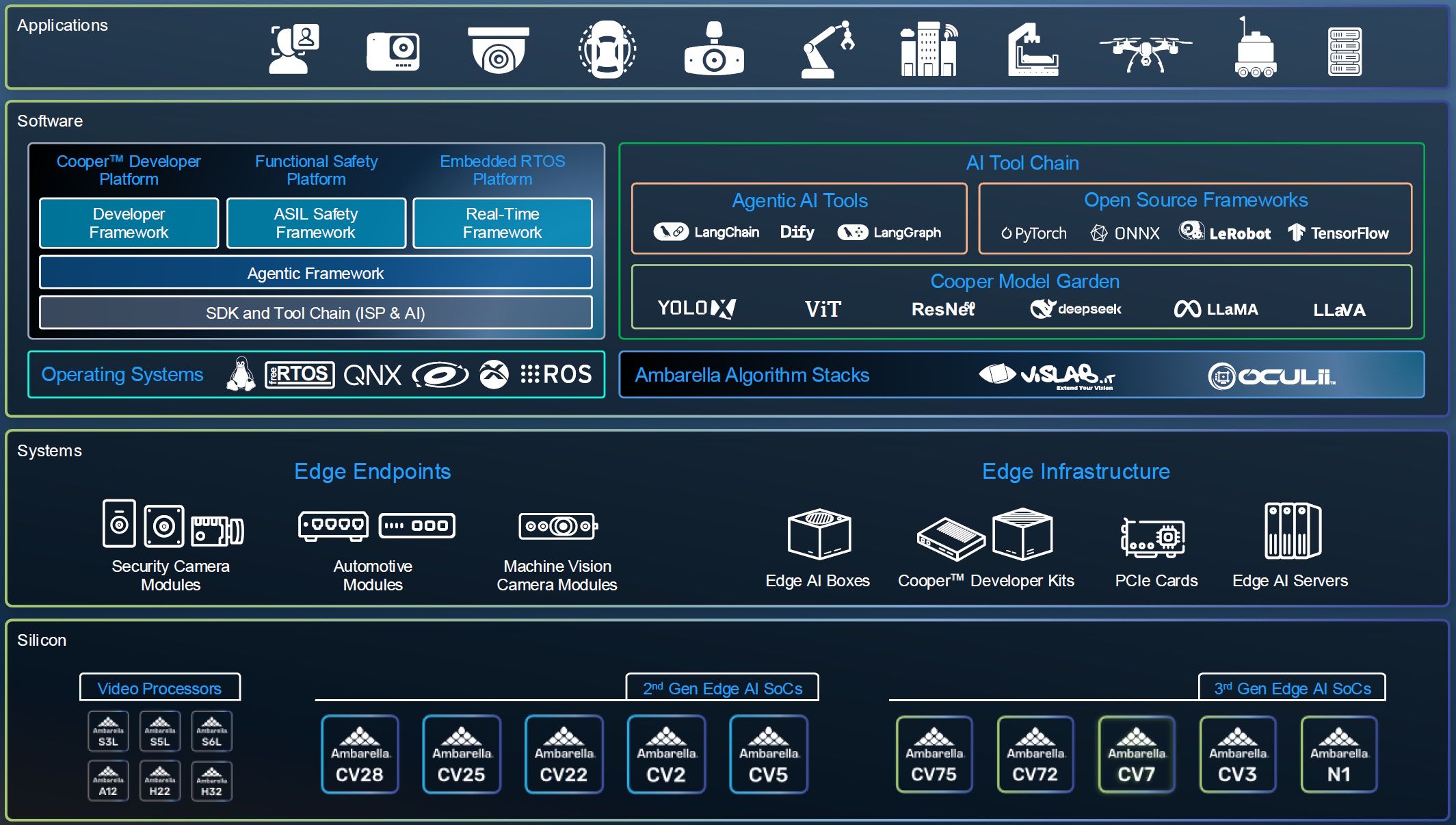

A full range of hardware and software solutions (Source: Ambarella)

The new Developer Zone expands that model by opening the platform to a broader community of application developers, independent software vendors (ISVs), and system integrators. The idea is to lower the barrier to experimentation. Developers can access optimized AI models, APIs and SDKs, and agentic workflows, all accompanied by development kits and reference designs.

Instead of diving immediately into the deep end of a full SDK, developers can experiment with higher-level tools and workflows to quickly prototype applications. In other words, developers can focus on what they want the system to do, rather than worrying about the underlying silicon first. Only after they decide to move toward production do they need to engage with the deeper layers of the platform.

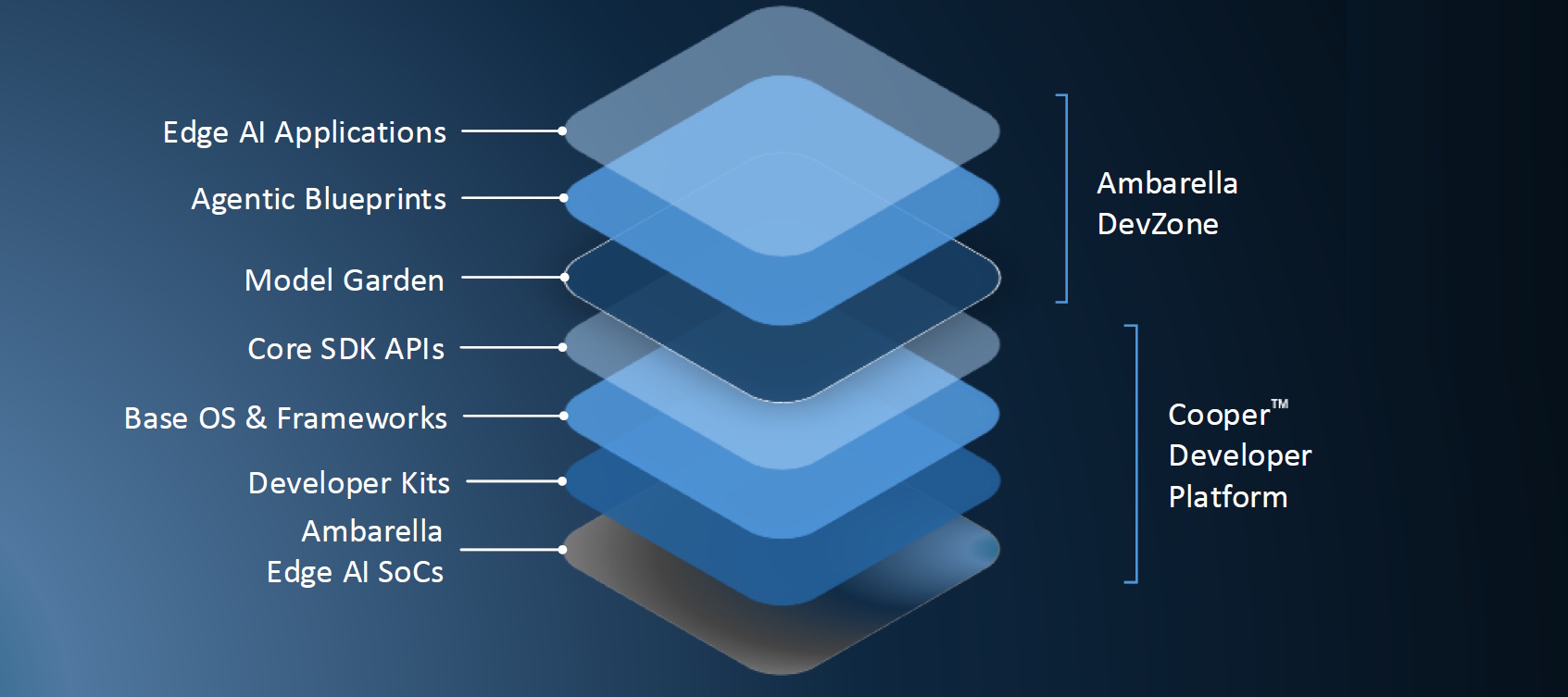

The Ambarella Edge AI Software Stack (Source: Ambarella)

The third piece of Ambarella’s strategy, showcased at Embedded World 2026, centers on Agentic AI. The idea is that many AI systems today are primarily observational. They analyze sensor data, detect objects, and generate insights, but they don’t necessarily act on them. Agentic AI closes the loop. For example, an AI vision system might detect a situation and then automatically trigger a response, such as turning on a sprinkler system, issuing an alert, or activating another device in the environment.

Ambarella’s architecture supports this model by combining perception AI, generative AI capabilities, and agentic AI orchestration layers. Together, these components enable developers to build closed-loop systems that integrate sensing, reasoning, and action.
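To make the "closed loop" idea a little more concrete, here is a minimal sketch of my own in plain Python. It is not Ambarella's framework or API. The `Detection` record, the rule table, and the actuator names are all hypothetical, and the perception step is assumed to have already produced labeled detections with confidence scores.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Detection:
    """One perception result. The fields are illustrative, not a real schema."""
    label: str
    confidence: float


def decide(detections: List[Detection], rules: Dict[str, str],
           threshold: float = 0.8) -> List[str]:
    """Reasoning step: map sufficiently confident detections to action names."""
    actions: List[str] = []
    for det in detections:
        action = rules.get(det.label)
        if action is not None and det.confidence >= threshold and action not in actions:
            actions.append(action)
    return actions


def run_loop(detections: List[Detection], rules: Dict[str, str],
             actuators: Dict[str, Callable[[], None]],
             threshold: float = 0.8) -> List[str]:
    """Close the loop: take detections, decide, then act. Returns the actions fired."""
    fired: List[str] = []
    for name in decide(detections, rules, threshold):
        handler = actuators.get(name)
        if handler is not None:
            handler()
            fired.append(name)
    return fired


# Hypothetical rule table and actuators, standing in for real devices.
RULES = {"smoke": "start_sprinkler", "intruder": "send_alert"}

log: List[str] = []
ACTUATORS = {
    "start_sprinkler": lambda: log.append("sprinkler on"),
    "send_alert": lambda: log.append("alert sent"),
}

# Assumed output of the perception stage for a single frame.
frame_detections = [
    Detection("smoke", 0.93),     # confident and has a rule, so it acts
    Detection("intruder", 0.41),  # has a rule but falls below the threshold
    Detection("cat", 0.99),       # confident but no rule applies
]

fired = run_loop(frame_detections, RULES, ACTUATORS)
print(fired)  # ['start_sprinkler']
print(log)    # ['sprinkler on']
```

An observational system would stop once it had the detections. The only thing that makes this sketch "agentic" in the sense described above is that the decision is wired to something that acts.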

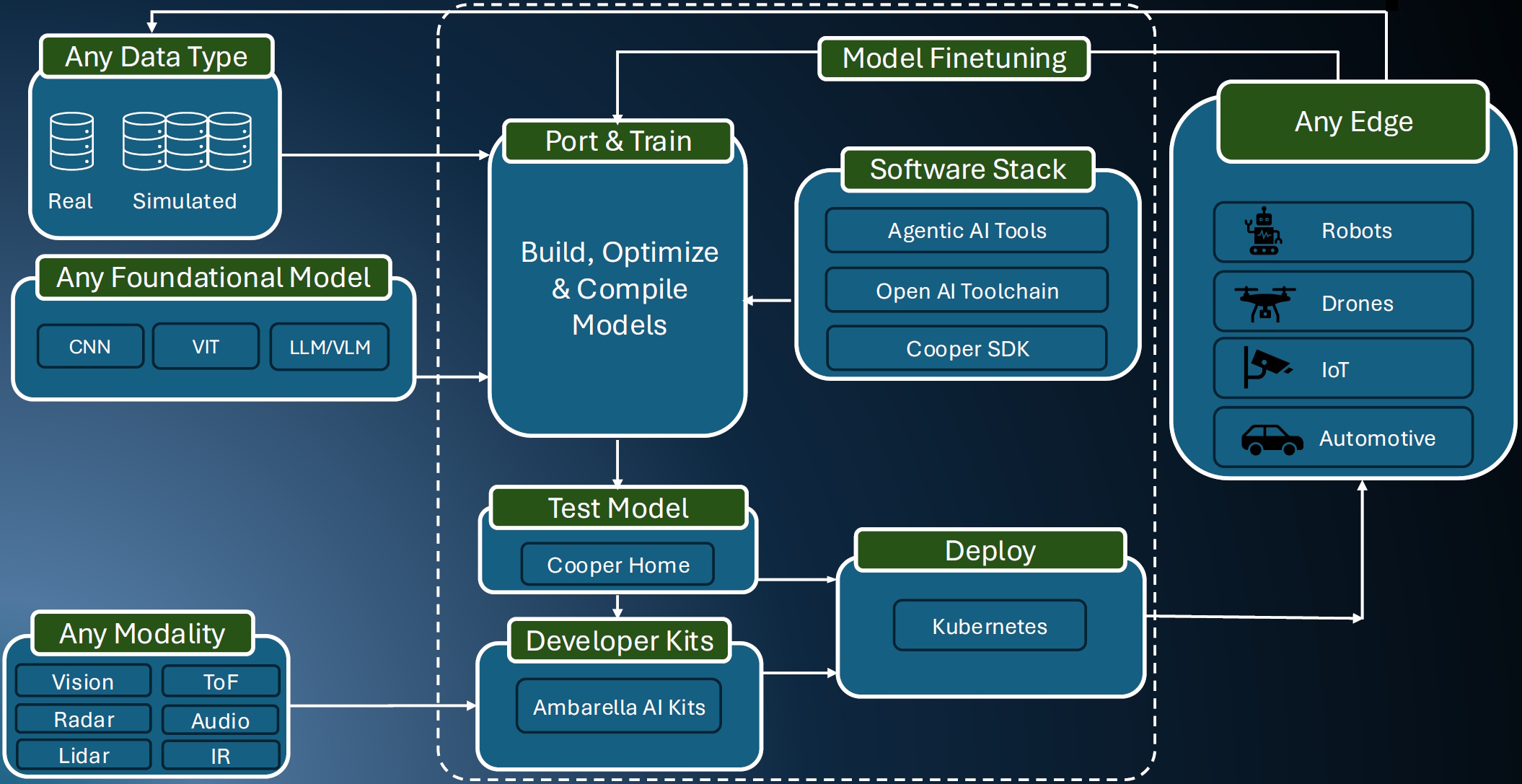

The Ambarella developer workflow: Any data, any model, any modality, and any edge device (Source: Ambarella)

All of which brings us back to the concept of wheeltappers (I love it when a plan comes together). During our conversation, Jerome and Muneyb described a fascinating application developed by an independent software vendor called Cogniac.

Cogniac works with rail networks to monitor the condition of train components as trains pass inspection points at speed. Cameras capture high-resolution images of wheels and other hardware as the train passes by, and AI models analyze the footage to detect wear, wobbling, cracks, or other potential defects.

In the past, much of this processing required large cloud-based GPU systems, with enormous volumes of video data streaming upstream for analysis. By comparison, the combination of the CV7 and Ambarella’s edge AI architecture means much of that processing can happen locally on the device. Instead of transmitting raw video to the cloud, the system can analyze the imagery on-site and send only the results—something like, “Train 4821, Carriage 3, Wheel 6. Crack Detected. Replace Immediately!” which results in a dramatically smaller data footprint.
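For a rough feel of the difference in data footprint, here is a back-of-the-envelope sketch. The numbers are my own assumptions, not figures from Ambarella or Cogniac: a single uncompressed 3840×2160 stream at 30 frames per second in 8-bit YUV 4:2:0 (1.5 bytes per pixel), compared with one small JSON result message whose field names are invented for illustration. Real deployments would compress the video, so treat the ratio as an upper bound.

```python
import json


def raw_video_bytes_per_second(width: int, height: int, fps: int,
                               bytes_per_pixel: float = 1.5) -> int:
    """Uncompressed data rate; 1.5 bytes/pixel corresponds to 8-bit YUV 4:2:0."""
    return int(width * height * bytes_per_pixel * fps)


def encode_result(train: str, carriage: int, wheel: int,
                  finding: str, action: str) -> bytes:
    """Pack one inspection result as compact JSON (hypothetical field names)."""
    payload = {
        "train": train,
        "carriage": carriage,
        "wheel": wheel,
        "finding": finding,
        "action": action,
    }
    return json.dumps(payload, separators=(",", ":")).encode("utf-8")


# Assumed camera settings: one 4K stream at 30 frames per second.
video_rate = raw_video_bytes_per_second(3840, 2160, 30)

# The example result from the text, as a message sent upstream.
message = encode_result("4821", 3, 6, "crack detected", "replace immediately")

print(f"raw video: {video_rate:,} bytes per second")  # 373,248,000
print(f"result message: {len(message)} bytes")
print(f"ratio: roughly {video_rate // len(message):,} to 1")
```

Even allowing for heavy video compression, a result of around a hundred bytes per flagged wheel is a very different proposition from a continuous upstream video feed.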

I must admit, this example particularly captured my imagination. On my morning drive into work, I’m frequently stopped at a level crossing while a train clatters past. Normally, I just sit there with a glazed look on my face (my usual expression), mesmerized by the seemingly endless procession of wheels rolling by.

Now I find myself imagining an AI system quietly examining each wheel as the train hurtles past at full speed, deciding which ones are healthy and which ones might soon need attention. In a sense, this is the modern, automated descendant of the old railway wheeltapper: only faster, more precise, and capable of inspecting every wheel on every train.

Another example Jerome and Muneyb shared with me comes from a company called meldCX, which builds AI systems for retail environments such as self-checkout and in-store analytics. Using Ambarella’s developer tools and agentic workflows, the company reportedly ported part of its application stack to the platform in just three days. This is a striking contrast to the months-long integration cycles that have traditionally been common in embedded AI systems.

This kind of rapid onboarding is exactly what Ambarella hopes its DevZone initiative will encourage: a faster ecosystem where developers can test ideas quickly and bring new edge-AI applications to market more rapidly.

Looking at the whole story, from the CV7 processor to the DevZone to agentic workflows, it’s clear that the folks at Ambarella are aiming to do more than simply ship another AI chip. Their goal is to enable complete edge-AI systems in which powerful silicon, developer tools, and application frameworks work together.

And if those systems occasionally replace a few metaphorical wheeltappers (which, sadly, are the only kind that exist these days) along the way… well, that’s probably a sign of progress. Although I must admit, there’s something rather appealing about the image of a man with a hammer walking slowly along a train, listening carefully for the telltale ring of steel on steel. Even in an age of transformer networks and agentic edge AI, some old images refuse to fade away.