There’s a really interesting company called XMOS that’s based in the UK. One of the things that’s noteworthy about the folks at XMOS is that they are extremely well known in certain circles, like audio processing and speech recognition, but relatively unknown in other spheres of activity, like artificial intelligence (AI) and machine learning (ML). Happily, all that’s about to change with their latest chip — the xcore.ai — which I think of as silicon to satisfy the appetite of the forthcoming Artificial Intelligence of Things (AIoT).

According to the IoT Agenda, “The Artificial Intelligence of Things (AIoT) is the combination of artificial intelligence (AI) technologies with the Internet of Things (IoT) infrastructure to achieve more efficient IoT operations, improve human-machine interactions, and enhance data management and analytics […] the AIoT is transformational and mutually beneficial for both types of technology as AI adds value to IoT through machine learning capabilities and IoT adds value to AI through connectivity, signaling, and data exchange.” I couldn’t have said it better myself (see also What the FAQ are the IoT, IIoT, IoHT, and AIoT?).

Actually, this might be a good time to mark your calendar for 2025, which may well be the year of the AIoT “Big Bang,” with an anticipated 65 billion connected devices, 180 zettabytes of data, and a $3 trillion spend, according to Gartner and Business Insider Intelligence.

Close to the Edge

We are poised to enter a new era in which intelligence is embedded in the fabric of the world that surrounds us — in our homes, our vehicles, our workspaces, and our cities, and even on our bodies and in the clothes we wear.

Artificial intelligence and machine learning applications will soon be all around us, from humongous power-guzzling tasks that reside in the cloud to parsimonious power-sipping applications that dwell at the edge of the network. As one approaches the center of the cloud, things tend to become more homogeneous. By comparison, as one gets closer to the extreme edge, where devices are always on, always listening, and always watching, computational requirements become increasingly diverse.

A Computational Engine for All Seasons

Traditionally, AI and ML applications running close to the edge have required a powerful (but costly) applications processor (AP) or a microcontroller augmented with additional components to accelerate key capabilities. Some companies may be tempted to build an ARM-based System-on-Chip (SoC) but — in the case of a 28 nm device, for example — this can easily cost $40M to create the device and another $40M to develop the software, with the entire process taking 18 to 24 months. All of this may be thought of as placing a long-term bet on an uncertain outcome in a rapidly evolving market, which doesn’t sound very appealing when you say it out loud.

By comparison, the unique and innovative architecture of the xcore.ai allows this device to provide high-end AI and ML capabilities while consuming very little power, at a very attractive price of around $1. This means the device has the potential to be embedded in almost anything, such as smart light switches and smoke detectors.

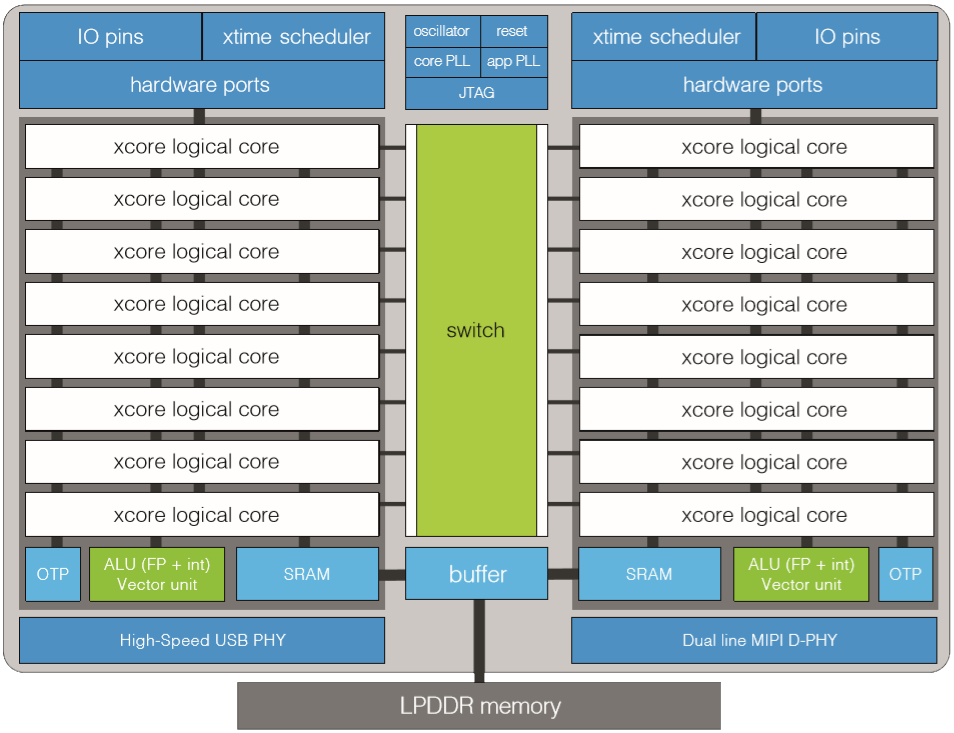

Block diagram of the xcore.ai device (Image source: XMOS)

The xcore.ai contains two tiles connected by a high-speed switch. Each tile boasts a RISC processor with a tightly coupled SRAM, where each processor has a dual-issue execution unit capable of executing instructions at twice the pipeline clock frequency. Execution is split over eight concurrent hardware threads, or logical cores, each of which is capable of running software tasks that execute I/O, control, DSP, and AI processing. In turn, each task can communicate with other tasks over channels or via memory, where the former can connect tasks locally or across multiple cores, and the latter can only connect tasks running on the same core.
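To make the channel idea a little more concrete, here is a minimal host-side sketch in Python. This is not XMOS code and it makes no claim about the xcore.ai toolchain. It simply assumes that ordinary operating-system threads stand in for logical cores and that a bounded `queue.Queue` stands in for a channel; the names `producer`, `consumer`, and `run_pipeline` are hypothetical and exist only for this illustration.

```python
import queue
import threading


def producer(channel, samples):
    """Task A: send each sample over the channel, then an end-of-stream marker."""
    for sample in samples:
        channel.put(sample)   # blocks while the channel is full
    channel.put(None)         # sentinel (an assumption of this sketch)


def consumer(channel, results):
    """Task B: block on the channel and record a running sum of what arrives."""
    total = 0
    while True:
        item = channel.get()  # blocks until the other task sends something
        if item is None:
            break
        total += item
        results.append(total)


def run_pipeline(samples):
    """Run both tasks concurrently and return the consumer's running sums."""
    channel = queue.Queue(maxsize=1)  # capacity 1 approximates a tight handoff
    results = []
    task_a = threading.Thread(target=producer, args=(channel, samples))
    task_b = threading.Thread(target=consumer, args=(channel, results))
    task_a.start()
    task_b.start()
    task_a.join()
    task_b.join()
    return results


if __name__ == "__main__":
    print(run_pipeline([1, 2, 3, 4]))
```

The point of the sketch is the shape of the program rather than its speed: neither task touches the other's variables, and all coordination happens through the blocking send and receive. On a desktop operating system the scheduling is done in software, so none of the timing guarantees described in this column apply to the sketch.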

This architecture delivers, in hardware, many of the elements that are usually seen in a real-time operating system (RTOS), including task scheduling, timers, I/O operations, and channel communication. As a result, tasks can typically respond in nanoseconds to events such as external I/O or timers, thereby making it possible for the xcore.ai to perform hard real-time tasks that would otherwise require dedicated hardware. By eliminating sources of timing uncertainty (interrupts, caches, buses, and other shared resources), the xcore.ai can provide deterministic and predictable performance for a wide range of applications.

The xcore.ai cores employ a standard RISC-like instruction set, with instructions for loading and storing values in SRAM, and instructions for performing operations on 32-bit integer and floating-point values. Additionally, there is a set of instructions that enables software tasks to interact with the I/O pins of the device — and to communicate and synchronize with each other — with no more than a few nanoseconds of latency.

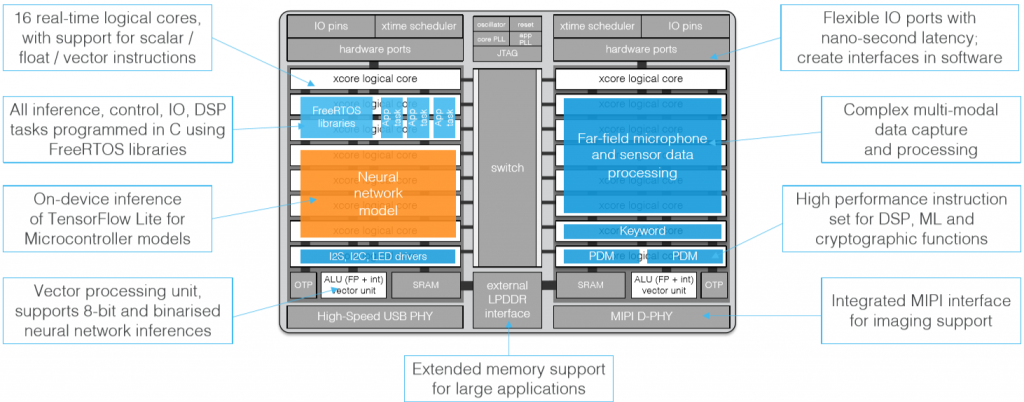

An example implementation is illustrated below, with some logical cores being employed to perform far-field microphone processing, some cores being used to implement a neural net model, and so forth. Up to 128 pins of flexible I/O can be programmed in software to provide access to a wide variety of interfaces and peripherals, which can be tailored to the precise needs of the application. Furthermore, hardware USB 2.0 PHY and MIPI D-PHY interfaces support the collection and processing of data from a wide range of high-performance sensors.

An example AIoT implementation using an xcore.ai device (Image source: XMOS)

The xcore.ai is fully programmable in C, with specific features for DSP, AI, and ML accessible via optimized C libraries. A TensorFlow Lite to xcore.ai converter facilitates easy prototyping and deployment of neural network models. Even though the xcore.ai is capable of providing RTOS-like capabilities in hardware, it also supports the FreeRTOS real-time operating system, thereby enabling developers to make use of a broad range of familiar open-source library components.

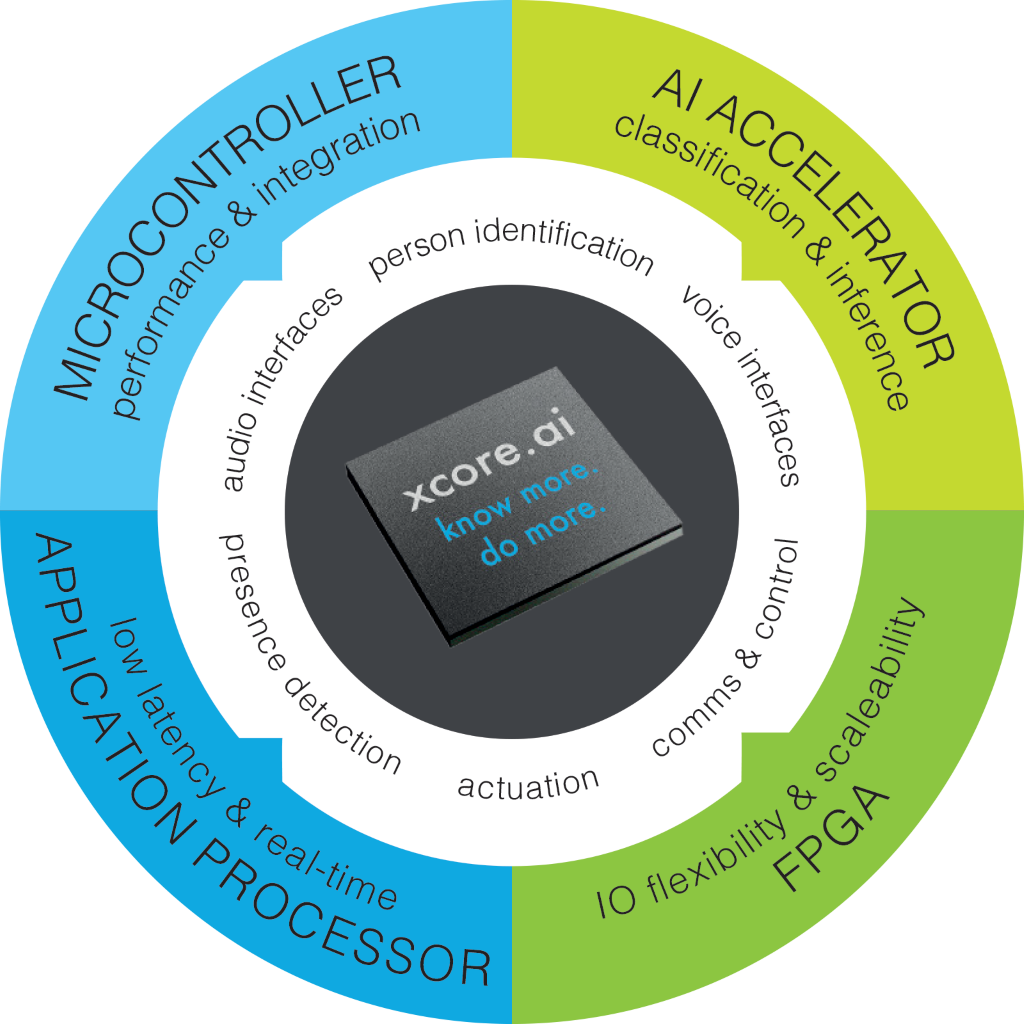

One way to think about the xcore.ai is that it provides the performance and integration of a microcontroller, the low-latency and real-time capabilities of an application processor, the I/O flexibility and scalability of an FPGA, and the classification and inference capabilities of an AI accelerator.

A computational engine for all seasons (Image source: XMOS)

The folks at XMOS tell me that, as compared to an ARM Cortex-M7 running at 600 MHz, an xcore.ai provides 16x faster I/O processing, 15x the DSP performance, and 32x the AI performance, which is pretty impressive for such a physically small device.

What’s That? Who’s There?

I think things are starting to move so quickly (technology-wise, that is; I’m not saying that people are moving faster per se) that it’s not easy to envision all of the possible applications for a device like the xcore.ai, but that doesn’t stop us from bouncing a couple of ideas around.

Let’s start with smoke detectors. Speaking for myself, we have a bunch of these little rascals scattered throughout our house. Oftentimes in the case of a fire, the emergency services’ rescue and recovery efforts are hampered by a lack of understanding of the situation and environment prior to arriving on-site. Now, imagine intelligent smoke detectors that communicate with each other to determine whether there are people in the building that’s on fire and, if so, how many there are and where they are located. Such detectors could also clarify whether the people are moving or breathing, thereby constructing an intelligent picture of the environment that can be shared with the emergency services in real-time before they even arrive at the scene. A smoke detector equipped with radar and imaging sensors, accompanied by an xcore.ai device, could provide such real-time presence and vital sign detection information.

Another example would be streetlamps for the 21st century. In addition to causing light pollution, even efficient modern streetlamps are “always on” during the hours of darkness, thereby consuming energy whether their services are required or not. Now imagine an intelligent streetlamp equipped with radar and imaging sensors accompanied by an xcore.ai device. Such a streetlamp could remain dark in an energy-efficient “always ready” wait state. When the streetlamp detects the presence of a human ambling down the sidewalk or a vehicle racing along the road, it could spring into life. Furthermore, such streetlamps could communicate with each other, effectively saying things like, “Heads up: there’s a human passing me strolling north at 2 mph, and a car is approaching me heading east at 40 mph,” thereby allowing their companions to light up at appropriate times in anticipation of being required.
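Just for fun, here is a rough sketch in Python of the arithmetic a neighboring streetlamp might perform on receiving such a “heads up” message. Everything in it is an assumption made for illustration: the `Sighting` record, the function names, the 50-meter lamp spacing, and the 5-second lead time are all hypothetical, and nothing here comes from XMOS.

```python
from dataclasses import dataclass

MPH_TO_MPS = 0.44704  # 1 mile per hour expressed in meters per second


@dataclass
class Sighting:
    """A hypothetical 'heads up' message passed from one lamp to the next."""
    kind: str         # for example "human" or "car"
    speed_mph: float  # speed reported by the detecting lamp
    heading: str      # for example "north" or "east"


def seconds_until_arrival(sighting, distance_m):
    """Estimate how long the target needs to cover distance_m at its reported speed."""
    speed_mps = sighting.speed_mph * MPH_TO_MPS
    if speed_mps <= 0:
        return float("inf")  # a stationary target never arrives
    return distance_m / speed_mps


def should_light_now(sighting, distance_m, lead_time_s=5.0):
    """Light up once the target is within lead_time_s seconds of this lamp."""
    return seconds_until_arrival(sighting, distance_m) <= lead_time_s


if __name__ == "__main__":
    spacing_m = 50.0  # assumed distance between adjacent lamps
    for s in (Sighting("human", 2.0, "north"), Sighting("car", 40.0, "east")):
        eta = seconds_until_arrival(s, spacing_m)
        print(f"{s.kind}: arrives in about {eta:.1f} s, "
              f"light now: {should_light_now(s, spacing_m)}")
```

With these assumed numbers, the car heading east at 40 mph is under three seconds away from the next lamp, so that lamp should light immediately, whereas the human strolling at 2 mph is nearly a minute away, so the next lamp can stay dark a while longer. A real deployment would also need to account for heading, sensor error, and message latency, all of which this sketch ignores.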

The more I think about it, the more I can envisage these xcore.ai devices being deployed in almost everything. How about you? Do any interesting potential applications spring to mind that you would care to share?

There isn’t much of a standard programming model for the plethora of AI and in-memory ICs, and I don’t see anybody getting a lot of traction until that happens.

If David May learned anything from his days at Inmos, it should be that it doesn’t matter how good your processor is if nobody knows how to use it, and if nobody knows how to use it you’ll just have to do it yourself – i.e. if any of these AI chips were actually usable, the companies would be raking it in with smart products, rather than selling ICs to the gullible.