Internet-of-things (IoT) security seems to be passing through various phases as a topic. It started as, “Wait, what? I need security? But why???” Frankly, we probably haven’t moved completely out of that one, but we’ve made progress. Next comes, “What does security mean?” And that continues as well. But a third phase has started, and that’s one of, “OK, I get it, but how the heck do I implement this? Do I have to do the whole thing from scratch??”

Much of the (shall we say, remedial?) focus has been on basic security as typically implemented at Layer 4 of the OSI stack. For example, the most commonly used security protocol is TLS, a standard that rides on the transport layer – TCP, to be specific. (There’s DTLS as the UDP equivalent.) But a couple of announcements are interesting not simply because they’re new products to talk about, but because they both address security in Layer 7 and reveal something about the nuances of security.

App-Level Security

The first comes from a company called Thirdwayv. And they’re building application-level security. Why would that be fundamentally any different from normal Layer-4 security? Here’s the thing: as a message moves down the OSI stack (on the sending side) and back up the stack (on the receiving side), the security aspects protect the message payload only at and below Layer 4. Above that, the payload is in the clear. Someone with sophisticated tools (like the government, perhaps, or your favorite social media host) may be able to figure out what’s going on by tapping into the message at higher levels.

For instance, if you send a Facebook Messenger message, it is sent securely, and yet Facebook can access the contents because, in theory, they own the message up and down the stack. If they want to, they can see the message before it is encrypted. Which is why folks who really want security prefer “end-to-end” security: that’s security in the application layer. That’s what WhatsApp and Signal have (and what the original WhatsApp developers and Facebook – not to mention the government – have been rumored to be at odds over).

With app-level security, the application builds in its own security, not relying solely on the lower stack levels for that service. The message is encrypted before it ever leaves the application, so, in theory, only the application knows the message. The app can exchange keys with the recipient so that the recipient’s app can decrypt the messages. Unless someone can trap that key (presumably not sent in the clear), no one outside the app can see the message. The only reason the recipient can see the message is that their instance of the app, having set up a session with the sender, lets them see it.
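To make the "encrypted before it ever leaves the application" idea concrete, here is a minimal sketch in Python. It is not Thirdwayv's implementation: the function names `seal` and `open_sealed` are hypothetical, the session key is assumed to have been agreed already, and the cipher (an HMAC-SHA256 keystream plus an HMAC tag) is only a standard-library stand-in for a vetted authenticated cipher such as AES-GCM.

```python
import hashlib
import hmac
import secrets

NONCE_LEN, TAG_LEN = 16, 32


def _subkeys(session_key: bytes):
    """Derive separate encryption and authentication keys from the session key."""
    enc_key = hmac.new(session_key, b"enc", hashlib.sha256).digest()
    mac_key = hmac.new(session_key, b"mac", hashlib.sha256).digest()
    return enc_key, mac_key


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Pseudorandom bytes from HMAC-SHA256 in counter mode (illustrative only)."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out.extend(hmac.new(key, nonce + counter.to_bytes(8, "big"), hashlib.sha256).digest())
        counter += 1
    return bytes(out[:length])


def seal(session_key: bytes, plaintext: bytes) -> bytes:
    """Encrypt and authenticate inside the app; the result is what goes down the stack."""
    enc_key, mac_key = _subkeys(session_key)
    nonce = secrets.token_bytes(NONCE_LEN)
    stream = _keystream(enc_key, nonce, len(plaintext))
    ciphertext = bytes(p ^ k for p, k in zip(plaintext, stream))
    tag = hmac.new(mac_key, nonce + ciphertext, hashlib.sha256).digest()
    return nonce + ciphertext + tag


def open_sealed(session_key: bytes, envelope: bytes) -> bytes:
    """Verify the tag first, then decrypt; raises ValueError if anything was altered."""
    if len(envelope) < NONCE_LEN + TAG_LEN:
        raise ValueError("message rejected: envelope too short")
    enc_key, mac_key = _subkeys(session_key)
    nonce = envelope[:NONCE_LEN]
    ciphertext = envelope[NONCE_LEN:-TAG_LEN]
    tag = envelope[-TAG_LEN:]
    expected = hmac.new(mac_key, nonce + ciphertext, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("message rejected: authentication tag mismatch")
    stream = _keystream(enc_key, nonce, len(ciphertext))
    return bytes(c ^ k for c, k in zip(ciphertext, stream))


# Assumption: both app instances already share this key from their session setup.
session_key = secrets.token_bytes(32)
message = b"unlock front door"
envelope = seal(session_key, message)          # this is all the lower layers ever see
recovered = open_sealed(session_key, envelope)  # only the peer app can do this
```

Whatever carries `envelope` afterward (TLS, Bluetooth, a gateway) handles only ciphertext, which is the point of doing the work in the application.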

Thirdwayv can run over any transport mechanism (including more security), so it’s not tied to any specific implementation. Having started with Bluetooth (since Bluetooth can be rather promiscuous about connecting in the presence of multiple possible connections), they also cover NFC, powerline, and Ethernet. And the specific interactions they are trying to protect involve IoT devices communicating with the cloud under the assumption that the data will go first through a phone or a gateway and thence to the cloud.

They’ve come up with two separate products for two aspects of the security: setting up a secure connection for messages and giving app developers a toolkit for adding security to their application.

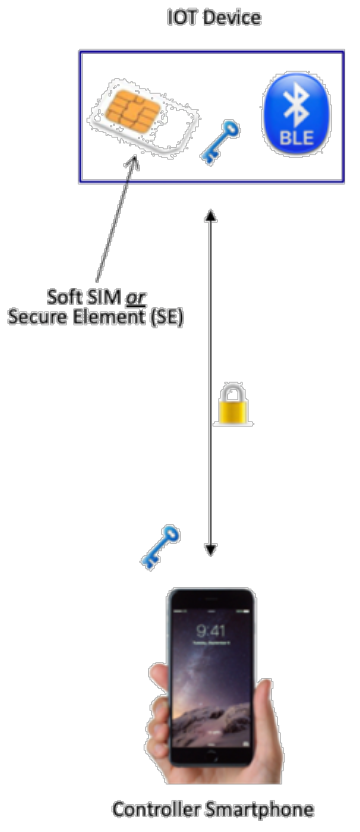

The first one is called SecureConnect. It handles the secure messaging by creating a tunnel between the sender and receiver. The session is authenticated, and messages are encrypted – all natively in the application, without relying on lower-layer services.

(Image courtesy Thirdwayv)

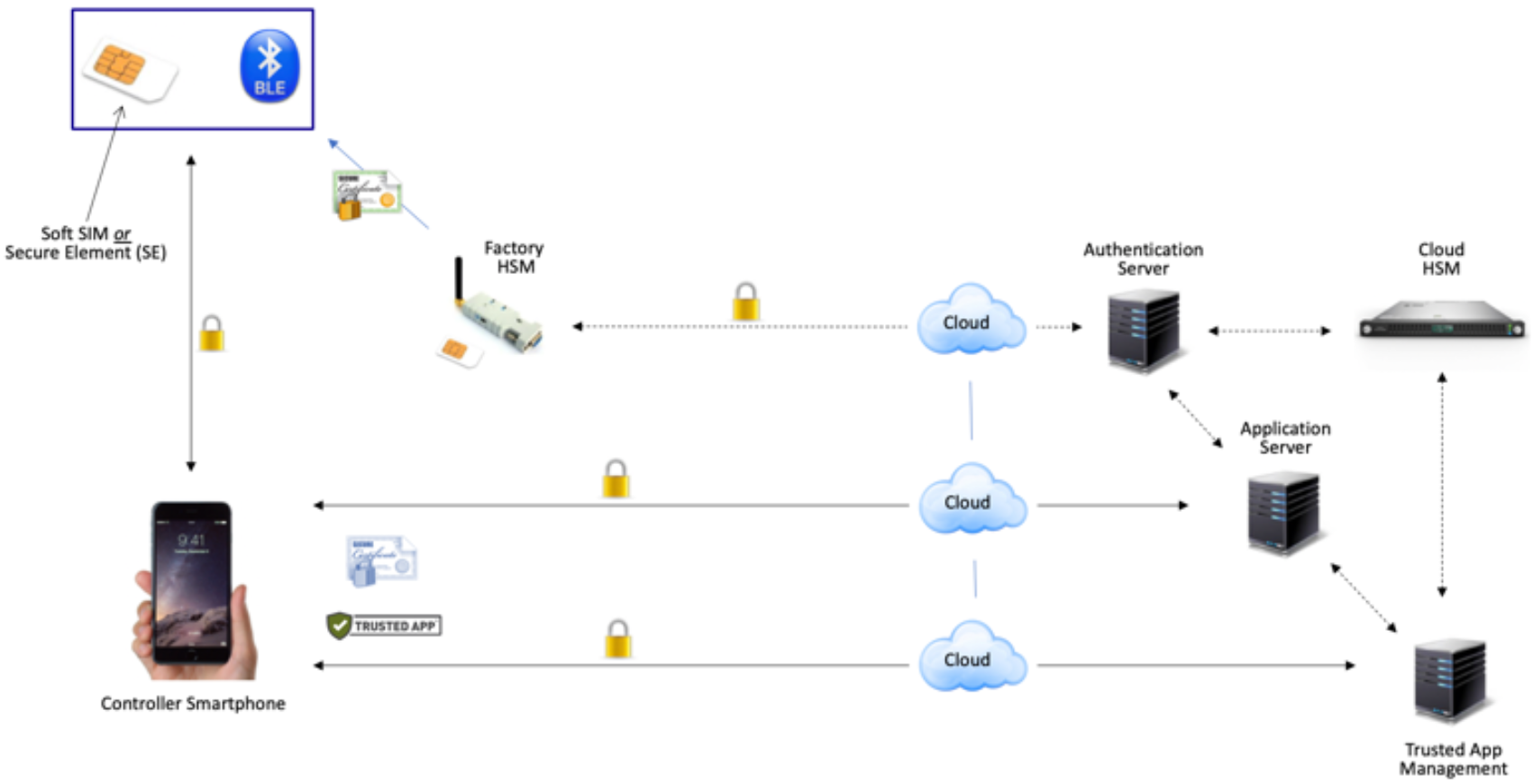

The second product, newly announced, is called AppAuth, and it gives the author of an application many choices for implementing tight or loose security. (Obviously, tight security involves more inconvenience to the user…) The author can require biometric or other authentication mechanisms, for example, before performing particularly critical operations.

AppAuth also helps to protect the application and the platform on which the app is running by, among other things:

- Making sure that the phone is “clean” – that, as far as it can tell, it hasn’t been compromised;

- Making sure that the phone hasn’t been rooted – that is, that someone hasn’t put the phone in a privileged mode that allows monkeying with things that shouldn’t be monkeyed with;

- Making sure that the platform isn’t running an outdated, rolled-back version of the OS. OS updates often protect against newly discovered vulnerabilities, but phones can have their OSes rolled back – useful if there’s an unexpected glitch in the update. Problem is, a hacker may roll the OS back specifically to re-enable a vulnerability;

- Obfuscating the application code to make reverse engineering harder;

- Protecting the application from interference from other applications. It can’t do anything about any malware on the phone – except to make sure it keeps its hands off the author’s app.

(Image courtesy Thirdwayv)
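The platform checks listed above amount to a gate that the app consults before doing anything sensitive. The sketch below shows one way such a gate might look. Everything in it is hypothetical: `DevicePosture`, `posture_failures`, and `may_run` are illustrative names, not part of AppAuth, and how a real app would actually detect rooting or compromise is outside its scope.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class DevicePosture:
    """What the app has observed about its host phone (all fields are assumptions)."""
    integrity_ok: bool                  # no signs of compromise, as far as the app can tell
    rooted: bool                        # phone has been put into a privileged mode
    os_version: Tuple[int, int, int]    # e.g. (14, 2, 0)


def posture_failures(posture: DevicePosture, minimum_os: Tuple[int, int, int]) -> List[str]:
    """Return the name of every platform check that failed."""
    failures = []
    if not posture.integrity_ok:
        failures.append("integrity")
    if posture.rooted:
        failures.append("rooted")
    if posture.os_version < minimum_os:   # tuples compare element by element
        failures.append("os_rollback")
    return failures


def may_run(critical: bool, user_authenticated: bool,
            posture: DevicePosture, minimum_os: Tuple[int, int, int]) -> bool:
    """Allow an operation only on a clean platform; critical ones also need the user."""
    if posture_failures(posture, minimum_os):
        return False
    return user_authenticated or not critical


MINIMUM_OS = (14, 0, 0)  # hypothetical oldest OS release the app author accepts
clean_phone = DevicePosture(integrity_ok=True, rooted=False, os_version=(14, 2, 0))
rolled_back = DevicePosture(integrity_ok=True, rooted=False, os_version=(13, 6, 1))
```

The tight-versus-loose choice the author gets shows up in `may_run`: routine operations pass on a clean phone, while critical ones also wait for biometric or other authentication.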

The Thirdwayv software relies on a root of trust – preferably a hardware one, like a secure element (SE), which they say adds about $1.00 to the bill of materials. Absent an SE, they can implement the software equivalent and use that, with the proviso that a software version isn’t as secure as a hardware version.

Sign Here, Please

The second product comes from Keyfactor in an announcement of their integration with Thales’ hardware security module (HSM) offering to provide what they call Keyfactor Code Assure. The problem being solved here is that of code signing.

Yeah, unless you’re writing for app stores, firmware, or the automotive and medical industries, code signing may not be a part of your repertoire. The idea is that your code gets “signed” using a digital signature that incorporates a certificate. There’s a public key in the certificate, which means you can check the signature. But what about the private key, which is used to generate the signature?

This has apparently been the subject of either security weakness or coder hassle, and that’s what Keyfactor and Thales are trying to tighten up. The idea is that an HSM provides an opaque server for signing code. That server acts as a vault for certificates and keys, and they’ve added another layer of protection by locking those artifacts. Before they can be used, they must be unlocked – and the rules for doing so can depend on things like the privileges of a submitter or the time of day.

Like any secure process, the users can’t see what’s going on. They merely submit their code, and out comes the signature. The private key, used to generate the signature, never leaves the vault. The HSM can be accessed by local or remote machines; scripts don’t need to invoke API calls to make it happen. The idea was to limit how this affects a developer’s life.

(Image courtesy Thirdwayv)

Now, with signed code, an end device – like an IoT device – can first run attestation to make sure that no one changed an application’s code before it was downloaded. This applies, of course, both to new downloads and to updates. If the code is different in any way, the signature check will fail. And because the private key is out of reach, attempts to cover up a code change by generating a new signature should fail.
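The whole flow, from a vault that signs under unlock rules to a device that checks the signature, can be sketched in a few lines of Python. This is a toy under loud assumptions, not the Keyfactor/Thales design: `SigningVault` and `device_accepts` are hypothetical names, the key is built from two publicly known Mersenne primes, and it uses unpadded "textbook" RSA so that the example needs only the standard library. Real code signing would use a vetted scheme such as RSA-PSS or ECDSA with properly generated keys.

```python
import hashlib
from datetime import time

# TOY KEY, deliberately insecure: two well-known Mersenne primes stand in for
# the secret random primes a real key would use.
_P, _Q = 2**521 - 1, 2**607 - 1
_E = 65537
PUBLIC_KEY = (_P * _Q, _E)  # (modulus, exponent): the part a certificate would carry


def _digest_as_int(code: bytes) -> int:
    """SHA-256 of the code, as an integer smaller than the modulus."""
    return int.from_bytes(hashlib.sha256(code).digest(), "big")


class SigningVault:
    """Stand-in for the HSM: callers submit code and get a signature back."""

    def __init__(self, allowed_submitters, opens_at: time, closes_at: time):
        # The private exponent stays inside the object. A real HSM enforces that
        # in hardware; the leading underscore here is only a naming convention.
        self._d = pow(_E, -1, (_P - 1) * (_Q - 1))
        self._allowed = set(allowed_submitters)
        self._opens_at, self._closes_at = opens_at, closes_at

    def sign(self, submitter: str, now: time, code: bytes) -> int:
        """Unlock the key only if the rules pass, then sign the code's digest."""
        if submitter not in self._allowed:
            raise PermissionError("submitter lacks signing privileges")
        if not (self._opens_at <= now <= self._closes_at):
            raise PermissionError("signing is locked at this time of day")
        return pow(_digest_as_int(code), self._d, PUBLIC_KEY[0])


def device_accepts(code: bytes, signature: int, public_key=PUBLIC_KEY) -> bool:
    """Device-side check: needs only the public key, never the private one."""
    modulus, exponent = public_key
    return pow(signature, exponent, modulus) == _digest_as_int(code)


# Hypothetical rules: one approved submitter, signing allowed 09:00 to 17:00.
vault = SigningVault({"release-bot"}, time(9, 0), time(17, 0))
firmware = b"firmware v1.2 image bytes"
signature = vault.sign("release-bot", time(10, 30), firmware)
```

A device would call `device_accepts(firmware, signature)` before installing; any change to the bytes changes the digest, and without the exponent held in the vault there is no way to produce a matching signature for the altered code.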

This affects only the packaged code, of course. Once unwrapped and running in a device, it’s theoretically still possible to change the code. Periodic in-memory checks are one way to make sure that, over time, the code being used remains the correct code, but that’s another topic entirely. The Keyfactor/Thales solution ends once the code has been accepted.

Sourcing credit:

Jim Kamke, CEO, Thirdwayv

Vinay Gokhale, VP Business Development, Thirdwayv

Ted Shorter, CTO, Keyfactor

Mark Thompson, VP Product Management, Keyfactor

What do you think of these security ideas that focus on the application layer?