British photographer Eadward Muybridge’s pioneering work on motion pictures in the 1870s and his invention of the zoopraxiscope to display moving images literally framed everything that followed for the next 150 years, first in movies, then in television, and finally in animated GIFs. Muybridge’s developments leveraged human persistence of vision to make the subjects in a sequence of still photos appear to be moving. Since then, all cinema and video recordings have been based on projecting still image frames in rapid succession. That mechanism is great for capturing motion-picture scenes to be reproduced for human visual consumption, but extracting movement information from these frame-based recordings for other purposes requires sifting through voluminous video image files using a tremendous amount of computing power. Image sensor maker Prophesee has just developed its fifth generation of event-driven image sensors, which detect only motion and thereby short-circuit the heavy motion processing and detection required when using frame-based image sensors.

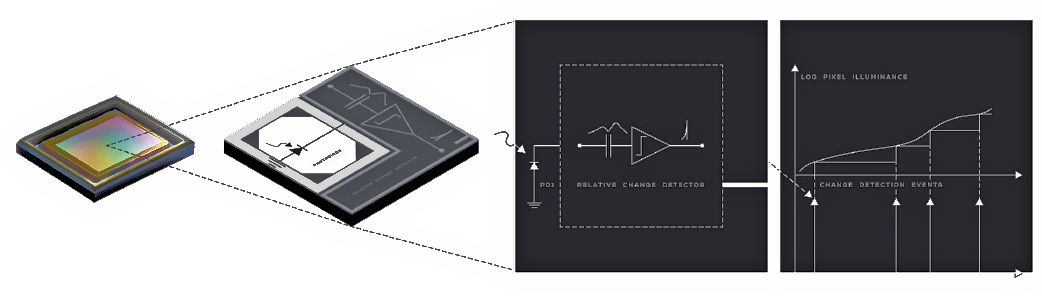

First, it’s important to understand that Prophesee’s Metavision imagers are not meant to replace conventional imagers, which capture scenes at a synchronous frame rate, for visual recording. Instead of capturing whole frames, each pixel triggers on its own when the amount of light falling on it changes by a set amount, a change that is often caused by movement. Each of the motion sensor’s pixels incorporates a photodiode and an ac-coupled threshold detector that generates an output when the light falling on that pixel changes by the prescribed amount.
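The per-pixel threshold behavior can be illustrated with a simple software model. This is a minimal sketch, not Prophesee’s circuit: it assumes, as is common in the event-camera literature, that the pixel compares changes in *log* intensity against a contrast threshold (the article says only “a set amount”), and the function name `pixel_events` and the 0.2 threshold are hypothetical. The stored reference level plays a role loosely analogous to the ac-coupled detector’s memory of the last light level.

```python
import math

def pixel_events(samples, threshold=0.2):
    """Emit (time, polarity) events for one simulated pixel.

    samples: list of (time_us, intensity) pairs with intensity > 0.
    threshold: hypothetical contrast threshold on log intensity.
    """
    events = []
    _, first_intensity = samples[0]
    ref = math.log(first_intensity)  # reference level of the last event
    for t, intensity in samples[1:]:
        level = math.log(intensity)
        # A large change may cross the threshold several times.
        while level - ref >= threshold:
            ref += threshold
            events.append((t, +1))  # ON event: pixel got brighter
        while ref - level >= threshold:
            ref -= threshold
            events.append((t, -1))  # OFF event: pixel got darker
    return events

# Constant light produces no output at all.
steady = [(t, 100.0) for t in range(0, 50, 10)]
print(pixel_events(steady))  # → []

# Doubling the light (log change ≈ 0.69) crosses a 0.2 threshold three times.
step = [(0, 100.0), (10, 100.0), (20, 200.0), (30, 200.0)]
print(pixel_events(step))  # → [(20, 1), (20, 1), (20, 1)]
```

The point of the model is that a static scene generates no data at all; output appears only where and when the light changes.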

The Prophesee Metavision motion sensor incorporates a photodiode and an ac-coupled threshold detector that generates an output only when light falling on the pixel changes by a set amount. Image credit: Prophesee

This different way of capturing a scene offers several advantages. First, says Prophesee, the motion-sensing pixels have an extremely high dynamic range and are sensitive to light levels from 50 millilux to more than 10,000 lux. Second, because each pixel activates only when the light level on that pixel changes, the data rate from these Metavision sensors is much lower than that from high-resolution image sensors, and consequently the sensors’ power consumption is very low. The company’s fifth-generation, 320×320-pixel GenX320 sensor idles at 36 μW and draws 3 mW running at full tilt. Despite the low data rate, the pixels can respond quickly, in as little as one microsecond, when light levels change quickly. (Note that the sensor’s response rate falls in low-light situations because the pixel needs more time to capture enough photons to trigger the threshold detector.)
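A back-of-envelope calculation shows why the data rate depends so strongly on scene activity. In this sketch, only the 320×320 array size comes from the article; the 8-bit, 30-frame/s comparison format, the 4 bytes per event, and the example event rates are illustrative assumptions, not Prophesee specifications or measurements.

```python
WIDTH, HEIGHT = 320, 320  # GenX320 array size, per the article

def frame_bytes_per_s(fps=30, bits_per_pixel=8):
    """Data rate of a hypothetical frame-based sensor with the same array size."""
    return WIDTH * HEIGHT * fps * bits_per_pixel // 8

def event_bytes_per_s(events_per_s, bytes_per_event=4):
    """Data rate of an event stream; 4 bytes per event is an assumed encoding."""
    return events_per_s * bytes_per_event

frame_rate = frame_bytes_per_s()
print(f"frames: {frame_rate:,} B/s")  # 320 × 320 × 30 = 3,072,000 B/s

# Illustrative (not measured) event rates for different scenes.
for label, rate in [("static scene", 0), ("small motion", 1_000), ("busy scene", 200_000)]:
    print(f"{label}: {event_bytes_per_s(rate):,} B/s")
```

The comparison can flip for scenes where everything changes at once: if every pixel fired 30 times per second, the assumed 4-byte events would produce four times the data of the 8-bit frame stream. The event sensor’s advantage therefore comes from the sparsity of real-world motion, not from the encoding itself.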

In considering the uses for these motion sensors, it’s useful to recall that other types of image-related sensors are already in use. Lidar is an obvious example. Like Prophesee’s event-triggered motion sensors, Lidar systems do not record scene images. Instead, they generate 3D point clouds using distance information gleaned from photon time-of-flight data. Used in conjunction with video imaging sensors, these point clouds often support autonomous vehicle navigation on land, at sea, and in the air.

Similarly, Prophesee’s event-triggered sensors can augment imaging sensors. For example, their movement data can be combined with captured video to improve frame-by-frame deblurring. The motion sensors also serve standalone applications, including:

- High-speed eye tracking for AR/VR/XR headsets

- Low-latency, touch-free human-machine interfaces (gesture control) for TVs, PCs, game consoles, smart homes, industrial equipment, etc.

- Presence detection and people counting for IoT and security cameras

- Ultra-low-power, always-on area monitoring

- Fall detection in homes and health facilities
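Presence detection and always-on monitoring can start from something as simple as counting events in fixed time windows. The sketch below is a hypothetical illustration, not a Prophesee algorithm: it assumes events arrive as `(t_us, x, y, polarity)` tuples, and the 10-ms window and 50-event threshold are made-up tuning values. The threshold exists because real sensors also emit sparse background-noise events that should not register as activity.

```python
from collections import Counter

def active_windows(events, window_us=10_000, min_events=50):
    """Return sorted start times (μs) of windows with at least min_events events.

    events: iterable of (t_us, x, y, polarity) tuples.
    window_us, min_events: hypothetical tuning values.
    """
    counts = Counter(t // window_us for t, _x, _y, _p in events)
    return sorted(w * window_us for w, n in counts.items() if n >= min_events)

# Five sparse noise events in the first 10 ms...
noise = [(t, 10, 10, 1) for t in range(0, 10_000, 2_000)]
# ...and a burst of 100 events between 20 ms and 25 ms, as a moving person might cause.
burst = [(20_000 + i * 50, 100 + i % 20, 150, 1) for i in range(100)]

print(active_windows(noise + burst))  # → [20000]
```

Because the work scales with the number of events rather than the number of pixels, a loop like this stays nearly idle when nothing moves, which fits the ultra-low-power monitoring use case.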

Notably, Prophesee’s fifth-generation 320×320-pixel event-triggered motion sensor has fewer pixels than the company’s fourth-generation sensor. The reduced number of pixels allows for a smaller, less expensive sensor that still meets the motion-sensing needs of many of the applications listed above. Several early adopters are already using these motion sensors in their system designs:

- Both Zinn Labs and Meta are using Prophesee Metavision sensors for eye and position tracking in AR/VR headset designs because of the sensors’ high speed, low data rate, and low power consumption. The fifth-generation GenX320 sensor measures only 3×4 mm, so multiple sensors easily fit into a headset design. AR/VR headsets can track eye movements at an eye-watering 1 to 10 kHz using the GenX320 sensor. (Pun intended.) At the highest scan rates, it’s possible to infer a user’s emotional state from their rapid eye movements.

- Xperi is using Prophesee’s sensors for driver monitoring in vehicles. The sensors’ low-light performance, low power consumption, and small size are all beneficial in this application.

- Yun X is using Prophesee’s sensors to watch for falls in homes and healthcare facilities.

- Ultraleap has developed a hand-tracking camera to replace touchscreens and other manual controls in consumer and industrial applications.

Ultraleap is developing a human-machine interface controller using hand gestures, based on Prophesee’s motion sensor. Image credit: Ultraleap

In all these applications, privacy concerns are minimal because these sensors do not capture identifiable images, only movement.

Prophesee designed the GenX320 sensor for simple hardware integration into a system. The sensor has both MIPI and I2C interfaces. In addition to the GenX320 sensor, the company also offers a series of development kits and a growing software library to aid in system development. Some of the algorithms that Prophesee and its partners have developed include:

- Object detection, tracking, and training

- High-speed object counting

- Video-to-event and event-to-video conversion

- Pixel-level optical flow prediction and training

- Gesture classification and training

- Particle and object size monitoring

- Vibration and frequency monitoring

- Defect detection

- Spatter monitoring

- Plume monitoring

- Edgelet tracking

- Fluid dynamics monitoring

- Ultra slow-motion video generation

- Motion and video sensor synchronization

- Motion deblurring
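Many of the algorithms listed above begin by turning the sparse event stream back into image-like arrays covering short time slices. The sketch below shows only the most naive form of this, summing event polarities per pixel in each slice; it is not Prophesee’s event-to-video algorithm. The `(t_us, x, y, polarity)` tuple format with polarity ±1 and the function name `slice_stream` are assumptions for illustration.

```python
WIDTH, HEIGHT = 320, 320  # GenX320 array size, per the article

def slice_stream(events, slice_us, duration_us, width=WIDTH, height=HEIGHT):
    """Accumulate events into one 2D array per time slice in a single pass.

    events: iterable of (t_us, x, y, polarity) tuples, polarity +1 or -1.
    Returns a list of height×width nested lists, one per slice of [0, duration_us).
    """
    n_slices = -(-duration_us // slice_us)  # ceiling division
    frames = [[[0] * width for _ in range(height)] for _ in range(n_slices)]
    for t, x, y, polarity in events:
        if 0 <= t < duration_us:
            # Net brightening shows up positive, net darkening negative.
            frames[t // slice_us][y][x] += polarity
    return frames

# Two ON events at pixel (x=1, y=2) in the first millisecond,
# one OFF event at pixel (x=3, y=0) in the second.
events = [(100, 1, 2, 1), (200, 1, 2, 1), (1_500, 3, 0, -1)]
frames = slice_stream(events, slice_us=1_000, duration_us=2_000, width=4, height=4)
print(frames[0][2][1], frames[1][0][3])  # → 2 -1
```

The slice length trades temporal resolution for signal: shorter slices preserve the sensor’s microsecond-scale timing, while longer slices collect enough events to outline moving edges.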

Earlier this year, Qualcomm and Prophesee announced that the next-generation Snapdragon mobile phone reference design would integrate Prophesee’s GenX320 sensor and software library. The announcement discusses using the motion sensor to help reduce motion blur in images and video taken by the mobile phone’s cameras. However, once the Prophesee motion sensor is integrated into the mobile phone platform, it can (and likely will) be used for multiple applications.

Researchers have developed algorithms to perform the tasks listed above using conventional image sensors. The advantage of Prophesee’s event-triggered motion sensor is that these same tasks can be performed faster, more efficiently, with less computational horsepower, and with lower power consumption. The advantage is especially large when the conventional algorithms must operate on a compressed video stream, which almost certainly requires a decompression step before any motion processing can begin. The tradeoff is that adding a motion sensor increases the cost of a system that already incorporates one or more conventional image sensors. But Prophesee’s Metavision sensors can also operate as standalone sensors for many applications, such as the Zinn Labs and Meta eye-tracking headsets and the Yun X fall-sensing application. For these applications, a conventional image sensor and its associated computational hardware aren’t required.
