Sometimes when I’m writing an article, the title doesn’t come to me until after I’ve finished the main body of the piece. Other times—as in the case of this column—the title writes itself and all I have to do is flesh out the words that follow (I make it sound easier than it is LOL).

Now that I’m reading the title, I’m thinking “Wow, rounding up with gusto and abandon, we’re almost a quarter of the way through the 21st Century.” I remember graduating high school in England in the summer of 1975. That was one of the rare summers when we had two weeks of solid sunshine without a cloud in the sky (people in England still tell tales of those two weeks in awe and astonishment). In the “between times” before some of us went to university and others set off to find jobs, we met in the local park to kick a soccer ball around and converse about life, the universe, and everything.

One of the things we talked about was that it was 25 years—a quarter of a century—until the year 2000. That would be seven years more than we’d been alive. It seemed like a lifetime away. We’d be 43 years old. I couldn’t imagine what it would feel like to be that decrepit. Then, suddenly, we were breaking out the party hats. I think I must have blacked out for a while because Y2K (as we used to call it) now appears almost 25 years ago in the rearview mirror of my life.

Another thing I recall from those halcyon days in the park was when one of our number—we called him “Bean” for reasons I can no longer recall—informed us that he’d signed up for a 20-year tour in the Royal Navy. We simply couldn’t wrap our brains around this news. We’d just escaped from an establishment where we abhorred wearing uniforms, and he was voluntarily exiling himself into a world where uniforms were the norm. Even more mind-blowing was the fact that he was going in for 20 years, which was 2 years longer than we’d been alive. At that time, it seemed to the rest of us that Bean was making a huge blunder.

I later heard that Bean retired at the age of 38 as a Commander of one of Her Majesty’s warships, which means his pension would be nothing to be sniffed at. Apart from anything else, this means Bean has now been retired (unless he got bored and started doing something else) for 27 years as I pen these words. It really is a funny old life when you come to think about it.

As I’ve mentioned on occasion, I’m a digital hardware design engineer by trade. You know where you stand with digital. Analog is a bit more “wibbly-wobbly,” if you know what I mean. As an example, when we digital engineers run a logic simulator, if the outputs from the simulation aren’t what we expect, we don’t rail against the fates, because we can be reasonably confident that the issue is in our circuit and/or our stimulus. By comparison, it’s been my experience that if analog bods don’t like what they see when they run their simulations, they simply tweak the simulator and run again, and they keep on doing so until the simulator shows them what they want to see and tells them what they want to hear. You may think I’m making this up, but I know what I know, and I’ve seen what I’ve seen, and that’s all I have to say about that (channeling Forrest Gump).

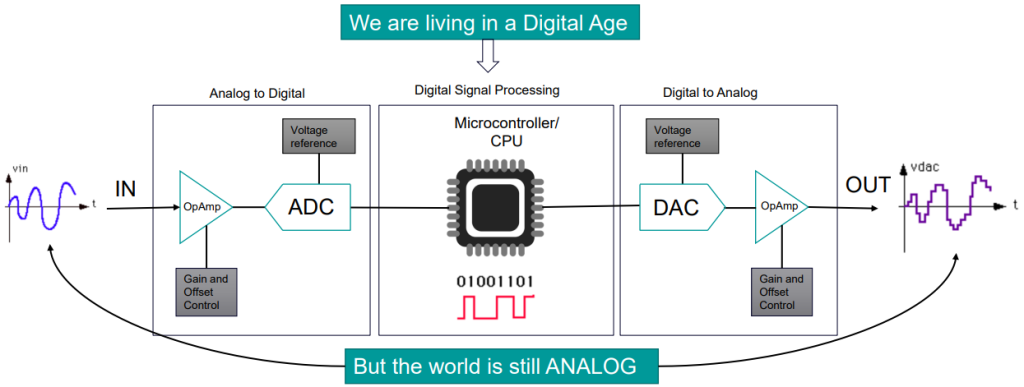

Life would be simple—well, simpler—if digital and analog folks could keep to their own sides of the track, meeting furtively only for festive occasions. The problem is that, although we are now living in a digital age, the real world doggedly continues in its analog ways, where analog in this context refers to time-varying continuous signals such as sound, light, pressure, and temperature. We use the term “mixed-signal” to describe an environment in which the analog and digital worlds collide. (Yes, of course I’m now thinking of the 1951 American science fiction disaster film When Worlds Collide with color by Technicolor. They don’t make films like that anymore (thank goodness).)

The simplest example of mixed-signal—one we can all wrap our brains around—would be taking an analog signal in the real world, performing some sort of conditioning in the analog domain, passing the signal through an analog-to-digital converter (ADC), and feeding the result into a digital processor like a microcontroller to undergo digital signal processing (DSP). Eventually, the resulting signal will be returned to us via a digital-to-analog converter (DAC), after which it may experience some additional conditioning in the analog domain before being allowed to return to the analog world to roam wild and free. (I’m happy to say I never saw the 1966 British drama film Born Free—I’m not that sort of fellow—so it seems a tad unfair that I now have its theme tune bouncing around my poor old noggin.)

Perhaps the simplest mixed-signal scenario (Image source: Siemens)
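For those who like to see such things in code, here's a minimal Python sketch of the chain described above: conditioning, ADC, a smidgen of DSP, and a DAC. Everything in it is my own assumption for the sake of illustration (the 8-bit resolution, the 1 V reference, the 1 kHz tone sampled at 16 kHz, the moving-average filter, and all of the function names); it's a toy, not a model of any real converter.

```python
import math

def condition(voltage, gain=0.5, offset=0.5):
    """Analog conditioning: scale and shift a -1..+1 V signal into 0..1 V."""
    return gain * voltage + offset

def adc(voltage, v_ref=1.0, bits=8):
    """Quantize a voltage in the range 0..v_ref to an unsigned integer code."""
    levels = (1 << bits) - 1
    clamped = min(max(voltage, 0.0), v_ref)  # out-of-range inputs saturate
    return round(clamped / v_ref * levels)

def moving_average(codes, taps=4):
    """The DSP step: integer moving average over the last `taps` codes."""
    out = []
    for i in range(len(codes)):
        window = codes[max(0, i - taps + 1): i + 1]
        out.append(sum(window) // len(window))
    return out

def dac(code, v_ref=1.0, bits=8):
    """Convert an integer code back into a voltage in the range 0..v_ref."""
    levels = (1 << bits) - 1
    return code / levels * v_ref

# Assumed stimulus: a 1 kHz sine wave sampled at 16 kHz
sample_rate = 16_000
signal = [math.sin(2 * math.pi * 1_000 * n / sample_rate) for n in range(32)]

codes = [adc(condition(v)) for v in signal]  # analog world -> digital codes
filtered = moving_average(codes)             # processing in the digital domain
restored = [dac(c) for c in filtered]        # digital codes -> analog world
```

Note that the round trip through `adc` and `dac` can never be perfect: with 8 bits, the recovered voltage may be off by up to half of one quantization step, which is one small taste of why the boundary between the two domains needs verifying.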

Of course, things get much more complex in real-world designs, especially when analog and digital functions are combined in a single semiconductor device like a System-on-Chip (SoC) and/or in a single package like a System-in-Package (SiP).

I remember working with mixed-signal simulation environments back in the dark ages we used to know as the 1980s and 1990s. In those days of yore, we used terms like a/D (spoken as “little ‘a’, big ‘D’”), A/D, and A/d (spoken as “big ‘A’, little ‘d’”). Today, by comparison, in the case of analog-centric designs with some digital, I believe the argot of the hour is to use the term Analog-on-Top (AoT), while digital-centric designs with some analog are referred to as Digital-on-Top (DoT) in the patois of the day. Having said this, I realize that I’m not sure if the AoT and DoT monikers also imply the domain that metaphorically “sits on top” preening and presenting itself to the outside world. But we digress…

We ran into all sorts of problems with early mixed-signal simulations. Our computer platforms had only minuscule computing power compared to the engines of today. Our user interfaces were enthusiastic while remaining largely ineffective. And we had to implement any A-to-D and D-to-A interfacing elements by hand. The only reason we were successful at all was that the designs in those far-off days were much smaller and much simpler than the designs that we’re seeing today.

The thing is that this is not an isolated problem. According to IBS Research, 85% of today’s SoC design starts are mixed-signal to some extent. As the folks at Siemens say: “Next-generation automotive, imaging, IoT, 5G, computing and storage applications are driving strong demand for greater analog and mixed-signal content in next-generation SoCs. Mixed-signal circuits are increasingly ubiquitous—whether it is integrating the analog signal chain with the digital-front end (DFE) in 5G massive-MIMO radios, digital RF-sampling data converters in radar systems, image sensors combining analog pixel read-out circuits with digital image signal processing, or feeding datacenter computing resources with ever more data using advanced mixed-signal circuits to deliver PAM4 signaling. For these and other highly advanced applications, mixed-signal circuits enable lower power, area, and cost while delivering ever-improving performance figures.”

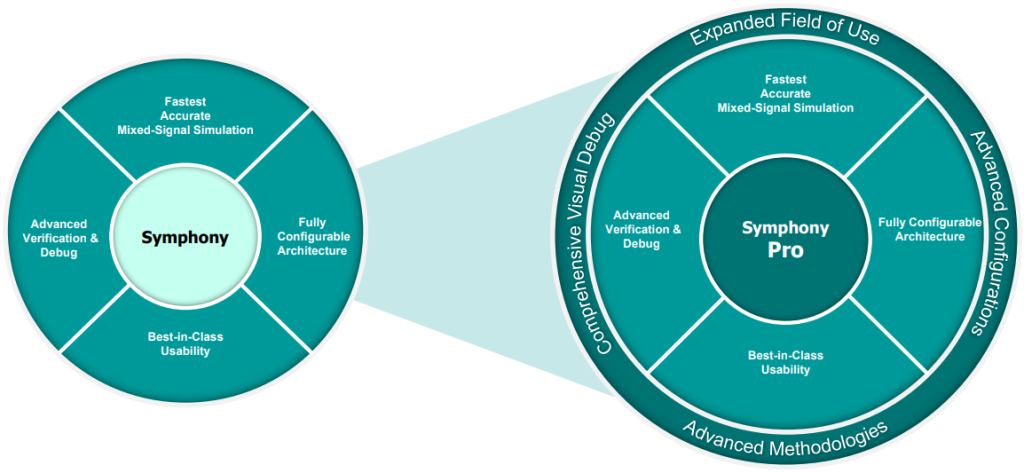

A few years ago, the guys and gals at Siemens introduced their Symphony Mixed-Signal Platform, which is digital-simulator agnostic, and which leverages their foundry-certified Analog FastSPICE (AFS) simulator to handle the wibbly-wobbly portions of the problem. More recently (just a couple of weeks ago at the time of this writing), the chaps and chapesses at Siemens announced Symphony Pro, which we might think of as “Symphony on Steroids.”

Symphony Pro is like Symphony on Steroids

(Image (but not caption) source: Siemens)

Featuring the Siemens EDA Questa logic simulator working hand-in-hand with AFS, this next-generation solution extends the robust mixed-signal verification capabilities of Siemens’ proven Symphony platform to support new and advanced Accellera standardized verification methodologies with a powerful, comprehensive, and intuitive visual debug cockpit.

In a crunchy nutshell, what we’re talking about here is support for advanced digital-centric verification methodologies for mixed-signal designs; comprehensive support for real number modeling (RNM), in which an analog circuit’s behavior is represented as a signal flow model; support for mixed-signal coverage and assertions; support for low-power mixed-signal verification; and a seamless debug experience across the entire mixed-signal design hierarchy.
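To give a feel for what a signal flow model means in the RNM sense, here's a small Python sketch. I hasten to add that this is not how Symphony Pro does it (real number models are typically written in a hardware description language such as SystemVerilog or Verilog-AMS, not Python), and the RC low-pass filter, the component values, the comparator, and the function names are all assumptions of mine. The point is only that the analog block is reduced to real-valued samples flowing in one direction, with no circuit equations to solve.

```python
def rc_lowpass(samples, r_ohms, c_farads, dt):
    """Signal flow model of an RC low-pass filter.

    Each output is computed directly from the current input and the
    previous output; no voltages and currents are solved simultaneously.
    """
    alpha = dt / (r_ohms * c_farads + dt)
    y = 0.0
    out = []
    for x in samples:
        y += alpha * (x - y)  # first-order discrete-time update
        out.append(y)
    return out

def comparator(samples, threshold):
    """Hand the real-valued signal over to the digital side as 0s and 1s."""
    return [1 if v > threshold else 0 for v in samples]

# Assumed stimulus: a 1 V step, sampled every microsecond
dt = 1e-6
step = [1.0] * 50

# Assumed components: 1 kOhm and 10 nF, so the time constant is 10 us
vout = rc_lowpass(step, r_ohms=1e3, c_farads=10e-9, dt=dt)
bits = comparator(vout, threshold=0.5)
```

Because a model like this is just arithmetic on real numbers, it can run inside an event-driven digital simulation at something close to digital speeds, which is the attraction; the price is that you get only as much analog fidelity as you bothered to write into the model.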

Excepting my hobby projects, I no longer do any real design work myself (I just talk about how hard things used to be in the old days when I wore a younger man’s clothes). All I can say is that if I were to be tasked with creating one of today’s mindboggling mixed-signal designs, I’d be taking a serious look at Symphony Pro. However, it’s not all about me (it should be, but it’s not)—so, what do you think about all of this?

Please Max, help the cause:

The big problem is the number of television news readers and web-site and print-publication copy editors who are not schooled in proper English grammar and usage. A variety of solecisms including “graduated high school” are out of control. It is correct to say “I was graduated from high school” but it is a losing battle.

I hang my head in shame — I shall chastise myself soundly (I’ll try not to be too harsh) — the only saving grace is that everyone understands what I meant (apart from those who don’t, but they don’t count).