A couple of weeks ago, I was waffling on (as is my wont) about something or other, and I mentioned that my chum Jay Dowling had introduced me to an interesting analog computer called The Analog Thing (THAT). As I said at that time, it took me ages to realize that “THAT” was an abbreviation of “The Analog Thing” and not some esoteric part of its name.

As part of this, Jay also pointed me to a brilliant YouTube video on analog computers by Veritasium that shows all sorts of cool mechanical implementations of analog functions, like summing multiple sine waves. The mechanical analog of integration left me gasping in awe at the ingenuity of our forebears.

This reminded me that I had previously found myself engrossed in another Veritasium video entitled Future Computers will be Radically Different.

I just rewatched this video. I only now realize that the presenter, Derek Muller, has one of the aforementioned THAT machines on his desk. In the video, he demonstrates using this little beauty to simulate a damped mass oscillating on the end of a spring (this is the sort of thing they had me doing as a university student way back in the mists of time).
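For anyone who would like to poke at the same damped mass-on-a-spring problem without a THAT on their desk, here is a minimal digital sketch of the idea. An analog computer typically tackles this sort of equation with a summer feeding two chained integrators, and the loop below mimics that arrangement in small time steps. The function name, parameter values, and step size are all my own illustrative assumptions; none of them come from the video.

```python
def simulate_damped_spring(mass=1.0, damping=0.5, stiffness=4.0,
                           x0=1.0, v0=0.0, dt=0.001, t_end=10.0):
    """Integrate m*x'' + c*x' + k*x = 0 using two chained integrators.

    All default values are illustrative assumptions, not taken from the video.
    """
    steps = int(round(t_end / dt))
    x, v = x0, v0
    positions = [x]
    for _ in range(steps):
        # "Summer": combine the damping and spring forces into an acceleration.
        a = -(damping * v + stiffness * x) / mass
        # First integrator: acceleration -> velocity.
        v += a * dt
        # Second integrator: velocity -> position.
        x += v * dt
        positions.append(x)
    return positions


if __name__ == "__main__":
    dt = 0.001
    xs = simulate_damped_spring(dt=dt)
    for t in (0, 2, 4, 6, 8, 10):
        print(f"t={t:2d}s  x={xs[round(t / dt)]:+.4f}")
```

Running this should show the displacement swinging back and forth while steadily shrinking toward zero, which is the same decaying wiggle you would watch on an oscilloscope hooked up to the analog version.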

But we digress… The video starts with Derek noting how, for hundreds of years, analog computers were the most powerful computing engines on Earth, predicting things like eclipses and tides and controlling anti-aircraft guns. Derek then notes that the advent of solid-state (semiconductor) electronics caused digital computers to take off, to the extent that virtually every computer we use these days is digital in nature. Next, we are informed that “A perfect storm of factors is setting the scene for a resurgence of analog technology” (I feel a drum roll would be appropriate at this point in the proceedings).

This 22-minute video is well worth watching from beginning to end. If you are short of time, however, the explanation of how the neurons in our brains work (on/off with weighting), which starts at 3:50 in the video, provides a really good way of visualizing the basis of the artificial neural networks (ANNs) we use to implement today’s artificial intelligence (AI) and machine learning (ML) solutions.
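If it helps to see the "on/off with weighting" idea written down, the following is a minimal sketch of a single artificial neuron: multiply each input by its weight, add everything up, and fire if the total clears a threshold. The function name and the example weights and bias are hypothetical values I picked for illustration; they are not from the video.

```python
def neuron_fires(inputs, weights, bias):
    """Return 1 if the weighted sum of the inputs plus the bias is positive, else 0."""
    activation = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if activation > 0 else 0


if __name__ == "__main__":
    # Hypothetical weights and bias: this neuron only fires when both inputs are on.
    weights = [1.0, 1.0]
    bias = -1.5
    for inputs in ([0, 0], [0, 1], [1, 0], [1, 1]):
        print(inputs, "->", neuron_fires(inputs, weights, bias))
```

A real ANN stacks enormous numbers of these into layers and usually swaps the hard on/off threshold for a smoother function, but the multiply-and-sum at the core stays the same.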

I fear I’ve been a little sneaky because — once you’ve started watching this part — you will find yourself captivated by the rest of the video, on which basis you may just as well do a Julie Andrews and “start at the very beginning — a very good place to start,” and have done with it (the flash mob version of this in the central railway station in Antwerp, Belgium, always makes me smile with delight).

Did you know that, in 2015, the ResNet ANN achieved what’s known as a “Top 5 Error Rate” of only 3.6%, which is better than the average human performance of 5.1% (see the video for more details)?

All of this leads us to the fact that, as Derek says, “So the future is clear: we will see ever increasing demand for ever larger neural networks.” He continues by saying, “And this is a problem for several reasons. One is energy consumption. Training a neural network requires an amount of electricity similar to the yearly consumption of three households. Another issue is the so-called Von Neumann Bottleneck. Virtually every modern digital computer stores data in memory and then accesses it as needed over a bus. When performing the huge matrix multiplications required by deep neural networks, most of the time and energy goes into fetching those weight values rather than actually doing the computation. And finally, there are the limitations of Moore’s Law. For decades, the number of transistors on a chip has been doubling approximately every two years, but now the size of a transistor is approaching the size of an atom. So, there are some fundamental physical challenges to further miniaturization.”

This is where we get to the crux of why this is the perfect storm for performing computations using analog techniques: (a) digital computers are facing fundamental limitations, (b) the use of neural networks is growing exponentially, (c) a lot of what neural networks do boils down to matrix multiplication, and (d) neural networks don’t actually require the levels of precision provided by digital implementations. As Derek notes, “Whether the neural network is 96% or 98% confident the image contains a chicken, it doesn’t really matter, it’s still a chicken.”

This is the point in the video where Derek travels to Texas to visit an analog startup company called Mythic AI, whose claim to fame is creating analog chips to run neural networks.

I must admit I was so excited by what I saw that I immediately reached out to the folks at Mythic to ask them to tell me more. In a nutshell, the chaps and chapesses at Mythic say we are currently seeing an exponential growth in data and an explosion in AI. This is especially true when it comes to inferencing on the edge, where we will soon have billions of users and hundreds of billions of devices running algorithms that demand teraops and petaops per second.

Existing applications enriched with AI include video surveillance cameras, NVRs (computer systems that record and store video footage), smart home devices, and industrial machine vision. Emerging AI applications include lightweight drones, interactive AR/VR, and consumer and retail robotics. The guys and gals at Mythic say that AI processors are soon expected to be as common as today’s CPUs and image sensors, with a $13B market opportunity by 2024. (Eeek! So soon! I haven’t prepared a speech and I don’t have a thing to wear!)

Now, this is where things get clever. Since I’m a digital design engineer by trade, when I hear the term “flash memory cell,” I think of a transistor with a floating gate that can be loaded/not loaded with electrons, thereby representing a 0 or a 1, respectively. By comparison, by controlling the number of electrons in the floating gate, the folks at Mythic can use this cell to represent 256 different values (equivalent to 8 bits of storage). If these values are used to represent a weight (coefficient) in an ANN, then each Flash cell can be used to perform a multiplication (voltage x conductance) between its activation voltage and its coefficient. The currents from all of the Flash cells in a column are additive, which means we can think of each Flash cell as acting like a synapse and each column of cells as representing a neuron.
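To make the arithmetic concrete, here is a rough numerical sketch of what a small array of such cells computes. Each weight is snapped to one of 256 levels and treated as a conductance, each cell contributes a current equal to its voltage times its conductance, and the currents in a column are summed. This is only an idealized model under my own assumptions (weights restricted to the range 0 to 1, no noise, no calibration, and made-up function names); it is not a description of how Mythic’s silicon is actually organized.

```python
LEVELS = 256  # 8 bits per cell, as described in the article


def program_cell(weight, g_max=1.0):
    """Quantize a weight in [0, 1] to one of 256 conductance levels.

    The [0, 1] range and g_max are simplifying assumptions for this sketch.
    """
    w = min(max(weight, 0.0), 1.0)
    code = round(w * (LEVELS - 1))
    return code / (LEVELS - 1) * g_max


def column_current(voltages, conductances):
    """Per-cell Ohm's law (I = V * G), with the currents added along the column."""
    return sum(v * g for v, g in zip(voltages, conductances))


def array_outputs(voltages, weight_matrix):
    """weight_matrix[row][col]: each row shares an input voltage,
    each column shares an output wire (one 'neuron' per column)."""
    n_cols = len(weight_matrix[0])
    outputs = []
    for col in range(n_cols):
        conductances = [program_cell(row[col]) for row in weight_matrix]
        outputs.append(column_current(voltages, conductances))
    return outputs


if __name__ == "__main__":
    activations = [0.2, 0.9, 0.5]          # input voltages (arbitrary units)
    weights = [[0.10, 0.80],
               [0.50, 0.30],
               [0.90, 0.00]]               # 3 inputs feeding 2 neurons
    print(array_outputs(activations, weights))
```

The point of the exercise is that `array_outputs` is just a matrix-vector multiplication. In the analog version, the physics performs the multiplications and additions simultaneously, rather than a processor grinding through them one at a time.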

Do you remember earlier when I said that Derek said, “When performing the huge matrix multiplications required by deep neural networks, most of the time and energy goes into fetching those weight values rather than actually doing the computation”? Well, in the case of Mythic’s technology, the weight values are already stored in the floating gates, so the multiplication takes place right where the weights live and nothing has to be fetched over a bus.

The end result of all this is that the first of Mythic’s devices, the M1076, which is implemented in what would now be considered to be a cheap-and-cheerful 40nm process technology, can perform 25 trillion math operations per second whilst consuming only around 3 watts of power (an equivalent digital platform would be around the size of a 1/3 height shoebox equipped with a turbofan to dissipate the 100 watts of power it was guzzling).
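As a quick back-of-the-envelope check on those numbers, the sketch below works out operations per joule for each case. It assumes the "equivalent digital platform" delivers the same 25 trillion operations per second, which is my reading of "equivalent" rather than a figure anyone gave me, and it takes the "around 3 watts" at face value.

```python
def ops_per_joule(ops_per_second, watts):
    """Energy efficiency: operations performed for each joule consumed."""
    return ops_per_second / watts


if __name__ == "__main__":
    analog = ops_per_joule(25e12, 3.0)      # M1076 figures quoted above
    digital = ops_per_joule(25e12, 100.0)   # assumed equal throughput at 100 W
    print(f"analog:  {analog / 1e12:.1f} tera-ops per joule")
    print(f"digital: {digital / 1e12:.2f} tera-ops per joule")
    print(f"ratio:   {analog / digital:.0f}x")
```

Under those assumptions the analog part comes out at roughly 8.3 tera-ops per joule versus 0.25 for the digital box, which is about a 33x difference.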

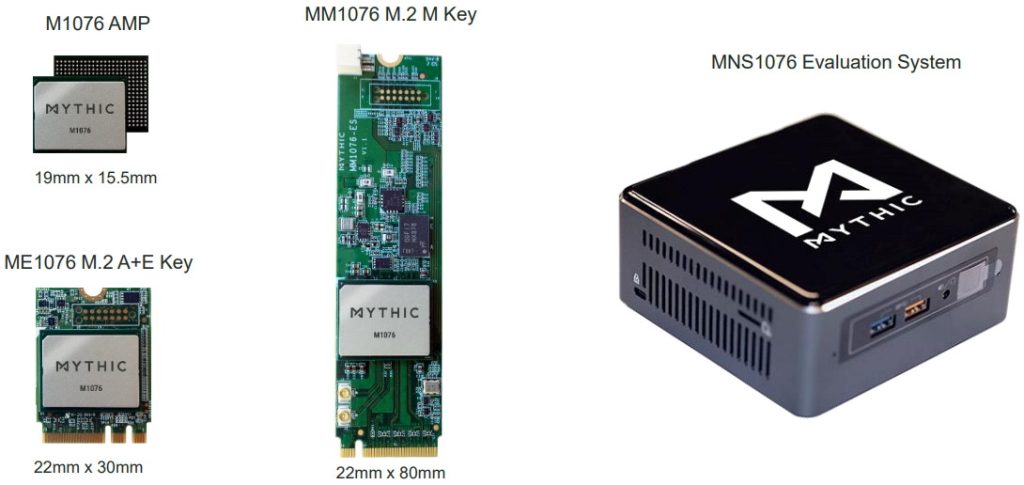

Meet the Mythic M1076 (Image source: Mythic AI)

Now, there are all sorts of nitty-gritty details that I don’t have the time or the energy (no pun intended) to go into here. For example, there’s a lot of internal calibration (employing analog and digital techniques) to ensure that the device consistently hits its 8 bits of accuracy, and the layers of analog neurons are interspersed with interfaces in the digital domain. The bottom line is that the M1076 has the capacity for around 80 million synapses. These synapses can be arranged in layers as required. That is, you could theoretically have a single layer with 80 million synapses, or 100 layers each with 800,000 synapses, or 80 million layers each with a single synapse.
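If you fancy checking whether a network of your own would squeeze into that budget, a rough sketch like the following does the counting. It assumes plain fully connected layers with one synapse per weight and ignores biases. The layer sizes are hypothetical examples of mine, and real networks (convolutional ones especially) would need a more careful tally.

```python
SYNAPSE_CAPACITY = 80_000_000  # "around 80 million", per the article


def synapses_in_dense_layers(layer_sizes):
    """Count the weights in a stack of fully connected layers (biases ignored)."""
    return sum(a * b for a, b in zip(layer_sizes, layer_sizes[1:]))


def fits_on_chip(layer_sizes, capacity=SYNAPSE_CAPACITY):
    """True if the total weight count is within the synapse budget."""
    return synapses_in_dense_layers(layer_sizes) <= capacity


if __name__ == "__main__":
    # Hypothetical network shapes, chosen purely for illustration.
    for sizes in ([4096, 4096, 4096, 1000], [10_000, 10_000]):
        total = synapses_in_dense_layers(sizes)
        print(sizes, f"{total:,} synapses", "fits" if fits_on_chip(sizes) else "too big")
```

The first example needs about 37.7 million synapses and fits comfortably, while a single 10,000-by-10,000 layer needs 100 million and does not.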

Of course, very few people wish to purchase a chip on its own and then start experimenting with it. What is required is a development board with some sort of interface (say PCIe) that can be plugged into a computer. For this reason, the folks at Mythic offer a complete M1076-based family from chip to development board to evaluation system.

Meet the M1076 family (Image source: Mythic AI)

But wait, there’s more, because Mythic also offers support for a wide variety of host platforms (including x86, NVIDIA Jetson Xavier NX/TX2, Qualcomm RB5, and NXP i.MX8M) running Ubuntu Linux or Linux for Tegra (NVIDIA), with pre-trained deep neural net (DNN) models for applications like object detection (YOLOv3), pose estimation (OpenPose V1.5 Body25), and classification (ResNet-18 and ResNet-50).

I don’t know about you, but much like the classic Guinness advert, I think this is “Brilliant!” I would never have conceived the idea of creating ANNs with analog neurons formed from columns of Flash cell synapses in a million years but — now I’ve been exposed to the concept — I think this could herald a new day in AI inferencing. As always, we certainly do live in exciting times. How about you? Do you have any thoughts you’d care to share on any of this?

Honestly, I’m kinda bored waiting for the Mythic chips. I thought the analog AI approach would cause a spurt of interest in mixed-signal EDA. I interviewed there for a non-AMS job over 4 years ago. I didn’t get the job, but in retrospect they were asking me to do something you could apply AI to, and it seems to be a pattern that AI chip companies don’t actually apply AI to the task of making chips, so it’s questionable whether they know how to apply it to anything.

I’ve been seeing all sorts of activity in this general area recently, plus some similar stuff using optical techniques. I think the floodgates may soon be opened (I just dispatched the butler to fetch my wellington boots 🙂).