If you are a hardware design engineer, you are doubtless familiar with the concept of formal verification as it applies to hardware design, but have you ever considered how formal verification might relate to software and the developers thereof (bless their little cotton socks)?

Life can be a rum old thing, and no mistake. When it comes to formal verification, for example, it’s interesting to discover that this little rascal was originally conceived in the 1960s with software in mind. Sad to relate, however, my understanding is that few (if any) of today’s software developers actually use it, although — as we will discuss — that may be about to change.

I just “pinged” my old chum Brian Bailey, who currently flaunts the title of Technology Editor focusing on Electronic Design Automation (EDA) at Semiconductor Engineering. More importantly from my point of view, Brian is an industry expert on all things pertaining to verification, including simulation, emulation, and, of course, formal tools, techniques, and technologies. I know I’m showing my age here, but I was first introduced to the concepts of design for test (a.k.a. design for testing a.k.a design for testability a.k.a. DFT) and SCAN technology (full, partial, and boundary SCAN) at a course presented by Brian when we both hung our hats in the UK back in the early 1980s, which is closing on 40 years ago in the rearview mirror of my life at the time of this writing (see also Is Dust in the Wind All We Are?).

This was when I was working with the team that created the HiLo logic simulator, which evolved into System Hilo. In fact, we had three flavors of this simulator: a logic simulator (that could perform its magic using minimum, typical, or maximum delays for each of the gate and register elements), a fault simulator, and a dynamic timing analyzer, which was similar in concept to the logic simulator, but it dynamically simulated across minimum-maximum delays. In the case of the fault simulator, we also had an automatic test pattern generation (ATPG) capability.

While writing the above, I started wondering about the origin of the term SCAN. I was speculating if it stood for anything and — if so — why did I not know what that thing was? So, I had a quick Google while no one was looking and discovered that SCAN is not, in fact, an acronym. The best I can do to tie this down is an article I ran across a few minutes ago that I can no longer find (the internet — you have to love it) that said something like, “Early examples of DFT from the 1940s and 1950s are the switches and instruments that allowed an engineer to ‘scan’ (i.e., selectively probe) the voltages/currents at some internal nodes in an analog computer.” Well, that makes sense to me.

As an aside, and apropos of nothing at all, literally as I pen these words, my chum Charles Pfeil just sent me a link to this video snippet of the historic flight of Blue Origin’s New Shepard spaceship, which took place a little earlier today (Tuesday 20 July 2021). This video shows the passengers in New Shepard (which is named after Alan Shepard, who was the first American in space) enjoying awesome views of the Earth and space whilst merrily cavorting in zero gravity. The passengers in question are Jeff Bezos, his brother Mark Bezos, American aviator, astronaut, and Goodwill Ambassador Mary Wallace “Wally” Funk, and Dutch teenager Oliver Daemen, who has to be one of the luckiest 18-year-olds off the planet (“off the planet” — sometimes I amaze even myself).

As I replied to Charles, “This is freaking awesome! I wonder what all the Mercury, Gemini, and Apollo astronauts would have thought to see a cabin this size with those huge windows!”

But we digress…

As I mentioned earlier, hardware design engineers are at least familiar with the concept of formal verification as it applies to their designs, even if they haven’t actually used it themselves. Having said this, perhaps we should briefly refresh our memories as to hardware formal verification techniques, just to make sure we are all tap-dancing to the same set of bagpipes (and I know whereof I speak, because my dear old dad was a dancer on the variety hall stage prior to WWI; also, he was in a Scottish reconnaissance regiment during WWI, as part of which he was gifted with many glasses of beer whilst tap-dancing to the skirl of the Scottish Great Highland bagpipes — see also The Times They Are a-Changin’).

I once heard the saying, “A gentleman is someone who knows how to play the bagpipes… but doesn’t.” That reminds me of a joke about a Scotsman who plays the pipes in a bagpipe marching band. Every Saturday, the band parades through the town center performing well-known pieces, after which they retire to the local public house to quaff a few pints (quaffing is similar to drinking except that you tend to spill more down your chest). On one Saturday, while in the process of blowing the froth off a few cellar-temperature beers, he suddenly leaps to his feet and runs out of the bar exclaiming, “Oh no! I just remembered that I left my bagpipes on the back seat of the car and the doors are unlocked!” He returns a few minutes later a broken man. He got there too late. Someone has dumped three more sets of bagpipes in his car! In turn, that reminds me of the videos of Darth Vader playing flaming bagpipes whilst riding a unicycle and the Red Hot Chilli Pipers.

But, once again, we digress…

In the case of hardware formal verification, we start with “equivalency checking,” which uses formal (rigorous mathematical) techniques to compare two different representations of a design (e.g., a register transfer level (RTL) description with a gate-level netlist) to ensure they have the same input-to-output functionality. (Equivalency checking may be considered to be a subclass of “model checking,” which refers to techniques used to explore the state-space of a system to test whether or not certain properties, typically specified in the form of assertions, are true.)

This leads us to the concept of properties and assertions, but don’t ask me to explain the difference between them here. The simplest way to think about this is that you can add pragmas to your design along the lines of “this signal and that signal should never be active at the same time.” This leads to the concept of “assertion-based verification (ABV),” which comes in multiple flavors: simulation, static formal verification, and dynamic formal verification.

In the case of static formal verification, an appropriate tool takes the design — typically at the RTL level of abstraction) and then exhaustively analyzes the logic to ensure that the asserted conditions can never occur. By comparison, in the case of dynamic formal verification, an appropriately augmented logic simulator will run up to a certain point, then pause and automatically invoke an associated formal verification tool. After this, things start to get complicated…

Returning to my chum Brian; in response to a query I sent him on LinkedIn, he responded, “Formal verification was developed first for software but was never practical and still to this day is not used much. The problem is too unbounded. For hardware, formal verification can focus on much ‘simpler’ questions.”

I also turned to the forums on EmbeddedRelated.com, which is my “go-to” place to ask stupid questions (I’ve heard people say, “There’s no such thing as a stupid question,” but that’s before they met me). I posed the question, “How many of today’s software developers — both high-level application developers and firmware-inclined embedded systems developers — do you think are aware of the concept of formal verification (a) in general and (b) with regard to software?”

Almost immediately, I received a response from John Ford, who is Principal Engineer at Steward Observatory, University of Arizona, and who spake as follows: “Hi Max, I think most are aware of the concept, but I suspect that almost nobody actually does it. There’s an editorial in the July issue of the Communications of the ACM by Moshe Vardi on this very topic (DOI:10.1145/3469113). Moshe points out that formal verification isn’t done for many reasons, but also compares formal verification with model checking, which is easier and cheaper. The main obstacle to formal verification is its cost, according to Vardi. For hardware designs, where the cost of fixing a defect is very high, formal verification makes sense, whereas for most software the cost of fixing a defect isn’t as high. At least, that’s the perception. Hardware designs are also not as free-form as most large software projects, and formal verification is tractable.”

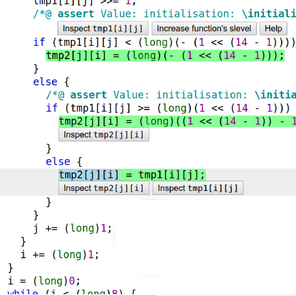

All of which leads us to the guys and gals at TrustInSoft, who may be poised to turn things on their head with regard to using formal verification to verify software. Their goal is aided by the humongous computational capacity and performance available to us today, coupled with cunning developments in formal verification tools and technologies. As we see in this video, the result is the TrustInSoft Analyzer.

I was just chatting to Fabrice Derepas, who is the Founder and CEO of TrustInSoft. Fabrice told me all sorts of incredible things regarding the current state-of-play with regard to using formal verification to ensure your code is guaranteed immune from major C/C++ language vulnerabilities, including buffer overflow, invalid pointer usage, dangling pointers, non-initialized memory access, division by zero, arithmetic overflow, NaN in floating-point computations, and overflow in float-to-int conversions. In addition to finding bugs, the TrustInSoft Analyzer also tracks down and reports the root causes for those bugs.

Unfortunately, although Fabrice regaled me with a bunch of fascinating facts, most of them went right over my head (remember that I’m a hardware designer by trade). Having said this, I understood enough to know that the time may finally have come for the real-world deployment of formal verification for software; also, that I want to make sure my software developer friends are aware of TrustInSoft and the TrustInSoft Analyzer. How about you? Have you used formal verification in the hardware domain or in the software realm, and do you have any thoughts you’d care to share on anything you’ve read here?

As an extra little snippet, EEJournal’s very own Amelia Dalton just emailed me to say: “I know the Uni-piper (Darth Vader playing the bagpipes)! His kids go to my son’s old school. Portland is such a small town. :)”

I tell you; the whole “six degrees of separation” (and “six degrees of Kevin Bacon”) is so true.