The IBM PC (aka the IBM 5150) had such a massive impact on computing history that origin stories about the machine are legion. Many books have been written to tell the stories of a rag-tag group of isolated engineers in IBM’s Entry Systems Business, down in Boca Raton, Florida. William C. Lowe initially led the group. He got the job after he told IBM’s Corporate Management Committee that IBM could not create a microcomputer from scratch inside the company due to fossilized thinking and corporate culture. IBM’s only hope, said Lowe, was to acquire a smaller, nimbler company to create such a machine. So naturally, IBM gave him the job of doing exactly what he said could not be done. He led the team that developed a proof-of-concept prototype in just 40 days. Don Estridge was named manager of Entry Level Systems (Small Systems) in 1980 and led the engineering team that brought the IBM PC to market in a remarkably short 12 months. IBM announced the IBM PC on August 12, 1981, and tilted the computing world on its axis.

However, that’s not today’s story. Instead, I’m going to discuss how and why IBM’s Entry Level Systems division chose the Intel 8088 microprocessor and set Intel on the path to becoming the world’s largest semiconductor manufacturer. It happened because of an intertwined series of seemingly unconnected events.

David House joined Intel in 1974 and became general manager of the company’s Microcomputer Components Division in 1978. He immediately inherited a huge problem. After introducing the world’s first commercial microprocessor, the 4004, quickly followed by the 8-bit 8008 and 8080 microprocessors, Intel’s processor leadership began to erode. Federico Faggin had left Intel, started Zilog, and introduced the exceptional 8-bit Z80 microprocessor, which was object-code compatible with the 8080. Even the follow-on to the 8080, the Intel 8085, could not dent the Z80 juggernaut. As House says of Zilog and the Z80 in an oral history taken by the Computer History Museum, “…they were kicking our butt in the market place.”

Meanwhile, Intel’s microprocessor focus was firmly on a be-all, end-all, object-oriented processor architecture that would come to be known as the iAPX 432. When Intel realized that the iAPX 432 would be late to market, very late indeed, the company initiated a stop-gap 16-bit processor project that would become the Intel 8086 microprocessor, which the company subsequently announced in mid-1978. Although the 8086’s register set resembled a stretched, 16-bit version of the 8080’s register set, the 8086’s ISA is completely different from and is not at all compatible with the 8080’s ISA. (Intel paved over this incompatibility with a source-code translator.)

In 1980, when IBM’s Entry Level Systems division went looking for an off-the-shelf, 16-bit microprocessor on which to base the IBM PC, there were several alternatives. Texas Instruments offered the TMS 9900, a microprocessor implementation of the company’s successful 990 minicomputer architecture. However, the TMS 9900 had a 16-bit address space, which was no better than the 64-Kbyte address space of the 8-bit microprocessors then on the market. As Wally Rhines wrote in his book, “From Wild West to Modern Life: Semiconductor Industry Evolution”:

“TI had tried to leapfrog the microprocessor business by introducing the TMS 9900 16-bit microprocessor in 1976. But the TMS 9900 had only 16 bits of logical address space and the industry needed a 16-bit microprocessor for address space rather than performance.”

IBM’s Entry Level Systems division did not pick the TMS 9900 as the microprocessor for the IBM PC.

Motorola Semiconductor introduced the 16/32-bit MC68000 microprocessor in 1979. This processor had a 32-bit instruction set, 32-bit data and address registers, and a 24-bit external address bus, giving it a 16-Mbyte address space. Back then, attaching 16 Mbytes of RAM to a microprocessor was unthinkable, so the MC68000’s 24-bit address space was more than ample. The MC68000’s 32-bit data and address registers allowed the processor to deliver excellent performance relative to competing 8- and 16-bit processors of the day, and expanding the original processor’s 24-bit addressing to a full 32 bits simply required more pins on the package.
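The address-space figures quoted for these processors follow directly from the address width: each extra address bit doubles the number of bytes the processor can reach. Here is a minimal Python sketch of that arithmetic. The function names `address_space_bytes` and `human_readable` are illustrative choices of mine, not anything taken from a vendor data sheet.

```python
def address_space_bytes(address_bits):
    """Number of distinct byte addresses reachable with this many address bits."""
    return 2 ** address_bits


def human_readable(num_bytes):
    """Format a power-of-two byte count using the Kbyte/Mbyte/Gbyte units of the era."""
    for unit, size in (("Gbyte", 2 ** 30), ("Mbyte", 2 ** 20), ("Kbyte", 2 ** 10)):
        if num_bytes >= size:
            return f"{num_bytes // size} {unit}s"
    return f"{num_bytes} bytes"


# 16 bits: the TMS 9900 and the 8-bit processors of the day.
# 24 bits: the MC68000's external address bus.
# 32 bits: the width of the MC68000's address registers.
for bits in (16, 24, 32):
    print(f"{bits}-bit address: {human_readable(address_space_bytes(bits))}")
```

Run as written, the loop prints 64 Kbytes, 16 Mbytes, and 4 Gbytes for the three widths.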

The MC68000 would have been IBM’s logical processor choice, except that Motorola was only starting to sample the device and IBM wanted a processor that was already in full production, with a second source. IBM also wanted a low-cost microprocessor, and the MC68000’s original 64-pin, ceramic package (nicknamed “The Aircraft Carrier”) was anything but low-cost.

That’s why IBM’s Entry Level Systems division did not pick the Motorola MC68000 as the microprocessor for the IBM PC.

Zilog had also developed a 16-bit microprocessor, the Z8000, but IBM does not seem to have seriously considered this microprocessor for the IBM PC. According to Federico Faggin, that’s because Exxon Enterprises owned Zilog at the time and IBM viewed Zilog’s parent company as a potential competitor.

That left the Intel 8086, the stop-gap microprocessor keeping Intel’s microprocessor dynasty on life support until the magnificent iAPX 432 could be finished. The Intel 8086 had a smaller address space than either the MC68000 or the Zilog Z8000. It also delivered less performance. But it was really IBM’s only choice for a 16-bit processor, given the IBM PC’s design constraints.

There are a lot of stories that claim to explain why IBM selected an Intel 16-bit processor. Bill Gates and Paul Allen claim that Microsoft convinced IBM to pick Intel’s microprocessor. Some people have said that Regis McKenna’s Project CRUSH deserves credit for the win, but in his oral history, House says he doesn’t think there was a direct link.

David Bradley – designer of the IBM System/23 DataMaster, which was based on the Intel 8085 – joined the IBM PC design team in August 1980. In 1990, he published an article titled “The Creation of the IBM PC” in Byte magazine that said:

“There were a number of reasons why we chose the Intel 8088 as the IBM PC’s central processor.

“1. The 64-Kbyte address limit had to be overcome. This requirement meant that we had to use a 16-bit microprocessor.

“2. The processor and its peripherals had to be available immediately. There was no time for new LSI chip development, and manufacturing lead times meant that quantities had to be available right away.

“3. We couldn’t afford a long learning period; we had to use technology we were familiar with. And we needed a rich set of support chips—we wanted a system with a DMA controller, an interrupt controller, timers, and parallel ports.

“4. There had to be both an operating system and applications software available for the processor.

“We narrowed our decision down to the Intel 8086 or 8088.”

However, IBM’s Entry Level Systems division did not pick the 8086 microprocessor for the IBM PC. IBM wanted to pay $5 for the microprocessor, and Intel could not meet that price because of existing 8086 sales contracts with other customers. Intel really wanted to win a high-volume personal computer design, and the IBM PC looked like just the thing, but a five-buck 8086 was a choice that Intel could not put on the negotiating table. The IBM PC processor pricing problem fell to Dave House to solve.

By a happy coincidence, Intel had sent the original 8086 microprocessor design to its relatively new design facility in Haifa, Israel for a cost-reducing die shrink in 1979. In conjunction with that effort, two engineers in Haifa, Rafi Retter and Daniel (Dani) Star, converted the 8086’s 16-bit data bus into an 8-bit data bus by changing some of the bus interface unit’s circuitry and modifying some of the processor’s microcode. Because the 8086 already used a multiplexed address/data bus, the new processor’s pinout didn’t need to change from the original 8086 pinout. The microprocessor’s AD0 through AD7 address/data pins remained the same for both processors while the AD8 through AD15 address/data pins on the 8086 simply became the A8 through A15 address pins on the 8088.
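The pin renaming described above is easy to see in a few lines of Python. This is only a sketch of the signal names for the low 16 bus pins as the paragraph describes them. It does not model package pin numbers or any of the other signals, and the helper name `bus_pins` is my own invention.

```python
def bus_pins(data_bus_bits):
    """Signal names for bus pins 0..15.

    A pin that carries both address and data (multiplexed) is named 'ADn'.
    A pin that carries only an address bit is named 'An'.
    """
    return [f"AD{n}" if n < data_bus_bits else f"A{n}" for n in range(16)]


pins_8086 = bus_pins(16)  # 16-bit data bus: AD0..AD15
pins_8088 = bus_pins(8)   # 8-bit data bus: AD0..AD7, then A8..A15

# Collect only the pins whose role differs between the two processors.
changed = [(a, b) for a, b in zip(pins_8086, pins_8088) if a != b]
for old, new in changed:
    print(f"{old} -> {new}")
```

Only the upper eight pins change roles, which is why the new processor could keep the original pinout.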

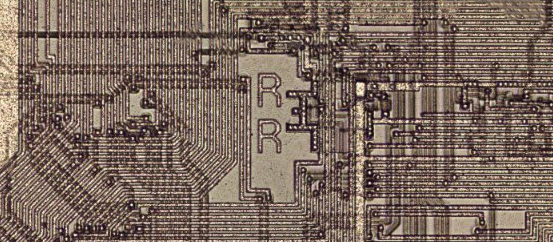

You’ll find Rafi Retter’s initials in the center of the 8088 die:

Intel 8088 microprocessor was designed by Rafi Retter and Daniel Star. Retter’s initials appear in the center of the chip. Photo credit: Ken Shirriff.

Here’s House’s explanation for what happened, taken from his oral history:

“So I’m trying to get this [IBM] design win, and I’m going to lose on architecture to the 68K [Motorola’s MC68000] or the [Zilog] Z8000. So I said, where’s that 8-bit bus version?

“…we went and sold it to IBM.”

To be crystal clear: the 8088 was not an 8086. It was a “completely” different microprocessor with a different part number, so Intel was no longer bound by the contractual price constraints on the 8086. The 8088 used the same ISA and a very similar semiconductor die, but it was a different microprocessor. Sure, the 8088’s narrower data bus throttled system performance a bit, but IBM didn’t care so much about that. After all, the PC wasn’t going to replace IBM mainframes. Price was the overriding consideration for the IBM PC.
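For a rough feel of what the narrower data bus costs, here is a back-of-the-envelope Python sketch that counts the bus cycles needed to move a block of memory. It assumes every transfer is aligned and fills the full bus width, and it ignores the instruction prefetch queue and instruction execution time. That makes it a worst-case view of the bus alone, not a measure of real system performance. The function name `bus_cycles` is illustrative.

```python
def bus_cycles(num_bytes, data_bus_bits):
    """Bus cycles needed to move num_bytes over a data bus of the given width.

    Assumes aligned transfers that use the full bus width on every cycle.
    """
    bytes_per_cycle = data_bus_bits // 8
    # Integer ceiling division: a partial final transfer still costs a whole cycle.
    return -(-num_bytes // bytes_per_cycle)


block = 1024  # bytes to move, an arbitrary example size
print("8086 (16-bit bus):", bus_cycles(block, 16), "cycles")
print("8088 (8-bit bus): ", bus_cycles(block, 8), "cycles")
```

Under these assumptions the 8088 needs twice as many bus cycles as the 8086 for the same block. In a running system the processor also spends time executing instructions rather than waiting on the bus, so the overall slowdown is smaller than this factor of two suggests.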

In the final analysis, there’s clearly no significant manufacturing-cost difference between an 8086 and an 8088. They have very similar transistor counts and die sizes, and are both packaged in the same 40-pin DIP. However, there’s a price difference, and that difference won the socket. IBM didn’t particularly want a 16-bit processor with an 8-bit data bus, but if that’s what it took to get the unit price down to $5 in volume, so be it. (Further, IBM wanted a second source, so Intel engaged AMD as a second source. That AMD deal spun out its own parallel universe of fascinating stories, too numerous to even list in this article.)

In addition to the low microprocessor price, adopting the Intel 8088 gave the IBM PC design team direct compatibility with Intel’s line of low-cost, 8-bit peripheral chips (originally designed for Intel’s 8-bit microprocessors) and many of those peripheral chips ended up in the IBM PC’s system design as well. These 8-bit peripheral chips also had alternate sources, including AMD, so competition drove their prices down as well, which made IBM happy and further cemented the deal for the 8088. The IBM PC debuted on August 12, 1981, after twelve months of development. The elephant named IBM had learned to dance.

In his oral history, House sums up the importance of the IBM PC’s introduction to Intel:

“So the IBM PC is announced, and it starts taking off, and it exceeds everybody’s expectations… And like, wow! I mean, it turns out to be the defining moment, really, for Intel.”

References:

Dave House Oral History, Computer History Museum, 2004-04-20.

Wally Rhines Oral History, Computer History Museum, 2012-08-10.

Steven, a really great article that brings it all together! It was a very memorable time when we all chased that secret IBM group in Boca Raton. Wally

Thanks Wally. Always nice to hear from you. –Steve

Steve, great article! I still have my original IBM PC, that we used to write a version of PALASM to program PALs. -John