Do you remember all the hoopla about the company Magic Leap a few years ago? I’m trying to remind myself of the timeline. The company was founded in 2010 but was in stealth mode until around 2015. In 2016 they released a teaser video that showed what purported to be augmented reality (AR) in the form of a whale surprising school students by jumping into a gym.

When I first saw this, I remember thinking, “O-M-G, I cannot wait!” I just rewatched that video. I only now realize that it was vaporware because none of the students who are exclaiming “Ooh” and “Aah” are wearing AR goggles. What a swizz!

It wasn’t long after that before Magic Leap disappeared off my radar. To be honest, if you’d asked me even a few minutes ago, I would have guessed that the company had bitten the dust. In fact, it turns out they are still going, but it looks like they are focusing (no pun intended) on things like the medical market. I believe that from the company’s founding to the present day they’ve raised around $3 billion in funding, so where’s my whale?

One drawback of Magic Leap’s solution is the AR goggles themselves, which have a retro-futuristic steampunk look, but not in a good way. Suffice it to say that these aren’t AR goggles I can envision my dear old mother sporting 24/7. The same goes for the Microsoft HoloLens, which has a certain Jetsons coolness factor, but not enough for me to be seen ambling around the supermarket flaunting one on my noggin.

The problems with the vast majority of today’s AR headset offerings can be summarized as follows: bulky design, uncomfortable weight, high power consumption (which equates to short times between charges), low-quality visuals, low outdoor visibility, and a disturbing off mode (they filter out so much light when they are deactivated that you think you’re wearing sunglasses).

Let’s not forget that there is already a tremendous amount of interest in AR. My understanding is that there are already at least 14,000 users of Apple’s AR developer platforms, such as ARKit and RealityKit. The apps developed with these kits for use on iPhones and iPads are awesome (I love them), but I don’t want to have to wave my AR platform around to see what I want to see. What I want is a totally hands-free AR experience.

The sad thing is that enough of the other elements are in place to provide at least L1 AR, where L1 stands for “Level 1” (we’ll return to discuss the various levels later). For example, we already have powerful mobile computing platforms in the form of our smartphones. We also have connectivity between smartphones and the cloud in the form of cellular and Wi-Fi connections, and we could implement connectivity between smartphones and AR goggles in the form of Bluetooth, so all we need is for someone to create affordable consumer-grade AR goggles that have the overall appearance of regular eyeglasses.

All of which leads me to the fact that I was just chatting with Dr. Peter Weigand. Peter has a PhD in physics and a background in semiconductors. He was the CEO of the Swiss startup Dacuda, whose 3D scanning division was sold to Magic Leap in 2017. After dipping his toes into various technology-related waters, Peter has spent most of the past two years as the CEO of TriLite Technologies. TriLite’s claim to fame is the creation of the world’s smallest projection display, which may be the solution everyone has been waiting for when it comes to creating the consumer-grade AR glasses we were just talking about.

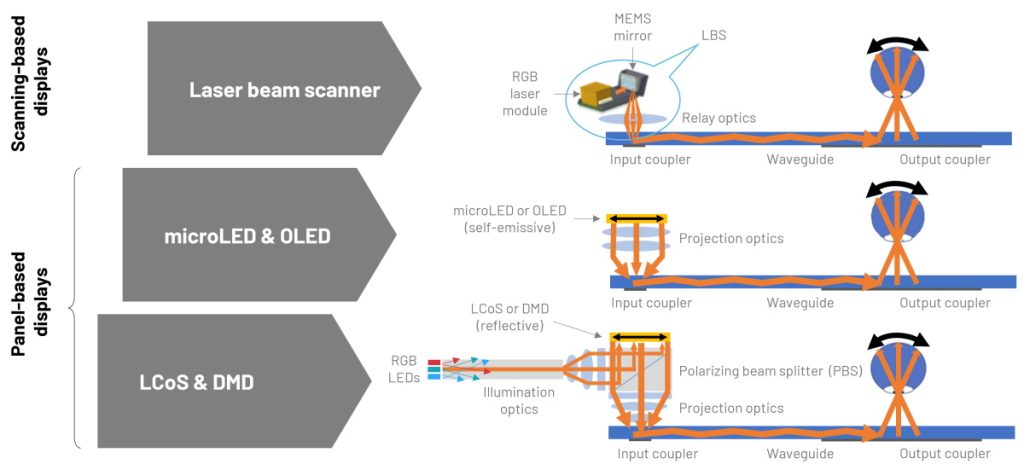

Consider the following illustration, which summarizes the differences between existing state-of-the-art panel-based displays and TriLite’s laser beam scanner (LBS) display, which they call the Trixel 3.

Panel-based displays vs. scanning-based displays (Source: TriLite)

Let’s traverse the optical path in reverse. At the end of the chain, we have the human eyeball looking through the eyeglasses. An output coupler takes the light transported through a waveguide and projects it into the wearer’s eye. An added advantage here is that only the user can see what is being displayed—to anyone else these look like regular glasses. At the other end of the waveguide is the input coupler that feeds light into the waveguide.

Thus far, everything is relatively common between the various display types. The difference is in how the light is generated and presented. Liquid crystal on silicon (LCoS) is a miniaturized reflective active-matrix liquid-crystal display using a liquid crystal layer on top of a silicon backplane. In addition to requiring illumination optics, the associated projection optics are non-trivial to say the least.

The advantage of microLEDs and OLEDs is that they are self-emissive, but their light output falls dramatically when they are shrunk down to the size required for AR eyeglass-type displays, and they still require projection optics to convey the light into the input coupler.

At the present time, laser beam scanning is the only technology capable of making the AR display small, light, and bright. In this case, the beams from red, green, and blue (RGB) lasers are bounced off a MEMS mirror, which feeds them directly into the input coupler without need of projection optics.

One point that interested me here is the way in which the scans are presented to the eye. I guess I would have expected the sort of raster scan we used to use with televisions and computer monitors that employed old-fashioned cathode ray tubes (CRTs). Implementing a raster scan using a MEMS mirror would require the mirror to be accelerated and decelerated in the X and Y directions.

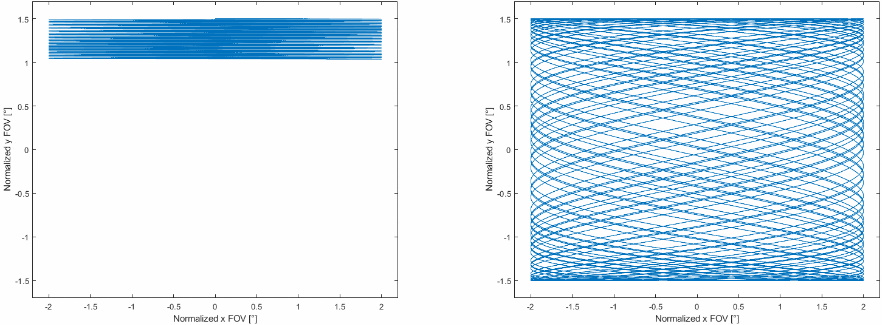

Raster scan (left) vs. Lissajous scan (right) (Source: TriLite)

By comparison, the Trixel 3 employs a Lissajous scan pattern, which results from the MEMS mirror being oscillated at its resonant frequency in the X and Y axes. This makes the mirror easier to control and makes it easier to achieve the desired frame rates.

Furthermore, the Lissajous scan is also advantageous with respect to human perception. With a raster scan, almost an entire frame period elapses between the pixel in the upper-left corner being refreshed and the pixel in the lower-right corner being refreshed. By comparison, when using the Lissajous scan, pixels are refreshed throughout the image as the frame builds up.
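To get a feel for this difference, here is a minimal Python sketch that compares how quickly each pattern spreads across the image. It is only an illustration under stated assumptions: the function names, the 8 x 8 grid of tiles, the 64 raster lines, and the Lissajous frequencies (31 and 40 cycles per frame) are all made up for the example and are not TriLite’s actual scan parameters.

```python
import math


def raster_position(t, rows=64):
    """Beam position in the unit square at frame fraction t (0 <= t < 1)
    for a simple row-by-row raster with `rows` lines (illustrative value)."""
    line = t * rows
    y = math.floor(line) / rows      # which line we are on
    x = line - math.floor(line)      # how far along that line
    return x, y


def lissajous_position(t, fx=31, fy=40):
    """Beam position for sinusoidal X and Y mirror motion.
    fx and fy are cycles per frame; both are hypothetical values."""
    x = 0.5 * (1 + math.sin(2 * math.pi * fx * t))
    y = 0.5 * (1 + math.sin(2 * math.pi * fy * t))
    return x, y


def tiles_touched(position, fraction, tiles=8, samples=20000):
    """Count the distinct tiles of a tiles x tiles grid that the beam has
    visited after the first `fraction` of one frame period."""
    hit = set()
    for i in range(int(samples * fraction)):
        x, y = position(i / samples)
        col = min(int(x * tiles), tiles - 1)
        row = min(int(y * tiles), tiles - 1)
        hit.add((row, col))
    return len(hit)


if __name__ == "__main__":
    for name, fn in (("raster", raster_position), ("lissajous", lissajous_position)):
        print(name, tiles_touched(fn, 0.25), "of 64 tiles after a quarter frame")
```

After a quarter of a frame, the raster beam in this toy model has only painted the top quarter of the image (16 of the 64 tiles), whereas the Lissajous beam has already swept across regions all over the image. A real scanner oscillates far faster than this, but the qualitative behavior is the same.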

Now consider that existing display technologies can occupy as much as 10 cm³. By comparison, the lasers and the MEMS mirror forming the Trixel 3 occupy less than 1 cm³.

The Trixel 3 occupies less than 1 cm³ (Source: TriLite)

The Trixel 3 also weighs only 1.5 g. It offers up to 1152 x 864 (XGA+) resolution with a color depth of 3 x 10 bits and a 90 Hz refresh rate and, with the lasers running at only 50% capacity, it provides a brightness of 15 lumens while consuming only 420 mW.
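For anyone who likes to see what such numbers imply, the following back-of-the-envelope Python sketch turns the refresh rate, color depth, and power figures quoted above into a frame period, a color count, and an energy-per-frame estimate. The function name and the dictionary keys are hypothetical, and the energy figure simply assumes the quoted 420 mW is drawn continuously at the quoted 90 Hz.

```python
def display_budget(refresh_hz, bits_per_channel, channels, power_mw):
    """Derive a few simple figures from headline display specs.

    This is plain arithmetic on the quoted numbers, not a model of the device.
    """
    return {
        # Time available to draw one frame, in milliseconds.
        "frame_period_ms": 1000.0 / refresh_hz,
        # Number of distinct colors representable per pixel.
        "colors": 2 ** (bits_per_channel * channels),
        # mW divided by Hz gives millijoules per frame.
        "energy_per_frame_mj": power_mw / refresh_hz,
    }


if __name__ == "__main__":
    budget = display_budget(refresh_hz=90, bits_per_channel=10, channels=3, power_mw=420)
    for key, value in budget.items():
        print(key, value)
```

In other words, a 90 Hz refresh rate leaves roughly 11 ms to paint each frame, 3 x 10 bits works out to a little over a billion colors, and each frame costs a few millijoules.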

The Trixel 3 (blue) and control electronics (green) (Source: TriLite)

Another point that really interested me is that one of TriLite’s partners already has a full-blown manufacturing line up and running to create the Trixel 3. Another partner has created the waveguides and input/output couplers, all of which can be presented as standalone glasses or integrated into prescription eyewear.

TriLite is currently helping other partners to create entire AR glasses, and they are interested in working with new partners also. There are many possibilities for partner engagements here, ranging from integration (design support to integrate Trixel 3 into OEM devices) to customization (adapting TriLite’s technology as required) to licensing. There’s also a Trixel 3 Evaluation Kit for those interested in learning more.

I must admit that, after talking to Peter, I’m increasingly hopeful that the AR of my dreams will arrive while I still have a corporeal presence on this planet. Of course, not everything will arrive at once. We have to think of things in levels, like the six levels of vehicle autonomy (L0 = no driving automation, L1 = driver assistance, L2 = partial driving automation, L3 = conditional driving automation, L4 = high driving automation, and L5 = full driving automation).

In the case of AR, most of us are still enjoying (or failing to enjoy) L0 = no AR whatsoever. Peter expects the first consumer-grade AR glasses to become available in the 2023 or 2024 timeframe. These will facilitate L1 AR, which will probably come in the form of information displays, whereby information from our smartphones is presented to us as a hands-free display on our AR glasses. If we are riding our bikes, for example, we might be presented with environmental conditions, speeds and durations, and map overlays and directions. Or suppose you are downhill skiing and you want to know your stats (how fast you are going) and related information; you can’t do this by checking things out on your smartphone. Actually, that’s not strictly true. You can, but probably not for long. A far preferable solution would be for your smartphone to communicate this information to your AR glasses.

I’m not sure exactly what capabilities levels L2, L3, etc. will embrace, when they will become available, and when I will get to see whales leaping across my family room. What I’m longing for is when a high-level artificial intelligence (AI) that knows where I’ve left my keys is coupled with digital AR overlays of such high fidelity that my brain can’t tell the difference between what’s real and what’s not. What say you? Do you have any thoughts you’d care to share on any of this?